Copyright is held by the author/owner.

WWW10, May 1-5, 2001, Hong Kong.

ACM 1-58113-348-0/01/0005.

This paper describes an application framework for providing and managing personalized, interactive video on the web. The application framework enables content providers and aggregators to stream personalized content to end-users. The server manages video and multimedia content that is structured and profiled. The content profile is matched against an end-user's interest profile to determine which parts of the video to include and what hotspots and hyperlinks to provide to the user. The server generates SMIL files that represent the personalized content and returns their unique URLs to the client when responding to end-user requests.

This paper also describes how the application framework is implemented by the HotStreams system. HotStreams is implemented using the Java 2 Enterprise Edition (J2EE). HotStreams is eCommerce and micropayment enabled, which provides the means for the content provider to specify the value of the content down to the level of individual video clips. This allows the content provider to charge for the parts of the video that the end-user actually viewed or received.

The HotStreams application includes web-based management tools that enable content managers to manage multimedia objects, to compose, structure, and profile the content, to create and profile hotspots and hyperlinks, and to manage pricing information and advertisements. The management tools are implemented in the form of Java applets that run inside a web browser. The applets communicate with the server over HTTP.

Television has arguably been the most important medium for delivering information and entertainment to consumers for many years. The World-Wide Web is gradually replacing the TV as the dominant vehicle for information and entertainment distribution. Video and media streaming products for wireline connections (such as [1], [2], [3], [4]) and for wireless connections (such as [5]) enable pervasive access to television and video content. The World-Wide Web not only has the potential to replace television; its technologies can also be integrated with traditional television to provide interactive TV. Interactive TV is a fast-growing area in which Internet bodies such as W3C [6] and IETF [7] are also active.

The World-Wide Web offers service quality features that traditional television cannot match: availability of information objects, interactivity, and personalization.

The main strength of the television compared to the World-Wide Web is that it allows the viewers to receive information without requiring continuous user interaction. A user who wants to get the latest news will have to go through a number of web pages and select several video streaming objects to view "television" news on the web.

In this paper we discuss a system that combines the main strengths of television with the World-Wide Web quality features we mentioned above, i.e., availability of information objects, interactivity, and personalization. We decided to refer to the technology as interactive video rather than interactive television to emphasize that the end-user may be using a computer or a PDA to access the content and that the content is streamed over the Internet. Interactive video is a subset of interactive multimedia and hypermedia technology where the video content defines the timeline of the presentation and is thereby the driving force. We will discuss the rationale behind this constraint in the next two subsections.

In this section we will discuss a few application areas for interactive video; the list is by no means exhaustive. We have picked a few areas that will help us identify some of the features that an interactive video system needs to provide.

We have already mentioned how interactivity will add value to television news services. The world is becoming increasingly complex and global. As a result, users' interests are more diverse than ever before. A European stockbroker working for an international company on Wall Street in New York is usually not satisfied with the television news services provided for the "average" New Yorker. Personalized television news would enable the stockbroker to include more financial and international news stories in the video stream. The stockbroker may find certain news stories important and may want to pause the video to access the World-Wide Web to find more details about the given event.

The rate of change today makes it difficult for everyone to keep up to date on new technologies. This creates a need for people to constantly acquire new skills and expertise. Distance education using video and multimedia material is a good way to provide training when people need it. Personalized, interactive video will allow students to customize the training material to fit their needs and knowledge. Assume that a professor is giving a lecture on image processing and recognition. A student graduating in computer science may need to understand the details of common image segmentation techniques, while a user of image processing software may only need to know the limitations of the various techniques.

Media streaming has been adopted by many companies that use such technologies to distribute important information to their employees. A personalized, interactive video system will make these videos more effective by allowing employees to select the information that has an impact on their work and position.

Several digital video systems [1, 2, 3, 8, 9, 10] and document models [11, 12] have been developed for interactive video and hypervideo. The term hypervideo was first proposed by the creators of HyperCafe [8]. HyperCafe was developed as a new way to structure and dynamically present a combination of video and text-based data. Other systems, such as the Networked Hyper QuickTime prototype [10], take a video-driven approach where the video playback provides a timeline with which other media (such as slide shows) are synchronized.

The Synchronized Multimedia Integration Language (SMIL) [13] developed by the World-Wide Web Consortium provides a standard for scripting multimedia presentations that can be used to develop interactive video and hypervideo on the web. The streaming server developed by Real Networks, Inc. [1], for instance, implements SMIL on top of the Real-Time Streaming Protocol and provides a standard way to deliver interactive video on the web.

Personalization and adaptation of information to meet the users' needs and interests is a very active research area. Most of this work is related to text and hypertext personalization. A few projects have also been investigating personalization of multimedia content: Not and Zancanaro [14] discuss content adaptation for audio-based hypertext; Papadimitriou et al. [15] describe the implementation of personalized entertainment services on broadband networks; and Merialdo et al. [16] describe a system for personalizing television news to optimize the content value for a specific user.

Many hypermedia authoring systems and techniques are discussed in the literature or are commercially available. These include the GRiNS [17] editor for SMIL, the Microsoft Media Producer [18], MediaLoom [19], the HyperProp system [20], and the authoring tool implemented by Auffret et al. [21]. These systems give the author a high degree of freedom - and responsibility - in designing and organizing the hypermedia document.

Any information system needs to provide delivery features to be used by the viewer to consume content as well as management features that will be used by the service manager to manage the service and the content. Based on results from the most important delivery features can be summarized as follows:

An interactive video delivery system must include a management system for composing and managing content. The management system obviously needs to provide mechanisms that can be used to manage all the delivery features properly. An interactive video management system should have the following characteristics:

This paper is organized as follows: Chapter 2 describes an application framework or template for interactive video applications. Chapter 3 describes the architecture of HotStreams - an interactive video system that implements the proposed framework. Chapter 4 describes in more detail the system's interactivity and delivery features. Chapter 5 describes the system's management tools, while Chapter 6 concludes this paper.

The interactive video application framework proposed in this paper defines a user interface for interactive video playback. The approach taken is similar to the video-based approach used in the Networked Hyper QuickTime system. The interactive video client runs inside a web browser and uses several frames for displaying data to the end-user: one for displaying the interactive table of contents, which provides a textual summary of each of the sections of the video; one for displaying interactive video content; and one or more for displaying other media objects, such as images, illustrations, slides, etc.

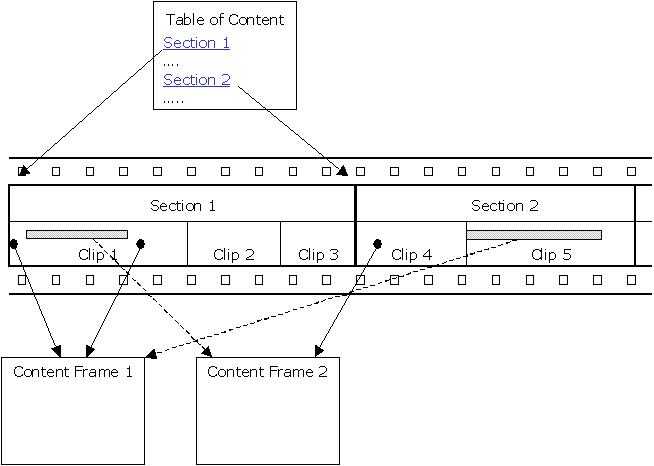

The framework is based on a video-driven content model, similar to the one described by Auffret et al. The content model is illustrated in Figure 1. The model is comprised of the following elements:

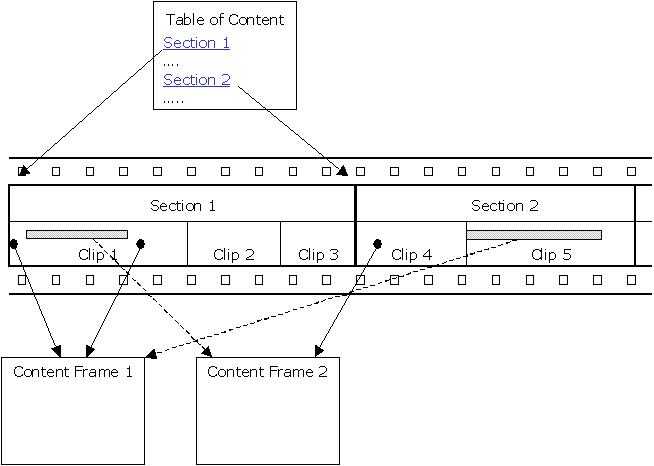

The proposed application framework enables content providers to design their specific user interfaces without modifying the application logic. Figure 2 shows one example application interface where the table of contents frame is located in the lower left part of the figure. The RealNetworks G2 player is located in the frame above. There is only one additional frame for other multimedia objects in this example, the frame to the left on the figure. The framework gives the application access to the information to be displayed in the various frames and the means to connect the functionality of the frames - for instance, to load a given web page into the frame to the left when the user activates a hyperlink.

The application framework is based on a personalization model in which sequence elements, hyperlinks, and synchronization points are profiled using vectors of descriptors. The application framework provides the means for the content provider to define the descriptor schemes and the descriptors that will be used for matching the content against the user profile. A descriptor scheme may be hierarchical or unordered. The descriptors in a hierarchical descriptor scheme are ordered hierarchically. The rating system for American movies is an example of a hierarchical scheme. Any video clip rated G (general audience) can legally be shown to an R (restricted) rated audience. The framework fully supports hierarchical descriptor schemes.
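The difference between the two kinds of descriptor schemes can be illustrated with a minimal sketch. It assumes that a hierarchical scheme is stored as a list ordered from least to most restrictive and that an unordered scheme matches when content and user share at least one descriptor; both rules, and the names `MOVIE_RATINGS`, `hierarchical_match`, and `unordered_match`, are assumptions made for illustration and are not taken from HotStreams.

```python
# Assumed representation: a hierarchical scheme is a list ordered from the
# least restrictive descriptor to the most restrictive one.
MOVIE_RATINGS = ["G", "PG", "PG-13", "R", "NC-17"]


def hierarchical_match(content_descriptor, user_descriptor, scheme):
    """Content matches if it ranks at or below the user's level in the scheme."""
    return scheme.index(content_descriptor) <= scheme.index(user_descriptor)


def unordered_match(content_descriptors, user_descriptors):
    """Assumed rule for unordered schemes: at least one shared descriptor."""
    return bool(set(content_descriptors) & set(user_descriptors))


# A G-rated clip offered to an R-rated audience, and the reverse case.
print(hierarchical_match("G", "R", MOVIE_RATINGS))
print(hierarchical_match("R", "PG", MOVIE_RATINGS))
```

With this ordering, the G-rated clip is accepted for the R-rated audience, while the R-rated clip is rejected for a PG audience.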

The personalization process consists of the following steps:

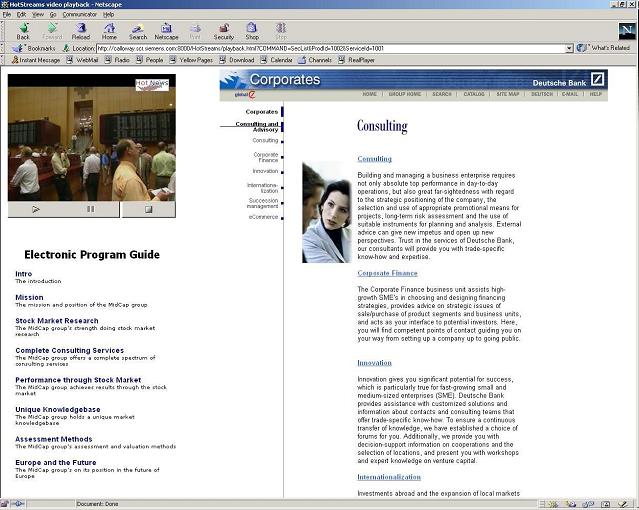

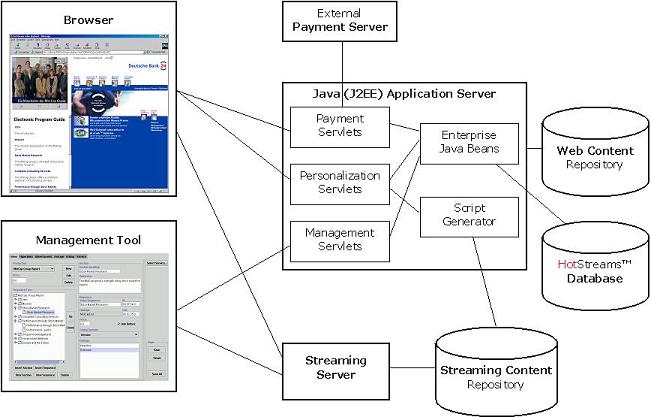

The HotStreams system implements the application framework presented in this paper. It is built on top of the Java 2 Enterprise Edition (J2EE) [23] platform to provide a robust, scalable system for the content provider to manage and deliver personalized presentations. HotStreams uses a multi-tier, web-based architecture consisting of a web tier, a middle tier, a backend database tier, and a streaming platform. The web tier consists of JSPs that produce dynamic HTML, servlets that control the management and personalization workflows, and Java applets, along with HTML and graphic pages. The middle tier comprises Enterprise JavaBeans (EJBs), with the business logic in the session beans and the entity beans reflecting the database. The data used for personalization is stored in backend databases, and HotStreams delivers the personalized video content from streaming video servers.

HotStreams is integrated with a payment system to allow payment transactions for viewing content ranging from a tiny video clip (e.g., a clip about your favorite sports team from the evening sports wrap-up) to entire movies. Currently, the HotStreams system is integrated with the Brokat X-Pay system [24], which complies with the Secure Electronic Transaction (SET) [25] standard. The Brokat SET system is integrated with HotStreams through payment EJBs and a servlet. The SET components include the X-Pay wallet, X-Pay server, and X-Pay gateway and accessors.

The personalization server components run within the context of a J2EE application server (see Figure 3). The application server provides the scalability, security, and transaction processing required for a large-scale e-commerce system. The HotStreams components are compliant with the J2EE specifications and have been tested on the J2EE Reference Implementation (RI). The core functionality of the HotStreams server lies in the session EJBs, which personalize the video content based on the user criteria. Other functionality, such as dynamic generation of SMIL files and the advertisement insertion algorithms, is also encapsulated in the session beans.

The personalization server interfaces with the web tier using servlets and communicates using serialized objects and XML on top of HTTP. This allows for applets to access the server through corporate firewalls. The servlets help in the workflow control by accepting requests from the clients (JSP or applets) and forwarding them to the appropriate EJBs in the middle tier. The data is encapsulated in serialized objects and sent back and forth using HTTP. The payment servlet interfaces with the X-Pay server for payment transactions and for keeping a transaction history.

The transaction history not only keeps track of payments but could also be used for tracking the user experience of the personalized presentation. Currently, a transaction involves tracking events for a particular user session. Most e-commerce systems are used to trade services and hard goods. A system like HotStreams, however, is used to trade multimedia information. Such systems need to provide security mechanisms that protect the multimedia information from being accessed by non-paying users. HotStreams handles this through an interaction between the payment servlets and the personalization servlets.

The personalization files generated in SMIL consist of the filtered video clips the customer has requested along with the appropriate hyperlinks and graphics to be shown during the presentation. After the personalization query is run against the database, the SMIL generation EJB parses a SMIL template file and uses XML Document Object Model (DOM) functions to create nodes, traverse the hierarchy, and change the content of the nodes. The user can easily modify the template file to set viewing parameters, such as how the video should be presented on the screen, and to accommodate different formats such as PAL and NTSC. The personalization features are described in more detail in Chapter 4.

The primary function of the asset management server is to provide management functionality. The asset management server contains the management servlets and the management service applet that is downloaded to the web browser when the content manager accesses the management server site (see Figure 3). The management servlet retrieves and updates data in the database by accessing the relevant EJBs.

The G2 server from Real Networks is used as the streaming server in the current version of HotStreams because it supports SMIL streaming. The personalized SMIL files are directly saved into the streaming server's content repository by the script generator.

The content provider (e.g., a hotel chain or a business news service) might wish to charge the user for viewing certain content. HotStreams provides a fine-grained pricing structure in which the content provider can charge for anything from tiny clips to large videos such as movies. Moreover, several payment models are possible, such as subscription, where users subscribe to a channel, or pay-per-view, similar to cable TV today. The main difference is that the user pays for the personalized presentation and only for the content he or she is interested in. As a HotStreams production is composed of sections that are further decomposed into sequences, one can associate a price at any level, from the individual sequence to the production or the entire service itself.
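As a rough illustration of sequence-level pricing, the sketch below sums the prices of the sequence elements that were actually delivered in a session. It assumes that prices are kept as integer cents per sequence element; the function name `session_charge` and the sequence identifiers are hypothetical, and the sketch does not cover prices set at the section, production, or service level.

```python
def session_charge(sequence_prices, delivered_sequences):
    """Sum the price of each distinct sequence element that was delivered."""
    return sum(sequence_prices[seq_id] for seq_id in set(delivered_sequences))


# Hypothetical prices in cents for three sequence elements.
prices_in_cents = {"intro": 0, "market_report": 5, "interview": 10}

# Only two of the three sequences survived personalization and were delivered.
print(session_charge(prices_in_cents, ["intro", "market_report"]))
```

Using integer cents rather than floating-point currency values avoids rounding surprises when many small amounts are added.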

The user might charge the presentation to a particular account, possibly associated with a corporate accounting system, or might use micropayments. HotStreams has been integrated with the Brokat SET system as described earlier.

This section describes how a HotStreams server creates and delivers personalized, interactive content to the end-user. The current implementation of the system creates SMIL files that represent the personalized content. In this section, we will describe how the content provider may customize the SMIL layout, how the outcome of the personalization is mapped to SMIL constructs, and how the table of contents is created.

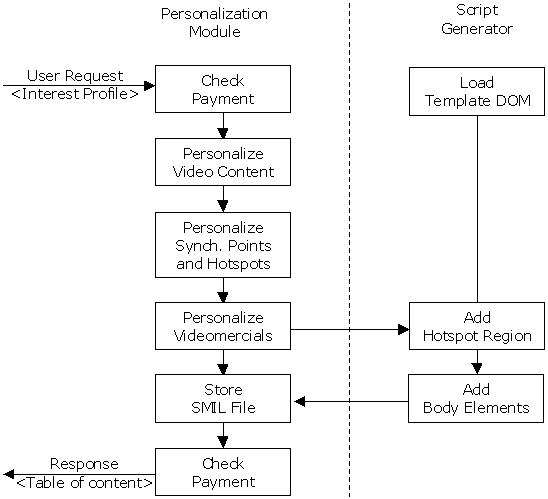

We will start by revisiting the personalization workflow that we briefly presented in Section 2.2. Figure 4 shows how the personalization module and the script generator interact to generate a personalized version of the interactive video in the form of a SMIL file. The following subsections will describe the personalization process in more detail.

SMIL files are logically divided into two parts - the layout part and the body part. A HotStreams server will add SMIL body elements to a personalized SMIL file. The layout part, however, is defined when the application is configured. A HotStreams server loads a template SMIL file when starting up. The template file should define the layout element and leave the body element empty. The following code sample represents a typical template file for HotStreams - it defines a 176 by 144 pixel video region with the fill attribute set to the fit value. The content provider may change the application layout, for instance, to resize the video region or set a different fill attribute, by just modifying the template SMIL file and restarting the server.

    <?xml version="1.0"?>
    <smil>
      <head>
        <layout>
          <root-layout height="144" width="176" />
          <region id="vcr" top="0" left="0" height="100%" width="100%"
                  fill="fit" z-index="4" />
        </layout>
      </head>
      <body>
      </body>
    </smil>

An interactive video is composed of a sequence of video elements, grouped into sections. The aim of the personalization process is to select the sequence elements and the sections that match the end user's interest profile. The personalization module forwards the sequence elements and sections that "survived" personalization to the script generator. The script generator adds elements to the body of the resulting SMIL file as illustrated in the following SMIL sample:

    <seq id="section_0" title="Intro" abstract="The introduction">
      <video src="rtsp://theServer/thePath/theFile1" region="vcr"
             clip-begin="0ms" clip-end="30000ms" />
      <video src="rtsp://theServer/thePath/theFile1" region="vcr"
             clip-begin="75000ms" clip-end="90000ms" />
      <video src="rtsp://theServer/thePath/theFile2" region="vcr"
             clip-begin="0ms" clip-end="30000ms" />
      <video src="rtsp://theServer/thePath/theFile3" region="vcr"
             clip-begin="0ms" clip-end="60000ms" />
    </seq>

Each section of the interactive video is represented as a <seq> element. The server generates an identifier for each of the sections to enable direct access to the start of the section. The server also fills in the title and the abstract attributes with values retrieved from the database. The server concatenates contiguous sequence elements and adds one <video> element for each such concatenated group. The server sets the values of the clip-begin and clip-end attributes with the actual database values.
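The generation step described above can be sketched with standard DOM calls. HotStreams performs it in a Java session bean; the Python version below is only an approximation under stated assumptions: clips are given as hypothetical `(src, begin_ms, end_ms)` tuples, two clips are treated as contiguous when they come from the same file and one ends exactly where the next begins, and the helper names `merge_contiguous` and `add_section` are invented for this example.

```python
from xml.dom.minidom import parseString

# Template with a layout and an empty body, as in the sample template file.
TEMPLATE = """<?xml version="1.0"?>
<smil>
  <head>
    <layout>
      <root-layout height="144" width="176" />
      <region id="vcr" top="0" left="0" height="100%" width="100%"
              fill="fit" z-index="4" />
    </layout>
  </head>
  <body>
  </body>
</smil>"""


def merge_contiguous(clips):
    """Merge neighbouring clips of one file where one ends as the next begins."""
    merged = []
    for src, begin, end in clips:
        if merged and merged[-1][0] == src and merged[-1][2] == begin:
            merged[-1] = (src, merged[-1][1], end)
        else:
            merged.append((src, begin, end))
    return merged


def add_section(doc, index, title, abstract, clips):
    """Append one <seq> to <body>, with one <video> per merged clip group."""
    seq = doc.createElement("seq")
    seq.setAttribute("id", "section_%d" % index)
    seq.setAttribute("title", title)
    seq.setAttribute("abstract", abstract)
    for src, begin, end in merge_contiguous(clips):
        video = doc.createElement("video")
        video.setAttribute("src", src)
        video.setAttribute("region", "vcr")
        video.setAttribute("clip-begin", "%dms" % begin)
        video.setAttribute("clip-end", "%dms" % end)
        seq.appendChild(video)
    doc.getElementsByTagName("body")[0].appendChild(seq)
    return seq


doc = parseString(TEMPLATE)
clips = [
    ("rtsp://theServer/thePath/theFile1", 0, 20000),
    ("rtsp://theServer/thePath/theFile1", 20000, 30000),  # contiguous with the first
    ("rtsp://theServer/thePath/theFile1", 75000, 90000),
]
section = add_section(doc, 0, "Intro", "The introduction", clips)
print(section.toxml())
```

In this run the first two clips collapse into a single `<video>` element covering 0 ms to 30000 ms, while the third clip keeps its own element because of the gap before it.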

The sequences that passed the first step of the personalization may be anchor points for hotspots and synchronization points. These will be matched with the user's interest profile. Unfortunately, SMIL does not support synchronization of media being displayed outside the SMIL player. Hence, synchronization of media to be displayed in the web browser has to be done externally. The hyperlinks that pass the personalization are forwarded to the script generator. The script generator needs to add a layout region for the hotspot image - if not already existing. Video clips without hotspots will be generated as illustrated above. Video clips that contain hotspots will look like this:

    <par>
      <video src="rtsp://theServer/thePath/theFile1" region="vcr"
             clip-begin="0ms" clip-end="30000ms" />
      <img region="aiu_1" src="http://theServer/thePath/theIcon1.jpg" end="15000ms">
        <anchor href="http://www.siemens.com/" show="new" />
      </img>
      <img region="aiu_2" src="http://theServer/thePath/theIcon2.jpg">
        <anchor href="&&theFrame&&http://theServer/thePath/thePage.html"
                show="new" />
      </img>
    </par>

Two hotspots will be shown during the playback of this video clip. The first one will be shown in the region named aiu_1 during the first 15 seconds of the clip. The second will be shown in the region named aiu_2 throughout the whole clip. The href attribute of the second <anchor> element is "decorated": the text appearing between the two pairs of ampersands will be extracted by the browser during playback and interpreted as the name of the frame in which the hyperlink is to be loaded. Hence, the Siemens web page will be loaded in an external browser window if the user clicks on theIcon1, and thePage will be loaded in a browser frame named theFrame if the user clicks on theIcon2.
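The decorated href convention can be illustrated with a short parsing sketch. In HotStreams this step happens in the browser during playback; the Python function below only approximates the logic, and the name `split_decorated_href` as well as the handling of malformed input are assumptions.

```python
def split_decorated_href(href):
    """Return (frame_name, url); frame_name is None for an undecorated href."""
    if href.startswith("&&"):
        # Text between the two ampersand pairs names the target frame.
        frame, separator, url = href[2:].partition("&&")
        if separator and frame:
            return frame, url
    return None, href


print(split_decorated_href("&&theFrame&&http://theServer/thePath/thePage.html"))
print(split_decorated_href("http://www.siemens.com/"))
```

An href without the decoration is returned unchanged with no frame name, which corresponds to loading the target in an external browser window.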

The insertion of video commercials is currently based on a value. The application page designer may define a fixed amount of video commercials to be inserted in each video stream or may allow the end user to select. In both cases, the amount of video commercials to insert is determined by a currency value. Each video commercial is also given a price value, and the server will select as many video commercials as necessary to bring the total value to the amount selected by the end user. The server will insert video commercials at one or more of the predefined insertion points (see Section 5.2). The server will also select video commercials that match the profile of the video content.
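A minimal sketch of value-based selection follows. It assumes that commercials are considered in the order given, that prices are integer cents, and that a commercial matches the content when they share at least one advertisement type; the function `select_commercials` and the two "hypothetical" commercials are invented for illustration, and the actual HotStreams selection algorithm may differ.

```python
def select_commercials(commercials, target_value, content_ad_types):
    """Pick profile-matching commercials until their total value reaches the target."""
    selected, total = [], 0
    for ad in commercials:
        if total >= target_value:
            break
        # Assumed matching rule: at least one shared advertisement type.
        if set(ad["ad_types"]) & set(content_ad_types):
            selected.append(ad["id"])
            total += ad["price_cents"]
    return selected, total


commercials = [
    {"id": "Siemens S10", "price_cents": 5,
     "ad_types": ["ad_electronics", "ad_cellular", "ad_youngster"]},
    {"id": "hypothetical_car_ad", "price_cents": 10, "ad_types": ["ad_automotive"]},
    {"id": "hypothetical_phone_ad", "price_cents": 5, "ad_types": ["ad_cellular"]},
]

# The end user selected 10 cents worth of commercials for cellular-related content.
print(select_commercials(commercials, 10, ["ad_cellular"]))
```

The selected commercials would then be placed at the predefined insertion points of the personalized video.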

The personalization module creates a Java Bean object that gives the Table of Content JSP access to information about each of the sections in the personalized video, i.e., the name of the section, the abstract, and the URL that can be passed to the SMIL player to start playback from the beginning of the section. The URL has the generic form: rtsp://theServer/thePath/theFile.smi#section_nn, where nn is the index of the given section.
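The table of contents entries can be sketched as follows. The URL pattern comes from the text above; the function names and the representation of a section as a `(name, abstract)` pair are assumptions, and in HotStreams this information is exposed through a Java Bean rather than Python dictionaries.

```python
def section_url(smil_url, index):
    """Build the URL that starts playback at the section with the given index."""
    return "%s#section_%d" % (smil_url, index)


def table_of_contents(smil_url, sections):
    """Return one entry per section: name, abstract, and playback URL."""
    return [
        {"name": name, "abstract": abstract, "url": section_url(smil_url, index)}
        for index, (name, abstract) in enumerate(sections)
    ]


entries = table_of_contents(
    "rtsp://theServer/thePath/theFile.smi",
    [("Intro", "The introduction")],
)
print(entries[0]["url"])
```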

The application framework proposed in this paper contains a complete set of management tools for assembling, profiling, and managing interactive video content. We already discussed the need for management tools briefly in Section 1.3. We identified three key requirements that an interactive video management system should fulfill. The management tools we have developed address these requirements in the following way:

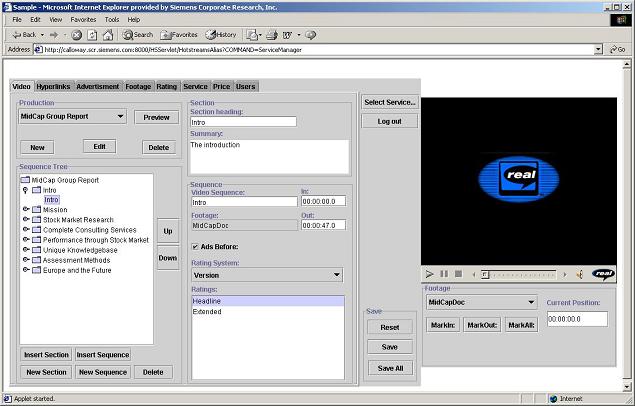

Figure 5 shows the management tools. The user interface is divided into three major parts. The service manager applet is to the left on the interface. This applet provides all the management functions the user needs to manage the service and the content. The embedded RealPlayer® from Real Networks, Inc. [26] is shown in the upper right part of the interface. The playback control applet to the lower right interacts with the RealPlayer.

The playback control can be used by the content manager to set in and out points in the video. The playback control retrieves the current play position from the RealPlayer when the content manager clicks either of the buttons, and the corresponding value is automatically set in the service manager applet.

The communication between the management tools and the server is carried by HTTP. Currently, the applet-servlet protocol is based on passing serialized Java objects. In the following, we describe the protocol in terms of XML elements because XML is a convenient and precise way to describe the content of the objects being passed.

The asset management protocol defines four types of applet requests:

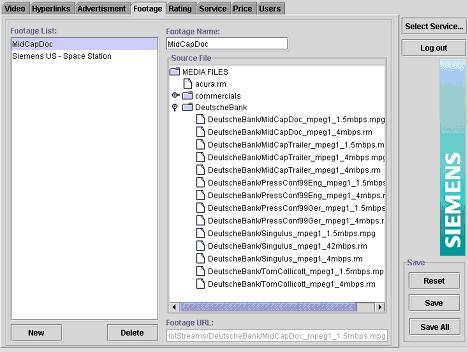

The management tool is used to "register" video files in the database. The content manager can register video files by using the footage panel. The server transfers file and directory information to the panel so that a directory tree of all available files can be shown to the content provider. The content provider may associate any of the available files with a footage object. A footage object is an abstract representation of a video file. Multiple files can be associated with the same footage object. Different files may store the video content in a different format, bit rate, or location than the other source files for the same content. This feature can be used to provide content that can be streamed to a diverse range of devices, such as a PDA connected to a mobile network, a remote PC connected to a 56 K modem, and a set-top box connected to ADSL. Using World-Wide Web terms, the footage is a resource with a unique URN that may have to exist under one or more URLs. The footage panel is shown in Figure 6.

    <footage id="NY_2000_10">
      <name>New York City shots, Oct. 2000</name>
      <duration>302500</duration>
      <sourceFiles>
        <sourceFile fileNo="0">
          <url>rtsp://theServer/thePath/theFile.mpg</url>
          <height>288</height>
          <width>352</width>
          <bitrate>25.0</bitrate>
        </sourceFile>
      </sourceFiles>
    </footage>
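One possible way to resolve a footage object into a source file is sketched below. The selection rule (the highest bit rate that does not exceed what the client can handle, otherwise the smallest file) is an assumption and is not described in the paper; the second `sourceFile` entry, its URL, and the function `resolve_footage` are hypothetical, and the bit rate values are compared as plain numbers because the sample does not state a unit.

```python
import xml.etree.ElementTree as ET

FOOTAGE_XML = """
<footage id="NY_2000_10">
  <name>New York City shots, Oct. 2000</name>
  <duration>302500</duration>
  <sourceFiles>
    <sourceFile fileNo="0">
      <url>rtsp://theServer/thePath/theFile.mpg</url>
      <height>288</height><width>352</width><bitrate>25.0</bitrate>
    </sourceFile>
    <!-- Hypothetical second encoding, added for this sketch only -->
    <sourceFile fileNo="1">
      <url>rtsp://theServer/thePath/theFileLow.mpg</url>
      <height>144</height><width>176</width><bitrate>5.0</bitrate>
    </sourceFile>
  </sourceFiles>
</footage>
"""


def resolve_footage(footage, max_bitrate):
    """Return the URL of the best source file for a client bit rate limit."""
    files = [
        (float(source.findtext("bitrate")), source.findtext("url"))
        for source in footage.iter("sourceFile")
    ]
    fitting = [entry for entry in files if entry[0] <= max_bitrate]
    # Prefer the best quality that fits; otherwise fall back to the smallest file.
    return max(fitting)[1] if fitting else min(files)[1]


footage = ET.fromstring(FOOTAGE_XML)
print(resolve_footage(footage, 30.0))
print(resolve_footage(footage, 10.0))
```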

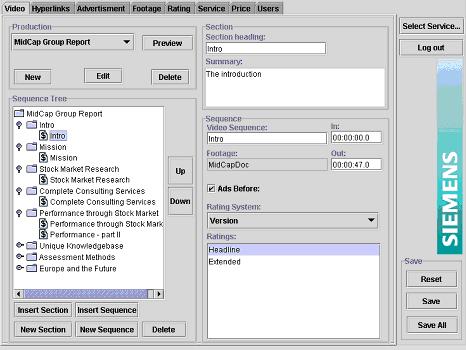

The video panel is the main panel for creating, structuring, and profiling video productions. The panel is shown in Figure 7.

The left hand side of the panel is used to create and structure the video production. The content manager may add and delete sections and sequence elements and may modify the order in which sections and sequence elements appear.

The upper right-hand side of the panel is used to enter the title and the abstract of the selected section. This will be used when the server creates a table of contents entry for the section (see Section 4.5).

The lower right hand side of the panel is used to describe the individual sequence elements, i.e., to what footage it belongs, the in (clip-begin) and out (clip-end) points, the rating (profiling) information, and whether the beginning of the sequence element is a good place for insertions of video commercials. The dollar sign appearing in the sequence element icons in the tree indicates that the Ads Before checkbox has been checked for the given sequence elements.

The following XML element shows sample production information being passed between the applet and the servlet. The production has only one section that contains two sequence elements. The second sequence element specifically targets Norwegian tourists. Video commercials might be inserted in front of the first sequence element but never between the elements. The XML sample contains pricing information; both sequence elements are delivered free of charge. However, a different panel, the pricing panel, provides the interface for defining pricing information.

    <production id="NY_Guide">
      <name>New York City Travel Guide</name>
      <sections>
        <section id="NY_Guide_Sec0" orderNo="0">
          <title>Arriving Manhattan by train</title>
          <abstract>Penn Station is located in 34th Street</abstract>
          <seqElements>
            <seqElement id="NY_Guide_sqEl0" orderNo="0">
              <name>Intro</name>
              <adsBefore checked="true" />
              <footage ref="NY_2000_10" />
              <in>0</in>
              <duration>20000</duration>
              <price>0</price>
              <ratings>
                <rating id="type_tourist" />
                <rating id="country_all" />
              </ratings>
            </seqElement>
            <seqElement id="NY_Guide_sqEl1" orderNo="1">
              <name>Norwegian Consulate</name>
              <adsBefore checked="false" />
              <footage ref="NY_2000_10" />
              <in>185000</in>
              <duration>15000</duration>
              <price>0</price>
              <ratings>
                <rating id="type_tourist" />
                <rating id="country_Norway" />
              </ratings>
            </seqElement>
          </seqElements>
        </section>
      </sections>
    </production>
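How such a production might be filtered against a user profile can be sketched as follows. The matching rule is an assumption made for this example: each rating id is read as `scheme_value`, a value of `all` matches every user, and a sequence element is kept only if every scheme it is rated under accepts the user. The function names, the profile dictionaries, and the trimmed XML (only the fields used here) are likewise illustrative.

```python
import xml.etree.ElementTree as ET

PRODUCTION_XML = """
<production id="NY_Guide">
  <seqElement id="NY_Guide_sqEl0" orderNo="0">
    <in>0</in><duration>20000</duration>
    <ratings><rating id="type_tourist" /><rating id="country_all" /></ratings>
  </seqElement>
  <seqElement id="NY_Guide_sqEl1" orderNo="1">
    <in>185000</in><duration>15000</duration>
    <ratings><rating id="type_tourist" /><rating id="country_Norway" /></ratings>
  </seqElement>
</production>
"""


def matches(rating_ids, user_profile):
    """Assumed rule: every rated scheme must accept the user's value or be 'all'."""
    by_scheme = {}
    for rating_id in rating_ids:
        scheme, _, value = rating_id.partition("_")
        by_scheme.setdefault(scheme, set()).add(value)
    return all(
        "all" in values or user_profile.get(scheme) in values
        for scheme, values in by_scheme.items()
    )


def personalize(production, user_profile):
    """Return (id, clip_begin_ms, clip_end_ms) for each matching sequence element."""
    clips = []
    for element in production.iter("seqElement"):
        rating_ids = [rating.get("id") for rating in element.iter("rating")]
        if matches(rating_ids, user_profile):
            begin = int(element.findtext("in"))
            clips.append((element.get("id"), begin, begin + int(element.findtext("duration"))))
    return clips


production = ET.fromstring(PRODUCTION_XML)
print(personalize(production, {"type": "tourist", "country": "Norway"}))
print(personalize(production, {"type": "tourist", "country": "US"}))
```

Under these assumptions a Norwegian tourist receives both sequence elements, while a tourist from the US receives only the introduction.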

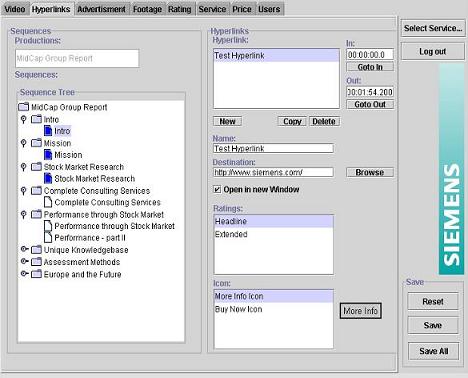

The content manager will use the hyperlink panel to create hyperlinks and specify profiling information. The panel is shown in Figure 8.

The left hand side of the panel is used to visualize the temporal range of the selected hyperlink. The sequence elements during which the hyperlink will be displayed are shown in the sequence tree with dark colored icons.

The right hand side of the panel provides means for creating hyperlinks, defining their appearance and target, and setting profiling descriptors. The panel also enables the content manager to specify in what frame the target object is to be loaded. In this specific configuration, only one frame is defined by the application (see also Figure 2). Hence the tool provides a checkbox for the content manager to check when a specific target is to be loaded in a new browser window.

The following XML element shows sample hyperlink information being passed between the applet and the servlet. The hyperlink covers 20 seconds of one sequence element. It will be shown to all kinds of users from the US. When shown, it will be linked to the More_Info icon, which will be located in the Upper_Right region; both were created in the database during application setup. When clicked, the hyperlink will load the Grand Central Terminal home page in an external browser window.

    <hyperlink id="NY_Grand_Central">
      <name>New York Grand Central Station</name>
      <url>http://www.grandcentralterminal.com/</url>
      <startSeqEl ref="NY_Guide_sqEl0" />
      <startOffset>0</startOffset>
      <endSeqEl ref="NY_Guide_sqEl0" />
      <endOffset>20000</endOffset>
      <region ref="Upper_Right" />
      <icon ref="More_Info" />
      <targetFrame />
      <ratings>
        <rating ref="type_all" />
        <rating ref="country_US" />
      </ratings>
    </hyperlink>

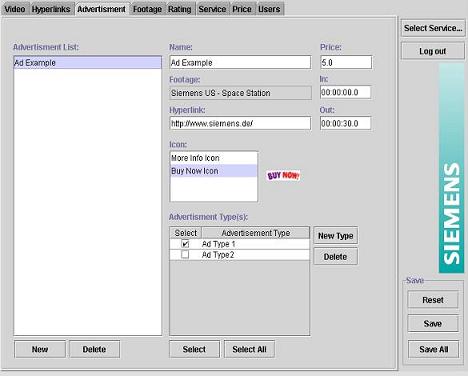

The content manager will use the advertisement panel to create and classify video commercials. The panel is shown in Figure 9.

The panel provides the means for the content manager to create new video commercials from existing footage. The content manager may also specify the URL of the advertiser's or the product's home page and a hotspot icon to be used for creating a hyperlink for the advertiser in the video. The content manager may further define the value (price) of the selected video commercial and may assign an advertisement type classification.

The following XML element shows sample video commercial information being passed between the applet and the servlet. The 30-second commercial can be found at the beginning of the Siemens_S10 footage. Its price value is 0.05 US dollars, and it can be used where commercials for electronics or cellular products are appropriate or for insertion in content targeting young people.

    <advertisement id="Siemens S10">
      <name>Siemens S10 Cellular Phone</name>
      <price>0.05USD</price>
      <sections>
        <section id="Ad_S10_Sec0" orderNo="0">
          <title />
          <abstract />
          <seqElements>
            <seqElement id="Ad_S10_sqEl0" orderNo="0">
              <name />
              <adsBefore checked="false" />
              <footage ref="Siemens_S10" />
              <in>0</in>
              <duration>30000</duration>
              <price>0</price>
              <ratings />
            </seqElement>
          </seqElements>
        </section>
      </sections>
      <adTypes>
        <adType ref="ad_electronics" />
        <adType ref="ad_cellular" />
        <adType ref="ad_youngster" />
      </adTypes>
    </advertisement>

The current version of the management tool offers more management functions than the ones described so far:

This work contributes to web application user interface design by demonstrating how the design of the user interface for an interactive video application can be completely separated from content creation. This allows a content provider to "brand" all their interactive video content in one common way. It also allows the content manager to focus on deciding the best way to create and profile the content without worrying too much about the application interface design. This separation of user interface design from application logic was accomplished by providing a JSP-based user interface and by using a SMIL template file.

The proposed application framework shows that it is possible to implement personalized, interactive video systems that are easy to use for users who want to add interactivity to their video content but who do not have the technologies or the training to use professional authoring tools. The major contributions made regarding interactive video composition, personalization, and management can be summarized as:

The current system is based on SMIL 1.0. There are several other formats that could be used to provide interactive video, including SMIL 2.0 and Microsoft ASX. We will be looking into how to best structure the Script Generator to potentially support several script file formats.

The current system is designed to run on ordinary computers. We will be looking into what is required for the system to support other devices, such as TVs and PDAs.

The current system provides the basis for resolving footage objects (URNs) into source files. We will be investigating more sophisticated methods that may take content, network, and user characteristics into consideration when resolving footage URNs.

Information is exchanged between the applet and the servlet in the form of serialized Java objects. Hence, the client has to be a Java applet or application. An XML Schema [27] based implementation of the protocol will open the management interface for a larger variety of clients.

More research is required in the area of video personalization, for instance, to determine how the content manager can make sure that the video provides meaningful content after being personalized.

Finally, we will be looking at different ways to package the management tool. Currently, all panels are included in the applet sent to the user. There are security as well as performance reasons for not downloading all panels to all users. Targeted packaging may also make the user interface more user-friendly because the user would see only the panels he or she really needs to get the work done.

We would like to thank the Deutsche Bank for giving us access to multimedia content for our experiments.

We would also like to thank Sanjeev Segan, Amogh Chitnis, and Xavier Léauté for their help in implementing and refining the current version of the software.