
| java.lang.Object | ||

| ↳ | android.hardware.camera2.CameraMetadata<TKey> | |

| ↳ | android.hardware.camera2.CaptureResult | |

Known Direct Subclasses

The subset of the results of a single image capture from the image sensor.

Contains a subset of the final configuration for the capture hardware (sensor, lens, flash), the processing pipeline, the control algorithms, and the output buffers.

CaptureResults are produced by a CameraDevice after processing a

CaptureRequest. All properties listed for capture requests can also

be queried on the capture result, to determine the final values used for

capture. The result also includes additional metadata about the state of the

camera device during the capture.

Not all properties returned by getAvailableCaptureResultKeys()

are necessarily available. Some results are partial and will

not have every key set. Only total results are guaranteed to have

every key available that was enabled by the request.

CaptureResult objects are immutable.

| Nested Classes | Description |
|---|---|
| CaptureResult.Key<T> | A Key is used to do capture result field lookups with get(CaptureResult.Key<T>). |

| Inherited Constants |
|---|
| From class android.hardware.camera2.CameraMetadata |

| Fields | |||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|

| BLACK_LEVEL_LOCK |

Whether black-level compensation is locked to its current values, or is free to vary. |

||||||||||

| COLOR_CORRECTION_ABERRATION_MODE |

Mode of operation for the chromatic aberration correction algorithm. |

||||||||||

| COLOR_CORRECTION_GAINS |

Gains applying to Bayer raw color channels for white-balance. |

||||||||||

| COLOR_CORRECTION_MODE |

The mode control selects how the image data is converted from the sensor's native color into linear sRGB color. |

||||||||||

| COLOR_CORRECTION_TRANSFORM |

A color transform matrix to use to transform from sensor RGB color space to output linear sRGB color space. |

||||||||||

| CONTROL_AE_ANTIBANDING_MODE |

The desired setting for the camera device's auto-exposure algorithm's antibanding compensation. |

||||||||||

| CONTROL_AE_EXPOSURE_COMPENSATION |

Adjustment to auto-exposure (AE) target image brightness. |

||||||||||

| CONTROL_AE_LOCK |

Whether auto-exposure (AE) is currently locked to its latest calculated values. |

||||||||||

| CONTROL_AE_MODE |

The desired mode for the camera device's auto-exposure routine. |

||||||||||

| CONTROL_AE_PRECAPTURE_TRIGGER |

Whether the camera device will trigger a precapture metering sequence when it processes this request. |

||||||||||

| CONTROL_AE_REGIONS |

List of metering areas to use for auto-exposure adjustment. |

||||||||||

| CONTROL_AE_STATE |

Current state of the auto-exposure (AE) algorithm. |

||||||||||

| CONTROL_AE_TARGET_FPS_RANGE |

Range over which the auto-exposure routine can adjust the capture frame rate to maintain good exposure. |

||||||||||

| CONTROL_AF_MODE |

Whether auto-focus (AF) is currently enabled, and what mode it is set to. |

||||||||||

| CONTROL_AF_REGIONS |

List of metering areas to use for auto-focus. |

||||||||||

| CONTROL_AF_STATE |

Current state of auto-focus (AF) algorithm. |

||||||||||

| CONTROL_AF_TRIGGER |

Whether the camera device will trigger autofocus for this request. |

||||||||||

| CONTROL_AWB_LOCK |

Whether auto-white balance (AWB) is currently locked to its latest calculated values. |

||||||||||

| CONTROL_AWB_MODE |

Whether auto-white balance (AWB) is currently setting the color transform fields, and what its illumination target is. |

||||||||||

| CONTROL_AWB_REGIONS |

List of metering areas to use for auto-white-balance illuminant estimation. |

||||||||||

| CONTROL_AWB_STATE |

Current state of auto-white balance (AWB) algorithm. |

||||||||||

| CONTROL_CAPTURE_INTENT |

Information to the camera device 3A (auto-exposure, auto-focus, auto-white balance) routines about the purpose of this capture, to help the camera device to decide optimal 3A strategy. |

||||||||||

| CONTROL_EFFECT_MODE |

A special color effect to apply. |

||||||||||

| CONTROL_MODE |

Overall mode of 3A (auto-exposure, auto-white-balance, auto-focus) control routines. |

||||||||||

| CONTROL_SCENE_MODE |

Control for which scene mode is currently active. |

||||||||||

| CONTROL_VIDEO_STABILIZATION_MODE |

Whether video stabilization is active. |

||||||||||

| EDGE_MODE |

Operation mode for edge enhancement. |

||||||||||

| FLASH_MODE |

The desired mode for for the camera device's flash control. |

||||||||||

| FLASH_STATE |

Current state of the flash unit. |

||||||||||

| HOT_PIXEL_MODE |

Operational mode for hot pixel correction. |

||||||||||

| JPEG_GPS_LOCATION |

A location object to use when generating image GPS metadata. |

||||||||||

| JPEG_ORIENTATION |

The orientation for a JPEG image. |

||||||||||

| JPEG_QUALITY |

Compression quality of the final JPEG image. |

||||||||||

| JPEG_THUMBNAIL_QUALITY |

Compression quality of JPEG thumbnail. |

||||||||||

| JPEG_THUMBNAIL_SIZE |

Resolution of embedded JPEG thumbnail. |

||||||||||

| LENS_APERTURE |

The desired lens aperture size, as a ratio of lens focal length to the effective aperture diameter. |

||||||||||

| LENS_FILTER_DENSITY |

The desired setting for the lens neutral density filter(s). |

||||||||||

| LENS_FOCAL_LENGTH |

The desired lens focal length; used for optical zoom. |

||||||||||

| LENS_FOCUS_DISTANCE |

Desired distance to plane of sharpest focus, measured from frontmost surface of the lens. |

||||||||||

| LENS_FOCUS_RANGE |

The range of scene distances that are in sharp focus (depth of field). |

||||||||||

| LENS_OPTICAL_STABILIZATION_MODE |

Sets whether the camera device uses optical image stabilization (OIS) when capturing images. |

||||||||||

| LENS_STATE |

Current lens status. |

||||||||||

| NOISE_REDUCTION_MODE |

Mode of operation for the noise reduction algorithm. |

||||||||||

| REQUEST_PIPELINE_DEPTH |

Specifies the number of pipeline stages the frame went through from when it was exposed to when the final completed result was available to the framework. |

||||||||||

| SCALER_CROP_REGION |

The desired region of the sensor to read out for this capture. |

||||||||||

| SENSOR_EXPOSURE_TIME |

Duration each pixel is exposed to light. |

||||||||||

| SENSOR_FRAME_DURATION |

Duration from start of frame exposure to start of next frame exposure. |

||||||||||

| SENSOR_GREEN_SPLIT |

The worst-case divergence between Bayer green channels. |

||||||||||

| SENSOR_NEUTRAL_COLOR_POINT |

The estimated camera neutral color in the native sensor colorspace at the time of capture. |

||||||||||

| SENSOR_NOISE_PROFILE |

Noise model coefficients for each CFA mosaic channel. |

||||||||||

| SENSOR_ROLLING_SHUTTER_SKEW |

Duration between the start of first row exposure and the start of last row exposure. |

||||||||||

| SENSOR_SENSITIVITY |

The amount of gain applied to sensor data before processing. |

||||||||||

| SENSOR_TEST_PATTERN_DATA |

A pixel |

||||||||||

| SENSOR_TEST_PATTERN_MODE |

When enabled, the sensor sends a test pattern instead of doing a real exposure from the camera. |

||||||||||

| SENSOR_TIMESTAMP |

Time at start of exposure of first row of the image sensor active array, in nanoseconds. |

||||||||||

| SHADING_MODE |

Quality of lens shading correction applied to the image data. |

||||||||||

| STATISTICS_FACES |

List of the faces detected through camera face detection in this capture. |

||||||||||

| STATISTICS_FACE_DETECT_MODE |

Operating mode for the face detector unit. |

||||||||||

| STATISTICS_HOT_PIXEL_MAP |

List of |

||||||||||

| STATISTICS_HOT_PIXEL_MAP_MODE |

Operating mode for hot pixel map generation. |

||||||||||

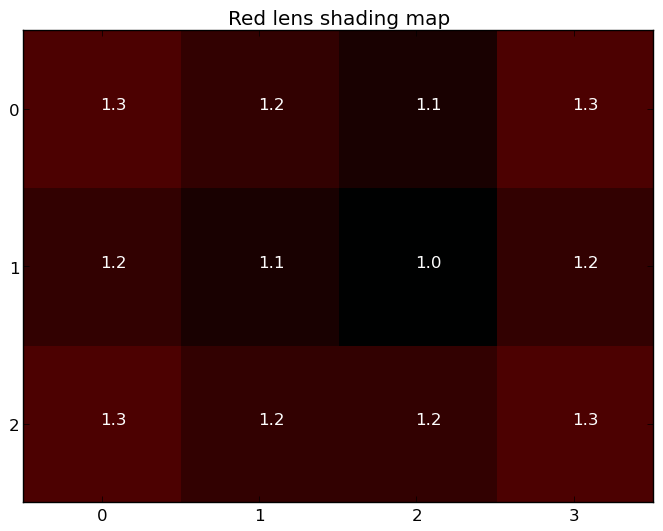

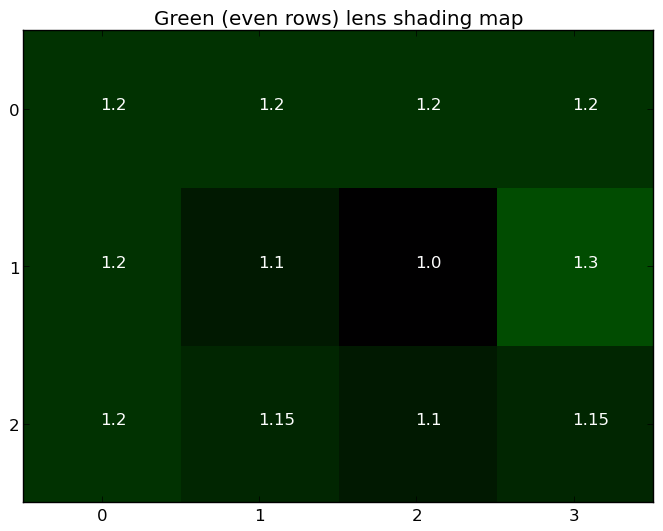

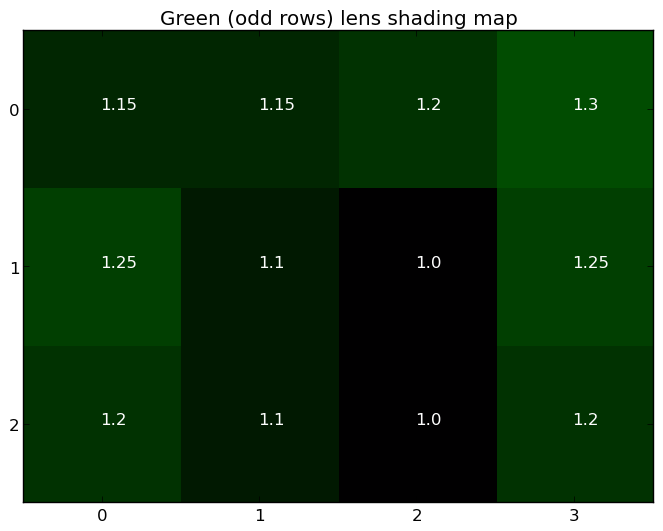

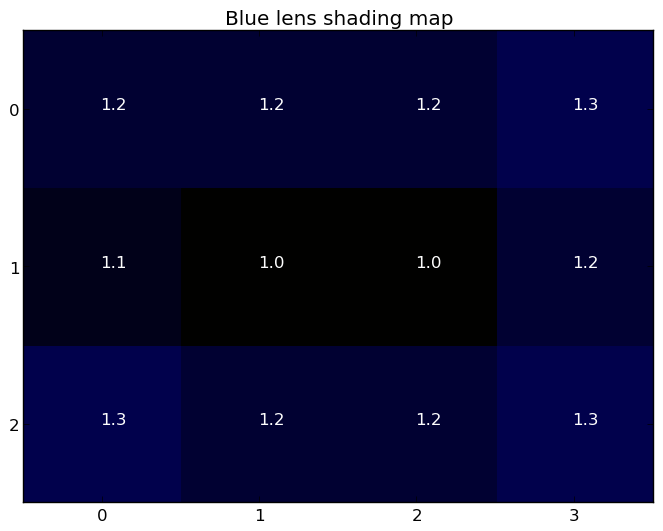

| STATISTICS_LENS_SHADING_CORRECTION_MAP |

The shading map is a low-resolution floating-point map that lists the coefficients used to correct for vignetting, for each Bayer color channel. |

||||||||||

| STATISTICS_LENS_SHADING_MAP_MODE |

Whether the camera device will output the lens shading map in output result metadata. |

||||||||||

| STATISTICS_SCENE_FLICKER |

The camera device estimated scene illumination lighting frequency. |

||||||||||

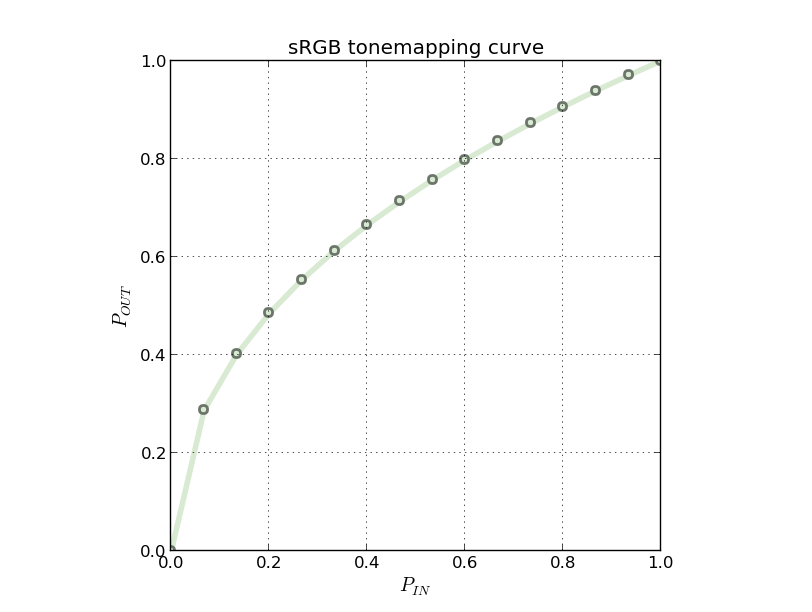

| TONEMAP_CURVE |

Tonemapping / contrast / gamma curve to use when |

||||||||||

| TONEMAP_MODE |

High-level global contrast/gamma/tonemapping control. |

||||||||||

| Public Methods |
|---|
| Get a capture result field value. |
| Get the frame number associated with this result. |
| Returns a list of the keys contained in this map. |
| Get the request associated with this result. |
| The sequence ID for this result that was returned by the capture(CaptureRequest, CameraCaptureSession.CaptureCallback, Handler) family of functions. |

| Inherited Methods |
|---|
| From class android.hardware.camera2.CameraMetadata |
| From class java.lang.Object |

Whether black-level compensation is locked to its current values, or is free to vary.

Whether the black level offset was locked for this frame. Should be

ON if android.blackLevel.lock was ON in the capture request, unless

a change in other capture settings forced the camera device to

perform a black level reset.

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

Mode of operation for the chromatic aberration correction algorithm.

Chromatic (color) aberration occurs because different wavelengths of light cannot focus on the same point after exiting the lens. This metadata defines the high-level control of the chromatic aberration correction algorithm, which aims to minimize the chromatic artifacts that may occur along object boundaries in an image.

FAST and HIGH_QUALITY both mean that aberration correction determined by the camera device will be applied. HIGH_QUALITY indicates that the camera device will use the highest-quality aberration correction algorithms, even if this slows down the capture rate. FAST means the camera device will not slow down the capture rate when applying aberration correction.

LEGACY devices will always be in FAST mode.

Possible values:

Available values for this device:

android.colorCorrection.availableAberrationModes

This key is available on all devices.

Gains applying to Bayer raw color channels for white-balance.

These per-channel gains are either set by the camera device

when the request android.colorCorrection.mode is not

TRANSFORM_MATRIX, or directly by the application in the

request when the android.colorCorrection.mode is

TRANSFORM_MATRIX.

The gains in the result metadata are the gains actually applied by the camera device to the current frame.

Units: Unitless gain factors

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

The mode control selects how the image data is converted from the sensor's native color into linear sRGB color.

When auto-white balance (AWB) is enabled with android.control.awbMode, this

control is overridden by the AWB routine. When AWB is disabled, the

application controls how the color mapping is performed.

We define the expected processing pipeline below. For consistency across devices, this is always the case with TRANSFORM_MATRIX.

When either FAST or HIGH_QUALITY is used, the camera device may

do additional processing but android.colorCorrection.gains and

android.colorCorrection.transform will still be provided by the

camera device (in the results) and be roughly correct.

Switching to TRANSFORM_MATRIX and using the data provided from FAST or HIGH_QUALITY will yield a picture with the same white point as what was produced by the camera device in the earlier frame.

The expected processing pipeline is as follows:

The white balance is encoded by two values, a 4-channel white-balance gain vector (applied in the Bayer domain), and a 3x3 color transform matrix (applied after demosaic).

The 4-channel white-balance gains are defined as:

android.colorCorrection.gains = [ R G_even G_odd B ]

where G_even is the gain for green pixels on even rows of the

output, and G_odd is the gain for green pixels on the odd rows.

These may be identical for a given camera device implementation; if

the camera device does not support a separate gain for even/odd green

channels, it will use the G_even value, and write G_odd equal to

G_even in the output result metadata.

The matrices for color transforms are defined as a 9-entry vector:

android.colorCorrection.transform = [ I0 I1 I2 I3 I4 I5 I6 I7 I8 ]

which define a transform from input sensor colors, P_in = [ r g b ],

to output linear sRGB, P_out = [ r' g' b' ],

with colors as follows:

r' = I0r + I1g + I2b

g' = I3r + I4g + I5b

b' = I6r + I7g + I8b

Both the input and output value ranges must match. Overflow/underflow values are clipped to fit within the range.
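
The pipeline described above can be sketched in plain Java. This is only an illustration, not camera2 code: the class and method names are hypothetical, the transform is assumed to be stored row-major as [ I0 .. I8 ], and colors are assumed to be normalized to [0, 1]. The gains are applied per Bayer channel in the order [ R G_even G_odd B ], and the transform output is clipped as the text requires.

```java
/** Illustrative sketch only; these names are hypothetical and not part of camera2. */
final class ColorCorrectionSketch {

    /** Clips a value to the normalized range [0, 1]. */
    static float clip(float v) {
        return Math.max(0f, Math.min(1f, v));
    }

    /**
     * Multiplies one set of Bayer samples, ordered [R, G_even, G_odd, B],
     * by the matching white-balance gains. No clipping is done here
     * (an assumption; the text only specifies clipping for the transform).
     */
    static float[] applyGains(float[] gains, float[] bayer) {
        if (gains.length != 4 || bayer.length != 4) {
            throw new IllegalArgumentException("expected 4 gains and 4 samples");
        }
        float[] out = new float[4];
        for (int i = 0; i < 4; i++) {
            out[i] = gains[i] * bayer[i];
        }
        return out;
    }

    /**
     * Applies the 9-entry transform [I0 .. I8] (assumed row-major) to a
     * demosaiced sensor color [r, g, b] and clips each output channel.
     */
    static float[] applyTransform(float[] transform, float[] rgb) {
        if (transform.length != 9 || rgb.length != 3) {
            throw new IllegalArgumentException("expected 9 coefficients and 3 channels");
        }
        float[] out = new float[3];
        for (int row = 0; row < 3; row++) {
            float sum = 0f;
            for (int col = 0; col < 3; col++) {
                sum += transform[3 * row + col] * rgb[col];
            }
            out[row] = clip(sum); // overflow/underflow is clipped to the range
        }
        return out;
    }
}
```

With an identity transform the color passes through unchanged; a coefficient that pushes a channel above 1.0 or below 0 shows the clipping behavior.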

Possible values:

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

A color transform matrix to use to transform from sensor RGB color space to output linear sRGB color space.

This matrix is either set by the camera device when the request

android.colorCorrection.mode is not TRANSFORM_MATRIX, or

directly by the application in the request when the

android.colorCorrection.mode is TRANSFORM_MATRIX.

In the latter case, the camera device may round the matrix to account

for precision issues; the final rounded matrix should be reported back

in this matrix result metadata. The transform should keep the magnitude

of the output color values within [0, 1.0] (assuming input color

values are within the normalized range [0, 1.0]), or clipping may occur.

Units: Unitless scale factors

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

The desired setting for the camera device's auto-exposure algorithm's antibanding compensation.

Some kinds of lighting fixtures, such as some fluorescent lights, flicker at the rate of the power supply frequency (60Hz or 50Hz, depending on country). While this is typically not noticeable to a person, it can be visible to a camera device. If a camera sets its exposure time to the wrong value, the flicker may become visible in the viewfinder as flicker or in a final captured image, as a set of variable-brightness bands across the image.

Therefore, the auto-exposure routines of camera devices include antibanding routines that ensure that the chosen exposure value will not cause such banding. The choice of exposure time depends on the rate of flicker, which the camera device can detect automatically, or the expected rate can be selected by the application using this control.

A given camera device may not support all of the possible

options for the antibanding mode. The

android.control.aeAvailableAntibandingModes key contains

the available modes for a given camera device.

The default mode is AUTO, which is supported by all camera devices.

If manual exposure control is enabled (by setting

android.control.aeMode or android.control.mode to OFF),

then this setting has no effect, and the application must

ensure it selects exposure times that do not cause banding

issues. The android.statistics.sceneFlicker key can assist

the application in this.

Possible values:

Available values for this device:

android.control.aeAvailableAntibandingModes

This key is available on all devices.

Adjustment to auto-exposure (AE) target image brightness.

The adjustment is measured as a count of steps, with the

step size defined by android.control.aeCompensationStep and the

allowed range by android.control.aeCompensationRange.

For example, if the exposure value (EV) step is 0.333, '6'

will mean an exposure compensation of +2 EV; -3 will mean an

exposure compensation of -1 EV. One EV represents a doubling

of image brightness. Note that this control will only be

effective if android.control.aeMode != OFF. This control

will take effect even when android.control.aeLock == true.
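
The step arithmetic in the example above can be sketched as follows. This is a minimal illustration with hypothetical names; it assumes the step size is given as a fraction (for instance 1/3 for the 0.333 step in the example) and that the allowed range is supplied as a pair of step counts.

```java
/** Illustrative sketch only; these names are hypothetical and not part of camera2. */
final class ExposureCompensationSketch {

    /** Converts a compensation step count to EV, given the step size as a fraction. */
    static double toEv(int steps, int stepNumerator, int stepDenominator) {
        if (stepDenominator == 0) {
            throw new IllegalArgumentException("step denominator must be non-zero");
        }
        return (double) steps * stepNumerator / stepDenominator;
    }

    /** One EV is a doubling of image brightness, so the factor is 2^EV. */
    static double brightnessFactor(double ev) {
        return Math.pow(2.0, ev);
    }

    /** Clamps a requested step count to an allowed [minSteps, maxSteps] range. */
    static int clampSteps(int steps, int minSteps, int maxSteps) {
        return Math.max(minSteps, Math.min(maxSteps, steps));
    }
}
```

With a 1/3 EV step, 6 steps correspond to +2 EV (four times the brightness) and -3 steps to -1 EV (half the brightness), matching the example in the text.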

If the exposure compensation value is changed, the camera device

may take several frames to reach the newly requested exposure target.

During that time, android.control.aeState field will be in the SEARCHING

state. Once the new exposure target is reached, android.control.aeState will

change from SEARCHING to either CONVERGED, LOCKED (if AE lock is enabled), or

FLASH_REQUIRED (if the scene is too dark for still capture).

Units: Compensation steps

Range of valid values:

android.control.aeCompensationRange

This key is available on all devices.

Whether auto-exposure (AE) is currently locked to its latest calculated values.

When set to true (ON), the AE algorithm is locked to its latest parameters,

and will not change exposure settings until the lock is set to false (OFF).

Note that even when AE is locked, the flash may be fired if

the android.control.aeMode is ON_AUTO_FLASH /

ON_ALWAYS_FLASH / ON_AUTO_FLASH_REDEYE.

When android.control.aeExposureCompensation is changed, even if the AE lock

is ON, the camera device will still adjust its exposure value.

If AE precapture is triggered (see android.control.aePrecaptureTrigger)

when AE is already locked, the camera device will not change the exposure time

(android.sensor.exposureTime) and sensitivity (android.sensor.sensitivity)

parameters. The flash may be fired if the android.control.aeMode

is ON_AUTO_FLASH/ON_AUTO_FLASH_REDEYE and the scene is too dark. If the

android.control.aeMode is ON_ALWAYS_FLASH, the scene may become overexposed.

Since the camera device has a pipeline of in-flight requests, the settings that get locked do not necessarily correspond to the settings that were present in the latest capture result received from the camera device, since additional captures and AE updates may have occurred even before the result was sent out. If an application is switching between automatic and manual control and wishes to eliminate any flicker during the switch, the following procedure is recommended:

See android.control.aeState for AE lock related state transition details.

This key is available on all devices.

The desired mode for the camera device's auto-exposure routine.

This control is only effective if android.control.mode is

AUTO.

When set to any of the ON modes, the camera device's

auto-exposure routine is enabled, overriding the

application's selected exposure time, sensor sensitivity,

and frame duration (android.sensor.exposureTime,

android.sensor.sensitivity, and

android.sensor.frameDuration). If one of the FLASH modes

is selected, the camera device's flash unit controls are

also overridden.

The FLASH modes are only available if the camera device

has a flash unit (android.flash.info.available is true).

If flash TORCH mode is desired, this field must be set to

ON or OFF, and android.flash.mode set to TORCH.

When set to any of the ON modes, the values chosen by the camera device auto-exposure routine for the overridden fields for a given capture will be available in its CaptureResult.

Possible values:

Available values for this device:

android.control.aeAvailableModes

This key is available on all devices.

Whether the camera device will trigger a precapture metering sequence when it processes this request.

This entry is normally set to IDLE, or is not included at all in the request settings. When included and set to START, the camera device will trigger the autoexposure precapture metering sequence.

The precapture sequence should be triggered before starting a high-quality still capture so that final metering decisions can be made and, when the flash is enabled, so that pre-capture flash pulses can be fired to estimate scene brightness and the required final capture flash power.

Normally, this entry should be set to START for only a single request, and the application should wait until the sequence completes before starting a new one.

The exact effect of auto-exposure (AE) precapture trigger

depends on the current AE mode and state; see

android.control.aeState for AE precapture state transition

details.

On LEGACY-level devices, the precapture trigger is not supported; capturing a high-resolution JPEG image will automatically trigger a precapture sequence before the high-resolution capture, including potentially firing a pre-capture flash.

Possible values:

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

List of metering areas to use for auto-exposure adjustment.

Not available if android.control.maxRegionsAe is 0.

Otherwise will always be present.

The maximum number of regions supported by the device is determined by the value

of android.control.maxRegionsAe.

The coordinate system is based on the active pixel array,

with (0,0) being the top-left pixel in the active pixel array, and

(android.sensor.info.activeArraySize.width - 1,

android.sensor.info.activeArraySize.height - 1) being the

bottom-right pixel in the active pixel array.

The weight must be within [0, 1000], and represents a weight

for every pixel in the area. This means that a large metering area

with the same weight as a smaller area will have more effect in

the metering result. Metering areas can partially overlap and the

camera device will add the weights in the overlap region.

The weights are relative to weights of other exposure metering regions, so if only one region is used, all non-zero weights will have the same effect. A region with 0 weight is ignored.

If all regions have 0 weight, then no specific metering area needs to be used by the camera device.

If the metering region is outside the used android.scaler.cropRegion returned in

capture result metadata, the camera device will ignore the sections outside the crop

region and output only the intersection rectangle as the metering region in the result

metadata. If the region is entirely outside the crop region, it will be ignored and

not reported in the result metadata.
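
The clipping of a metering region against the crop region can be sketched as a rectangle intersection. This is an illustration only: the `Rect` class here is a hypothetical stand-in with exclusive right and bottom edges, and an empty `Optional` models a region that is ignored and not reported.

```java
import java.util.Optional;

/** Illustrative sketch only; these names are hypothetical and not part of camera2. */
final class MeteringRegionSketch {

    /** Axis-aligned rectangle; right and bottom are exclusive (an assumption). */
    static final class Rect {
        final int left;
        final int top;
        final int right;
        final int bottom;

        Rect(int left, int top, int right, int bottom) {
            this.left = left;
            this.top = top;
            this.right = right;
            this.bottom = bottom;
        }

        boolean isEmpty() {
            return left >= right || top >= bottom;
        }
    }

    /**
     * Returns the part of the metering region that lies inside the crop
     * region, or empty if the region is entirely outside it.
     */
    static Optional<Rect> clipToCrop(Rect region, Rect crop) {
        Rect clipped = new Rect(
                Math.max(region.left, crop.left),
                Math.max(region.top, crop.top),
                Math.min(region.right, crop.right),
                Math.min(region.bottom, crop.bottom));
        return clipped.isEmpty() ? Optional.empty() : Optional.of(clipped);
    }
}
```

A region that straddles the crop boundary comes back reduced to the overlapping rectangle, while a region with no overlap yields an empty result.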

Units: Pixel coordinates within android.sensor.info.activeArraySize

Range of valid values:

Coordinates must be between [(0,0), (width, height)) of

android.sensor.info.activeArraySize

Optional - This value may be null on some devices.

Current state of the auto-exposure (AE) algorithm.

Switching between or enabling AE modes (android.control.aeMode) always resets the AE state to INACTIVE. Similarly, switching android.control.mode, or android.control.sceneMode if android.control.mode == USE_SCENE_MODE, resets all algorithm states to INACTIVE.

The camera device can do several state transitions between two results, if it is allowed by the state transition table. For example: INACTIVE may never actually be seen in a result.

The state in the result is the state for this image (in sync with this image): if AE state becomes CONVERGED, then the image data associated with this result should be good to use.

Below are state transition tables for different AE modes.

When android.control.aeMode is AE_MODE_OFF:

| State | Transition Cause | New State | Notes |
|---|---|---|---|
| INACTIVE | | INACTIVE | Camera device auto exposure algorithm is disabled |

When android.control.aeMode is AE_MODE_ON_*:

| State | Transition Cause | New State | Notes |
|---|---|---|---|
| INACTIVE | Camera device initiates AE scan | SEARCHING | Values changing |
| INACTIVE | android.control.aeLock is ON | LOCKED | Values locked |
| SEARCHING | Camera device finishes AE scan | CONVERGED | Good values, not changing |
| SEARCHING | Camera device finishes AE scan | FLASH_REQUIRED | Converged but too dark w/o flash |
| SEARCHING | android.control.aeLock is ON | LOCKED | Values locked |
| CONVERGED | Camera device initiates AE scan | SEARCHING | Values changing |
| CONVERGED | android.control.aeLock is ON | LOCKED | Values locked |
| FLASH_REQUIRED | Camera device initiates AE scan | SEARCHING | Values changing |
| FLASH_REQUIRED | android.control.aeLock is ON | LOCKED | Values locked |
| LOCKED | android.control.aeLock is OFF | SEARCHING | Values not good after unlock |
| LOCKED | android.control.aeLock is OFF | CONVERGED | Values good after unlock |
| LOCKED | android.control.aeLock is OFF | FLASH_REQUIRED | Exposure good, but too dark |
| PRECAPTURE | Sequence done. android.control.aeLock is OFF | CONVERGED | Ready for high-quality capture |
| PRECAPTURE | Sequence done. android.control.aeLock is ON | LOCKED | Ready for high-quality capture |
| Any state | android.control.aePrecaptureTrigger is START | PRECAPTURE | Start AE precapture metering sequence |

For the above table, the camera device may skip reporting any state changes that happen without application intervention (i.e. mode switch, trigger, locking). Any state that can be skipped in that manner is called a transient state.

For example, for above AE modes (AE_MODE_ON_*), in addition to the state transitions listed in above table, it is also legal for the camera device to skip one or more transient states between two results. See below table for examples:

| State | Transition Cause | New State | Notes |
|---|---|---|---|
| INACTIVE | Camera device finished AE scan | CONVERGED | Values are already good, transient states are skipped by camera device. |
| Any state | android.control.aePrecaptureTrigger is START, sequence done | FLASH_REQUIRED | Converged but too dark w/o flash after a precapture sequence, transient states are skipped by camera device. |
| Any state | android.control.aePrecaptureTrigger is START, sequence done | CONVERGED | Converged after a precapture sequence, transient states are skipped by camera device. |
| CONVERGED | Camera device finished AE scan | FLASH_REQUIRED | Converged but too dark w/o flash after a new scan, transient states are skipped by camera device. |
| FLASH_REQUIRED | Camera device finished AE scan | CONVERGED | Converged after a new scan, transient states are skipped by camera device. |
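
As a rough aid for reasoning about the AE_MODE_ON_* transition table above, the listed single-step transitions can be encoded as a lookup. This is a sketch with hypothetical names, not camera2 code. It only answers whether a pair of states appears as a direct row in that table; because transient states may be skipped, two consecutive results can legally show a pair that this check reports as unlisted (for example INACTIVE followed by CONVERGED).

```java
import java.util.EnumMap;
import java.util.EnumSet;
import java.util.Map;

/** Illustrative sketch only; these names are hypothetical and not part of camera2. */
final class AeTransitionSketch {

    enum AeState { INACTIVE, SEARCHING, CONVERGED, FLASH_REQUIRED, LOCKED, PRECAPTURE }

    /** Direct transitions listed in the AE_MODE_ON_* table, keyed by source state. */
    private static final Map<AeState, EnumSet<AeState>> LISTED = new EnumMap<>(AeState.class);

    static {
        LISTED.put(AeState.INACTIVE, EnumSet.of(AeState.SEARCHING, AeState.LOCKED));
        LISTED.put(AeState.SEARCHING,
                EnumSet.of(AeState.CONVERGED, AeState.FLASH_REQUIRED, AeState.LOCKED));
        LISTED.put(AeState.CONVERGED, EnumSet.of(AeState.SEARCHING, AeState.LOCKED));
        LISTED.put(AeState.FLASH_REQUIRED, EnumSet.of(AeState.SEARCHING, AeState.LOCKED));
        LISTED.put(AeState.LOCKED,
                EnumSet.of(AeState.SEARCHING, AeState.CONVERGED, AeState.FLASH_REQUIRED));
        LISTED.put(AeState.PRECAPTURE, EnumSet.of(AeState.CONVERGED, AeState.LOCKED));
        // "Any state" row: a precapture trigger moves every state to PRECAPTURE.
        for (EnumSet<AeState> targets : LISTED.values()) {
            targets.add(AeState.PRECAPTURE);
        }
    }

    /** True if the table lists a direct transition from one state to the other. */
    static boolean isListedTransition(AeState from, AeState to) {
        return LISTED.get(from).contains(to);
    }
}
```

A check like this is better suited to logging or debugging than to rejecting results, since skipped transient states are explicitly allowed.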

Possible values:

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

Range over which the auto-exposure routine can adjust the capture frame rate to maintain good exposure.

Only constrains auto-exposure (AE) algorithm, not

manual control of android.sensor.exposureTime and

android.sensor.frameDuration.

Units: Frames per second (FPS)

Range of valid values:

Any of the entries in android.control.aeAvailableTargetFpsRanges

This key is available on all devices.

Whether auto-focus (AF) is currently enabled, and what mode it is set to.

Only effective if android.control.mode = AUTO and the lens is not fixed focus

(i.e. android.lens.info.minimumFocusDistance > 0).

If the lens is controlled by the camera device auto-focus algorithm,

the camera device will report the current AF status in android.control.afState

in result metadata.

Possible values:

Available values for this device:

android.control.afAvailableModes

This key is available on all devices.

List of metering areas to use for auto-focus.

Not available if android.control.maxRegionsAf is 0.

Otherwise will always be present.

The maximum number of focus areas supported by the device is determined by the value

of android.control.maxRegionsAf.

The coordinate system is based on the active pixel array,

with (0,0) being the top-left pixel in the active pixel array, and

(android.sensor.info.activeArraySize.width - 1,

android.sensor.info.activeArraySize.height - 1) being the

bottom-right pixel in the active pixel array.

The weight must be within [0, 1000], and represents a weight

for every pixel in the area. This means that a large metering area

with the same weight as a smaller area will have more effect in

the metering result. Metering areas can partially overlap and the

camera device will add the weights in the overlap region.

The weights are relative to weights of other metering regions, so if only one region is used, all non-zero weights will have the same effect. A region with 0 weight is ignored.

If all regions have 0 weight, then no specific metering area needs to be used by the camera device.

If the metering region is outside the used android.scaler.cropRegion returned in

capture result metadata, the camera device will ignore the sections outside the crop

region and output only the intersection rectangle as the metering region in the result

metadata. If the region is entirely outside the crop region, it will be ignored and

not reported in the result metadata.

Units: Pixel coordinates within android.sensor.info.activeArraySize

Range of valid values:

Coordinates must be between [(0,0), (width, height)) of

android.sensor.info.activeArraySize

Optional - This value may be null on some devices.

Current state of auto-focus (AF) algorithm.

Switching between or enabling AF modes (android.control.afMode) always resets the AF state to INACTIVE. Similarly, switching android.control.mode, or android.control.sceneMode if android.control.mode == USE_SCENE_MODE, resets all algorithm states to INACTIVE.

The camera device can do several state transitions between two results, if it is allowed by the state transition table. For example: INACTIVE may never actually be seen in a result.

The state in the result is the state for this image (in sync with this image): if AF state becomes FOCUSED, then the image data associated with this result should be sharp.

Below are state transition tables for different AF modes.

When android.control.afMode is AF_MODE_OFF or AF_MODE_EDOF:

| State | Transition Cause | New State | Notes |
|---|---|---|---|
| INACTIVE | | INACTIVE | Never changes |

When android.control.afMode is AF_MODE_AUTO or AF_MODE_MACRO:

| State | Transition Cause | New State | Notes |
|---|---|---|---|
| INACTIVE | AF_TRIGGER | ACTIVE_SCAN | Start AF sweep, Lens now moving |
| ACTIVE_SCAN | AF sweep done | FOCUSED_LOCKED | Focused, Lens now locked |
| ACTIVE_SCAN | AF sweep done | NOT_FOCUSED_LOCKED | Not focused, Lens now locked |
| ACTIVE_SCAN | AF_CANCEL | INACTIVE | Cancel/reset AF, Lens now locked |
| FOCUSED_LOCKED | AF_CANCEL | INACTIVE | Cancel/reset AF |
| FOCUSED_LOCKED | AF_TRIGGER | ACTIVE_SCAN | Start new sweep, Lens now moving |
| NOT_FOCUSED_LOCKED | AF_CANCEL | INACTIVE | Cancel/reset AF |
| NOT_FOCUSED_LOCKED | AF_TRIGGER | ACTIVE_SCAN | Start new sweep, Lens now moving |
| Any state | Mode change | INACTIVE | |

For the above table, the camera device may skip reporting any state changes that happen without application intervention (i.e. mode switch, trigger, locking). Any state that can be skipped in that manner is called a transient state.

For example, for these AF modes (AF_MODE_AUTO and AF_MODE_MACRO), in addition to the state transitions listed in above table, it is also legal for the camera device to skip one or more transient states between two results. See below table for examples:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| INACTIVE | AF_TRIGGER | FOCUSED_LOCKED | Focus is already good or good after a scan, lens is now locked. |

| INACTIVE | AF_TRIGGER | NOT_FOCUSED_LOCKED | Focus failed after a scan, lens is now locked. |

| FOCUSED_LOCKED | AF_TRIGGER | FOCUSED_LOCKED | Focus is already good or good after a scan, lens is now locked. |

| NOT_FOCUSED_LOCKED | AF_TRIGGER | FOCUSED_LOCKED | Focus is good after a scan, lens is now locked. |
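
As a rough illustration, the AF_MODE_AUTO / AF_MODE_MACRO table can be modeled as a small lookup function. The sketch below is plain Java and not part of the camera2 API: the class `AfAutoModel`, its enums, and the `SWEEP_DONE_*` cause names are hypothetical. It models only the fully reported transitions; a real device may also skip transient states such as ACTIVE_SCAN between two results, as the table of skipped states shows.

```java
// Hypothetical model of the AF_MODE_AUTO / AF_MODE_MACRO transition table.
// None of these names exist in the Android SDK.
final class AfAutoModel {
    enum State { INACTIVE, ACTIVE_SCAN, FOCUSED_LOCKED, NOT_FOCUSED_LOCKED }

    enum Cause { AF_TRIGGER, SWEEP_DONE_FOCUSED, SWEEP_DONE_NOT_FOCUSED, AF_CANCEL, MODE_CHANGE }

    /** Returns the next state, or the current state when the table lists no transition. */
    static State next(State current, Cause cause) {
        switch (cause) {
            case MODE_CHANGE:
            case AF_CANCEL:
                // Cancelling or changing mode always ends in INACTIVE.
                return State.INACTIVE;
            case AF_TRIGGER:
                // A trigger starts (or restarts) the sweep.
                return State.ACTIVE_SCAN;
            case SWEEP_DONE_FOCUSED:
                return current == State.ACTIVE_SCAN ? State.FOCUSED_LOCKED : current;
            case SWEEP_DONE_NOT_FOCUSED:
                return current == State.ACTIVE_SCAN ? State.NOT_FOCUSED_LOCKED : current;
            default:
                throw new AssertionError(cause);
        }
    }

    public static void main(String[] args) {
        State s = next(State.INACTIVE, Cause.AF_TRIGGER);
        s = next(s, Cause.SWEEP_DONE_FOCUSED);
        System.out.println(s); // prints FOCUSED_LOCKED
    }
}
```

An application that waits for a lock should therefore test for FOCUSED_LOCKED or NOT_FOCUSED_LOCKED directly rather than expect to observe ACTIVE_SCAN first.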

When android.control.afMode is AF_MODE_CONTINUOUS_VIDEO:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| INACTIVE | Camera device initiates new scan | PASSIVE_SCAN | Start AF scan, Lens now moving |

| INACTIVE | AF_TRIGGER | NOT_FOCUSED_LOCKED | AF state query, Lens now locked |

| PASSIVE_SCAN | Camera device completes current scan | PASSIVE_FOCUSED | End AF scan, Lens now locked |

| PASSIVE_SCAN | Camera device fails current scan | PASSIVE_UNFOCUSED | End AF scan, Lens now locked |

| PASSIVE_SCAN | AF_TRIGGER | FOCUSED_LOCKED | Immediate transition, if focus is good. Lens now locked |

| PASSIVE_SCAN | AF_TRIGGER | NOT_FOCUSED_LOCKED | Immediate transition, if focus is bad. Lens now locked |

| PASSIVE_SCAN | AF_CANCEL | INACTIVE | Reset lens position, Lens now locked |

| PASSIVE_FOCUSED | Camera device initiates new scan | PASSIVE_SCAN | Start AF scan, Lens now moving |

| PASSIVE_UNFOCUSED | Camera device initiates new scan | PASSIVE_SCAN | Start AF scan, Lens now moving |

| PASSIVE_FOCUSED | AF_TRIGGER | FOCUSED_LOCKED | Immediate transition, lens now locked |

| PASSIVE_UNFOCUSED | AF_TRIGGER | NOT_FOCUSED_LOCKED | Immediate transition, lens now locked |

| FOCUSED_LOCKED | AF_TRIGGER | FOCUSED_LOCKED | No effect |

| FOCUSED_LOCKED | AF_CANCEL | INACTIVE | Restart AF scan |

| NOT_FOCUSED_LOCKED | AF_TRIGGER | NOT_FOCUSED_LOCKED | No effect |

| NOT_FOCUSED_LOCKED | AF_CANCEL | INACTIVE | Restart AF scan |

When android.control.afMode is AF_MODE_CONTINUOUS_PICTURE:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| INACTIVE | Camera device initiates new scan | PASSIVE_SCAN | Start AF scan, Lens now moving |

| INACTIVE | AF_TRIGGER | NOT_FOCUSED_LOCKED | AF state query, Lens now locked |

| PASSIVE_SCAN | Camera device completes current scan | PASSIVE_FOCUSED | End AF scan, Lens now locked |

| PASSIVE_SCAN | Camera device fails current scan | PASSIVE_UNFOCUSED | End AF scan, Lens now locked |

| PASSIVE_SCAN | AF_TRIGGER | FOCUSED_LOCKED | Eventual transition once the focus is good. Lens now locked |

| PASSIVE_SCAN | AF_TRIGGER | NOT_FOCUSED_LOCKED | Eventual transition if cannot find focus. Lens now locked |

| PASSIVE_SCAN | AF_CANCEL | INACTIVE | Reset lens position, Lens now locked |

| PASSIVE_FOCUSED | Camera device initiates new scan | PASSIVE_SCAN | Start AF scan, Lens now moving |

| PASSIVE_UNFOCUSED | Camera device initiates new scan | PASSIVE_SCAN | Start AF scan, Lens now moving |

| PASSIVE_FOCUSED | AF_TRIGGER | FOCUSED_LOCKED | Immediate transition. Lens now locked |

| PASSIVE_UNFOCUSED | AF_TRIGGER | NOT_FOCUSED_LOCKED | Immediate transition. Lens now locked |

| FOCUSED_LOCKED | AF_TRIGGER | FOCUSED_LOCKED | No effect |

| FOCUSED_LOCKED | AF_CANCEL | INACTIVE | Restart AF scan |

| NOT_FOCUSED_LOCKED | AF_TRIGGER | NOT_FOCUSED_LOCKED | No effect |

| NOT_FOCUSED_LOCKED | AF_CANCEL | INACTIVE | Restart AF scan |

When switching between AF_MODE_CONTINUOUS_* (CAF modes) and AF_MODE_AUTO/AF_MODE_MACRO (AUTO modes), the initial INACTIVE or PASSIVE_SCAN states may be skipped by the camera device. When a trigger is included in a mode switch request, the trigger will be evaluated in the context of the new mode in the request. See the table below for examples:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| any state | CAF-->AUTO mode switch | INACTIVE | Mode switch without trigger, initial state must be INACTIVE |

| any state | CAF-->AUTO mode switch with AF_TRIGGER | trigger-reachable states from INACTIVE | Mode switch with trigger, INACTIVE is skipped |

| any state | AUTO-->CAF mode switch | passively reachable states from INACTIVE | Mode switch without trigger, passive transient state is skipped |

Possible values:

INACTIVE, PASSIVE_SCAN, PASSIVE_FOCUSED, ACTIVE_SCAN, FOCUSED_LOCKED, NOT_FOCUSED_LOCKED, PASSIVE_UNFOCUSED

This key is available on all devices.

Whether the camera device will trigger autofocus for this request.

This entry is normally set to IDLE, or is not included at all in the request settings.

When included and set to START, the camera device will trigger the autofocus algorithm. If autofocus is disabled, this trigger has no effect.

When set to CANCEL, the camera device will cancel any active trigger, and return to its initial AF state.

Generally, applications should set this entry to START or CANCEL for only a single capture, and then return it to IDLE (or not set at all). Specifying START for multiple captures in a row means restarting the AF operation over and over again.

See android.control.afState for what the trigger means for each AF mode.

Possible values:

This key is available on all devices.

Whether auto-white balance (AWB) is currently locked to its latest calculated values.

When set to true (ON), the AWB algorithm is locked to its latest parameters,

and will not change color balance settings until the lock is set to false (OFF).

Since the camera device has a pipeline of in-flight requests, the settings that get locked do not necessarily correspond to the settings that were present in the latest capture result received from the camera device, since additional captures and AWB updates may have occurred even before the result was sent out. If an application is switching between automatic and manual control and wishes to eliminate any flicker during the switch, the following procedure is recommended:

Note that AWB lock is only meaningful when

android.control.awbMode is in the AUTO mode; in other modes,

AWB is already fixed to a specific setting.

Some LEGACY devices may not support ON; the value is then overridden to OFF.

This key is available on all devices.

Whether auto-white balance (AWB) is currently setting the color transform fields, and what its illumination target is.

This control is only effective if android.control.mode is AUTO.

When set to the ON mode, the camera device's auto-white balance

routine is enabled, overriding the application's selected

android.colorCorrection.transform, android.colorCorrection.gains and

android.colorCorrection.mode.

When set to the OFF mode, the camera device's auto-white balance

routine is disabled. The application manually controls the white

balance by android.colorCorrection.transform, android.colorCorrection.gains

and android.colorCorrection.mode.

When set to any other modes, the camera device's auto-white

balance routine is disabled. The camera device uses each

particular illumination target for white balance

adjustment. The application's values for

android.colorCorrection.transform,

android.colorCorrection.gains and

android.colorCorrection.mode are ignored.

Possible values:

Available values for this device:

android.control.awbAvailableModes

This key is available on all devices.

See also: COLOR_CORRECTION_GAINS, COLOR_CORRECTION_MODE, COLOR_CORRECTION_TRANSFORM, CONTROL_AWB_AVAILABLE_MODES, CONTROL_MODE, CONTROL_AWB_MODE_OFF, CONTROL_AWB_MODE_AUTO, CONTROL_AWB_MODE_INCANDESCENT, CONTROL_AWB_MODE_FLUORESCENT, CONTROL_AWB_MODE_WARM_FLUORESCENT, CONTROL_AWB_MODE_DAYLIGHT, CONTROL_AWB_MODE_CLOUDY_DAYLIGHT, CONTROL_AWB_MODE_TWILIGHT, CONTROL_AWB_MODE_SHADE

List of metering areas to use for auto-white-balance illuminant estimation.

Not available if android.control.maxRegionsAwb is 0.

Otherwise will always be present.

The maximum number of regions supported by the device is determined by the value

of android.control.maxRegionsAwb.

The coordinate system is based on the active pixel array,

with (0,0) being the top-left pixel in the active pixel array, and

(android.sensor.info.activeArraySize.width - 1,

android.sensor.info.activeArraySize.height - 1) being the

bottom-right pixel in the active pixel array.

The weight must range from 0 to 1000, and represents a weight for every pixel in the area. This means that a large metering area with the same weight as a smaller area will have more effect in the metering result. Metering areas can partially overlap and the camera device will add the weights in the overlap region.

The weights are relative to weights of other white balance metering regions, so if only one region is used, all non-zero weights will have the same effect. A region with 0 weight is ignored.

If all regions have 0 weight, then no specific metering area needs to be used by the camera device.

If the metering region is outside the used android.scaler.cropRegion returned in

capture result metadata, the camera device will ignore the sections outside the crop

region and output only the intersection rectangle as the metering region in the result

metadata. If the region is entirely outside the crop region, it will be ignored and

not reported in the result metadata.
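
The clipping rule above amounts to a rectangle intersection. The following is a minimal sketch in plain Java, assuming half-open rectangle bounds; `MeteringRegionClip` and `Box` are hypothetical stand-ins (an Android application would more likely work with android.graphics.Rect), and the actual rounding a device applies is not specified here.

```java
// Hypothetical helper illustrating how a metering region is reduced to its
// intersection with the crop region, or dropped when fully outside it.
final class MeteringRegionClip {
    /** Minimal rectangle: left/top inclusive, right/bottom exclusive. */
    static final class Box {
        final int left, top, right, bottom;

        Box(int left, int top, int right, int bottom) {
            this.left = left;
            this.top = top;
            this.right = right;
            this.bottom = bottom;
        }
    }

    /** Returns the part of region inside crop, or null when they do not overlap. */
    static Box clipToCrop(Box region, Box crop) {
        int left = Math.max(region.left, crop.left);
        int top = Math.max(region.top, crop.top);
        int right = Math.min(region.right, crop.right);
        int bottom = Math.min(region.bottom, crop.bottom);
        if (left >= right || top >= bottom) {
            return null; // entirely outside the crop region: ignored
        }
        return new Box(left, top, right, bottom);
    }

    public static void main(String[] args) {
        Box clipped = clipToCrop(new Box(0, 0, 200, 200), new Box(100, 100, 500, 400));
        System.out.println(clipped.left + "," + clipped.top + "," + clipped.right + "," + clipped.bottom);
        // prints 100,100,200,200
    }
}
```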

Units: Pixel coordinates within android.sensor.info.activeArraySize

Range of valid values:

Coordinates must be between [(0,0), (width, height)) of

android.sensor.info.activeArraySize

Optional - This value may be null on some devices.

Current state of auto-white balance (AWB) algorithm.

Switching between or enabling AWB modes (android.control.awbMode) always resets the AWB state to INACTIVE. Similarly, switching between android.control.mode, or android.control.sceneMode if android.control.mode == USE_SCENE_MODE, resets all the algorithm states to INACTIVE.

The camera device can make several state transitions between two results, if the state transition table allows it. As a result, INACTIVE may never actually be seen in a result.

The state in the result is the state for this image (in sync with this image): if AWB state becomes CONVERGED, then the image data associated with this result should be good to use.

Below are state transition tables for different AWB modes.

When android.control.awbMode != AWB_MODE_AUTO:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| INACTIVE | | INACTIVE | Camera device auto white balance algorithm is disabled |

When android.control.awbMode is AWB_MODE_AUTO:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| INACTIVE | Camera device initiates AWB scan | SEARCHING | Values changing |

| INACTIVE | android.control.awbLock is ON | LOCKED | Values locked |

| SEARCHING | Camera device finishes AWB scan | CONVERGED | Good values, not changing |

| SEARCHING | android.control.awbLock is ON | LOCKED | Values locked |

| CONVERGED | Camera device initiates AWB scan | SEARCHING | Values changing |

| CONVERGED | android.control.awbLock is ON | LOCKED | Values locked |

| LOCKED | android.control.awbLock is OFF | SEARCHING | Values not good after unlock |

For the above table, the camera device may skip reporting any state changes that happen without application intervention (i.e. mode switch, trigger, locking). Any state that can be skipped in that manner is called a transient state.

For example, for this AWB mode (AWB_MODE_AUTO), in addition to the state transitions listed in the above table, it is also legal for the camera device to skip one or more transient states between two results. See the table below for examples:

| State | Transition Cause | New State | Notes |

|---|---|---|---|

| INACTIVE | Camera device finished AWB scan | CONVERGED | Values are already good, transient states are skipped by camera device. |

| LOCKED | android.control.awbLock is OFF | CONVERGED | Values good after unlock, transient states are skipped by camera device. |
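
Because transient states may be skipped, code that waits for white balance to settle should not require seeing SEARCHING before CONVERGED. The sketch below is a plain-Java illustration of that idea and not camera2 API code: `AwbConvergence` and `firstStableIndex` are hypothetical names, and treating LOCKED as "stable" alongside CONVERGED is an assumption made for this example.

```java
// Hypothetical scan over the AWB states reported by consecutive capture results.
final class AwbConvergence {
    enum AwbState { INACTIVE, SEARCHING, CONVERGED, LOCKED }

    /**
     * Returns the index of the first result whose AWB values are no longer changing
     * (CONVERGED or LOCKED), or -1 if no such result exists. No particular preceding
     * state is required, since the device may skip transient states.
     */
    static int firstStableIndex(AwbState[] perResultStates) {
        for (int i = 0; i < perResultStates.length; i++) {
            AwbState s = perResultStates[i];
            if (s == AwbState.CONVERGED || s == AwbState.LOCKED) {
                return i;
            }
        }
        return -1;
    }

    public static void main(String[] args) {
        AwbState[] seen = { AwbState.SEARCHING, AwbState.SEARCHING, AwbState.CONVERGED };
        System.out.println(firstStableIndex(seen)); // prints 2
    }
}
```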

Possible values:

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

Information to the camera device 3A (auto-exposure, auto-focus, auto-white balance) routines about the purpose of this capture, to help the camera device to decide optimal 3A strategy.

This control (except for MANUAL) is only effective if

android.control.mode != OFF

ZERO_SHUTTER_LAG will be supported if android.request.availableCapabilities

contains ZSL. MANUAL will be supported if android.request.availableCapabilities

contains MANUAL_SENSOR. Other intent values are always supported.

Possible values:

This key is available on all devices.

A special color effect to apply.

When this mode is set, a color effect will be applied to images produced by the camera device. The interpretation and implementation of these color effects is left to the implementor of the camera device, and should not be depended on to be consistent (or present) across all devices.

Possible values:

Available values for this device:

android.control.availableEffects

This key is available on all devices.

Overall mode of 3A (auto-exposure, auto-white-balance, auto-focus) control routines.

This is a top-level 3A control switch. When set to OFF, all 3A control by the camera device is disabled. The application must set the fields for capture parameters itself.

When set to AUTO, the individual algorithm controls in

android.control.* are in effect, such as android.control.afMode.

When set to USE_SCENE_MODE, the individual controls in android.control.* are mostly disabled, and the camera device implements one of the scene mode settings (such as ACTION, SUNSET, or PARTY) as it wishes. The camera device scene mode 3A settings are provided by android.control.sceneModeOverrides.

When set to OFF_KEEP_STATE, the behavior is similar to OFF mode. The only difference is that this frame will not be used by the camera device's background 3A statistics update, as if this frame were never captured. This mode can be used when the application doesn't want a manual 3A control capture to affect the subsequent auto 3A capture results.

LEGACY mode devices will only support AUTO and USE_SCENE_MODE modes. LIMITED mode devices will only support OFF and OFF_KEEP_STATE if they support the MANUAL_SENSOR and MANUAL_POST_PROCESSING capabilities. FULL mode devices will always support OFF and OFF_KEEP_STATE.

Possible values:

This key is available on all devices.

Control for which scene mode is currently active.

Scene modes are custom camera modes optimized for a certain set of conditions and capture settings.

This is the mode that is active when android.control.mode == USE_SCENE_MODE. Aside from FACE_PRIORITY, these modes will disable android.control.aeMode, android.control.awbMode, and android.control.afMode while in use.

The interpretation and implementation of these scene modes is left to the implementor of the camera device. Their behavior will not be consistent across all devices, and any given device may only implement a subset of these modes.

Possible values:

DISABLED, FACE_PRIORITY, ACTION, PORTRAIT, LANDSCAPE, NIGHT, NIGHT_PORTRAIT, THEATRE, BEACH, SNOW, SUNSET, STEADYPHOTO, FIREWORKS, SPORTS, PARTY, CANDLELIGHT, BARCODE, HIGH_SPEED_VIDEO

Available values for this device:

android.control.availableSceneModes

This key is available on all devices.

See also: CONTROL_AE_MODE, CONTROL_AF_MODE, CONTROL_AVAILABLE_SCENE_MODES, CONTROL_AWB_MODE, CONTROL_MODE, CONTROL_SCENE_MODE_DISABLED, CONTROL_SCENE_MODE_FACE_PRIORITY, CONTROL_SCENE_MODE_ACTION, CONTROL_SCENE_MODE_PORTRAIT, CONTROL_SCENE_MODE_LANDSCAPE, CONTROL_SCENE_MODE_NIGHT, CONTROL_SCENE_MODE_NIGHT_PORTRAIT, CONTROL_SCENE_MODE_THEATRE, CONTROL_SCENE_MODE_BEACH, CONTROL_SCENE_MODE_SNOW, CONTROL_SCENE_MODE_SUNSET, CONTROL_SCENE_MODE_STEADYPHOTO, CONTROL_SCENE_MODE_FIREWORKS, CONTROL_SCENE_MODE_SPORTS, CONTROL_SCENE_MODE_PARTY, CONTROL_SCENE_MODE_CANDLELIGHT, CONTROL_SCENE_MODE_BARCODE, CONTROL_SCENE_MODE_HIGH_SPEED_VIDEO

Whether video stabilization is active.

Video stabilization automatically translates and scales images from the camera in order to stabilize motion between consecutive frames.

If enabled, video stabilization can modify the

android.scaler.cropRegion to keep the video stream stabilized.

Switching between different video stabilization modes may take several frames to initialize; the camera device will report the current mode in capture result metadata. For example, when "ON" mode is requested, the video stabilization mode in the first several capture results may still be "OFF", and it will become "ON" when the initialization is done.

If a camera device supports both this mode and OIS

(android.lens.opticalStabilizationMode), turning both modes on may

produce undesirable interaction, so it is recommended not to enable

both at the same time.

Possible values:

This key is available on all devices.

Operation mode for edge enhancement.

Edge enhancement improves sharpness and details in the captured image. OFF means no enhancement will be applied by the camera device.

FAST/HIGH_QUALITY both mean camera device determined enhancement will be applied. HIGH_QUALITY mode indicates that the camera device will use the highest-quality enhancement algorithms, even if it slows down capture rate. FAST means the camera device will not slow down capture rate when applying edge enhancement.

Possible values:

Available values for this device:

android.edge.availableEdgeModes

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

The desired mode for the camera device's flash control.

This control is only effective when a flash unit is available (android.flash.info.available == true).

When this control is used, the android.control.aeMode must be set to ON or OFF.

Otherwise, the camera device auto-exposure related flash control (ON_AUTO_FLASH,

ON_ALWAYS_FLASH, or ON_AUTO_FLASH_REDEYE) will override this control.

When set to OFF, the camera device will not fire flash for this capture.

When set to SINGLE, the camera device will fire flash regardless of the camera

device's auto-exposure routine's result. When used in still capture case, this

control should be used along with auto-exposure (AE) precapture metering sequence

(android.control.aePrecaptureTrigger), otherwise, the image may be incorrectly exposed.

When set to TORCH, the flash will be on continuously. This mode can be used for use cases such as preview, auto-focus assist, still capture, or video recording.

The flash status will be reported by android.flash.state in the capture result metadata.

Possible values:

This key is available on all devices.

Current state of the flash unit.

When the camera device doesn't have a flash unit (i.e. android.flash.info.available == false), this state will always be UNAVAILABLE. Other states indicate the current flash status.

In certain conditions, this will be available on LEGACY devices:

android.control.aeMode == ON_ALWAYS_FLASH will always return FIRED.

android.flash.mode == TORCH will always return FIRED.

In all other conditions the state will not be available on LEGACY devices (i.e. it will be null).

Possible values:

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

Operational mode for hot pixel correction.

Hot pixel correction interpolates out, or otherwise removes, pixels that do not accurately measure the incoming light (i.e. pixels that are stuck at an arbitrary value or are oversensitive).

Possible values:

Available values for this device:

android.hotPixel.availableHotPixelModes

Optional - This value may be null on some devices.

A location object to use when generating image GPS metadata.

Setting a location object in a request will include the GPS coordinates of the location into any JPEG images captured based on the request. These coordinates can then be viewed by anyone who receives the JPEG image.

This key is available on all devices.

The orientation for a JPEG image.

The clockwise rotation angle in degrees, relative to the orientation of the camera, that the JPEG picture needs to be rotated by, to be viewed upright.

Camera devices may either encode this value into the JPEG EXIF header, or rotate the image data to match this orientation.

Note that this orientation is relative to the orientation of the camera sensor, given

by android.sensor.orientation.

To translate from the device orientation given by the Android sensor APIs, the following sample code may be used:

    private int getJpegOrientation(CameraCharacteristics c, int deviceOrientation) {
        if (deviceOrientation == android.view.OrientationEventListener.ORIENTATION_UNKNOWN) return 0;
        int sensorOrientation = c.get(CameraCharacteristics.SENSOR_ORIENTATION);

        // Round device orientation to a multiple of 90
        deviceOrientation = (deviceOrientation + 45) / 90 * 90;

        // Reverse device orientation for front-facing cameras
        boolean facingFront = c.get(CameraCharacteristics.LENS_FACING) == CameraCharacteristics.LENS_FACING_FRONT;
        if (facingFront) deviceOrientation = -deviceOrientation;

        // Calculate desired JPEG orientation relative to camera orientation to make
        // the image upright relative to the device orientation
        int jpegOrientation = (sensorOrientation + deviceOrientation + 360) % 360;

        return jpegOrientation;
    }
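
To make the arithmetic in the sample concrete, the variant below takes the sensor orientation and lens facing as plain parameters so it can run without a camera device. It is only a sketch: `JpegOrientationMath` is a hypothetical class, and a negative device orientation is used here to stand in for ORIENTATION_UNKNOWN.

```java
// Hypothetical, Android-free restatement of the orientation arithmetic above.
final class JpegOrientationMath {
    static int jpegOrientation(int sensorOrientation, boolean facingFront, int deviceOrientation) {
        // Assumption: a negative value stands in for ORIENTATION_UNKNOWN.
        if (deviceOrientation < 0) return 0;

        // Round device orientation to a multiple of 90.
        int rounded = (deviceOrientation + 45) / 90 * 90;

        // Reverse device orientation for front-facing cameras.
        if (facingFront) rounded = -rounded;

        // Combine with the sensor orientation and normalize into [0, 360).
        return (sensorOrientation + rounded + 360) % 360;
    }

    public static void main(String[] args) {
        // Back camera mounted at 90 degrees, device turned to about 100 degrees.
        System.out.println(jpegOrientation(90, false, 100)); // prints 180
        // Front camera mounted at 270 degrees, device at 90 degrees.
        System.out.println(jpegOrientation(270, true, 90)); // prints 180
    }
}
```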

Units: Degrees in multiples of 90

Range of valid values:

0, 90, 180, 270

This key is available on all devices.

Compression quality of the final JPEG image.

85-95 is typical usage range.

Range of valid values:

1-100; larger is higher quality

This key is available on all devices.

Compression quality of JPEG thumbnail.

Range of valid values:

1-100; larger is higher quality

This key is available on all devices.

Resolution of embedded JPEG thumbnail.

When set to (0, 0) value, the JPEG EXIF will not contain thumbnail, but the captured JPEG will still be a valid image.

For best results, when issuing a request for a JPEG image, the thumbnail size selected should have the same aspect ratio as the main JPEG output.

If the thumbnail image aspect ratio differs from the JPEG primary image aspect ratio, the camera device creates the thumbnail by cropping it from the primary image. For example, if the primary image has a 4:3 aspect ratio and the thumbnail image has a 16:9 aspect ratio, the primary image will be cropped vertically (letterbox) to generate the thumbnail image. The thumbnail image will always have a smaller Field Of View (FOV) than the primary image when aspect ratios differ.

Range of valid values:

android.jpeg.availableThumbnailSizes

This key is available on all devices.

The desired lens aperture size, as a ratio of lens focal length to the effective aperture diameter.

Setting this value is only supported on the camera devices that have a variable aperture lens.

When this is supported and android.control.aeMode is OFF,

this can be set along with android.sensor.exposureTime,

android.sensor.sensitivity, and android.sensor.frameDuration

to achieve manual exposure control.

The requested aperture value may take several frames to reach the

requested value; the camera device will report the current (intermediate)

aperture size in capture result metadata while the aperture is changing.

While the aperture is still changing, android.lens.state will be set to MOVING.

When this is supported and android.control.aeMode is one of the ON modes, this will be overridden by the camera device auto-exposure algorithm; the overridden values are then provided back to the user in the corresponding result.

Units: The f-number (f/N)
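
As a small worked example of the ratio described above, the f-number is the focal length divided by the effective aperture diameter. The helper below is a hypothetical illustration in plain Java; `ApertureMath` is not an Android class, and both inputs are assumed to be in the same unit (millimeters here).

```java
// Hypothetical helper: f-number N = focal length / effective aperture diameter.
final class ApertureMath {
    static double fNumber(double focalLengthMm, double apertureDiameterMm) {
        if (focalLengthMm <= 0 || apertureDiameterMm <= 0) {
            throw new IllegalArgumentException("lengths must be positive");
        }
        return focalLengthMm / apertureDiameterMm;
    }

    public static void main(String[] args) {
        // A 4 mm lens with a 2 mm effective aperture diameter is an f/2 lens.
        System.out.println(fNumber(4.0, 2.0)); // prints 2.0
    }
}
```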

Range of valid values:

android.lens.info.availableApertures

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

The desired setting for the lens neutral density filter(s).

This control will not be supported on most camera devices.

Lens filters are typically used to lower the amount of light the sensor is exposed to (measured in steps of EV). As used here, an EV step is the standard logarithmic representation; values are non-negative, and larger values mean less light hitting the sensor. For example, setting this to 0 would result in no reduction of the incoming light, and setting this to 2 would mean that the filter is set to reduce incoming light by two stops (allowing 1/4 of the prior amount of light to the sensor).
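
The relationship between filter density and transmitted light can be sketched as follows, assuming each EV step halves the light as in the example above. `FilterDensityMath` is a hypothetical helper, not an Android API.

```java
// Hypothetical helper: fraction of incoming light that passes a filter of the
// given density, assuming each EV step halves the light.
final class FilterDensityMath {
    static double transmittedFraction(double filterDensityEv) {
        if (filterDensityEv < 0) {
            throw new IllegalArgumentException("filter density must be non-negative");
        }
        return Math.pow(2.0, -filterDensityEv);
    }

    public static void main(String[] args) {
        System.out.println(transmittedFraction(0)); // prints 1.0
        System.out.println(transmittedFraction(2)); // prints 0.25
    }
}
```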

It may take several frames before the lens filter density changes

to the requested value. While the filter density is still changing,

android.lens.state will be set to MOVING.

Units: Exposure Value (EV)

Range of valid values:

android.lens.info.availableFilterDensities

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

The desired lens focal length; used for optical zoom.

This setting controls the physical focal length of the camera device's lens. Changing the focal length changes the field of view of the camera device, and is usually used for optical zoom.

Like android.lens.focusDistance and android.lens.aperture, this

setting won't be applied instantaneously, and it may take several

frames before the lens can change to the requested focal length.

While the focal length is still changing, android.lens.state will

be set to MOVING.

Optical zoom will not be supported on most devices.

Units: Millimeters

Range of valid values:

android.lens.info.availableFocalLengths

This key is available on all devices.

Desired distance to plane of sharpest focus, measured from frontmost surface of the lens.

Should be zero for fixed-focus cameras.

Units: See android.lens.info.focusDistanceCalibration for details

Range of valid values:

>= 0

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

The range of scene distances that are in sharp focus (depth of field).

If variable focus is not supported, the camera device can still report a fixed depth of field range.

Units: A pair of focus distances in diopters: (near,

far); see android.lens.info.focusDistanceCalibration for details.
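
When the values are expressed in diopters, they can be converted to meters by taking the reciprocal, with 0 diopters corresponding to focus at infinity. This is a minimal sketch; `FocusDistanceMath` is a hypothetical helper, and it assumes the device actually reports diopters rather than uncalibrated units.

```java
// Hypothetical helper: converts a focus distance in diopters (1/m) to meters.
final class FocusDistanceMath {
    static double dioptersToMeters(double diopters) {
        if (diopters < 0) {
            throw new IllegalArgumentException("diopters must be >= 0");
        }
        // 0 diopters means the plane of focus is infinitely far away.
        return diopters == 0.0 ? Double.POSITIVE_INFINITY : 1.0 / diopters;
    }

    public static void main(String[] args) {
        // A (near, far) pair of (2.0, 0.5) diopters spans 0.5 m to 2.0 m.
        System.out.println(dioptersToMeters(2.0)); // prints 0.5
        System.out.println(dioptersToMeters(0.5)); // prints 2.0
    }
}
```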

Range of valid values:

>=0

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

Sets whether the camera device uses optical image stabilization (OIS) when capturing images.

OIS is used to compensate for motion blur due to small

movements of the camera during capture. Unlike digital image

stabilization (android.control.videoStabilizationMode), OIS

makes use of mechanical elements to stabilize the camera

sensor, and thus allows for longer exposure times before

camera shake becomes apparent.

Switching between different optical stabilization modes may take several frames to initialize; the camera device will report the current mode in capture result metadata. For example, when "ON" mode is requested, the optical stabilization mode in the first several capture results may still be "OFF", and it will become "ON" when the initialization is done.

If a camera device supports both OIS and digital image stabilization

(android.control.videoStabilizationMode), turning both modes on may produce undesirable

interaction, so it is recommended not to enable both at the same time.

Not all devices will support OIS; see

android.lens.info.availableOpticalStabilization for

available controls.

Possible values:

Available values for this device:

android.lens.info.availableOpticalStabilization

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

Current lens status.

For lens parameters android.lens.focalLength, android.lens.focusDistance,

android.lens.filterDensity and android.lens.aperture, when changes are requested,

they may take several frames to reach the requested values. This state indicates

the current status of the lens parameters.

When the state is STATIONARY, the lens parameters are not changing. This could be either because the parameters are all fixed, or because the lens has had enough time to reach the most recently-requested values. If none of these lens parameters is changeable for a camera device, as listed below:

Fixed focus (android.lens.info.minimumFocusDistance == 0), which means the android.lens.focusDistance parameter will always be 0.

Fixed focal length (android.lens.info.availableFocalLengths contains single value), which means the optical zoom is not supported.

No ND filter (android.lens.info.availableFilterDensities contains only 0).

Fixed aperture (android.lens.info.availableApertures contains single value).

Then this state will always be STATIONARY.

When the state is MOVING, it indicates that at least one of the lens parameters is changing.

Possible values:

Optional - This value may be null on some devices.

Limited capability -

Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the

android.info.supportedHardwareLevel key

Mode of operation for the noise reduction algorithm.

The noise reduction algorithm attempts to improve image quality by removing excessive noise added by the capture process, especially in dark conditions. OFF means no noise reduction will be applied by the camera device.

FAST/HIGH_QUALITY both mean camera device determined noise filtering will be applied. HIGH_QUALITY mode indicates that the camera device will use the highest-quality noise filtering algorithms, even if it slows down capture rate. FAST means the camera device will not slow down capture rate when applying noise filtering.

Possible values:

Available values for this device:

android.noiseReduction.availableNoiseReductionModes

Optional - This value may be null on some devices.

Full capability -

Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the

android.info.supportedHardwareLevel key

Specifies the number of pipeline stages the frame went through from when it was exposed to when the final completed result was available to the framework.

Depending on what settings are used in the request, and what streams are configured, the data may undergo less processing, and some pipeline stages may be skipped.

See android.request.pipelineMaxDepth for more details.

Range of valid values:

<= android.request.pipelineMaxDepth

This key is available on all devices.

The desired region of the sensor to read out for this capture.

This control can be used to implement digital zoom.

The crop region coordinate system is based off

android.sensor.info.activeArraySize, with (0, 0) being the

top-left corner of the sensor active array.

Output streams use this rectangle to produce their output, cropping to a smaller region if necessary to maintain the stream's aspect ratio, then scaling the sensor input to match the output's configured resolution.

The crop region is applied after the RAW to other color space (e.g. YUV) conversion. Since raw streams (e.g. RAW16) don't have the conversion stage, they are not croppable. The crop region will be ignored by raw streams.

For non-raw streams, any additional per-stream cropping will be done to maximize the final pixel area of the stream.

For example, if the crop region is set to a 4:3 aspect ratio, then 4:3 streams will use the exact crop region. 16:9 streams will further crop vertically (letterbox).

Conversely, if the crop region is set to a 16:9, then 4:3 outputs will crop horizontally (pillarbox), and 16:9 streams will match exactly. These additional crops will be centered within the crop region.
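
The per-stream cropping described above can be sketched as finding the largest centered window inside the crop region that has the stream's aspect ratio. The code below is an illustration in plain Java, not the device's actual algorithm: `StreamCropMath` is a hypothetical helper, and the integer rounding it uses is an assumption, since a real device may adjust the result for hardware requirements.

```java
// Hypothetical helper: largest centered window inside the crop region that
// matches the output stream's aspect ratio.
final class StreamCropMath {
    /** Returns {left, top, width, height}. */
    static int[] streamCrop(int cropLeft, int cropTop, int cropWidth, int cropHeight,
                            int streamWidth, int streamHeight) {
        // Compare aspect ratios by cross-multiplying to stay in integer arithmetic.
        long cropSide = (long) cropWidth * streamHeight;
        long streamSide = (long) cropHeight * streamWidth;
        int outWidth = cropWidth;
        int outHeight = cropHeight;
        if (cropSide > streamSide) {
            // Crop region is wider than the stream: trim columns (pillarbox).
            outWidth = (int) (streamSide / streamHeight);
        } else if (cropSide < streamSide) {
            // Crop region is taller than the stream: trim rows (letterbox).
            outHeight = (int) (cropSide / streamWidth);
        }
        // Center the window within the crop region.
        int left = cropLeft + (cropWidth - outWidth) / 2;
        int top = cropTop + (cropHeight - outHeight) / 2;
        return new int[] { left, top, outWidth, outHeight };
    }

    public static void main(String[] args) {
        // 4:3 crop region feeding a 16:9 stream: rows are trimmed.
        int[] r = streamCrop(0, 0, 4000, 3000, 1920, 1080);
        System.out.println(r[0] + "," + r[1] + "," + r[2] + "," + r[3]); // prints 0,375,4000,2250
    }
}
```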

The width and height of the crop region cannot be set to be smaller than floor( activeArraySize.width / android.scaler.availableMaxDigitalZoom ) and floor( activeArraySize.height / android.scaler.availableMaxDigitalZoom ), respectively.

The camera device may adjust the crop region to account for rounding and other hardware requirements; the final crop region used will be included in the output capture result.
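As a rough illustration of the digital zoom use case described above, the sketch below computes a centered crop rectangle for a zoom factor while respecting the documented minimum width and height. The class `ZoomCrop` and its method are hypothetical helpers, not part of the camera2 API, and the sketch uses plain integers rather than `android.graphics.Rect` so that it runs without the Android framework.

```java
// Hypothetical helper (not a camera2 class): centered crop for digital zoom.
final class ZoomCrop {
    /**
     * Returns {left, top, right, bottom} in pixel coordinates relative to the
     * active array, with (0, 0) at its top-left corner.
     */
    static int[] centeredCrop(int activeWidth, int activeHeight,
                              float zoom, float maxDigitalZoom) {
        // Clamp the requested zoom to the range [1, maxDigitalZoom].
        float z = Math.max(1.0f, Math.min(zoom, maxDigitalZoom));

        // Documented lower bounds on the crop region size.
        int minWidth = (int) Math.floor(activeWidth / maxDigitalZoom);
        int minHeight = (int) Math.floor(activeHeight / maxDigitalZoom);

        int cropWidth = Math.max(minWidth, (int) Math.floor(activeWidth / z));
        int cropHeight = Math.max(minHeight, (int) Math.floor(activeHeight / z));

        // Center the crop inside the active array.
        int left = (activeWidth - cropWidth) / 2;
        int top = (activeHeight - cropHeight) / 2;
        return new int[] {left, top, left + cropWidth, top + cropHeight};
    }
}
```

In an application the four values would typically be wrapped in a Rect and set on the request's crop region key; because the device may adjust the rectangle, the region actually used should be read back from the capture result.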

Units: Pixel coordinates relative to android.sensor.info.activeArraySize

This key is available on all devices.

Duration each pixel is exposed to light.

If the sensor can't expose this exact duration, it will shorten the duration exposed to the nearest possible value (rather than expose longer). The final exposure time used will be available in the output capture result.

This control is only effective if android.control.aeMode or android.control.mode is set to OFF; otherwise the auto-exposure algorithm will override this value.

Units: Nanoseconds

Range of valid values:

android.sensor.info.exposureTimeRange

Optional - This value may be null on some devices.

Full capability - Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the android.info.supportedHardwareLevel key.

Duration from start of frame exposure to start of next frame exposure.

The maximum frame rate that can be supported by a camera subsystem is a function of many factors:

Since these factors can vary greatly between different ISPs and sensors, the camera abstraction tries to represent the bandwidth restrictions with as simple a model as possible.

The model presented has the following characteristics:

The necessary information for the application, given the model above, is provided via the android.scaler.streamConfigurationMap field using StreamConfigurationMap#getOutputMinFrameDuration(int, Size). These are used to determine the maximum frame rate / minimum frame duration that is possible for a given stream configuration.

Specifically, the application can use the following rules to determine the minimum frame duration it can request from the camera device:

1. Let the set of currently configured input/output streams be called S.

2. Find the minimum frame duration for each stream in S by looking it up in android.scaler.streamConfigurationMap using StreamConfigurationMap#getOutputMinFrameDuration(int, Size) (with its respective size/format). Let this set of frame durations be called F.

3. For any given request R, the minimum frame duration allowed for R is the maximum out of all values in F. Let the streams used in R be called S_r.

If none of the streams in S_r have a stall time (listed in StreamConfigurationMap#getOutputStallDuration(int,Size) using its respective size/format), then the frame duration in F determines the steady state frame rate that the application will get if it uses R as a repeating request. Let this special kind of request be called Rsimple.
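The rule that the minimum frame duration for a request is the maximum of all values in F can be sketched as follows. `FrameDurationRules` is a hypothetical helper, and the per-stream durations are passed in as plain nanosecond values; in an application they would come from the stream configuration map lookups described above.

```java
// Hypothetical helper (not a camera2 class) illustrating the frame duration rules.
final class FrameDurationRules {
    /**
     * Minimum frame duration allowed for a request: the maximum of the
     * per-stream minimum frame durations (the set F), in nanoseconds.
     */
    static long minFrameDurationNs(long... perStreamMinDurationsNs) {
        if (perStreamMinDurationsNs.length == 0) {
            throw new IllegalArgumentException("at least one stream is required");
        }
        long max = perStreamMinDurationsNs[0];
        for (long d : perStreamMinDurationsNs) {
            max = Math.max(max, d);
        }
        return max;
    }

    /**
     * Steady-state frame rate for a repeating request whose streams have no
     * stall time, given its frame duration in nanoseconds.
     */
    static double steadyStateFps(long frameDurationNs) {
        return 1e9 / frameDurationNs;
    }
}
```

For example, with three configured streams whose minimum frame durations are about 33.3 ms, 50 ms and 16.7 ms, the slowest stream governs, so a repeating request could run at no more than 20 frames per second.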

A repeating request Rsimple can be occasionally interleaved by a single capture of a new request Rstall (which has at least one in-use stream with a non-0 stall time) and if Rstall has the same minimum frame duration this will not cause a frame rate loss if all buffers from the previous Rstall have already been delivered.

For more details about stalling, see StreamConfigurationMap#getOutputStallDuration(int,Size).

This control is only effective if android.control.aeMode or android.control.mode is set to OFF; otherwise the auto-exposure algorithm will override this value.

Units: Nanoseconds

Range of valid values:

See android.sensor.info.maxFrameDuration, android.scaler.streamConfigurationMap. The duration is capped to max(duration, exposureTime + overhead).

Optional - This value may be null on some devices.

Full capability - Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the android.info.supportedHardwareLevel key.

The worst-case divergence between Bayer green channels.

This value is an estimate of the worst case split between the Bayer green channels in the red and blue rows in the sensor color filter array.

The green split is calculated as follows:

R = max((mean_Gr + 1)/(mean_Gb + 1), (mean_Gb + 1)/(mean_Gr + 1))

The ratio R is the green split divergence reported for this property, which represents how much the green channels differ in the mosaic pattern. This value is typically used to determine the treatment of the green mosaic channels when demosaicing.
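A minimal sketch of the green split formula, assuming the two channel means have already been measured from raw data. `GreenSplit` is a hypothetical name used only for illustration. Because the larger of the two ratios is taken, the result does not depend on which green channel is brighter and is never below 1 for non-negative means.

```java
// Hypothetical helper (not a camera2 class) evaluating the green split ratio.
final class GreenSplit {
    /**
     * R = max((meanGr + 1) / (meanGb + 1), (meanGb + 1) / (meanGr + 1)).
     * The +1 terms keep the ratio finite when a channel mean is zero.
     */
    static double ratio(double meanGr, double meanGb) {
        double grOverGb = (meanGr + 1.0) / (meanGb + 1.0);
        double gbOverGr = (meanGb + 1.0) / (meanGr + 1.0);
        return Math.max(grOverGb, gbOverGr);
    }
}
```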

The green split value can be roughly interpreted as follows:

Range of valid values:

>= 0

Optional - This value may be null on some devices.

The estimated camera neutral color in the native sensor colorspace at the time of capture.

This value gives the neutral color point encoded as an RGB value in the native sensor color space. The neutral color point indicates the currently estimated white point of the scene illumination. It can be used to interpolate between the provided color transforms when processing raw sensor data.

The values are ordered R, G, B, with R at the lowest index.

Optional - This value may be null on some devices.

Noise model coefficients for each CFA mosaic channel.

This key contains two noise model coefficients for each CFA channel corresponding to the sensor amplification (S) and sensor readout noise (O). These are given as pairs of coefficients for each channel in the same order as channels listed for the CFA layout key (see android.sensor.info.colorFilterArrangement). This is represented as an array of Pair<Double, Double>, where the first member of the Pair at index n is the S coefficient and the second member is the O coefficient for the nth color channel in the CFA.

These coefficients are used in a two parameter noise model to describe the amount of noise present in the image for each CFA channel. The noise model used here is:

N(x) = sqrt(Sx + O)

Where x represents the recorded signal of a CFA channel normalized to the range [0, 1], and S and O are the noise model coefficients for that channel.

A more detailed description of the noise model can be found in the Adobe DNG specification for the NoiseProfile tag.
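The two-parameter model above can be evaluated per channel as in the following sketch. `NoiseModel` is a hypothetical helper; it takes the S and O coefficients as plain doubles, whereas an application would read one pair per CFA channel from this key.

```java
// Hypothetical helper (not a camera2 class) evaluating N(x) = sqrt(S*x + O).
final class NoiseModel {
    /**
     * @param s sensor amplification coefficient for the channel
     * @param o sensor readout noise coefficient for the channel
     * @param x recorded signal normalized to [0, 1]
     * @return the noise estimate N(x) from the two-parameter model
     */
    static double noise(double s, double o, double x) {
        if (x < 0.0 || x > 1.0) {
            throw new IllegalArgumentException("x must be normalized to [0, 1]");
        }
        return Math.sqrt(s * x + o);
    }
}
```

At x = 0 the estimate reduces to sqrt(O), the readout noise floor, and the S term grows in importance as the signal level rises.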

Optional - This value may be null on some devices.

Duration between the start of first row exposure and the start of last row exposure.

This is the exposure time skew between the first and last row exposure start times. The first row and the last row are the first and last rows inside of the android.sensor.info.activeArraySize.

For typical camera sensors that use rolling shutters, this is also equivalent to the frame readout time.

Units: Nanoseconds

Range of valid values:

>= 0 and < StreamConfigurationMap#getOutputMinFrameDuration(int, Size).

Optional - This value may be null on some devices.

Limited capability - Present on all camera devices that report being at least HARDWARE_LEVEL_LIMITED devices in the android.info.supportedHardwareLevel key.

The amount of gain applied to sensor data before processing.

The sensitivity is the standard ISO sensitivity value, as defined in ISO 12232:2006.

The sensitivity must be within android.sensor.info.sensitivityRange, and if it is less than android.sensor.maxAnalogSensitivity, the camera device is guaranteed to use only analog amplification for applying the gain.

If the camera device cannot apply the exact sensitivity requested, it will reduce the gain to the nearest supported value. The final sensitivity used will be available in the output capture result.

Units: ISO arithmetic units

Range of valid values:

android.sensor.info.sensitivityRange

Optional - This value may be null on some devices.

Full capability - Present on all camera devices that report being HARDWARE_LEVEL_FULL devices in the android.info.supportedHardwareLevel key.

A pixel [R, G_even, G_odd, B] that supplies the test pattern when android.sensor.testPatternMode is SOLID_COLOR.

Each color channel is treated as an unsigned 32-bit integer. The camera device then uses the most significant X bits that correspond to how many bits are in its Bayer raw sensor output.

For example, a sensor with RAW10 Bayer output would use the 10 most significant bits from each color channel.
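The bit selection described above amounts to an unsigned right shift. The sketch below assumes the channel value is held in a Java `int` interpreted as an unsigned 32-bit quantity; `TestPatternBits` is a hypothetical helper, not a camera2 class.

```java
// Hypothetical helper (not a camera2 class): keep the most significant bits
// of a 32-bit test pattern channel value.
final class TestPatternBits {
    /**
     * Returns the top rawBitDepth bits of channelValue, treating the int as
     * an unsigned 32-bit integer.
     */
    static int significantBits(int channelValue, int rawBitDepth) {
        if (rawBitDepth < 1 || rawBitDepth > 32) {
            throw new IllegalArgumentException("rawBitDepth must be in [1, 32]");
        }
        // >>> is the unsigned shift, so the sign bit is not propagated.
        return channelValue >>> (32 - rawBitDepth);
    }
}
```

For a RAW10 sensor, a channel value of 0xFFFFFFFF maps to the full-scale 10-bit value 1023, while any value whose upper 10 bits are zero maps to 0 regardless of its lower bits.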

Optional - This value may be null on some devices.

When enabled, the sensor sends a test pattern instead of doing a real exposure from the camera.

When a test pattern is enabled, all manual sensor controls specified by android.sensor.* will be ignored. All other controls should work as normal.

For example, if manual flash is enabled, flash firing should still occur (and the test pattern should remain unmodified, since the flash would not actually affect it).

Defaults to OFF.

Possible values:

Available values for this device:

android.sensor.availableTestPatternModes

Optional - This value may be null on some devices.

Time at start of exposure of first row of the image sensor active array, in nanoseconds.

The timestamps are also included in all image buffers produced for the same capture, and will be identical on all the outputs.

When android.sensor.info.timestampSource == UNKNOWN, the timestamps measure time since an unspecified starting point, and are monotonically increasing. They can be compared with the timestamps for other captures from the same camera device, but are not guaranteed to be comparable to any other time source.

When android.sensor.info.timestampSource == REALTIME, the timestamps measure time in the same timebase as android.os.SystemClock#elapsedRealtimeNanos(), and they can be compared to other timestamps from other subsystems that are using that base.
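The practical consequence of the two timestamp sources can be sketched as below. `TimestampMath` and its nested `Source` enum are hypothetical stand-ins for illustration; the current time is passed in as a parameter so the sketch runs without the Android framework. Differences between two captures from the same device are meaningful for either source, while comparison against the elapsed-realtime clock is only meaningful for REALTIME.

```java
// Hypothetical helper (not a camera2 class) for working with sensor timestamps.
final class TimestampMath {
    /** Stand-in for the two timestamp sources described above. */
    enum Source { UNKNOWN, REALTIME }

    /** Interval between two captures from the same device; valid for both sources. */
    static long frameIntervalNs(long earlierTimestampNs, long laterTimestampNs) {
        return laterTimestampNs - earlierTimestampNs;
    }

    /** Only REALTIME timestamps share a timebase with the elapsed-realtime clock. */
    static boolean isComparableToElapsedRealtime(Source source) {
        return source == Source.REALTIME;
    }

    /**
     * Age of a capture relative to a reading of the elapsed-realtime clock.
     * Throws if the timestamps are not in that timebase.
     */
    static long captureAgeNs(Source source, long sensorTimestampNs,
                             long elapsedRealtimeNowNs) {
        if (!isComparableToElapsedRealtime(source)) {
            throw new IllegalStateException("timestamps are not in the elapsed-realtime timebase");
        }
        return elapsedRealtimeNowNs - sensorTimestampNs;
    }
}
```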

Units: Nanoseconds

Range of valid values:

> 0

This key is available on all devices.

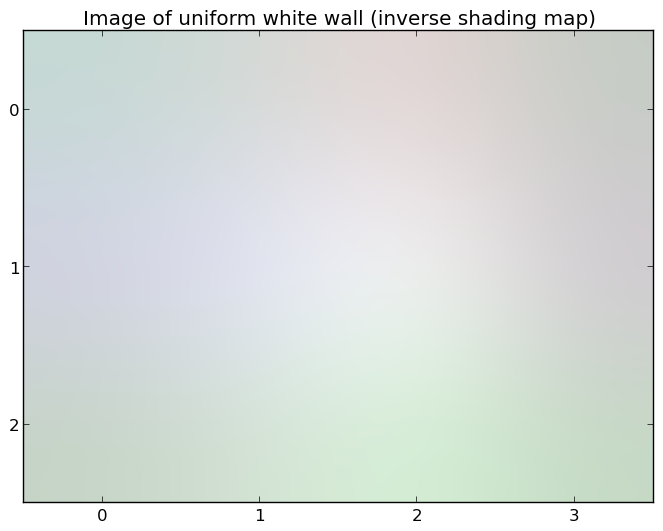

Quality of lens shading correction applied to the image data.

When set to OFF mode, no lens shading correction will be applied by the camera device, and identity lens shading map data will be provided if android.statistics.lensShadingMapMode == ON. For example, for a lens shading map of size [ 4, 3 ], the output android.statistics.lensShadingCorrectionMap for this case will be the identity map shown below:

[ 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0,

1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0,

1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0,

1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0,

1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0,

1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0, 1.0 ]
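For comparison with the listing above, an identity map of any size can be generated as in the sketch below. `IdentityShadingMap` is a hypothetical helper; the figure of four gain values per map sample is inferred from the 48 entries shown for the [ 4, 3 ] example (4 x 3 x 4).

```java
// Hypothetical helper (not a camera2 class) building an identity shading map.
final class IdentityShadingMap {
    /** Gain values per map sample, inferred from the [ 4, 3 ] example (48 entries). */
    static final int CHANNELS_PER_SAMPLE = 4;

    /** Returns a flat array of unity gains for a map of the given size. */
    static float[] create(int mapWidth, int mapHeight) {
        if (mapWidth < 1 || mapHeight < 1) {
            throw new IllegalArgumentException("map dimensions must be positive");
        }
        float[] gains = new float[CHANNELS_PER_SAMPLE * mapWidth * mapHeight];
        java.util.Arrays.fill(gains, 1.0f);
        return gains;
    }
}
```

An application could compare a returned map against such an array to detect that no shading correction data is being reported.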

When set to other modes, lens shading correction will be applied by the camera

device. Applications can request lens shading map data by setting

android.statistics.lensShadingMapMode to ON, and then the camera device will provide lens

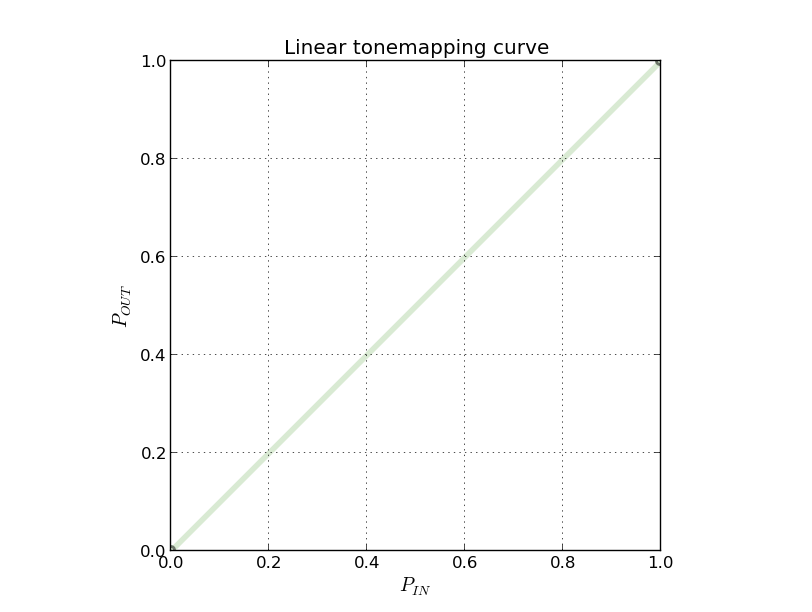

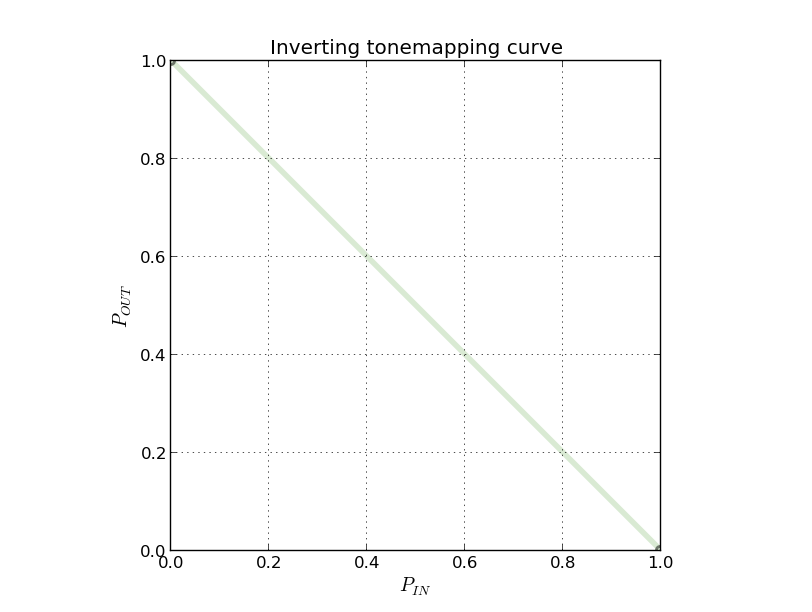

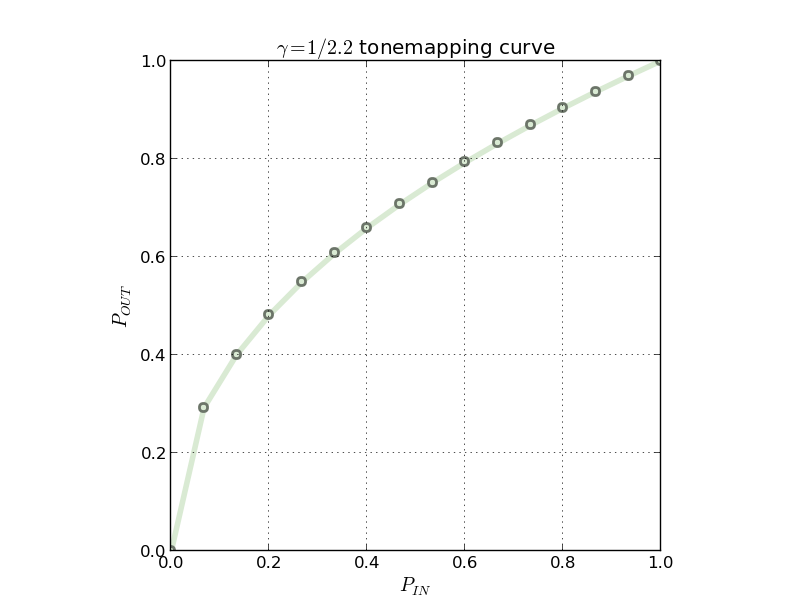

shading map data in android.statistics.lensShadingCorrectionMap; the returned shading map