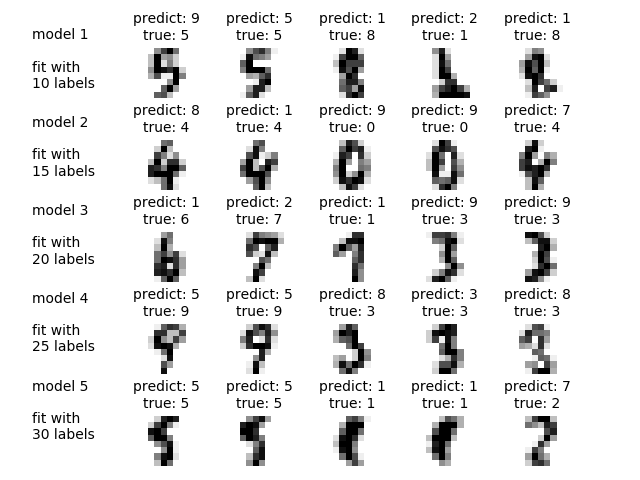

Label Propagation digits active learning

Demonstrates an active learning technique to learn handwritten digits using label propagation.

We start by training a label propagation model (the script uses scikit-learn's LabelSpreading) with only 10 labeled points, then select the five most uncertain points to label. Next, we train with 15 labeled points (the original 10 plus the 5 new ones). We repeat this process four times, ending with a model trained on 30 labeled examples. You can label more than 30 points by increasing max_iterations, which is useful for getting a sense of how quickly this active learning technique converges.

A plot will appear showing the top 5 most uncertain digits for each iteration of training. These may or may not contain mistakes, but we will train the next model with their true labels.
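The selection step described above can be looked at in isolation. The snippet below is a minimal sketch, not the script's own code: the helper name `most_uncertain` and the toy probabilities are invented for illustration, and it assumes each row of `label_distributions` is a probability distribution that sums to 1 (in the full script this role is played by `lp_model.label_distributions_`).

```python
import numpy as np


def most_uncertain(label_distributions, candidate_indices, k=5):
    """Return up to k candidate indices with the highest predictive entropy.

    Hypothetical helper for illustration; rows are assumed to sum to 1.
    """
    p = np.asarray(label_distributions, dtype=float)
    # Shannon entropy per row; entries with p == 0 contribute nothing.
    with np.errstate(divide="ignore", invalid="ignore"):
        terms = np.where(p > 0, p * np.log(p), 0.0)
    entropies = -terms.sum(axis=1)

    candidates = np.asarray(candidate_indices)
    # Order the candidates from most to least uncertain.
    order = np.argsort(entropies[candidates])[::-1]
    return candidates[order][:k]


# Toy distributions over three classes (made-up values).
dists = np.array([
    [1.00, 0.00, 0.00],  # fully certain
    [0.40, 0.30, 0.30],  # quite uncertain
    [0.70, 0.20, 0.10],  # somewhat uncertain
    [0.34, 0.33, 0.33],  # nearly uniform, but already labeled below
])

# Only samples 0, 1 and 2 are still unlabeled, so sample 3 is never picked.
picked = most_uncertain(dists, candidate_indices=[0, 1, 2], k=2)
print(picked)  # → [1 2]
```

Restricting the ranking to the still-unlabeled candidates matters: points that already carry a true label should not be queried again.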

Out:

    Iteration 0 ______________________________________________________________________
    Label Spreading model: 10 labeled & 320 unlabeled (330 total)
                precision    recall  f1-score   support

              0      0.00      0.00      0.00        24
              1      0.51      0.86      0.64        29
              2      0.83      0.97      0.90        31
              3      0.00      0.00      0.00        28
              4      0.00      0.00      0.00        27
              5      0.85      0.49      0.62        35
              6      0.84      0.95      0.89        40
              7      0.70      0.92      0.80        36
              8      0.57      0.76      0.65        33
              9      0.41      0.86      0.55        37

    avg / total      0.51      0.62      0.54       320

    Confusion matrix
    [[25  3  0  0  0  0  1]
     [ 1 30  0  0  0  0  0]
     [ 0  0 17  7  0  1 10]
     [ 2  0  0 38  0  0  0]
     [ 0  3  0  0 33  0  0]
     [ 8  0  0  0  0 25  0]
     [ 0  0  3  0  0  2 32]]

    Iteration 1 ______________________________________________________________________
    Label Spreading model: 15 labeled & 315 unlabeled (330 total)
                precision    recall  f1-score   support

              0      0.00      0.00      0.00        24
              1      0.51      0.75      0.61        28
              2      0.91      0.97      0.94        31
              3      0.00      0.00      0.00        28
              4      0.00      0.00      0.00        27
              5      0.84      0.97      0.90        33
              6      1.00      0.95      0.97        40
              7      0.75      0.92      0.83        36
              8      0.46      0.81      0.59        31
              9      0.43      0.78      0.56        37

    avg / total      0.53      0.66      0.58       315

    Confusion matrix
    [[21  0  0  0  0  6  1]
     [ 1 30  0  0  0  0  0]
     [ 0  0 32  0  0  0  1]
     [ 2  0  0 38  0  0  0]
     [ 0  3  0  0 33  0  0]
     [ 6  0  0  0  0 25  0]
     [ 0  0  6  0  0  2 29]]

    Iteration 2 ______________________________________________________________________
    Label Spreading model: 20 labeled & 310 unlabeled (330 total)
                precision    recall  f1-score   support

              0      1.00      1.00      1.00        22
              1      0.67      0.71      0.69        28
              2      0.94      0.97      0.95        31
              3      0.00      0.00      0.00        28
              4      0.85      0.92      0.88        24
              5      0.89      0.97      0.93        33
              6      1.00      0.95      0.97        40
              7      1.00      0.92      0.96        36
              8      0.50      0.81      0.62        31
              9      0.67      0.78      0.72        37

    avg / total      0.76      0.81      0.78       310

    Confusion matrix
    [[22  0  0  0  0  0  0  0  0]
     [ 0 20  0  1  0  0  0  6  1]
     [ 0  1 30  0  0  0  0  0  0]
     [ 0  1  0 22  0  0  0  1  0]
     [ 0  0  0  0 32  0  0  0  1]
     [ 0  2  0  0  0 38  0  0  0]
     [ 0  0  2  1  0  0 33  0  0]
     [ 0  6  0  0  0  0  0 25  0]
     [ 0  0  0  2  4  0  0  2 29]]

    Iteration 3 ______________________________________________________________________
    Label Spreading model: 25 labeled & 305 unlabeled (330 total)
                precision    recall  f1-score   support

              0      1.00      1.00      1.00        22
              1      0.68      0.85      0.75        27
              2      1.00      0.90      0.95        31
              3      1.00      0.77      0.87        26
              4      1.00      0.92      0.96        24
              5      0.89      0.97      0.93        33
              6      1.00      0.97      0.99        39
              7      0.95      1.00      0.97        35
              8      0.66      0.81      0.72        31
              9      0.97      0.78      0.87        37

    avg / total      0.91      0.90      0.90       305

    Confusion matrix
    [[22  0  0  0  0  0  0  0  0  0]
     [ 0 23  0  0  0  0  0  0  4  0]
     [ 0  1 28  0  0  0  0  2  0  0]
     [ 0  0  0 20  0  0  0  0  6  0]
     [ 0  1  0  0 22  0  0  0  1  0]
     [ 0  0  0  0  0 32  0  0  0  1]
     [ 0  1  0  0  0  0 38  0  0  0]
     [ 0  0  0  0  0  0  0 35  0  0]
     [ 0  6  0  0  0  0  0  0 25  0]
     [ 0  2  0  0  0  4  0  0  2 29]]

    Iteration 4 ______________________________________________________________________
    Label Spreading model: 30 labeled & 300 unlabeled (330 total)
                precision    recall  f1-score   support

              0      1.00      1.00      1.00        22
              1      0.68      0.85      0.75        27
              2      1.00      0.87      0.93        31
              3      0.92      1.00      0.96        23
              4      1.00      0.92      0.96        24
              5      0.97      0.94      0.95        33
              6      1.00      0.97      0.99        39
              7      0.95      1.00      0.97        35
              8      0.81      0.81      0.81        31
              9      0.94      0.86      0.90        35

    avg / total      0.93      0.92      0.92       300

    Confusion matrix
    [[22  0  0  0  0  0  0  0  0  0]
     [ 0 23  0  0  0  0  0  0  4  0]
     [ 0  1 27  1  0  0  0  2  0  0]
     [ 0  0  0 23  0  0  0  0  0  0]
     [ 0  1  0  0 22  0  0  0  1  0]
     [ 0  0  0  0  0 31  0  0  0  2]
     [ 0  1  0  0  0  0 38  0  0  0]
     [ 0  0  0  0  0  0  0 35  0  0]
     [ 0  6  0  0  0  0  0  0 25  0]
     [ 0  2  0  1  0  1  0  0  1 30]]
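The confusion matrices above grow from 7 to 9 to 10 rows, even though every classification report lists all ten digits. A plausible reading is that the script passes `labels=lp_model.classes_` to `confusion_matrix`, and that `classes_` contains only the digits present among the labeled points, so classes the model has not yet seen (those scoring 0.00 in the reports) get no row or column. The toy example below illustrates that behaviour of the `labels` argument; the label values are made up and are not taken from the digits data.

```python
from sklearn.metrics import confusion_matrix

# Made-up labels: class 0 exists in the ground truth but is never predicted,
# mimicking a digit that is absent from the labeled training points.
y_true = [0, 1, 1, 2, 2, 2]
y_pred = [1, 1, 1, 2, 1, 2]

# Restricting to the "seen" classes drops the row and column for class 0,
# and samples whose true label is 0 are left out of the counts.
cm_seen = confusion_matrix(y_true, y_pred, labels=[1, 2])
print(cm_seen)

# Listing every class keeps the all-zero column for the unseen class.
cm_full = confusion_matrix(y_true, y_pred, labels=[0, 1, 2])
print(cm_full)
```

Under this reading, row sums of a restricted matrix still match the per-class support in the report for the classes that are included, which is a quick way to check which digits each row refers to.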

    print(__doc__)

    # Authors: Clay Woolam <[email protected]>
    # License: BSD

    import numpy as np
    import matplotlib.pyplot as plt
    from scipy import stats

    from sklearn import datasets
    from sklearn.semi_supervised import LabelSpreading
    from sklearn.metrics import classification_report, confusion_matrix

    digits = datasets.load_digits()
    rng = np.random.RandomState(0)
    indices = np.arange(len(digits.data))
    rng.shuffle(indices)

    # work on a random subset of 330 digits
    X = digits.data[indices[:330]]
    y = digits.target[indices[:330]]
    images = digits.images[indices[:330]]

    n_total_samples = len(y)
    n_labeled_points = 10
    max_iterations = 5

    # everything after the first n_labeled_points samples starts out unlabeled
    unlabeled_indices = np.arange(n_total_samples)[n_labeled_points:]
    f = plt.figure()

    for i in range(max_iterations):
        if len(unlabeled_indices) == 0:
            print("No unlabeled items left to label.")
            break

        # hide the true labels of the unlabeled points (-1 marks "unlabeled")
        y_train = np.copy(y)
        y_train[unlabeled_indices] = -1

        lp_model = LabelSpreading(gamma=0.25, max_iter=5)
        lp_model.fit(X, y_train)

        predicted_labels = lp_model.transduction_[unlabeled_indices]
        true_labels = y[unlabeled_indices]

        cm = confusion_matrix(true_labels, predicted_labels,
                              labels=lp_model.classes_)

        print("Iteration %i %s" % (i, 70 * "_"))
        print("Label Spreading model: %d labeled & %d unlabeled (%d total)"
              % (n_labeled_points, n_total_samples - n_labeled_points,
                 n_total_samples))

        print(classification_report(true_labels, predicted_labels))

        print("Confusion matrix")
        print(cm)

        # compute the entropies of transduced label distributions
        pred_entropies = stats.entropy(lp_model.label_distributions_.T)

        # select up to 5 digit examples that the classifier is most uncertain about
        uncertainty_index = np.argsort(pred_entropies)[::-1]
        uncertainty_index = uncertainty_index[
            np.isin(uncertainty_index, unlabeled_indices)][:5]

        # keep track of indices that we get labels for
        # (integer dtype, so the array can be used as an index by np.delete)
        delete_indices = np.array([], dtype=int)

        # for more than 5 iterations, visualize the gain only on the first 5
        if i < 5:
            f.text(.05, (1 - (i + 1) * .183),
                   "model %d\n\nfit with\n%d labels" %
                   ((i + 1), i * 5 + 10), size=10)
        for index, image_index in enumerate(uncertainty_index):
            image = images[image_index]

            # for more than 5 iterations, visualize the gain only on the first 5
            if i < 5:
                sub = f.add_subplot(5, 5, index + 1 + (5 * i))
                sub.imshow(image, cmap=plt.cm.gray_r, interpolation='none')
                sub.set_title("predict: %i\ntrue: %i" % (
                    lp_model.transduction_[image_index], y[image_index]), size=10)
                sub.axis('off')

            # labeling 5 points: remove them from the unlabeled set
            delete_index, = np.where(unlabeled_indices == image_index)
            delete_indices = np.concatenate((delete_indices, delete_index))

        unlabeled_indices = np.delete(unlabeled_indices, delete_indices)
        n_labeled_points += len(uncertainty_index)

    f.suptitle("Active learning with Label Propagation.\nRows show 5 most "
               "uncertain labels to learn with the next model.", y=1.15)
    plt.subplots_adjust(left=0.2, bottom=0.03, right=0.9, top=0.9, wspace=0.2,
                        hspace=0.85)
    plt.show()

Total running time of the script: ( 0 minutes 0.778 seconds)
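To get a feel for how quickly the technique converges when max_iterations is raised, one option is to record the accuracy on the still-unlabeled points at every round instead of printing full reports. The function below is a sketch along those lines, not part of the original example: the name `active_learning_accuracy` and its parameters are invented here, it reuses the same data subset and model settings as the script, and it skips the plotting.

```python
import numpy as np
from scipy import stats
from sklearn import datasets
from sklearn.semi_supervised import LabelSpreading


def active_learning_accuracy(max_iterations=6, n_initial=10, batch=5, seed=0):
    """Return (n_labeled, accuracy on unlabeled points) for each round.

    Hypothetical helper; mirrors the loop of the example without the plots.
    """
    digits = datasets.load_digits()
    rng = np.random.RandomState(seed)
    indices = np.arange(len(digits.data))
    rng.shuffle(indices)
    X = digits.data[indices[:330]]
    y = digits.target[indices[:330]]

    unlabeled = np.arange(len(y))[n_initial:]
    history = []
    for _ in range(max_iterations):
        if len(unlabeled) == 0:
            break
        # Hide the labels of the unlabeled points and refit.
        y_train = np.copy(y)
        y_train[unlabeled] = -1
        model = LabelSpreading(gamma=0.25, max_iter=5)
        model.fit(X, y_train)

        # Score the transduced labels against the held-back true labels.
        accuracy = np.mean(model.transduction_[unlabeled] == y[unlabeled])
        history.append((len(y) - len(unlabeled), float(accuracy)))

        # Query the most uncertain unlabeled points and reveal their labels.
        entropies = stats.entropy(model.label_distributions_.T)
        ranked = np.argsort(entropies)[::-1]
        query = ranked[np.isin(ranked, unlabeled)][:batch]
        unlabeled = np.setdiff1d(unlabeled, query)
    return history


for n_labeled, acc in active_learning_accuracy():
    print("%3d labeled points: accuracy %.2f on the rest" % (n_labeled, acc))
```

The exact accuracies depend on the installed library versions, so they are not listed here; the point of the sketch is the shape of the curve as more labels are added.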