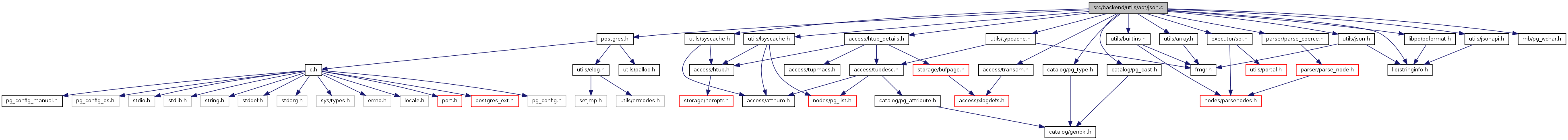

#include "postgres.h"
#include "access/htup_details.h"
#include "access/transam.h"
#include "catalog/pg_cast.h"
#include "catalog/pg_type.h"
#include "executor/spi.h"
#include "lib/stringinfo.h"
#include "libpq/pqformat.h"
#include "mb/pg_wchar.h"
#include "parser/parse_coerce.h"
#include "utils/array.h"
#include "utils/builtins.h"
#include "utils/lsyscache.h"
#include "utils/json.h"
#include "utils/jsonapi.h"
#include "utils/typcache.h"
#include "utils/syscache.h"


#define JSON_ALPHANUMERIC_CHAR(c) \
    (((c) >= 'a' && (c) <= 'z') || \
     ((c) >= 'A' && (c) <= 'Z') || \
     ((c) >= '0' && (c) <= '9') || \
     (c) == '_' || \
     IS_HIGHBIT_SET(c))

Definition at line 154 of file json.c.

Referenced by json_lex(), and json_lex_number().
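The macro above is what lets the lexer treat a whole word-like run as one token when reporting errors. The following is a minimal standalone sketch of that use, not code from json.c: `word_run_length` is a hypothetical helper, and `IS_HIGHBIT_SET` is redefined locally as a stand-in for the definition that PostgreSQL normally supplies through `c.h`.

```c
#include <assert.h>
#include <stddef.h>

/* Assumption: local stand-in for PostgreSQL's IS_HIGHBIT_SET (normally from c.h). */
#define IS_HIGHBIT_SET(ch) ((unsigned char) (ch) & 0x80)

#define JSON_ALPHANUMERIC_CHAR(c) \
    (((c) >= 'a' && (c) <= 'z') || \
     ((c) >= 'A' && (c) <= 'Z') || \
     ((c) >= '0' && (c) <= '9') || \
     (c) == '_' || \
     IS_HIGHBIT_SET(c))

/*
 * Hypothetical helper: count how many leading bytes of s (at most n)
 * belong to one word-like run, as the lexer does for error reporting.
 */
static size_t
word_run_length(const char *s, size_t n)
{
    size_t len = 0;

    assert(s != NULL);
    while (len < n && JSON_ALPHANUMERIC_CHAR(s[len]))
        len++;
    return len;
}
```

Because bytes with the high bit set count as alphanumeric, a multibyte character is never split in the middle of the reported token.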

#define NON_NUMERIC_LETTER "NnAaIiFfTtYy"

Definition at line 152 of file json.c.

Referenced by datum_to_json().

#define TYPCATEGORY_JSON 'j'

Definition at line 148 of file json.c.

Referenced by datum_to_json().

#define TYPCATEGORY_JSON_CAST 'c'

Definition at line 150 of file json.c.

Referenced by datum_to_json().

enum JsonParseContext

JSON_PARSE_VALUE

JSON_PARSE_STRING

JSON_PARSE_ARRAY_START

JSON_PARSE_ARRAY_NEXT

JSON_PARSE_OBJECT_START

JSON_PARSE_OBJECT_LABEL

JSON_PARSE_OBJECT_NEXT

JSON_PARSE_OBJECT_COMMA

JSON_PARSE_END

Definition at line 38 of file json.c.

{

JSON_PARSE_VALUE, /* expecting a value */

JSON_PARSE_STRING, /* expecting a string (for a field name) */

JSON_PARSE_ARRAY_START, /* saw '[', expecting value or ']' */

JSON_PARSE_ARRAY_NEXT, /* saw array element, expecting ',' or ']' */

JSON_PARSE_OBJECT_START, /* saw '{', expecting label or '}' */

JSON_PARSE_OBJECT_LABEL, /* saw object label, expecting ':' */

JSON_PARSE_OBJECT_NEXT, /* saw object value, expecting ',' or '}' */

JSON_PARSE_OBJECT_COMMA, /* saw object ',', expecting next label */

JSON_PARSE_END /* saw the end of a document, expect nothing */

} JsonParseContext;

static void array_dim_to_json(StringInfo result, int dim, int ndims, int *dims, Datum *vals, bool *nulls, int *valcount, TYPCATEGORY tcategory, Oid typoutputfunc, bool use_line_feeds) [static]

Definition at line 1198 of file json.c.

References appendStringInfoChar(), appendStringInfoString(), Assert, datum_to_json(), and i.

Referenced by array_to_json_internal().

{

int i;

const char *sep;

Assert(dim < ndims);

sep = use_line_feeds ? ",\n " : ",";

appendStringInfoChar(result, '[');

for (i = 1; i <= dims[dim]; i++)

{

if (i > 1)

appendStringInfoString(result, sep);

if (dim + 1 == ndims)

{

datum_to_json(vals[*valcount], nulls[*valcount], result, tcategory,

typoutputfunc);

(*valcount)++;

}

else

{

/*

* Do we want line feeds on inner dimensions of arrays? For now

* we'll say no.

*/

array_dim_to_json(result, dim + 1, ndims, dims, vals, nulls,

valcount, tcategory, typoutputfunc, false);

}

}

appendStringInfoChar(result, ']');

}
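The recursion in array_dim_to_json() walks a flat element list and uses the per-dimension sizes to decide where brackets go. The sketch below reproduces only that shape for plain `int` values. It is an illustration under assumptions: `buf_append` and `int_array_dim_to_json` are hypothetical names, a fixed-size character buffer replaces `StringInfo`, and `snprintf` replaces `datum_to_json()`.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical helper: append text to a NUL-terminated buffer of capacity cap. */
static void
buf_append(char *buf, size_t cap, const char *text)
{
    size_t used = strlen(buf);

    assert(used + strlen(text) < cap);
    memcpy(buf + used, text, strlen(text) + 1);
}

/*
 * Emit dimension `dim` of an ndims-dimensional array whose elements are
 * stored flat in vals. *valcount tracks the next unread element.
 */
static void
int_array_dim_to_json(char *buf, size_t cap, int dim, int ndims,
                      const int *dims, const int *vals, int *valcount)
{
    int i;

    assert(dim < ndims);
    buf_append(buf, cap, "[");
    for (i = 1; i <= dims[dim]; i++)
    {
        if (i > 1)
            buf_append(buf, cap, ",");
        if (dim + 1 == ndims)
        {
            /* Innermost dimension: consume one element from the flat list. */
            char num[32];

            snprintf(num, sizeof(num), "%d", vals[*valcount]);
            buf_append(buf, cap, num);
            (*valcount)++;
        }
        else
        {
            /* Outer dimension: recurse to emit one nested array. */
            int_array_dim_to_json(buf, cap, dim + 1, ndims, dims, vals, valcount);
        }
    }
    buf_append(buf, cap, "]");
}
```

For a 2x3 array the six flat values come out as two bracketed groups of three, and the shared counter ends at the total element count.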

Datum array_to_json(PG_FUNCTION_ARGS)

Definition at line 1425 of file json.c.

References array_to_json_internal(), cstring_to_text(), StringInfoData::data, makeStringInfo(), PG_GETARG_DATUM, and PG_RETURN_TEXT_P.

{

Datum array = PG_GETARG_DATUM(0);

StringInfo result;

result = makeStringInfo();

array_to_json_internal(array, result, false);

PG_RETURN_TEXT_P(cstring_to_text(result->data));

}

static void array_to_json_internal(Datum array, StringInfo result, bool use_line_feeds) [static]

Definition at line 1240 of file json.c.

References appendStringInfoString(), ARR_DIMS, ARR_ELEMTYPE, ARR_NDIM, array_dim_to_json(), ArrayGetNItems(), CASTSOURCETARGET, COERCION_METHOD_FUNCTION, DatumGetArrayTypeP, deconstruct_array(), FirstNormalObjectId, get_type_io_data(), GETSTRUCT, HeapTupleIsValid, InvalidOid, IOFunc_output, JSONOID, ObjectIdGetDatum, pfree(), RECORDOID, ReleaseSysCache(), SearchSysCache2, and TypeCategory().

Referenced by array_to_json(), array_to_json_pretty(), and datum_to_json().

{

ArrayType *v = DatumGetArrayTypeP(array);

Oid element_type = ARR_ELEMTYPE(v);

int *dim;

int ndim;

int nitems;

int count = 0;

Datum *elements;

bool *nulls;

int16 typlen;

bool typbyval;

char typalign,

typdelim;

Oid typioparam;

Oid typoutputfunc;

TYPCATEGORY tcategory;

Oid castfunc = InvalidOid;

ndim = ARR_NDIM(v);

dim = ARR_DIMS(v);

nitems = ArrayGetNItems(ndim, dim);

if (nitems <= 0)

{

appendStringInfoString(result, "[]");

return;

}

get_type_io_data(element_type, IOFunc_output,

&typlen, &typbyval, &typalign,

&typdelim, &typioparam, &typoutputfunc);

if (element_type > FirstNormalObjectId)

{

HeapTuple tuple;

Form_pg_cast castForm;

tuple = SearchSysCache2(CASTSOURCETARGET,

ObjectIdGetDatum(element_type),

ObjectIdGetDatum(JSONOID));

if (HeapTupleIsValid(tuple))

{

castForm = (Form_pg_cast) GETSTRUCT(tuple);

if (castForm->castmethod == COERCION_METHOD_FUNCTION)

castfunc = typoutputfunc = castForm->castfunc;

ReleaseSysCache(tuple);

}

}

deconstruct_array(v, element_type, typlen, typbyval,

typalign, &elements, &nulls,

&nitems);

if (castfunc != InvalidOid)

tcategory = TYPCATEGORY_JSON_CAST;

else if (element_type == RECORDOID)

tcategory = TYPCATEGORY_COMPOSITE;

else if (element_type == JSONOID)

tcategory = TYPCATEGORY_JSON;

else

tcategory = TypeCategory(element_type);

array_dim_to_json(result, 0, ndim, dim, elements, nulls, &count, tcategory,

typoutputfunc, use_line_feeds);

pfree(elements);

pfree(nulls);

}

Datum array_to_json_pretty(PG_FUNCTION_ARGS)

Definition at line 1441 of file json.c.

References array_to_json_internal(), cstring_to_text(), StringInfoData::data, makeStringInfo(), PG_GETARG_BOOL, PG_GETARG_DATUM, and PG_RETURN_TEXT_P.

{

Datum array = PG_GETARG_DATUM(0);

bool use_line_feeds = PG_GETARG_BOOL(1);

StringInfo result;

result = makeStringInfo();

array_to_json_internal(array, result, use_line_feeds);

PG_RETURN_TEXT_P(cstring_to_text(result->data));

}

static void composite_to_json(Datum composite, StringInfo result, bool use_line_feeds) [static]

Definition at line 1316 of file json.c.

References appendStringInfoChar(), appendStringInfoString(), tupleDesc::attrs, CASTSOURCETARGET, COERCION_METHOD_FUNCTION, datum_to_json(), DatumGetHeapTupleHeader, DatumGetPointer, escape_json(), FirstNormalObjectId, GETSTRUCT, getTypeOutputInfo(), heap_getattr, HeapTupleHeaderGetDatumLength, HeapTupleHeaderGetTypeId, HeapTupleHeaderGetTypMod, HeapTupleIsValid, i, InvalidOid, JSONOID, lookup_rowtype_tupdesc(), NameStr, tupleDesc::natts, ObjectIdGetDatum, pfree(), PG_DETOAST_DATUM, PointerGetDatum, RECORDARRAYOID, RECORDOID, ReleaseSysCache(), ReleaseTupleDesc, SearchSysCache2, HeapTupleData::t_data, HeapTupleData::t_len, TypeCategory(), and val.

Referenced by datum_to_json(), row_to_json(), and row_to_json_pretty().

{

HeapTupleHeader td;

Oid tupType;

int32 tupTypmod;

TupleDesc tupdesc;

HeapTupleData tmptup,

*tuple;

int i;

bool needsep = false;

const char *sep;

sep = use_line_feeds ? ",\n " : ",";

td = DatumGetHeapTupleHeader(composite);

/* Extract rowtype info and find a tupdesc */

tupType = HeapTupleHeaderGetTypeId(td);

tupTypmod = HeapTupleHeaderGetTypMod(td);

tupdesc = lookup_rowtype_tupdesc(tupType, tupTypmod);

/* Build a temporary HeapTuple control structure */

tmptup.t_len = HeapTupleHeaderGetDatumLength(td);

tmptup.t_data = td;

tuple = &tmptup;

appendStringInfoChar(result, '{');

for (i = 0; i < tupdesc->natts; i++)

{

Datum val,

origval;

bool isnull;

char *attname;

TYPCATEGORY tcategory;

Oid typoutput;

bool typisvarlena;

Oid castfunc = InvalidOid;

if (tupdesc->attrs[i]->attisdropped)

continue;

if (needsep)

appendStringInfoString(result, sep);

needsep = true;

attname = NameStr(tupdesc->attrs[i]->attname);

escape_json(result, attname);

appendStringInfoChar(result, ':');

origval = heap_getattr(tuple, i + 1, tupdesc, &isnull);

getTypeOutputInfo(tupdesc->attrs[i]->atttypid,

&typoutput, &typisvarlena);

if (tupdesc->attrs[i]->atttypid > FirstNormalObjectId)

{

HeapTuple cast_tuple;

Form_pg_cast castForm;

cast_tuple = SearchSysCache2(CASTSOURCETARGET,

ObjectIdGetDatum(tupdesc->attrs[i]->atttypid),

ObjectIdGetDatum(JSONOID));

if (HeapTupleIsValid(cast_tuple))

{

castForm = (Form_pg_cast) GETSTRUCT(cast_tuple);

if (castForm->castmethod == COERCION_METHOD_FUNCTION)

castfunc = typoutput = castForm->castfunc;

ReleaseSysCache(cast_tuple);

}

}

if (castfunc != InvalidOid)

tcategory = TYPCATEGORY_JSON_CAST;

else if (tupdesc->attrs[i]->atttypid == RECORDARRAYOID)

tcategory = TYPCATEGORY_ARRAY;

else if (tupdesc->attrs[i]->atttypid == RECORDOID)

tcategory = TYPCATEGORY_COMPOSITE;

else if (tupdesc->attrs[i]->atttypid == JSONOID)

tcategory = TYPCATEGORY_JSON;

else

tcategory = TypeCategory(tupdesc->attrs[i]->atttypid);

/*

* If we have a toasted datum, forcibly detoast it here to avoid

* memory leakage inside the type's output routine.

*/

if (typisvarlena && !isnull)

val = PointerGetDatum(PG_DETOAST_DATUM(origval));

else

val = origval;

datum_to_json(val, isnull, result, tcategory, typoutput);

/* Clean up detoasted copy, if any */

if (val != origval)

pfree(DatumGetPointer(val));

}

appendStringInfoChar(result, '}');

ReleaseTupleDesc(tupdesc);

}

static void datum_to_json(Datum val, bool is_null, StringInfo result, TYPCATEGORY tcategory, Oid typoutputfunc) [static]

Definition at line 1130 of file json.c.

References appendStringInfoString(), array_to_json_internal(), composite_to_json(), DatumGetBool, DatumGetTextP, escape_json(), NON_NUMERIC_LETTER, NULL, OidFunctionCall1, OidOutputFunctionCall(), pfree(), text_to_cstring(), TYPCATEGORY_ARRAY, TYPCATEGORY_BOOLEAN, TYPCATEGORY_COMPOSITE, TYPCATEGORY_JSON, TYPCATEGORY_JSON_CAST, and TYPCATEGORY_NUMERIC.

Referenced by array_dim_to_json(), composite_to_json(), json_agg_transfn(), and to_json().

{

char *outputstr;

text *jsontext;

if (is_null)

{

appendStringInfoString(result, "null");

return;

}

switch (tcategory)

{

case TYPCATEGORY_ARRAY:

array_to_json_internal(val, result, false);

break;

case TYPCATEGORY_COMPOSITE:

composite_to_json(val, result, false);

break;

case TYPCATEGORY_BOOLEAN:

if (DatumGetBool(val))

appendStringInfoString(result, "true");

else

appendStringInfoString(result, "false");

break;

case TYPCATEGORY_NUMERIC:

outputstr = OidOutputFunctionCall(typoutputfunc, val);

/*

* Don't call escape_json here if it's a valid JSON number.

* Numeric output should usually be a valid JSON number and JSON

* numbers shouldn't be quoted. Quote cases like "NaN" and

* "Infinity", however.

*/

if (strpbrk(outputstr, NON_NUMERIC_LETTER) == NULL)

appendStringInfoString(result, outputstr);

else

escape_json(result, outputstr);

pfree(outputstr);

break;

case TYPCATEGORY_JSON:

/* JSON will already be escaped */

outputstr = OidOutputFunctionCall(typoutputfunc, val);

appendStringInfoString(result, outputstr);

pfree(outputstr);

break;

case TYPCATEGORY_JSON_CAST:

jsontext = DatumGetTextP(OidFunctionCall1(typoutputfunc, val));

outputstr = text_to_cstring(jsontext);

appendStringInfoString(result, outputstr);

pfree(outputstr);

pfree(jsontext);

break;

default:

outputstr = OidOutputFunctionCall(typoutputfunc, val);

escape_json(result, outputstr);

pfree(outputstr);

break;

}

}
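The TYPCATEGORY_NUMERIC branch above decides between raw and quoted output with a single `strpbrk()` scan. A minimal sketch of that test in isolation follows; `numeric_output_needs_quotes` is a hypothetical name, and only the macro value is taken from the listing.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define NON_NUMERIC_LETTER "NnAaIiFfTtYy"

/*
 * Hypothetical helper: true when a numeric type's text output contains a
 * letter that can only come from a non-number such as "NaN" or "Infinity",
 * so the value has to be emitted as a quoted JSON string.
 */
static bool
numeric_output_needs_quotes(const char *outputstr)
{
    assert(outputstr != NULL);
    return strpbrk(outputstr, NON_NUMERIC_LETTER) != NULL;
}
```

The letter set leaves out `e` and `E`, so exponent notation such as `1.5e+10` still passes through unquoted.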

void escape_json(StringInfo buf, const char *str)

Definition at line 1707 of file json.c.

References appendStringInfo(), appendStringInfoCharMacro, and appendStringInfoString().

Referenced by composite_to_json(), datum_to_json(), escape_yaml(), ExplainDummyGroup(), ExplainOpenGroup(), ExplainProperty(), ExplainPropertyList(), hstore_to_json(), and hstore_to_json_loose().

{

const char *p;

appendStringInfoCharMacro(buf, '\"');

for (p = str; *p; p++)

{

switch (*p)

{

case '\b':

appendStringInfoString(buf, "\\b");

break;

case '\f':

appendStringInfoString(buf, "\\f");

break;

case '\n':

appendStringInfoString(buf, "\\n");

break;

case '\r':

appendStringInfoString(buf, "\\r");

break;

case '\t':

appendStringInfoString(buf, "\\t");

break;

case '"':

appendStringInfoString(buf, "\\\"");

break;

case '\\':

appendStringInfoString(buf, "\\\\");

break;

default:

if ((unsigned char) *p < ' ')

appendStringInfo(buf, "\\u%04x", (int) *p);

else

appendStringInfoCharMacro(buf, *p);

break;

}

}

appendStringInfoCharMacro(buf, '\"');

}
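The escaping rules above can be tried outside the backend. This sketch applies the same rules with a caller-supplied buffer in place of `StringInfo`; `escape_json_into` and its return convention (length written, or -1 when the buffer is too small) are assumptions made for the example, not part of json.c.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/*
 * Hypothetical helper: write str as a quoted, escaped JSON string into out.
 * Returns the number of bytes written (excluding the NUL), or -1 if out
 * cannot hold the result.
 */
static int
escape_json_into(char *out, size_t cap, const char *str)
{
    size_t      used = 0;
    const char *p;

    assert(out != NULL && str != NULL);
    if (cap < 3)
        return -1;              /* need room for two quotes and a NUL */
    out[used++] = '"';
    for (p = str; *p; p++)
    {
        char        tmp[8];
        const char *piece = tmp;
        size_t      n;

        switch (*p)
        {
            case '\b': piece = "\\b"; break;
            case '\f': piece = "\\f"; break;
            case '\n': piece = "\\n"; break;
            case '\r': piece = "\\r"; break;
            case '\t': piece = "\\t"; break;
            case '"':  piece = "\\\""; break;
            case '\\': piece = "\\\\"; break;
            default:
                if ((unsigned char) *p < ' ')
                    snprintf(tmp, sizeof(tmp), "\\u%04x",
                             (unsigned int) (unsigned char) *p);
                else
                {
                    tmp[0] = *p;
                    tmp[1] = '\0';
                }
                break;
        }
        n = strlen(piece);
        if (used + n + 2 > cap)     /* keep room for closing quote and NUL */
            return -1;
        memcpy(out + used, piece, n);
        used += n;
    }
    out[used++] = '"';
    out[used] = '\0';
    return (int) used;
}
```

Control characters without a short escape fall through to the `\u` form with four hexadecimal digits, matching the default branch in the listing.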

static char * extract_mb_char(char *s) [static]

Definition at line 1110 of file json.c.

References palloc(), and pg_mblen().

Referenced by json_lex_string().

Datum json_agg_finalfn(PG_FUNCTION_ARGS)

Definition at line 1686 of file json.c.

References AggCheckCallContext(), appendStringInfoChar(), Assert, cstring_to_text(), StringInfoData::data, NULL, PG_ARGISNULL, PG_GETARG_POINTER, PG_RETURN_NULL, and PG_RETURN_TEXT_P.

{

StringInfo state;

/* cannot be called directly because of internal-type argument */

Assert(AggCheckCallContext(fcinfo, NULL));

state = PG_ARGISNULL(0) ? NULL : (StringInfo) PG_GETARG_POINTER(0);

if (state == NULL)

PG_RETURN_NULL();

appendStringInfoChar(state, ']');

PG_RETURN_TEXT_P(cstring_to_text(state->data));

}

Datum json_agg_transfn(PG_FUNCTION_ARGS)

Definition at line 1566 of file json.c.

References AggCheckCallContext(), appendStringInfoChar(), appendStringInfoString(), CASTSOURCETARGET, COERCION_METHOD_FUNCTION, datum_to_json(), DatumGetPointer, elog, ereport, errcode(), errmsg(), ERROR, FirstNormalObjectId, get_fn_expr_argtype(), GETSTRUCT, getTypeOutputInfo(), HeapTupleIsValid, InvalidOid, JSONOID, makeStringInfo(), MemoryContextSwitchTo(), ObjectIdGetDatum, pfree(), PG_ARGISNULL, PG_DETOAST_DATUM, PG_GETARG_DATUM, PG_GETARG_POINTER, PG_RETURN_POINTER, PointerGetDatum, RECORDARRAYOID, RECORDOID, ReleaseSysCache(), SearchSysCache2, TYPCATEGORY_ARRAY, TYPCATEGORY_COMPOSITE, TypeCategory(), and val.

{

Oid val_type = get_fn_expr_argtype(fcinfo->flinfo, 1);

MemoryContext aggcontext,

oldcontext;

StringInfo state;

Datum orig_val,

val;

TYPCATEGORY tcategory;

Oid typoutput;

bool typisvarlena;

Oid castfunc = InvalidOid;

if (val_type == InvalidOid)

ereport(ERROR,

(errcode(ERRCODE_INVALID_PARAMETER_VALUE),

errmsg("could not determine input data type")));

if (!AggCheckCallContext(fcinfo, &aggcontext))

{

/* cannot be called directly because of internal-type argument */

elog(ERROR, "json_agg_transfn called in non-aggregate context");

}

if (PG_ARGISNULL(0))

{

/*

* Make this StringInfo in a context where it will persist for the

* duration of the aggregate call. It's only needed for this initial

* piece, as the StringInfo routines make sure they use the right

* context to enlarge the object if necessary.

*/

oldcontext = MemoryContextSwitchTo(aggcontext);

state = makeStringInfo();

MemoryContextSwitchTo(oldcontext);

appendStringInfoChar(state, '[');

}

else

{

state = (StringInfo) PG_GETARG_POINTER(0);

appendStringInfoString(state, ", ");

}

/* fast path for NULLs */

if (PG_ARGISNULL(1))

{

orig_val = (Datum) 0;

datum_to_json(orig_val, true, state, 0, InvalidOid);

PG_RETURN_POINTER(state);

}

orig_val = PG_GETARG_DATUM(1);

getTypeOutputInfo(val_type, &typoutput, &typisvarlena);

if (val_type > FirstNormalObjectId)

{

HeapTuple tuple;

Form_pg_cast castForm;

tuple = SearchSysCache2(CASTSOURCETARGET,

ObjectIdGetDatum(val_type),

ObjectIdGetDatum(JSONOID));

if (HeapTupleIsValid(tuple))

{

castForm = (Form_pg_cast) GETSTRUCT(tuple);

if (castForm->castmethod == COERCION_METHOD_FUNCTION)

castfunc = typoutput = castForm->castfunc;

ReleaseSysCache(tuple);

}

}

if (castfunc != InvalidOid)

tcategory = TYPCATEGORY_JSON_CAST;

else if (val_type == RECORDARRAYOID)

tcategory = TYPCATEGORY_ARRAY;

else if (val_type == RECORDOID)

tcategory = TYPCATEGORY_COMPOSITE;

else if (val_type == JSONOID)

tcategory = TYPCATEGORY_JSON;

else

tcategory = TypeCategory(val_type);

/*

* If we have a toasted datum, forcibly detoast it here to avoid memory

* leakage inside the type's output routine.

*/

if (typisvarlena)

val = PointerGetDatum(PG_DETOAST_DATUM(orig_val));

else

val = orig_val;

if (!PG_ARGISNULL(0) &&

(tcategory == TYPCATEGORY_ARRAY || tcategory == TYPCATEGORY_COMPOSITE))

{

appendStringInfoString(state, "\n ");

}

datum_to_json(val, false, state, tcategory, typoutput);

/* Clean up detoasted copy, if any */

if (val != orig_val)

pfree(DatumGetPointer(val));

/*

* The transition type for json_agg() is declared to be "internal", which

* is a pass-by-value type the same size as a pointer. So we can safely

* pass the StringInfo pointer through nodeAgg.c's machinations.

*/

PG_RETURN_POINTER(state);

}
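Taken together, json_agg_transfn() and json_agg_finalfn() build the result incrementally: the first row opens the array, later rows add a `", "` separator, and the final function closes the bracket or returns NULL when no row was seen. The sketch below models only that protocol. `AggState`, `agg_transition`, and `agg_final` are hypothetical names, values are assumed to arrive already rendered as JSON text, and a fixed buffer replaces the `StringInfo` allocated in the aggregate memory context.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

/* Hypothetical aggregate state standing in for the StringInfo in json.c. */
typedef struct
{
    char    data[256];
    size_t  len;
    bool    started;
} AggState;

static void
agg_append(AggState *st, const char *text)
{
    size_t n = strlen(text);

    assert(st->len + n < sizeof(st->data));
    memcpy(st->data + st->len, text, n + 1);
    st->len += n;
}

/* Transition step: json_value is pre-rendered JSON, or NULL for SQL NULL. */
static void
agg_transition(AggState *st, const char *json_value)
{
    if (!st->started)
    {
        agg_append(st, "[");
        st->started = true;
    }
    else
        agg_append(st, ", ");
    agg_append(st, json_value != NULL ? json_value : "null");
}

/* Final step: close the array, or report "no rows" by returning NULL. */
static const char *
agg_final(AggState *st)
{
    if (!st->started)
        return NULL;
    agg_append(st, "]");
    return st->data;
}
```

The sketch omits the extra `"\n "` that the transition function inserts before array and composite values after the first row.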

Datum json_in(PG_FUNCTION_ARGS)

Definition at line 165 of file json.c.

References cstring_to_text(), makeJsonLexContext(), PG_GETARG_CSTRING, pg_parse_json(), and PG_RETURN_TEXT_P.

{

char *json = PG_GETARG_CSTRING(0);

text *result = cstring_to_text(json);

JsonLexContext *lex;

/* validate it */

lex = makeJsonLexContext(result, false);

pg_parse_json(lex, NullSemAction);

/* Internal representation is the same as text, for now */

PG_RETURN_TEXT_P(result);

}

static void json_lex(JsonLexContext *lex) [inline, static]

Definition at line 504 of file json.c.

References JsonLexContext::input, JsonLexContext::input_length, JSON_ALPHANUMERIC_CHAR, json_lex_number(), json_lex_string(), JsonLexContext::line_number, memcmp(), JsonLexContext::prev_token_terminator, report_invalid_token(), JsonLexContext::token_start, JsonLexContext::token_terminator, and JsonLexContext::token_type.

Referenced by lex_accept(), and pg_parse_json().

{

char *s;

int len;

/* Skip leading whitespace. */

s = lex->token_terminator;

len = s - lex->input;

while (len < lex->input_length &&

(*s == ' ' || *s == '\t' || *s == '\n' || *s == '\r'))

{

if (*s == '\n')

++lex->line_number;

++s;

++len;

}

lex->token_start = s;

/* Determine token type. */

if (len >= lex->input_length)

{

lex->token_start = NULL;

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s;

lex->token_type = JSON_TOKEN_END;

}

else

switch (*s)

{

/* Single-character token, some kind of punctuation mark. */

case '{':

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

lex->token_type = JSON_TOKEN_OBJECT_START;

break;

case '}':

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

lex->token_type = JSON_TOKEN_OBJECT_END;

break;

case '[':

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

lex->token_type = JSON_TOKEN_ARRAY_START;

break;

case ']':

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

lex->token_type = JSON_TOKEN_ARRAY_END;

break;

case ',':

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

lex->token_type = JSON_TOKEN_COMMA;

break;

case ':':

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

lex->token_type = JSON_TOKEN_COLON;

break;

case '"':

/* string */

json_lex_string(lex);

lex->token_type = JSON_TOKEN_STRING;

break;

case '-':

/* Negative number. */

json_lex_number(lex, s + 1);

lex->token_type = JSON_TOKEN_NUMBER;

break;

case '0':

case '1':

case '2':

case '3':

case '4':

case '5':

case '6':

case '7':

case '8':

case '9':

/* Positive number. */

json_lex_number(lex, s);

lex->token_type = JSON_TOKEN_NUMBER;

break;

default:

{

char *p;

/*

* We're not dealing with a string, number, legal

* punctuation mark, or end of string. The only legal

* tokens we might find here are true, false, and null,

* but for error reporting purposes we scan until we see a

* non-alphanumeric character. That way, we can report

* the whole word as an unexpected token, rather than just

* some unintuitive prefix thereof.

*/

for (p = s; JSON_ALPHANUMERIC_CHAR(*p) && p - s < lex->input_length - len; p++)

/* skip */ ;

/*

* We got some sort of unexpected punctuation or an

* otherwise unexpected character, so just complain about

* that one character.

*/

if (p == s)

{

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

report_invalid_token(lex);

}

/*

* We've got a real alphanumeric token here. If it

* happens to be true, false, or null, all is well. If

* not, error out.

*/

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = p;

if (p - s == 4)

{

if (memcmp(s, "true", 4) == 0)

lex->token_type = JSON_TOKEN_TRUE;

else if (memcmp(s, "null", 4) == 0)

lex->token_type = JSON_TOKEN_NULL;

else

report_invalid_token(lex);

}

else if (p - s == 5 && memcmp(s, "false", 5) == 0)

lex->token_type = JSON_TOKEN_FALSE;

else

report_invalid_token(lex);

}

} /* end of switch */

}
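The default branch of json_lex() first measures the word and only then compares it, by length, against the three legal keywords. A small sketch of that classification step is shown here; `WordKind` and `classify_word` are hypothetical names, and returning `WORD_INVALID` stands in for the call to `report_invalid_token()`.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical result type; json.c sets lex->token_type instead. */
typedef enum
{
    WORD_TRUE,
    WORD_FALSE,
    WORD_NULL,
    WORD_INVALID
} WordKind;

/* Classify the len-byte word starting at s; s need not be NUL-terminated. */
static WordKind
classify_word(const char *s, size_t len)
{
    assert(s != NULL);
    if (len == 4)
    {
        if (memcmp(s, "true", 4) == 0)
            return WORD_TRUE;
        if (memcmp(s, "null", 4) == 0)
            return WORD_NULL;
        return WORD_INVALID;
    }
    if (len == 5 && memcmp(s, "false", 5) == 0)
        return WORD_FALSE;
    return WORD_INVALID;
}
```

Checking the length before calling `memcmp()` means a longer word such as `trueish` is rejected as a whole rather than accepted as a prefix match.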

static void json_lex_number(JsonLexContext *lex, char *s) [inline, static]

Definition at line 824 of file json.c.

References error(), JsonLexContext::input, JsonLexContext::input_length, JSON_ALPHANUMERIC_CHAR, JsonLexContext::prev_token_terminator, report_invalid_token(), and JsonLexContext::token_terminator.

Referenced by json_lex().

{

bool error = false;

char *p;

int len;

len = s - lex->input;

/* Part (1): leading sign indicator. */

/* Caller already did this for us; so do nothing. */

/* Part (2): parse main digit string. */

if (*s == '0')

{

s++;

len++;

}

else if (*s >= '1' && *s <= '9')

{

do

{

s++;

len++;

} while (*s >= '0' && *s <= '9' && len < lex->input_length);

}

else

error = true;

/* Part (3): parse optional decimal portion. */

if (len < lex->input_length && *s == '.')

{

s++;

len++;

if (len == lex->input_length || *s < '0' || *s > '9')

error = true;

else

{

do

{

s++;

len++;

} while (*s >= '0' && *s <= '9' && len < lex->input_length);

}

}

/* Part (4): parse optional exponent. */

if (len < lex->input_length && (*s == 'e' || *s == 'E'))

{

s++;

len++;

if (len < lex->input_length && (*s == '+' || *s == '-'))

{

s++;

len++;

}

if (len == lex->input_length || *s < '0' || *s > '9')

error = true;

else

{

do

{

s++;

len++;

} while (len < lex->input_length && *s >= '0' && *s <= '9');

}

}

/*

* Check for trailing garbage. As in json_lex(), any alphanumeric stuff

* here should be considered part of the token for error-reporting

* purposes.

*/

for (p = s; JSON_ALPHANUMERIC_CHAR(*p) && len < lex->input_length; p++, len++)

error = true;

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = p;

if (error)

report_invalid_token(lex);

}
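The four parts handled by json_lex_number() (sign, main digits, optional fraction, optional exponent) can be expressed as a bounded validator. This is a sketch under assumptions rather than the function itself: `is_json_number` is a hypothetical name, it consumes the leading minus sign itself (in json.c the caller does that), and it returns a boolean where the original reports an invalid token.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

static bool
is_digit(char c)
{
    return c >= '0' && c <= '9';
}

/* Hypothetical helper: true if the n bytes at s form exactly one JSON number. */
static bool
is_json_number(const char *s, size_t n)
{
    size_t i = 0;

    assert(s != NULL);

    /* Part 1: optional leading minus sign. */
    if (i < n && s[i] == '-')
        i++;

    /* Part 2: a single '0', or a nonzero digit followed by more digits. */
    if (i < n && s[i] == '0')
        i++;
    else if (i < n && s[i] >= '1' && s[i] <= '9')
    {
        while (i < n && is_digit(s[i]))
            i++;
    }
    else
        return false;

    /* Part 3: optional fraction, which needs at least one digit. */
    if (i < n && s[i] == '.')
    {
        i++;
        if (i >= n || !is_digit(s[i]))
            return false;
        while (i < n && is_digit(s[i]))
            i++;
    }

    /* Part 4: optional exponent with optional sign and at least one digit. */
    if (i < n && (s[i] == 'e' || s[i] == 'E'))
    {
        i++;
        if (i < n && (s[i] == '+' || s[i] == '-'))
            i++;
        if (i >= n || !is_digit(s[i]))
            return false;
        while (i < n && is_digit(s[i]))
            i++;
    }

    /* Anything left over is trailing garbage. */
    return i == n;
}
```

Every read of `s[i]` is preceded by an `i < n` check, so the sketch never looks past the stated input length.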

static void json_lex_string(JsonLexContext *lex) [inline, static]

Definition at line 645 of file json.c.

References appendStringInfoChar(), appendStringInfoString(), ereport, errcode(), errdetail(), errmsg(), ERROR, extract_mb_char(), i, JsonLexContext::input, JsonLexContext::input_length, NULL, pfree(), pg_any_to_server(), pg_mblen(), PG_UTF8, pg_utf_mblen(), JsonLexContext::prev_token_terminator, report_invalid_token(), report_json_context(), resetStringInfo(), JsonLexContext::strval, JsonLexContext::token_start, JsonLexContext::token_terminator, and unicode_to_utf8().

Referenced by json_lex().

{

char *s;

int len;

if (lex->strval != NULL)

resetStringInfo(lex->strval);

len = lex->token_start - lex->input;

len++;

for (s = lex->token_start + 1; *s != '"'; s++, len++)

{

/* Premature end of the string. */

if (len >= lex->input_length)

{

lex->token_terminator = s;

report_invalid_token(lex);

}

else if ((unsigned char) *s < 32)

{

/* Per RFC4627, these characters MUST be escaped. */

/* Since *s isn't printable, exclude it from the context string */

lex->token_terminator = s;

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Character with value 0x%02x must be escaped.",

(unsigned char) *s),

report_json_context(lex)));

}

else if (*s == '\\')

{

/* OK, we have an escape character. */

s++;

len++;

if (len >= lex->input_length)

{

lex->token_terminator = s;

report_invalid_token(lex);

}

else if (*s == 'u')

{

int i;

int ch = 0;

for (i = 1; i <= 4; i++)

{

s++;

len++;

if (len >= lex->input_length)

{

lex->token_terminator = s;

report_invalid_token(lex);

}

else if (*s >= '0' && *s <= '9')

ch = (ch * 16) + (*s - '0');

else if (*s >= 'a' && *s <= 'f')

ch = (ch * 16) + (*s - 'a') + 10;

else if (*s >= 'A' && *s <= 'F')

ch = (ch * 16) + (*s - 'A') + 10;

else

{

lex->token_terminator = s + pg_mblen(s);

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("\"\\u\" must be followed by four hexadecimal digits."),

report_json_context(lex)));

}

}

if (lex->strval != NULL)

{

char utf8str[5];

int utf8len;

char *converted;

unicode_to_utf8(ch, (unsigned char *) utf8str);

utf8len = pg_utf_mblen((unsigned char *) utf8str);

utf8str[utf8len] = '\0';

converted = pg_any_to_server(utf8str, utf8len, PG_UTF8);

appendStringInfoString(lex->strval, converted);

if (converted != utf8str)

pfree(converted);

}

}

else if (lex->strval != NULL)

{

switch (*s)

{

case '"':

case '\\':

case '/':

appendStringInfoChar(lex->strval, *s);

break;

case 'b':

appendStringInfoChar(lex->strval, '\b');

break;

case 'f':

appendStringInfoChar(lex->strval, '\f');

break;

case 'n':

appendStringInfoChar(lex->strval, '\n');

break;

case 'r':

appendStringInfoChar(lex->strval, '\r');

break;

case 't':

appendStringInfoChar(lex->strval, '\t');

break;

default:

/* Not a valid string escape, so error out. */

lex->token_terminator = s + pg_mblen(s);

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Escape sequence \"\\%s\" is invalid.",

extract_mb_char(s)),

report_json_context(lex)));

}

}

else if (strchr("\"\\/bfnrt", *s) == NULL)

{

/*

* Simpler processing if we're not bothered about de-escaping

*

* It's very tempting to remove the strchr() call here and

* replace it with a switch statement, but testing so far has

* shown it's not a performance win.

*/

lex->token_terminator = s + pg_mblen(s);

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Escape sequence \"\\%s\" is invalid.",

extract_mb_char(s)),

report_json_context(lex)));

}

}

else if (lex->strval != NULL)

{

appendStringInfoChar(lex->strval, *s);

}

}

/* Hooray, we found the end of the string! */

lex->prev_token_terminator = lex->token_terminator;

lex->token_terminator = s + 1;

}
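The `\u` branch of json_lex_string() accumulates four hexadecimal digits into one integer before converting it to UTF-8. The sketch below isolates that accumulation. `parse_hex4` is a hypothetical helper that returns -1 where the original raises an error, and it stops at the numeric value without performing the encoding conversion.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Hypothetical helper: decode exactly four hex digits at s (n bytes are
 * available). Returns the value 0..0xFFFF, or -1 on short or invalid input.
 */
static int
parse_hex4(const char *s, size_t n)
{
    int ch = 0;
    int i;

    assert(s != NULL);
    if (n < 4)
        return -1;
    for (i = 0; i < 4; i++)
    {
        char c = s[i];

        if (c >= '0' && c <= '9')
            ch = (ch * 16) + (c - '0');
        else if (c >= 'a' && c <= 'f')
            ch = (ch * 16) + (c - 'a') + 10;
        else if (c >= 'A' && c <= 'F')
            ch = (ch * 16) + (c - 'A') + 10;
        else
            return -1;          /* "\u" must be followed by four hex digits */
    }
    return ch;
}
```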

Datum json_out(PG_FUNCTION_ARGS)

Definition at line 183 of file json.c.

References PG_GETARG_DATUM, PG_RETURN_CSTRING, and TextDatumGetCString.

{

/* we needn't detoast because text_to_cstring will handle that */

Datum txt = PG_GETARG_DATUM(0);

PG_RETURN_CSTRING(TextDatumGetCString(txt));

}

Datum json_recv(PG_FUNCTION_ARGS)

Definition at line 209 of file json.c.

References buf, StringInfoData::cursor, StringInfoData::len, makeJsonLexContext(), palloc(), PG_GETARG_POINTER, pg_parse_json(), PG_RETURN_TEXT_P, pq_getmsgtext(), SET_VARSIZE, VARDATA, and VARHDRSZ.

{

StringInfo buf = (StringInfo) PG_GETARG_POINTER(0);

text *result;

char *str;

int nbytes;

JsonLexContext *lex;

str = pq_getmsgtext(buf, buf->len - buf->cursor, &nbytes);

result = palloc(nbytes + VARHDRSZ);

SET_VARSIZE(result, nbytes + VARHDRSZ);

memcpy(VARDATA(result), str, nbytes);

/* Validate it. */

lex = makeJsonLexContext(result, false);

pg_parse_json(lex, NullSemAction);

PG_RETURN_TEXT_P(result);

}

Datum json_send(PG_FUNCTION_ARGS)

Definition at line 195 of file json.c.

References buf, PG_GETARG_TEXT_PP, PG_RETURN_BYTEA_P, pq_begintypsend(), pq_endtypsend(), pq_sendtext(), VARDATA_ANY, and VARSIZE_ANY_EXHDR.

{

text *t = PG_GETARG_TEXT_PP(0);

StringInfoData buf;

pq_begintypsend(&buf);

pq_sendtext(&buf, VARDATA_ANY(t), VARSIZE_ANY_EXHDR(t));

PG_RETURN_BYTEA_P(pq_endtypsend(&buf));

}

static bool lex_accept(JsonLexContext *lex, JsonTokenType token, char **lexeme) [inline, static]

Definition at line 103 of file json.c.

References StringInfoData::data, json_lex(), JSON_TOKEN_STRING, NULL, palloc(), pstrdup(), JsonLexContext::strval, JsonLexContext::token_start, JsonLexContext::token_terminator, and JsonLexContext::token_type.

Referenced by lex_expect(), parse_array(), parse_object(), parse_object_field(), and parse_scalar().

{

if (lex->token_type == token)

{

if (lexeme != NULL)

{

if (lex->token_type == JSON_TOKEN_STRING)

{

if (lex->strval != NULL)

*lexeme = pstrdup(lex->strval->data);

}

else

{

int len = (lex->token_terminator - lex->token_start);

char *tokstr = palloc(len + 1);

memcpy(tokstr, lex->token_start, len);

tokstr[len] = '\0';

*lexeme = tokstr;

}

}

json_lex(lex);

return true;

}

return false;

}

static void lex_expect(JsonParseContext ctx, JsonLexContext *lex, JsonTokenType token) [inline, static]

Definition at line 137 of file json.c.

References lex_accept(), NULL, and report_parse_error().

Referenced by parse_array(), parse_object(), parse_object_field(), and pg_parse_json().

{

if (!lex_accept(lex, token, NULL))

report_parse_error(ctx, lex);

}

static JsonTokenType lex_peek(JsonLexContext *lex) [inline, static]

Definition at line 88 of file json.c.

References JsonLexContext::token_type.

Referenced by parse_array(), parse_array_element(), parse_object(), parse_object_field(), parse_scalar(), and pg_parse_json().

{

return lex->token_type;

}

JsonLexContext* makeJsonLexContext(text *json, bool need_escapes)

Definition at line 240 of file json.c.

References JsonLexContext::input, JsonLexContext::input_length, JsonLexContext::line_number, JsonLexContext::line_start, makeStringInfo(), palloc0(), JsonLexContext::strval, JsonLexContext::token_terminator, VARDATA, and VARSIZE.

Referenced by each_worker(), get_json_object_as_hash(), get_worker(), json_array_elements(), json_array_length(), json_in(), json_object_keys(), json_populate_recordset(), and json_recv().

{

JsonLexContext *lex = palloc0(sizeof(JsonLexContext));

lex->input = lex->token_terminator = lex->line_start = VARDATA(json);

lex->line_number = 1;

lex->input_length = VARSIZE(json) - VARHDRSZ;

if (need_escapes)

lex->strval = makeStringInfo();

return lex;

}

static void parse_array(JsonLexContext *lex, JsonSemAction sem) [static]

Definition at line 462 of file json.c.

References jsonSemAction::array_end, jsonSemAction::array_start, JSON_PARSE_ARRAY_NEXT, JSON_PARSE_ARRAY_START, JSON_TOKEN_ARRAY_END, JSON_TOKEN_ARRAY_START, JSON_TOKEN_COMMA, lex_accept(), lex_expect(), JsonLexContext::lex_level, lex_peek(), NULL, parse_array_element(), and jsonSemAction::semstate.

Referenced by parse_array_element(), parse_object_field(), and pg_parse_json().

{

/*

* an array is a possibly empty sequence of array elements, separated by

* commas and surrounded by square brackets.

*/

json_struct_action astart = sem->array_start;

json_struct_action aend = sem->array_end;

if (astart != NULL)

(*astart) (sem->semstate);

/*

* Data inside an array is at a higher nesting level than the array

* itself. Note that we increment this after we call the semantic routine

* for the array start and restore it before we call the routine for the

* array end.

*/

lex->lex_level++;

lex_expect(JSON_PARSE_ARRAY_START, lex, JSON_TOKEN_ARRAY_START);

if (lex_peek(lex) != JSON_TOKEN_ARRAY_END)

{

parse_array_element(lex, sem);

while (lex_accept(lex, JSON_TOKEN_COMMA, NULL))

parse_array_element(lex, sem);

}

lex_expect(JSON_PARSE_ARRAY_NEXT, lex, JSON_TOKEN_ARRAY_END);

lex->lex_level--;

if (aend != NULL)

(*aend) (sem->semstate);

}
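The ordering described in the comment above (call the start routine, then raise the level; lower the level, then call the end routine) can be tried outside PostgreSQL with a toy parser. The following is a minimal standalone sketch, not the json.c implementation: it understands only square brackets and commas, and `ToyLexer`, `ToyActions`, `DepthStats`, `toy_parse_array` and `toy_measure` are hypothetical names introduced for illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-ins for JsonLexContext and jsonSemAction. */
typedef struct ToyLexer
{
    const char *pos;            /* next unread character */
    int         lex_level;      /* current nesting depth */
} ToyLexer;

typedef void (*toy_struct_action) (void *state, int level);

typedef struct ToyActions
{
    void       *semstate;
    toy_struct_action array_start;  /* may be NULL */
    toy_struct_action array_end;    /* may be NULL */
} ToyActions;

typedef struct DepthStats
{
    int         starts;
    int         ends;
    int         max_start_level;
} DepthStats;

static void
record_start(void *state, int level)
{
    DepthStats *stats = state;

    stats->starts++;
    if (level > stats->max_start_level)
        stats->max_start_level = level;
}

static void
record_end(void *state, int level)
{
    (void) level;
    ((DepthStats *) state)->ends++;
}

/* Parse '[' [array (',' array)*] ']'; returns 1 on success, 0 on error. */
static int
toy_parse_array(ToyLexer *lex, const ToyActions *sem)
{
    assert(lex != NULL && sem != NULL);
    if (*lex->pos != '[')
        return 0;

    /* The start hook sees the level of the array itself ... */
    if (sem->array_start != NULL)
        sem->array_start(sem->semstate, lex->lex_level);

    /* ... and only then does the level go up for the contents. */
    lex->lex_level++;
    lex->pos++;                 /* consume '[' */

    if (*lex->pos != ']')
    {
        for (;;)
        {
            if (!toy_parse_array(lex, sem))
                return 0;
            if (*lex->pos != ',')
                break;
            lex->pos++;         /* consume ',' */
        }
    }
    if (*lex->pos != ']')
        return 0;
    lex->pos++;                 /* consume ']' */

    /* Restore the level before the end hook runs. */
    lex->lex_level--;
    if (sem->array_end != NULL)
        sem->array_end(sem->semstate, lex->lex_level);
    return 1;
}

/* Run the toy parser over input and collect statistics. */
static int
toy_measure(const char *input, DepthStats *stats)
{
    ToyLexer    lex = {input, 0};
    ToyActions  sem = {stats, record_start, record_end};

    assert(input != NULL && stats != NULL);
    stats->starts = stats->ends = stats->max_start_level = 0;
    return toy_parse_array(&lex, &sem) && *lex.pos == '\0' && lex.lex_level == 0;
}
```

Because the level is raised only after the start hook, the outermost array is reported at level 0 and its direct children at level 1.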

| static void parse_array_element | ( | JsonLexContext * | lex, | JsonSemAction | sem | ) | [static] |

Definition at line 431 of file json.c.

References jsonSemAction::array_element_end, jsonSemAction::array_element_start, JSON_TOKEN_ARRAY_START, JSON_TOKEN_OBJECT_START, lex_peek(), NULL, parse_array(), parse_object(), parse_scalar(), and jsonSemAction::semstate.

Referenced by parse_array().

{

json_aelem_action astart = sem->array_element_start;

json_aelem_action aend = sem->array_element_end;

JsonTokenType tok = lex_peek(lex);

bool isnull;

isnull = tok == JSON_TOKEN_NULL;

if (astart != NULL)

(*astart) (sem->semstate, isnull);

/* an array element is any object, array or scalar */

switch (tok)

{

case JSON_TOKEN_OBJECT_START:

parse_object(lex, sem);

break;

case JSON_TOKEN_ARRAY_START:

parse_array(lex, sem);

break;

default:

parse_scalar(lex, sem);

}

if (aend != NULL)

(*aend) (sem->semstate, isnull);

}

| static void parse_object | ( | JsonLexContext * | lex, | JsonSemAction | sem | ) | [static] |

Definition at line 383 of file json.c.

References JSON_PARSE_OBJECT_NEXT, JSON_PARSE_OBJECT_START, JSON_TOKEN_COMMA, JSON_TOKEN_OBJECT_END, JSON_TOKEN_OBJECT_START, JSON_TOKEN_STRING, lex_accept(), lex_expect(), JsonLexContext::lex_level, lex_peek(), NULL, jsonSemAction::object_end, jsonSemAction::object_start, parse_object_field(), report_parse_error(), and jsonSemAction::semstate.

Referenced by parse_array_element(), parse_object_field(), and pg_parse_json().

{

/*

* an object is a possibly empty sequence of object fields, separated by

* commas and surrounded by curly braces.

*/

json_struct_action ostart = sem->object_start;

json_struct_action oend = sem->object_end;

JsonTokenType tok;

if (ostart != NULL)

(*ostart) (sem->semstate);

/*

* Data inside an object is at a higher nesting level than the object

* itself. Note that we increment this after we call the semantic routine

* for the object start and restore it before we call the routine for the

* object end.

*/

lex->lex_level++;

/* we know this will succeed, just clearing the token */

lex_expect(JSON_PARSE_OBJECT_START, lex, JSON_TOKEN_OBJECT_START);

tok = lex_peek(lex);

switch (tok)

{

case JSON_TOKEN_STRING:

parse_object_field(lex, sem);

while (lex_accept(lex, JSON_TOKEN_COMMA, NULL))

parse_object_field(lex, sem);

break;

case JSON_TOKEN_OBJECT_END:

break;

default:

/* case of an invalid initial token inside the object */

report_parse_error(JSON_PARSE_OBJECT_START, lex);

}

lex_expect(JSON_PARSE_OBJECT_NEXT, lex, JSON_TOKEN_OBJECT_END);

lex->lex_level--;

if (oend != NULL)

(*oend) (sem->semstate);

}

| static void parse_object_field | ( | JsonLexContext * | lex, | JsonSemAction | sem | ) | [static] |

Definition at line 335 of file json.c.

References JSON_PARSE_OBJECT_LABEL, JSON_PARSE_STRING, JSON_TOKEN_ARRAY_START, JSON_TOKEN_COLON, JSON_TOKEN_OBJECT_START, JSON_TOKEN_STRING, lex_accept(), lex_expect(), lex_peek(), NULL, jsonSemAction::object_field_end, jsonSemAction::object_field_start, parse_array(), parse_object(), parse_scalar(), pfree(), report_parse_error(), and jsonSemAction::semstate.

Referenced by parse_object().

{

/*

* an object field is "fieldname" : value where value can be a scalar,

* object or array

*/

char *fname = NULL; /* keep compiler quiet */

json_ofield_action ostart = sem->object_field_start;

json_ofield_action oend = sem->object_field_end;

bool isnull;

char **fnameaddr = NULL;

JsonTokenType tok;

if (ostart != NULL || oend != NULL)

fnameaddr = &fname;

if (!lex_accept(lex, JSON_TOKEN_STRING, fnameaddr))

report_parse_error(JSON_PARSE_STRING, lex);

lex_expect(JSON_PARSE_OBJECT_LABEL, lex, JSON_TOKEN_COLON);

tok = lex_peek(lex);

isnull = tok == JSON_TOKEN_NULL;

if (ostart != NULL)

(*ostart) (sem->semstate, fname, isnull);

switch (tok)

{

case JSON_TOKEN_OBJECT_START:

parse_object(lex, sem);

break;

case JSON_TOKEN_ARRAY_START:

parse_array(lex, sem);

break;

default:

parse_scalar(lex, sem);

}

if (oend != NULL)

(*oend) (sem->semstate, fname, isnull);

if (fname != NULL)

pfree(fname);

}

| static void parse_scalar | ( | JsonLexContext * | lex, | JsonSemAction | sem | ) | [inline, static] |

Definition at line 299 of file json.c.

References JSON_PARSE_VALUE, JSON_TOKEN_FALSE, JSON_TOKEN_NULL, JSON_TOKEN_NUMBER, JSON_TOKEN_STRING, JSON_TOKEN_TRUE, lex_accept(), lex_peek(), NULL, report_parse_error(), jsonSemAction::scalar, jsonSemAction::semstate, and val.

Referenced by parse_array_element(), parse_object_field(), and pg_parse_json().

{

char *val = NULL;

json_scalar_action sfunc = sem->scalar;

char **valaddr;

JsonTokenType tok = lex_peek(lex);

valaddr = sfunc == NULL ? NULL : &val;

/* a scalar must be a string, a number, true, false, or null */

switch (tok)

{

case JSON_TOKEN_TRUE:

lex_accept(lex, JSON_TOKEN_TRUE, valaddr);

break;

case JSON_TOKEN_FALSE:

lex_accept(lex, JSON_TOKEN_FALSE, valaddr);

break;

case JSON_TOKEN_NULL:

lex_accept(lex, JSON_TOKEN_NULL, valaddr);

break;

case JSON_TOKEN_NUMBER:

lex_accept(lex, JSON_TOKEN_NUMBER, valaddr);

break;

case JSON_TOKEN_STRING:

lex_accept(lex, JSON_TOKEN_STRING, valaddr);

break;

default:

report_parse_error(JSON_PARSE_VALUE, lex);

}

if (sfunc != NULL)

(*sfunc) (sem->semstate, val, tok);

}
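In the listing above, `valaddr` is NULL whenever no scalar routine is installed, which lets the lexer skip materializing the token text. The pattern can be sketched in isolation as follows. This is a simplified illustration under stated assumptions, not the PostgreSQL code: `toy_accept_word`, `toy_parse_scalar` and `toy_record_length` are hypothetical, tokens are plain alphanumeric runs, memory comes from `malloc` rather than `palloc`, and, unlike the listing, the sketch frees the copy itself after the callback returns.

```c
#include <assert.h>
#include <ctype.h>
#include <stdlib.h>
#include <string.h>

typedef void (*toy_scalar_action) (void *state, const char *value);

/*
 * Consume one run of alphanumeric characters at *pos.  The token text is
 * copied only when the caller passes a non-NULL lexeme pointer.
 * Returns the token length (0 if there is no token).
 */
static size_t
toy_accept_word(const char **pos, char **lexeme)
{
    const char *start;
    size_t      len;

    assert(pos != NULL && *pos != NULL);
    start = *pos;
    while (isalnum((unsigned char) **pos))
        (*pos)++;
    len = (size_t) (*pos - start);

    if (lexeme != NULL && len > 0)
    {
        char       *copy = malloc(len + 1);

        if (copy == NULL)
            abort();
        memcpy(copy, start, len);
        copy[len] = '\0';
        *lexeme = copy;
    }
    return len;
}

/* Returns 1 if a scalar was consumed, 0 otherwise. */
static int
toy_parse_scalar(const char **pos, toy_scalar_action sfunc, void *state)
{
    char       *val = NULL;
    char      **valaddr = (sfunc == NULL) ? NULL : &val;

    if (toy_accept_word(pos, valaddr) == 0)
        return 0;
    if (sfunc != NULL)
        sfunc(state, val);
    free(val);                  /* free(NULL) is a no-op */
    return 1;
}

/* Example callback: store the length of the scalar text. */
static void
toy_record_length(void *state, const char *value)
{
    *(size_t *) state = strlen(value);
}
```

Calling `toy_parse_scalar(&p, NULL, NULL)` still advances `p` past the token but never allocates, which is the saving the NULL check is meant to buy.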

| void pg_parse_json | ( | JsonLexContext * | lex, | JsonSemAction | sem | ) |

Definition at line 263 of file json.c.

References json_lex(), JSON_PARSE_END, JSON_TOKEN_ARRAY_START, JSON_TOKEN_END, JSON_TOKEN_OBJECT_START, lex_expect(), lex_peek(), parse_array(), parse_object(), and parse_scalar().

Referenced by each_worker(), get_json_object_as_hash(), get_worker(), json_array_elements(), json_array_length(), json_in(), json_object_keys(), json_populate_recordset(), and json_recv().

{

JsonTokenType tok;

/* get the initial token */

json_lex(lex);

tok = lex_peek(lex);

/* parse by recursive descent */

switch (tok)

{

case JSON_TOKEN_OBJECT_START:

parse_object(lex, sem);

break;

case JSON_TOKEN_ARRAY_START:

parse_array(lex, sem);

break;

default:

parse_scalar(lex, sem); /* json can be a bare scalar */

}

lex_expect(JSON_PARSE_END, lex, JSON_TOKEN_END);

}

| static void report_invalid_token | ( | JsonLexContext * | lex | ) | [static] |

Definition at line 1016 of file json.c.

References ereport, errcode(), errdetail(), errmsg(), ERROR, palloc(), report_json_context(), JsonLexContext::token_start, and JsonLexContext::token_terminator.

Referenced by json_lex(), json_lex_number(), and json_lex_string().

{

char *token;

int toklen;

/* Separate out the offending token. */

toklen = lex->token_terminator - lex->token_start;

token = palloc(toklen + 1);

memcpy(token, lex->token_start, toklen);

token[toklen] = '\0';

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Token \"%s\" is invalid.", token),

report_json_context(lex)));

}

| static int report_json_context | ( | JsonLexContext * | lex | ) | [static] |

Definition at line 1045 of file json.c.

References errcontext, JsonLexContext::input, JsonLexContext::input_length, IS_HIGHBIT_SET, JSON_TOKEN_END, palloc(), pg_mblen(), JsonLexContext::token_terminator, and JsonLexContext::token_type.

Referenced by json_lex_string(), report_invalid_token(), and report_parse_error().

{

const char *context_start;

const char *context_end;

const char *line_start;

int line_number;

char *ctxt;

int ctxtlen;

const char *prefix;

const char *suffix;

/* Choose boundaries for the part of the input we will display */

context_start = lex->input;

context_end = lex->token_terminator;

line_start = context_start;

line_number = 1;

for (;;)

{

/* Always advance over newlines (context_end test is just paranoia) */

if (*context_start == '\n' && context_start < context_end)

{

context_start++;

line_start = context_start;

line_number++;

continue;

}

/* Otherwise, done as soon as we are close enough to context_end */

if (context_end - context_start < 50)

break;

/* Advance to next multibyte character */

if (IS_HIGHBIT_SET(*context_start))

context_start += pg_mblen(context_start);

else

context_start++;

}

/*

* We add "..." to indicate that the excerpt doesn't start at the

* beginning of the line ... but if we're within 3 characters of the

* beginning of the line, we might as well just show the whole line.

*/

if (context_start - line_start <= 3)

context_start = line_start;

/* Get a null-terminated copy of the data to present */

ctxtlen = context_end - context_start;

ctxt = palloc(ctxtlen + 1);

memcpy(ctxt, context_start, ctxtlen);

ctxt[ctxtlen] = '\0';

/*

* Show the context, prefixing "..." if not starting at start of line, and

* suffixing "..." if not ending at end of line.

*/

prefix = (context_start > line_start) ? "..." : "";

suffix = (lex->token_type != JSON_TOKEN_END && context_end - lex->input < lex->input_length && *context_end != '\n' && *context_end != '\r') ? "..." : "";

return errcontext("JSON data, line %d: %s%s%s",

line_number, prefix, ctxt, suffix);

}
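The excerpt selection in `report_json_context()` can be exercised on its own with a simplified version. The sketch below is an approximation under stated assumptions: it handles single-byte text only (no `pg_mblen()`), takes a NUL-terminated string instead of a `JsonLexContext`, decides the suffix from the character at `context_end` alone, and writes into a caller-supplied buffer. `toy_error_context` is a hypothetical name.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/*
 * Write an excerpt of input ending at context_end into out, and return the
 * line number on which the excerpt starts.
 */
static int
toy_error_context(const char *input, const char *context_end,
                  char *out, size_t outsize)
{
    const char *context_start = input;
    const char *line_start = input;
    const char *prefix;
    const char *suffix;
    int         line_number = 1;

    assert(input != NULL && out != NULL && outsize > 0);
    assert(context_end >= input && context_end <= input + strlen(input));

    for (;;)
    {
        /* Always advance over newlines, starting a new line each time. */
        if (context_start < context_end && *context_start == '\n')
        {
            context_start++;
            line_start = context_start;
            line_number++;
            continue;
        }
        /* Done as soon as fewer than 50 bytes remain before context_end. */
        if (context_end - context_start < 50)
            break;
        context_start++;
    }

    /* Within 3 characters of the line start: just show the whole line. */
    if (context_start - line_start <= 3)
        context_start = line_start;

    prefix = (context_start > line_start) ? "..." : "";
    suffix = (*context_end != '\0' && *context_end != '\n' &&
              *context_end != '\r') ? "..." : "";

    snprintf(out, outsize, "%s%.*s%s", prefix,
             (int) (context_end - context_start), context_start, suffix);
    return line_number;
}
```

One consequence visible in the sketch is that the returned line number is that of the line where the excerpt begins, so a short multi-line input that fits within the 50-byte window is reported from line 1.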

| static void report_parse_error | ( | JsonParseContext | ctx, | JsonLexContext * | lex | ) | [static] |

Definition at line 909 of file json.c.

References elog, ereport, errcode(), errdetail(), errmsg(), ERROR, JSON_PARSE_ARRAY_NEXT, JSON_PARSE_ARRAY_START, JSON_PARSE_END, JSON_PARSE_OBJECT_COMMA, JSON_PARSE_OBJECT_LABEL, JSON_PARSE_OBJECT_NEXT, JSON_PARSE_OBJECT_START, JSON_PARSE_STRING, JSON_PARSE_VALUE, JSON_TOKEN_END, NULL, palloc(), report_json_context(), JsonLexContext::token_start, JsonLexContext::token_terminator, and JsonLexContext::token_type.

Referenced by lex_expect(), parse_object(), parse_object_field(), and parse_scalar().

{

char *token;

int toklen;

/* Handle case where the input ended prematurely. */

if (lex->token_start == NULL || lex->token_type == JSON_TOKEN_END)

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("The input string ended unexpectedly."),

report_json_context(lex)));

/* Separate out the current token. */

toklen = lex->token_terminator - lex->token_start;

token = palloc(toklen + 1);

memcpy(token, lex->token_start, toklen);

token[toklen] = '\0';

/* Complain, with the appropriate detail message. */

if (ctx == JSON_PARSE_END)

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected end of input, but found \"%s\".",

token),

report_json_context(lex)));

else

{

switch (ctx)

{

case JSON_PARSE_VALUE:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected JSON value, but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_STRING:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected string, but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_ARRAY_START:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected array element or \"]\", but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_ARRAY_NEXT:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected \",\" or \"]\", but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_OBJECT_START:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected string or \"}\", but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_OBJECT_LABEL:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected \":\", but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_OBJECT_NEXT:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected \",\" or \"}\", but found \"%s\".",

token),

report_json_context(lex)));

break;

case JSON_PARSE_OBJECT_COMMA:

ereport(ERROR,

(errcode(ERRCODE_INVALID_TEXT_REPRESENTATION),

errmsg("invalid input syntax for type json"),

errdetail("Expected string, but found \"%s\".",

token),

report_json_context(lex)));

break;

default:

elog(ERROR, "unexpected json parse state: %d", ctx);

}

}

}
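Every branch of the switch above differs only in the "Expected ..." phrase, so the mapping from parse context to detail text can be summarized in a small lookup. The following standalone sketch mirrors the messages in the listing but is not how json.c is written: `ToyParseContext`, `toy_expected_phrase` and `toy_format_detail` are hypothetical, and an empty token stands in for the premature end-of-input check that the real function performs on `token_start` and `token_type`.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Mirrors the JsonParseContext values documented earlier in this file. */
typedef enum ToyParseContext
{
    TOY_PARSE_VALUE,
    TOY_PARSE_STRING,
    TOY_PARSE_ARRAY_START,
    TOY_PARSE_ARRAY_NEXT,
    TOY_PARSE_OBJECT_START,
    TOY_PARSE_OBJECT_LABEL,
    TOY_PARSE_OBJECT_NEXT,
    TOY_PARSE_OBJECT_COMMA,
    TOY_PARSE_END
} ToyParseContext;

/* Return what the parser expected in the given context, or NULL. */
static const char *
toy_expected_phrase(ToyParseContext ctx)
{
    switch (ctx)
    {
        case TOY_PARSE_VALUE:        return "JSON value";
        case TOY_PARSE_STRING:       return "string";
        case TOY_PARSE_ARRAY_START:  return "array element or \"]\"";
        case TOY_PARSE_ARRAY_NEXT:   return "\",\" or \"]\"";
        case TOY_PARSE_OBJECT_START: return "string or \"}\"";
        case TOY_PARSE_OBJECT_LABEL: return "\":\"";
        case TOY_PARSE_OBJECT_NEXT:  return "\",\" or \"}\"";
        case TOY_PARSE_OBJECT_COMMA: return "string";
        case TOY_PARSE_END:          return "end of input";
    }
    return NULL;                /* unexpected parse state */
}

/* Build the detail message; returns 1 if it fit in out, 0 otherwise. */
static int
toy_format_detail(ToyParseContext ctx, const char *token,
                  char *out, size_t size)
{
    const char *what = toy_expected_phrase(ctx);
    int         n;

    assert(out != NULL && size > 0);
    if (what == NULL)
        return 0;

    /* Simplification: an empty token means the input ended early. */
    if (token == NULL || strlen(token) == 0)
        n = snprintf(out, size, "The input string ended unexpectedly.");
    else
        n = snprintf(out, size, "Expected %s, but found \"%s\".", what, token);
    return n >= 0 && (size_t) n < size;
}
```

Keeping each message as a separate literal, as the listing does, is friendlier to message translation; the lookup form is shown only to make the context-to-message mapping easy to read.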

| Datum row_to_json | ( | PG_FUNCTION_ARGS | ) |

Definition at line 1458 of file json.c.

References composite_to_json(), cstring_to_text(), StringInfoData::data, makeStringInfo(), PG_GETARG_DATUM, and PG_RETURN_TEXT_P.

{

Datum array = PG_GETARG_DATUM(0);

StringInfo result;

result = makeStringInfo();

composite_to_json(array, result, false);

PG_RETURN_TEXT_P(cstring_to_text(result->data));

}

| Datum row_to_json_pretty | ( | PG_FUNCTION_ARGS | ) |

Definition at line 1474 of file json.c.

References composite_to_json(), cstring_to_text(), StringInfoData::data, makeStringInfo(), PG_GETARG_BOOL, PG_GETARG_DATUM, and PG_RETURN_TEXT_P.

{

Datum array = PG_GETARG_DATUM(0);

bool use_line_feeds = PG_GETARG_BOOL(1);

StringInfo result;

result = makeStringInfo();

composite_to_json(array, result, use_line_feeds);

PG_RETURN_TEXT_P(cstring_to_text(result->data));

}

| Datum to_json | ( | PG_FUNCTION_ARGS | ) |

Definition at line 1491 of file json.c.

References CASTSOURCETARGET, COERCION_METHOD_FUNCTION, cstring_to_text(), StringInfoData::data, datum_to_json(), DatumGetPointer, ereport, errcode(), errmsg(), ERROR, FirstNormalObjectId, get_fn_expr_argtype(), GETSTRUCT, getTypeOutputInfo(), HeapTupleIsValid, InvalidOid, JSONOID, makeStringInfo(), ObjectIdGetDatum, pfree(), PG_ARGISNULL, PG_DETOAST_DATUM, PG_GETARG_DATUM, PG_RETURN_TEXT_P, PointerGetDatum, RECORDARRAYOID, RECORDOID, ReleaseSysCache(), SearchSysCache2, TypeCategory(), and val.

{

Oid val_type = get_fn_expr_argtype(fcinfo->flinfo, 0);

StringInfo result;

Datum orig_val,

val;

TYPCATEGORY tcategory;

Oid typoutput;

bool typisvarlena;

Oid castfunc = InvalidOid;

if (val_type == InvalidOid)

ereport(ERROR,

(errcode(ERRCODE_INVALID_PARAMETER_VALUE),

errmsg("could not determine input data type")));

result = makeStringInfo();

orig_val = PG_ARGISNULL(0) ? (Datum) 0 : PG_GETARG_DATUM(0);

getTypeOutputInfo(val_type, &typoutput, &typisvarlena);

if (val_type > FirstNormalObjectId)

{

HeapTuple tuple;

Form_pg_cast castForm;

tuple = SearchSysCache2(CASTSOURCETARGET,

ObjectIdGetDatum(val_type),

ObjectIdGetDatum(JSONOID));

if (HeapTupleIsValid(tuple))

{

castForm = (Form_pg_cast) GETSTRUCT(tuple);

if (castForm->castmethod == COERCION_METHOD_FUNCTION)

castfunc = typoutput = castForm->castfunc;

ReleaseSysCache(tuple);

}

}

if (castfunc != InvalidOid)

tcategory = TYPCATEGORY_JSON_CAST;

else if (val_type == RECORDARRAYOID)

tcategory = TYPCATEGORY_ARRAY;

else if (val_type == RECORDOID)

tcategory = TYPCATEGORY_COMPOSITE;

else if (val_type == JSONOID)

tcategory = TYPCATEGORY_JSON;

else

tcategory = TypeCategory(val_type);

/*

* If we have a toasted datum, forcibly detoast it here to avoid memory

* leakage inside the type's output routine.

*/

if (typisvarlena && orig_val != (Datum) 0)

val = PointerGetDatum(PG_DETOAST_DATUM(orig_val));

else

val = orig_val;

datum_to_json(val, false, result, tcategory, typoutput);

/* Clean up detoasted copy, if any */

if (val != orig_val)

pfree(DatumGetPointer(val));

PG_RETURN_TEXT_P(cstring_to_text(result->data));

}

| jsonSemAction nullSemAction [static] |

| JsonSemAction NullSemAction = &nullSemAction [static] |

1.7.1
