|

OpenCV

3.0.0-dev

Open Source Computer Vision

|

Classes | |

| class | cv::text::ERFilter::Callback |

| Callback with the classifier is made a class. More... | |

| class | cv::text::ERFilter |

| Base class for 1st and 2nd stages of Neumann and Matas scene text detection algorithm [Neumann12]. More... | |

| struct | cv::text::ERStat |

| The ERStat structure represents a class-specific Extremal Region (ER). More... | |

Enumerations | |

| enum | { cv::text::ERFILTER_NM_RGBLGrad, cv::text::ERFILTER_NM_IHSGrad } |

| computeNMChannels operation modes More... | |

| enum | cv::text::erGrouping_Modes { cv::text::ERGROUPING_ORIENTATION_HORIZ, cv::text::ERGROUPING_ORIENTATION_ANY } |

| text::erGrouping operation modes More... | |

Functions | |

| void | cv::text::computeNMChannels (InputArray _src, OutputArrayOfArrays _channels, int _mode=ERFILTER_NM_RGBLGrad) |

| Compute the different channels to be processed independently in the N&M algorithm [Neumann12]. More... | |

| Ptr< ERFilter > | cv::text::createERFilterNM1 (const Ptr< ERFilter::Callback > &cb, int thresholdDelta=1, float minArea=0.00025, float maxArea=0.13, float minProbability=0.4, bool nonMaxSuppression=true, float minProbabilityDiff=0.1) |

| Create an Extremal Region Filter for the 1st stage classifier of N&M algorithm [Neumann12]. More... | |

| Ptr< ERFilter > | cv::text::createERFilterNM2 (const Ptr< ERFilter::Callback > &cb, float minProbability=0.3) |

| Create an Extremal Region Filter for the 2nd stage classifier of N&M algorithm [Neumann12]. More... | |

| void | cv::text::erGrouping (InputArray img, InputArrayOfArrays channels, std::vector< std::vector< ERStat > > &regions, std::vector< std::vector< Vec2i > > &groups, std::vector< Rect > &groups_rects, int method=ERGROUPING_ORIENTATION_HORIZ, const std::string &filename=std::string(), float minProbablity=0.5) |

| Find groups of Extremal Regions that are organized as text blocks. More... | |

| Ptr< ERFilter::Callback > | cv::text::loadClassifierNM1 (const std::string &filename) |

| Allows implicitly loading the default classifier when creating an ERFilter object. More... | |

| Ptr< ERFilter::Callback > | cv::text::loadClassifierNM2 (const std::string &filename) |

| Allows implicitly loading the default classifier when creating an ERFilter object. More... | |

| void | cv::text::MSERsToERStats (InputArray image, std::vector< std::vector< Point > > &contours, std::vector< std::vector< ERStat > > &regions) |

| Converts MSER contours (vector<Point>) to ERStat regions. More... | |

The scene text detection algorithm described below has been initially proposed by Lukás Neumann & Jiri Matas [Neumann12]. The main idea behind Class-specific Extremal Regions is similar to the MSER in that suitable Extremal Regions (ERs) are selected from the whole component tree of the image. However, this technique differs from MSER in that selection of suitable ERs is done by a sequential classifier trained for character detection, i.e. dropping the stability requirement of MSERs and selecting class-specific (not necessarily stable) regions.

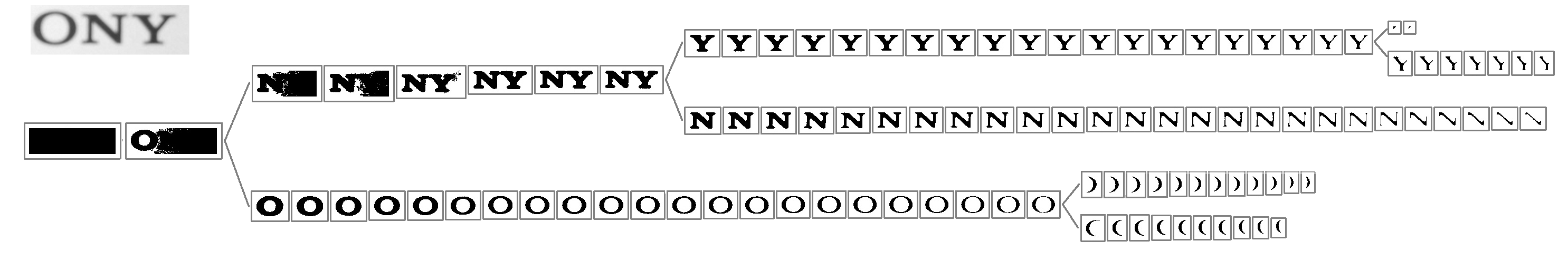

The component tree of an image is constructed by thresholding by an increasing value step-by-step from 0 to 255 and then linking the obtained connected components from successive levels in a hierarchy by their inclusion relation:

The component tree may contain a huge number of regions even for a very simple image, as shown in the previous image. This number can easily reach the order of 1 x 10^6 regions for an average 1 Megapixel image. In order to efficiently select suitable regions among all the ERs, the algorithm makes use of a sequential classifier with two differentiated stages.
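To get a feel for how quickly the number of regions grows, the thresholding step can be sketched without OpenCV. This is an illustrative sketch only, not the module's implementation: the function names `countComponents` and `countRegionsOverAllThresholds` are hypothetical, 4-connectivity and the "pixel value <= threshold" convention are assumptions, and a region that persists unchanged across several levels is counted once per level.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Count 4-connected components of pixels whose value is <= threshold.
// `image` is a row-major grayscale image of size width x height.
int countComponents(const std::vector<std::uint8_t>& image,
                    int width, int height, int threshold)
{
    assert(static_cast<int>(image.size()) == width * height);
    std::vector<char> visited(image.size(), 0);
    const int dx[4] = {1, -1, 0, 0};
    const int dy[4] = {0, 0, 1, -1};
    int components = 0;
    for (int start = 0; start < width * height; ++start) {
        if (visited[start] || image[start] > threshold) continue;
        ++components;
        // Flood fill from this seed using an explicit stack.
        std::vector<int> stack{start};
        visited[start] = 1;
        while (!stack.empty()) {
            const int p = stack.back();
            stack.pop_back();
            const int x = p % width, y = p / width;
            for (int k = 0; k < 4; ++k) {
                const int nx = x + dx[k], ny = y + dy[k];
                if (nx < 0 || ny < 0 || nx >= width || ny >= height) continue;
                const int q = ny * width + nx;
                if (visited[q] || image[q] > threshold) continue;
                visited[q] = 1;
                stack.push_back(q);
            }
        }
    }
    return components;
}

// Sum the per-level component counts over thresholds 0..255.
long long countRegionsOverAllThresholds(const std::vector<std::uint8_t>& image,
                                        int width, int height)
{
    long long total = 0;
    for (int t = 0; t <= 255; ++t)
        total += countComponents(image, width, height, t);
    return total;
}
```

Even a three-pixel image yields hundreds of per-level components with this counting, which is why the candidate regions have to be filtered by a cheap classifier first.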

In the first stage, incrementally computable descriptors (area, perimeter, bounding box, and Euler number) are computed (in O(1)) for each region r and used as features for a classifier which estimates the class-conditional probability p(r|character). Only the ERs which correspond to a local maximum of the probability p(r|character) are selected, provided that their probability is above a global limit p_min and the difference between the local maximum and the local minimum is greater than a delta_min value.
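The selection rule can be illustrated on a single branch of the component tree, where the probabilities form a simple sequence indexed by threshold. The sketch below is a simplification under stated assumptions: `selectLocalMaxima` is a hypothetical helper, not part of the OpenCV API, and the "local minimum" is taken here as the lower of the two valleys reached by walking downhill on either side of the peak, which may differ from the library's choice.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Return the indices of local maxima in `p` (probabilities along one branch)
// that are at least pMin and exceed the neighbouring local minimum by more
// than deltaMin. On a plateau, only the first index is considered.
std::vector<std::size_t> selectLocalMaxima(const std::vector<double>& p,
                                           double pMin, double deltaMin)
{
    std::vector<std::size_t> selected;
    const std::size_t n = p.size();
    for (std::size_t i = 0; i < n; ++i) {
        const bool risesFromLeft = (i == 0) || p[i] > p[i - 1];
        const bool fallsToRight = (i + 1 == n) || p[i] >= p[i + 1];
        if (!risesFromLeft || !fallsToRight) continue;  // not a local maximum
        if (p[i] < pMin) continue;                      // below the global limit

        // Walk downhill on both sides to find the adjacent valleys.
        std::size_t l = i;
        while (l > 0 && p[l - 1] <= p[l]) --l;
        std::size_t r = i;
        while (r + 1 < n && p[r + 1] <= p[r]) ++r;

        const double localMin = std::min(p[l], p[r]);
        if (p[i] - localMin > deltaMin) selected.push_back(i);
    }
    return selected;
}
```

With `pMin = 0.4` and `deltaMin = 0.1`, a peak of 0.35 is rejected by the global limit, while a peak of 0.65 flanked by 0.60 and 0.62 is rejected because it rises only 0.05 above its valley.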

In the second stage, the ERs that passed the first stage are classified into character and non-character classes using more informative but also more computationally expensive features (hole area ratio, convex hull ratio, and the number of outer boundary inflexion points).

This ER filtering process is done in different single-channel projections of the input image in order to increase the character localization recall.

After the ER filtering is done on each input channel, character candidates must be grouped into high-level text blocks (i.e. words, text lines, paragraphs, ...). The opencv_text module implements two different grouping algorithms: the Exhaustive Search algorithm proposed in [Neumann11] for grouping horizontally aligned text, and the method proposed by Lluis Gomez and Dimosthenis Karatzas in [Gomez13][Gomez14] for grouping arbitrarily oriented text (see erGrouping).

To see the text detector at work, have a look at the textdetection demo: https://github.com/Itseez/opencv_contrib/blob/master/modules/text/samples/textdetection.cpp

text::erGrouping operation modes

| void | cv::text::computeNMChannels (InputArray _src, OutputArrayOfArrays _channels, int _mode=ERFILTER_NM_RGBLGrad) |

Compute the different channels to be processed independently in the N&M algorithm [Neumann12].

| _src | Source image. Must be RGB CV_8UC3. |

| _channels | Output vector<Mat> where computed channels are stored. |

| _mode | Mode of operation. Currently the only available options are: ERFILTER_NM_RGBLGrad (used by default) and ERFILTER_NM_IHSGrad. |

In the N&M algorithm, a combination of intensity (I), hue (H), saturation (S), and gradient magnitude (Grad) channels is used in order to obtain high localization recall. This implementation also provides an alternative combination of red (R), green (G), blue (B), lightness (L), and gradient magnitude (Grad).
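As an illustration of what a gradient magnitude (Grad) channel contains, the following sketch computes it for a small single-channel image with central differences. It is only a sketch under assumptions: `gradientMagnitude` is a hypothetical helper, the border handling (clamping) and the difference operator are choices made here, and the operator used inside computeNMChannels may differ.

```cpp
#include <algorithm>
#include <cmath>
#include <vector>

// Gradient magnitude of a row-major single-channel image, using central
// differences with coordinates clamped at the image border.
std::vector<float> gradientMagnitude(const std::vector<float>& gray,
                                     int width, int height)
{
    std::vector<float> grad(gray.size(), 0.0f);
    auto at = [&](int x, int y) {
        x = std::min(std::max(x, 0), width - 1);
        y = std::min(std::max(y, 0), height - 1);
        return gray[y * width + x];
    };
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const float gx = 0.5f * (at(x + 1, y) - at(x - 1, y));
            const float gy = 0.5f * (at(x, y + 1) - at(x, y - 1));
            grad[y * width + x] = std::sqrt(gx * gx + gy * gy);
        }
    }
    return grad;
}
```

Character strokes produce strong responses in such a channel regardless of their colour, which is one reason a gradient projection complements the colour channels.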

| Ptr< ERFilter > | cv::text::createERFilterNM1 (const Ptr< ERFilter::Callback > &cb, int thresholdDelta=1, float minArea=0.00025, float maxArea=0.13, float minProbability=0.4, bool nonMaxSuppression=true, float minProbabilityDiff=0.1) |

Create an Extremal Region Filter for the 1st stage classifier of N&M algorithm [Neumann12] (Neumann L., Matas J.: Real-Time Scene Text Localization and Recognition, CVPR 2012).

The component tree of the image is extracted by a threshold increased step by step from 0 to 255. Incrementally computable descriptors (aspect_ratio, compactness, number of holes, and number of horizontal crossings) are computed for each ER and used as features for a classifier which estimates the class-conditional probability P(er|character). The value of P(er|character) is tracked using the inclusion relation of ER across all thresholds, and only the ERs which correspond to a local maximum of the probability P(er|character) are selected (if the local maximum of the probability is above a global limit pmin and the difference between local maximum and local minimum is greater than minProbabilityDiff).

| cb | – Callback with the classifier. The default classifier can be implicitly loaded with the function loadClassifierNM1(), e.g. from file in samples/cpp/trained_classifierNM1.xml |

| thresholdDelta | – Threshold step in subsequent thresholds when extracting the component tree |

| minArea | – The minimum area (% of image size) allowed for retrieved ERs |

| maxArea | – The maximum area (% of image size) allowed for retrieved ERs |

| minProbability | – The minimum probability P(er|character) allowed for retrieved ERs |

| nonMaxSuppression | – Whether non-maximum suppression is done over the branch probabilities |

| minProbabilityDiff | – The minimum probability difference between local maxima and local minima ERs |

| Ptr< ERFilter > | cv::text::createERFilterNM2 (const Ptr< ERFilter::Callback > &cb, float minProbability=0.3) |

Create an Extremal Region Filter for the 2nd stage classifier of N&M algorithm [Neumann12].

| cb | : Callback with the classifier. The default classifier can be implicitly loaded with the function loadClassifierNM2, e.g. from file in samples/cpp/trained_classifierNM2.xml |

| minProbability | : The minimum probability P(er|character) allowed for retrieved ERs |

In the second stage, the ERs that passed the first stage are classified into character and non-character classes using more informative but also more computationally expensive features. The classifier uses all the features calculated in the first stage and the following additional features: hole area ratio, convex hull ratio, and number of outer inflexion points.

| void | cv::text::erGrouping (InputArray img, InputArrayOfArrays channels, std::vector< std::vector< ERStat > > &regions, std::vector< std::vector< Vec2i > > &groups, std::vector< Rect > &groups_rects, int method=ERGROUPING_ORIENTATION_HORIZ, const std::string &filename=std::string(), float minProbablity=0.5) |

Find groups of Extremal Regions that are organized as text blocks.

| img | Original RGB or greyscale image from which the regions were extracted. |

| channels | Vector of single-channel CV_8UC1 images from which the regions were extracted. |

| regions | Vector of ERs retrieved by the ERFilter algorithm from each channel. |

| groups | The output of the algorithm is stored in this parameter as a set of lists of indexes to the provided regions. |

| groups_rects | The output of the algorithm is stored in this parameter as a list of rectangles. |

| method | Grouping method (see text::erGrouping_Modes). Can be one of ERGROUPING_ORIENTATION_HORIZ, ERGROUPING_ORIENTATION_ANY. |

| filename | The XML or YAML file with the classifier model (e.g. samples/trained_classifier_erGrouping.xml). Only used when the grouping method is ERGROUPING_ORIENTATION_ANY. |

| minProbablity | The minimum probability for accepting a group. Only used when the grouping method is ERGROUPING_ORIENTATION_ANY. |
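The relation between `regions`, `groups`, and `groups_rects` can be sketched with plain structs. The sketch assumes that each index pair in a group is (channel index, region index), which is how the textdetection sample dereferences it. `Box`, `Region`, and `groupBoundingBox` are hypothetical stand-ins introduced for illustration (for cv::Rect, for the bounding box of an ERStat, and for the rectangle computation), not part of the module.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <vector>

struct Box { int x, y, width, height; };  // hypothetical stand-in for cv::Rect
struct Region { Box rect; };              // hypothetical stand-in for ERStat

// Bounding box enclosing every region referenced by one group.
// Each entry of `group` is assumed to be {channel index, region index}.
Box groupBoundingBox(const std::vector<std::vector<Region>>& regions,
                     const std::vector<std::array<int, 2>>& group)
{
    assert(!group.empty());
    const Box& first = regions.at(group[0][0]).at(group[0][1]).rect;
    int x0 = first.x, y0 = first.y;
    int x1 = first.x + first.width, y1 = first.y + first.height;
    for (const auto& idx : group) {
        const Box& b = regions.at(idx[0]).at(idx[1]).rect;
        x0 = std::min(x0, b.x);
        y0 = std::min(y0, b.y);
        x1 = std::max(x1, b.x + b.width);
        y1 = std::max(y1, b.y + b.height);
    }
    return Box{x0, y0, x1 - x0, y1 - y0};
}
```

Because a group may reference regions from different channels, the outer index has to be kept alongside the region index when drawing or cropping the detected text.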

| Ptr< ERFilter::Callback > | cv::text::loadClassifierNM1 (const std::string &filename) |

Allows implicitly loading the default classifier when creating an ERFilter object.

| filename | The XML or YAML file with the classifier model (e.g. trained_classifierNM1.xml) |

Returns a pointer to ERFilter::Callback.

| Ptr< ERFilter::Callback > | cv::text::loadClassifierNM2 (const std::string &filename) |

Allows implicitly loading the default classifier when creating an ERFilter object.

| filename | The XML or YAML file with the classifier model (e.g. trained_classifierNM2.xml) |

Returns a pointer to ERFilter::Callback.

| void | cv::text::MSERsToERStats (InputArray image, std::vector< std::vector< Point > > &contours, std::vector< std::vector< ERStat > > &regions) |

Converts MSER contours (vector<Point>) to ERStat regions.

| image | Source image CV_8UC1 from which the MSERs were extracted. |

| contours | Input vector with all the contours (vector<Point>). |

| regions | Output where the ERStat regions are stored. |

It takes as input the contours provided by the OpenCV MSER feature detector and returns as output two vectors of ERStats. This is because the MSER() output contains both MSER+ and MSER- regions in a single vector<Point>; the function separates them into two different vectors (this is as if the ERStats were extracted from two different channels).

An example of MSERsToERStats in use can be found in the text detection webcam_demo: https://github.com/Itseez/opencv_contrib/blob/master/modules/text/samples/webcam_demo.cpp
