
Phonon is a cross-platform multimedia framework that enables the use of audio and video content in PyQt applications.

Phonon is a cross-platform multimedia framework that enables the use of audio and video content in Qt applications. The Phonon namespace contains a list of all classes, functions and namespaces provided by the module.

To import the module use, for example, the following statement:

from PyQt4 import phonon
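Not every PyQt4 installation ships the phonon module, so an application may want to guard the import. The following is a minimal sketch; the helper name `load_phonon` is an assumption made for illustration and is not part of PyQt.

```python
def load_phonon():
    """Return the Phonon namespace, or None if PyQt4's phonon module is missing."""
    try:
        # The classes (MediaObject, AudioOutput, ...) live in the Phonon namespace.
        from PyQt4.phonon import Phonon
    except ImportError:
        return None
    return Phonon


Phonon = load_phonon()
if Phonon is None:
    print("Phonon is not available in this Python environment")
```

The later examples in this overview refer to the namespace as `Phonon`, which is what this helper returns when the module is present.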

The Phonon module is part of the Qt Desktop Edition and the Qt Open Source Edition.

This file is part of the KDE project

Copyright (C) 2005-2007 Matthias Kretz <kretz@kde.org>

Copyright (C) 2007-2008 Trolltech ASA. <thierry.bastian@trolltech.com>

This library is free software; you can redistribute it and/or modify it under the terms of the GNU Library General Public License version 2 as published by the Free Software Foundation.

This library is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU Library General Public License for more details.

You should have received a copy of the GNU Library General Public License along with this library; see the file COPYING.LIB. If not, write to the Free Software Foundation, Inc., 51 Franklin Street, Fifth Floor, Boston, MA 02110-1301, USA.

Multimedia is content that combines multiple forms of media information, such as audio, text, animations and video, within a single stream of data, and which is often used to provide a basic level of interactivity in applications.

Qt uses the Phonon multimedia framework to provide functionality for playback of the most common multimedia formats. The media can be read from files or streamed over a network, using a URL to a file.

In this overview, we take a look at the main concepts of Phonon. We also explain the architecture, examine the core API classes, and show examples of how to use the classes provided.

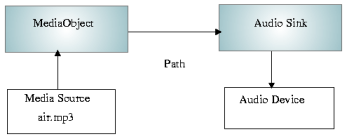

Phonon has tree basic concepts: media objects, sinks, and paths. A media object manages a media source, for instance, a music file; it provides simple playback control, such as starting, stopping, and pausing the playback. A sink is an output device, which can play back the media, e.g., video on a widget using a graphics card, or sound using a sound card. Paths are used to connect Phonon objects, i.e., a media object and a sink, in a graph.

When using Phonon for playback, one builds a graph, of which each node takes a media stream as input, processes it, and then outputs it again, either to another node in the graph, or to an output device, such as a sound card. The playback is started by a media object. As an example, we show a media graph for an audio stream:

The playback is started and managed by the media object, which sends the media stream to any sinks connected to it by a path. The sink then plays the stream back, usually through a sound card.

All nodes in the graph are synchronized by the framework, meaning that if more than one sink is connected to the same media object, the framework will handle the synchronization between the sinks; this happens for instance when a media source containing video with sound is played back. More on this later.

The media object, an instance of the MediaObject class, knows how to play back media, and provides control over the state of the playback. For video playback, for instance, a media object can start, stop, fast forward, and rewind. You may think of the object as a simple media player. It can also queue media for playback.

The media data is provided by a media source, which is encapsulated by the media object. The media source is a separate object, an instance of MediaSource, in Phonon, and not part of the graph itself. The source will supply the media object with raw data. The data can be read from files and streamed over a network. The contents of the source will be interpreted by the media object.

A media object is always instantiated with the default constructor and then supplied with a media source. Concrete code examples are given later in this overview.
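As a sketch of the pattern just described, the helper below creates a media object, gives it a first source, and queues any further sources with `enqueue()`. The function name `make_media_object` and the file names are hypothetical; the `Phonon` argument is assumed to be the namespace obtained with `from PyQt4.phonon import Phonon`.

```python
def make_media_object(Phonon, sources, parent=None):
    """Create a MediaObject, make sources[0] current and queue the rest.

    `sources` is a list of file paths or URLs (illustrative values only).
    """
    if not sources:
        raise ValueError("at least one media source is required")
    media_object = Phonon.MediaObject(parent)
    # The first entry becomes the current source ...
    media_object.setCurrentSource(Phonon.MediaSource(sources[0]))
    # ... and the remaining entries are queued for playback afterwards.
    for extra in sources[1:]:
        media_object.enqueue(Phonon.MediaSource(extra))
    return media_object


# Usage, assuming PyQt4 with phonon is installed and a QApplication exists:
#   from PyQt4.phonon import Phonon
#   player = make_media_object(Phonon, ["first.ogg", "second.ogg"])
```

Passing the namespace in as an argument is only a design choice for this sketch; it keeps the helper importable on systems without Phonon.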

As a complement to the media object, Phonon also provides MediaController, which provides control over features that are optional for a given media. For instance, the chapters, menus, and titles of a VOB file are features managed by a MediaController.
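A minimal sketch of how a controller might be attached and queried follows. It assumes the `Phonon` namespace is passed in by the caller; the helper names are hypothetical, and which features actually report non-zero counts depends on the medium and the backend.

```python
def make_controller(Phonon, media_object):
    """Create the MediaController that complements `media_object`."""
    return Phonon.MediaController(media_object)


def summarize_controller(controller):
    """Collect how many titles, chapters and angles the controller reports."""
    return {
        "titles": controller.availableTitles(),
        "chapters": controller.availableChapters(),
        "angles": controller.availableAngles(),
    }


# Usage, assuming PyQt4 with phonon is installed:
#   controller = make_controller(Phonon, media_object)
#   print(summarize_controller(controller))
```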

A sink is a node that can output media from the graph, i.e., it does not send its output to other nodes. A sink is usually a rendering device.

The input of sinks in a Phonon media graph comes from a MediaObject, though it might have been processed through other nodes on the way.

While the MediaObject controls the playback, the sink has basic controls for manipulation of the media. With an audio sink, for instance, you can control the volume and mute the sound, i.e., it represents a virtual audio device. Another example is the VideoWidget, which can render video on a QWidget and alter the brightness, hue, and scaling of the video.
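The sink controls mentioned above can be sketched as two small helpers. They call `setVolume()` and `setMuted()` on an audio output and `setBrightness()` and `setHue()` on a video widget. Clamping the values first is a choice made for this example, not something Phonon requires, and the helper names are hypothetical.

```python
def _clamp(value, low, high):
    """Restrict `value` to the closed interval [low, high]."""
    return max(low, min(high, float(value)))


def configure_audio_sink(audio_output, volume, muted=False):
    """Apply a volume in 0.0..1.0 and a mute flag to an AudioOutput-like object."""
    volume = _clamp(volume, 0.0, 1.0)
    audio_output.setVolume(volume)
    audio_output.setMuted(muted)
    return volume


def configure_video_sink(video_widget, brightness=0.0, hue=0.0):
    """Apply brightness and hue in -1.0..1.0 to a VideoWidget-like object."""
    video_widget.setBrightness(_clamp(brightness, -1.0, 1.0))
    video_widget.setHue(_clamp(hue, -1.0, 1.0))
```

Neither helper touches the media object: playback state stays with the MediaObject, while the sinks only shape how the stream is rendered.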

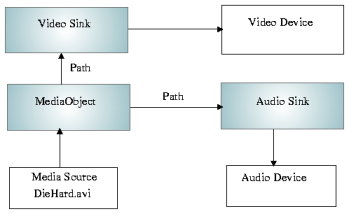

As an example we give an image of a graph used for playing back a video file with sound.

Phonon does not allow manipulation of media streams directly, i.e., one cannot alter a media stream's bytes programmatically after they have been given to a media object. We have other nodes to help with this: processors, which are placed in the graph on the path somewhere between the media object and its sinks. In Phonon, processors are subclasses of the Effect class.

When inserted into the rendering process, the processor will alter the media stream, and will be active as long as it is part of the graph. To stop, it needs to be removed.

The Effects may also have controls that affect how the media stream is manipulated. A processor applying a depth effect to audio, for instance, can have a value controlling the amount of depth. An Effect can be configured at any point in time.
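To make the role of a processor concrete, here is a toy model in plain Python. It does not use the Phonon API; `ToyPath` and `make_gain` are invented for illustration. It shows only the idea that an effect alters the stream for as long as it sits on the path and stops doing so once it is removed.

```python
class ToyPath(object):
    """Toy stand-in for a path: an ordered chain of effects in front of a sink."""

    def __init__(self, sink):
        self.sink = sink      # callable that receives the processed samples
        self.effects = []     # processors currently part of the graph

    def insert_effect(self, effect):
        self.effects.append(effect)

    def remove_effect(self, effect):
        self.effects.remove(effect)

    def push(self, samples):
        """Run samples through every inserted effect, then hand them to the sink."""
        for effect in self.effects:
            samples = [effect(s) for s in samples]
        self.sink(samples)


def make_gain(factor):
    """A configurable 'effect' that scales each sample by `factor`."""
    return lambda sample: sample * factor


played = []
path = ToyPath(played.append)
half = make_gain(0.5)

path.push([2.0, 4.0])      # no effect inserted yet
path.insert_effect(half)
path.push([2.0, 4.0])      # effect is active while it is on the path
path.remove_effect(half)
path.push([2.0, 4.0])      # removed again, so the stream is untouched
print(played)
```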

In some common cases, it is not necessary to build a graph yourself.

Phonon has convenience functions for building common graphs. For playing an audio file, you can use the createPlayer() function. This will set up the necessary graph and return the media object node; the sound can then be started by calling its play() function.

    from PyQt4.phonon import Phonon

    # createPlayer() builds the graph and returns the media object node.
    music = Phonon.createPlayer(Phonon.MusicCategory,
                                Phonon.MediaSource("/path/mysong.wav"))
    music.play()

We have a similar solution for playing video files, the VideoPlayer.

    # parentWidget and url are supplied by the surrounding application.
    player = Phonon.VideoPlayer(Phonon.VideoCategory, parentWidget)
    player.play(Phonon.MediaSource(url))

The VideoPlayer is a widget onto which the video will be drawn.

The .pro file for a project needs the following line to be added:

QT += phonon

Phonon comes with several widgets that provide functionality commonly associated with multimedia players - notably SeekSlider for controlling the position of the stream, VolumeSlider for controlling sound volume, and EffectWidget for controlling the parameters of an effect. You can learn about them in the API documentation.
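A short sketch of wiring two of these widgets to an existing graph follows. The helper name `attach_controls` is hypothetical, and the `Phonon` argument is assumed to be the namespace from `from PyQt4.phonon import Phonon`.

```python
def attach_controls(Phonon, media_object, audio_output, parent=None):
    """Create a SeekSlider and a VolumeSlider bound to the given graph nodes."""
    seek_slider = Phonon.SeekSlider(parent)
    seek_slider.setMediaObject(media_object)     # slider follows the stream position

    volume_slider = Phonon.VolumeSlider(parent)
    volume_slider.setAudioOutput(audio_output)   # slider drives the sink's volume

    return seek_slider, volume_slider


# Usage, assuming a running QApplication and an existing layout:
#   seek, volume = attach_controls(Phonon, mediaObject, audioOutput, window)
#   layout.addWidget(seek)
#   layout.addWidget(volume)
```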

If you need more freedom than the convenience functions described in the previous section offer you, you can build the graphs yourself. We will now take a look at how some common graphs are built. Starting a graph up is a matter of calling the play() function of the media object.

If the media source contains several types of media, for instance, a stream with both video and audio, the graph will contain two output nodes: one for the video and one for the audio.

We will now look at the code required to build the graphs discussed previously in the Architecture section.

When playing back audio, you create the media object and connect it to an audio output node - a node that inherits from AbstractAudioOutput. Currently, AudioOutput, which outputs audio to the sound card, is provided.

The code to create the graph is straightforward:

    # self is the QObject that owns the graph nodes.
    mediaObject = Phonon.MediaObject(self)
    mediaObject.setCurrentSource(Phonon.MediaSource("/mymusic/barbiegirl.wav"))
    audioOutput = Phonon.AudioOutput(Phonon.MusicCategory, self)
    path = Phonon.createPath(mediaObject, audioOutput)

Notice that the type of media an input source has is resolved by Phonon, so you need not be concerned with this. If a source contains multiple media formats, this is also handled automatically.

The media object is always created using the default constructor since it handles all multimedia formats.

The setting of a Category, Phonon.MusicCategory in this case, does not affect the actual playback; the category can be used by KDE to control the playback through, for instance, the control panel.

The AudioOutput class outputs the audio media to a sound card, that is, one of the audio devices of the operating system. An audio device can be a sound card or an intermediate technology, such as DirectShow on Windows. A default device will be chosen if one is not set with setOutputDevice().

The AudioOutput node will work with all audio formats supported by the back end, so you don't need to know what format a specific media source has.

For an extensive example of audio playback, see the Phonon Music Player.

Since a media stream cannot be manipulated directly, the backend can produce nodes that can process the media streams. These nodes are inserted into the graph between a media object and an output node.

Nodes that process media streams inherit from the Effect class. The effects available depend on the underlying system. Most of these effects will be supported by Phonon. See the Querying Backends for Support section for information on how to resolve the available effects on a particular system.

We will now continue the example from above using the Path variable path to add an effect. The code is again trivial:

    # Take the first effect description the backend reports.
    description = Phonon.BackendCapabilities.availableAudioEffects()[0]
    effect = Phonon.Effect(description, self)
    path.insertEffect(effect)

Here we simply take the first available effect on the system.

The effect will start immediately after being inserted into the graph if the media object is playing. To stop it, you have to detach it again using removeEffect() of the Path.
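Switching an effect on and off therefore amounts to inserting it into the path and removing it again. A minimal sketch follows; the helper name `set_effect_enabled` is hypothetical, and it assumes that `path.effects()` returns the same wrapper objects that were inserted.

```python
def set_effect_enabled(path, effect, enabled):
    """Insert or remove `effect` so that its presence on `path` matches `enabled`.

    Returns True if the path ends up in the requested state.
    """
    present = effect in path.effects()
    if enabled and not present:
        return bool(path.insertEffect(effect))
    if not enabled and present:
        return bool(path.removeEffect(effect))
    return True  # already in the requested state


# Usage, assuming `path` and `effect` were created with the Phonon API:
#   set_effect_enabled(path, effect, True)    # effect starts processing
#   set_effect_enabled(path, effect, False)   # effect is detached again
```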

For playing video, VideoWidget is provided. This class functions both as a node in the graph and as a widget upon which it draws the video stream. The widget will automatically choose an available device for playing the video, which is usually a technology between the Qt application and the graphics card, such as DirectShow on Windows.

The video widget does not play the audio (if any) in the media stream. If you want to play the audio as well, you will need an AudioOutput node. You create and connect it to the graph as shown in the previous section.

The code for creating this graph is given below, after which one can play the video with play().

    mediaObject = Phonon.MediaObject(self)

    # Video branch: the widget is both a graph node and the drawing surface.
    videoWidget = Phonon.VideoWidget(self)
    Phonon.createPath(mediaObject, videoWidget)

    # Audio branch: needed because the video widget does not play sound.
    audioOutput = Phonon.AudioOutput(Phonon.VideoCategory, self)
    Phonon.createPath(mediaObject, audioOutput)

The VideoWidget does not need to be set to a Category; it is automatically classified as VideoCategory. We only need to ensure that the audio is classified in the same category.

The media object will split files with different media content into separate streams before sending them off to other nodes in the graph. It is the media object that determines the type of content appropriate for nodes that connect to it.

The multimedia functionality is not implemented by Phonon itself, but by a back end - often also referred to as an engine. This includes connecting to, managing, and driving the underlying hardware or intermediate technology. For the programmer, this implies that the media nodes, e.g., media objects, processors, and sinks, are produced by the back end. Also, it is responsible for building the graph, i.e., connecting the nodes.

The backends of Qt use the media systems DirectShow (which requires DirectX) on Windows, QuickTime on Mac, and GStreamer on Linux. The functionality provided on the different platforms depends on these underlying systems and may vary somewhat, e.g., in the media formats supported.

Backends expose information about the underlying system. They can tell which media formats are supported, e.g., AVI, mp3, or OGG.

A user can often add support for new formats and filters to the underlying system by, for instance, installing the DivX codec. We can therefore not give an exact overview of which formats are available with the Qt backends.

As mentioned, Phonon depends on the backend to provide its functionality. Depending on the individual backend, full support of the API may not be in place. Applications therefore need to check with the backend whether the functionality they require is implemented. In this section, we take a look at how this is done.

The backend provides the availableMimeTypes() and isMimeTypeAvailable() functions to query which MIME types the backend can produce nodes for. The types are listed as strings, and the string for a given type is the same on every backend and platform.
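A minimal sketch of such a check is shown below. It calls `BackendCapabilities.isMimeTypeAvailable()` once per requested type. The helper name and the MIME strings in the usage comment are illustrative, and the `Phonon` argument is assumed to be the namespace imported by the caller.

```python
def check_mime_support(Phonon, mime_types):
    """Map each requested MIME type to True or False for the current backend."""
    caps = Phonon.BackendCapabilities
    return dict((mime, bool(caps.isMimeTypeAvailable(mime)))
                for mime in mime_types)


# Usage, assuming PyQt4 with phonon is installed:
#   from PyQt4.phonon import Phonon
#   print(check_mime_support(Phonon, ["audio/ogg", "video/x-msvideo"]))
```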

The backend will emit a signal - Notifier.capabilitiesChanged() - if its abilities have changed. If the available audio devices have changed, the Phonon.BackendCapabilities.Notifier.availableAudioOutputDevicesChanged() signal is emitted instead.
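Reacting to these signals could look roughly like the sketch below, which uses PyQt's new-style `signal.connect(slot)` syntax on the object returned by `BackendCapabilities.notifier()`. The helper name `watch_backend` and the two callback parameters are hypothetical.

```python
def watch_backend(Phonon, on_capabilities, on_devices):
    """Connect callbacks to the backend's two change-notification signals."""
    notifier = Phonon.BackendCapabilities.notifier()
    # Emitted when the backend's abilities (formats, effects) change.
    notifier.capabilitiesChanged.connect(on_capabilities)
    # Emitted when the set of audio output devices changes.
    notifier.availableAudioOutputDevicesChanged.connect(on_devices)
    return notifier


# Usage, assuming a running QApplication:
#   def refresh():
#       print("backend changed, re-query capabilities")
#   watch_backend(Phonon, refresh, refresh)
```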

To query the audio devices that are actually available, use availableAudioOutputDevices(), as mentioned in the Sinks section. To get information about an individual device, you can examine its name(); this string depends on the operating system, and the Qt backends do not analyze the devices further.
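As a sketch, a device can be picked by name from the list returned by `BackendCapabilities.availableAudioOutputDevices()` and handed to `setOutputDevice()`. The helper name and the device name in the usage comment are hypothetical. Since device names depend on the operating system, a real application would probably let the user choose from the list instead of hard-coding a name.

```python
def select_output_device(Phonon, audio_output, wanted_name):
    """Route `audio_output` to the device called `wanted_name`, if it exists.

    Returns True when a matching device was found and accepted.
    """
    for device in Phonon.BackendCapabilities.availableAudioOutputDevices():
        if device.name() == wanted_name:
            return bool(audio_output.setOutputDevice(device))
    return False  # no such device; the current or default device stays in use


# Usage, assuming PyQt4 with phonon is installed:
#   select_output_device(Phonon, audioOutput, "USB Headset")
```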

The sink for playback of video does not have a selection of devices. For convenience, the VideoWidget is both a node in the graph and a widget on which the video output is rendered. To query the various video formats available, use isMimeTypeAvailable(). To add it to a path, you can use the Phonon.createPath() as usual. After creating a media object, it is also possible to call its hasVideo() function.

See also the Capabilities Example.

On Windows, Phonon requires DirectX version 9 or higher.

For Phonon application development, the platform and DirectX SDKs must be installed. The name of the platform SDK may vary between Windows versions; on Vista, it is called Windows SDK.

Note that these SDKs must be placed before your compiler in the include path, though this should be auto-detected and handled by Windows.

The Qt backend on Linux uses GStreamer (minimum version is 0.10), which must be installed on the system. It is a good idea to install every package available for GStreamer to get support for as many MIME types and audio effects as possible. At a minimum, you need the GStreamer library and base plugins, which provide support for .ogg files. The package names may vary between Linux distributions; on Mandriva, they have the following names:

| Package | Description |
|---|---|
| libgstreamer0.10_0.10 | The GStreamer base library. |
| libgstreamer0.10_0.10-devel | Contains files for developing applications with GStreamer. |
| libgstreamer-plugins-base0.10 | Contains the basic plugins for audio and video playback, and will enable support for ogg files. |
| libgstreamer-plugins-base0.10-devel | Makes it possible to develop applications using the base plugins. |

On Mac OS X, Qt uses QuickTime for its backend. The minimum supported version is 7.0.

Phonon and its Qt backends, though fully functional for multimedia playback, are still under development. Planned functionality includes media capture and more processors for both music and video files.

Another important consideration is to implement support for storing media to files; i.e., not playing back media directly.

We also hope in the future to be able to support direct manipulation of media streams. This will give the programmer more freedom to manipulate streams than just through processors.

Currently, the multimedia framework supports one input source. It will be possible to include several sources. This is useful in, for example, audio mixer applications where several audio sources can be sent, processed and output as a single audio stream.

| PyQt 4.12.1 for X11 | Copyright © Riverbank Computing Ltd and The Qt Company 2015 | Qt 4.8.7 |