Table of Contents

- 15.1. MySQL Cluster Overview

- 15.2. Basic MySQL Cluster Concepts

- 15.3. Simple Multi-Computer How-To

- 15.4. MySQL Cluster Configuration

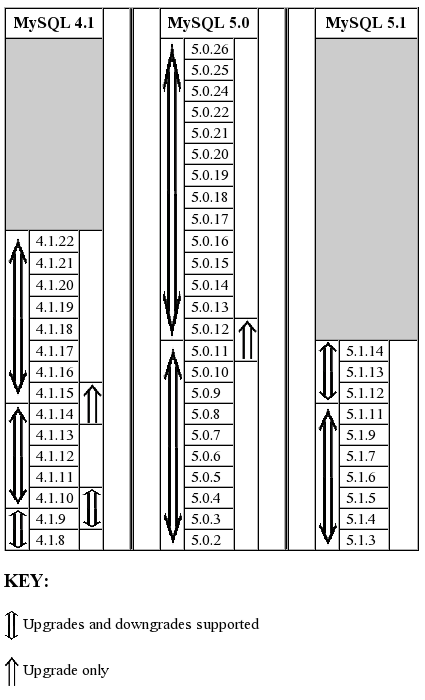

- 15.5. Upgrading and Downgrading MySQL Cluster

- 15.6. Process Management in MySQL Cluster

- 15.7. Management of MySQL Cluster

- 15.8. On-line Backup of MySQL Cluster

- 15.9. Cluster Utility Programs

- 15.9.1. ndb_config

- 15.9.2. ndb_delete_all

- 15.9.3. ndb_desc

- 15.9.4. ndb_drop_index

- 15.9.5. ndb_drop_table

- 15.9.6. ndb_error_reporter

- 15.9.7. ndb_print_backup_file

- 15.9.8. ndb_print_schema_file

- 15.9.9. ndb_print_sys_file

- 15.9.10. ndb_select_all

- 15.9.11. ndb_select_count

- 15.9.12. ndb_show_tables

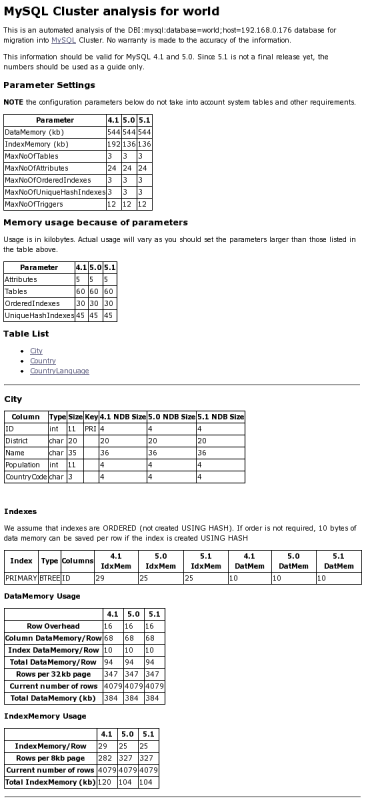

- 15.9.13. ndb_size.pl — NDBCluster Size Requirement Estimator

- 15.9.14. ndb_waiter

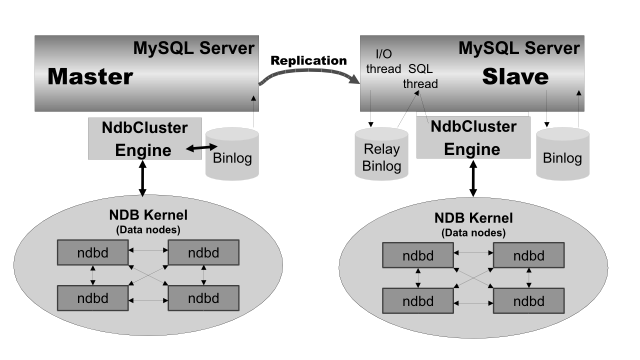

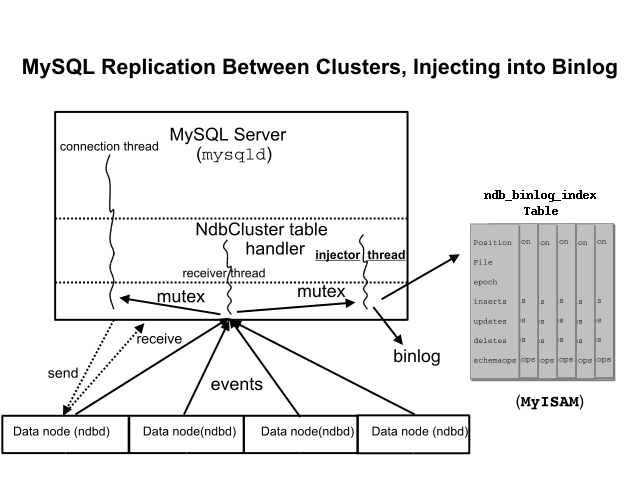

- 15.10. MySQL Cluster Replication

- 15.10.1. Abbreviations and Symbols

- 15.10.2. Assumptions and General Requirements

- 15.10.3. Known Issues

- 15.10.4. Replication Schema and Tables

- 15.10.5. Preparing the Cluster for Replication

- 15.10.6. Starting Replication (Single Replication Channel)

- 15.10.7. Using Two Replication Channels

- 15.10.8. Implementing Failover with MySQL Cluster

- 15.10.9. MySQL Cluster Backups With Replication

- 15.11. MySQL Cluster Disk Data Storage

- 15.12. Using High-Speed Interconnects with MySQL Cluster

- 15.13. Known Limitations of MySQL Cluster

- 15.14. MySQL Cluster Development Roadmap

- 15.15. MySQL Cluster Glossary

Due to a build slippage, binary distributions of MySQL 5.1.12 contained neither NDB Cluster nor Partitioning. We apologize for any inconvenience. Please upgrade to 5.1.14. if you build from source, you can execute configure with the --with-ndbcluster and --with-partition options.

MySQL Cluster is a high-availability, high-redundancy version of

MySQL adapted for the distributed computing environment. It uses the

NDB Cluster storage engine to enable running

several MySQL servers in a cluster. This storage engine is available

in MySQL 5.1 binary releases and in RPMs compatible

with most modern Linux distributions.

MySQL Cluster is currently available and supported on the following platforms:

Linux: x86, AMD64, EMT64, s/390, PPC, Alpha, SPARC, UltraSparc

Solaris: SPARC, UltraSparc, x86, AMD64, EMT64

BSD (FreeBSD, NetBSD, OpenBSD): x86, AMD64, EMT64, PPC

Mac OS X: PPC

HP-UX: PA-RISC

Tru64: Alpha

OpenVMS: Alpha

IRIX: MIPS

Novell Netware: x86

QNX Neutrino: x86

SCO OpenServer, OpenUnix, UnixWare: x86

For exact levels of support available for MySQL Cluster on specific combinations of operating system versions, operating system distributions, and hardware platforms, please refer to the Cluster Supported Platforms list maintained by the MySQL Support Team on the MySQL AB Web site.

MySQL Cluster is not currently supported on Microsoft Windows. We are working to make Cluster available on all operating systems supported by MySQL, including Windows, and will update the information provided here as this work continues.

This chapter represents a work in progress, and its contents are subject to revision as MySQL Cluster continues to evolve. Additional information regarding MySQL Cluster can be found on the MySQL AB Web site at http://www.mysql.com/products/cluster/.

Additional resources

Answers to some commonly asked questions about Cluster may be found in the Section A.11, “MySQL 5.1 FAQ — MySQL Cluster”.

The MySQL Cluster mailing list: http://lists.mysql.com/cluster.

The MySQL Cluster Forum: http://forums.mysql.com/list.php?25.

Many MySQL Cluster users and some of the MySQL Cluster developers blog about their experiences with Cluster, and make feeds of these available through PlanetMySQL.

If you are new to MySQL Cluster, you may find our Developer Zone article How to set up a MySQL Cluster for two servers to be helpful.

MySQL Cluster is a technology that enables clustering of in-memory databases in a shared-nothing system. The shared-nothing architecture allows the system to work with very inexpensive hardware, and with a minimum of specific requirements for hardware or software.

MySQL Cluster is designed not to have any single point of failure. For this reason, each component is expected to have its own memory and disk, and the use of shared storage mechanisms such as network shares, network filesystems, and SANs is not recommended or supported.

MySQL Cluster integrates the standard MySQL server with an

in-memory clustered storage engine called NDB.

In our documentation, the term NDB refers to

the part of the setup that is specific to the storage engine,

whereas “MySQL Cluster” refers to the combination of

MySQL and the NDB storage engine.

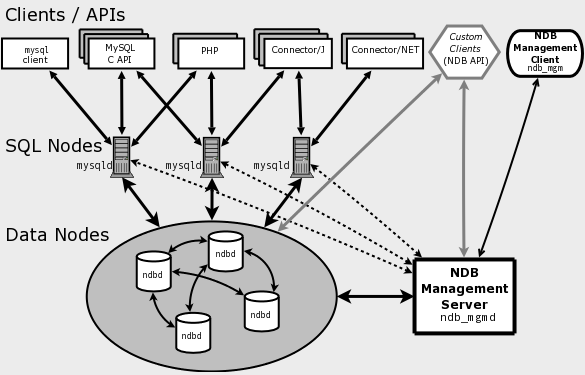

A MySQL Cluster consists of a set of computers, each running a one or more processes which may include a MySQL server, a data node, a management server, and (possibly) a specialized data access programs. The relationship of these components in a cluster is shown here:

All these programs work together to form a MySQL Cluster. When

data is stored in the NDB Cluster storage

engine, the tables are stored in the data nodes. Such tables are

directly accessible from all other MySQL servers in the cluster.

Thus, in a payroll application storing data in a cluster, if one

application updates the salary of an employee, all other MySQL

servers that query this data can see this change immediately.

The data stored in the data nodes for MySQL Cluster can be mirrored; the cluster can handle failures of individual data nodes with no other impact than that a small number of transactions are aborted due to losing the transaction state. Because transactional applications are expected to handle transaction failure, this should not be a source of problems.

NDB is an in-memory

storage engine offering high-availability and data-persistence

features.

The NDB storage engine can be configured with a

range of failover and load-balancing options, but it is easiest to

start with the storage engine at the cluster level. MySQL

Cluster's NDB storage engine contains a

complete set of data, dependent only on other data within the

cluster itself.

The cluster portion of MySQL Cluster is currently configured independently of the MySQL servers. In a MySQL Cluster, each part of the cluster is considered to be a node.

Note: In many contexts, the term “node” is used to indicate a computer, but when discussing MySQL Cluster it means a process. It is possible to run any number of nodes on a single computer, for which we use the term cluster host.

(However, it should be noted MySQL does not currently support the use of multiple data nodes on a single computer in a production setting. See Issues exclusive to MySQL Cluster.)

There are three types of cluster nodes, and in a minimal MySQL Cluster configuration, there will be at least three nodes, one of each of these types:

Management node (MGM node): The role of this type of node is to manage the other nodes within the MySQL Cluster, performing such functions as providing configuration data, starting and stopping nodes, running backup, and so forth. Because this node type manages the configuration of the other nodes, a node of this type should be started first, before any other node. An MGM node is started with the command ndb_mgmd.

Data node: This type of node stores cluster data. There are as many data nodes as there are replicas, times the number of fragments. For example, with two replicas, each having two fragments, you will need four data nodes. It is not necessary to have more than one replica. A data node is started with the command ndbd.

SQL node: This is a node that accesses the cluster data. In the case of MySQL Cluster, an SQL node is a traditional MySQL server that uses the

NDB Clusterstorage engine. An SQL node is typically started with the command mysqld --ndbcluster or by using mysqld with thendbclusteroption added tomy.cnf.An SQL node is actually just a specialised type of API node, which designates any application which accesses Cluster data. One example of an API node is the ndb_restore utility that is used to restore a cluster backup. It is possible to write such applications using the NDB API.

Important: It is not realistic to expect to employ a three-node setup in a production environment. Such a configuration provides no redundancy; in order to benefit from MySQL Cluster's high-availability features, you must use multiple data and SQL nodes. The use of multiple management nodes is also highly recommended.

For a brief introduction to the relationships between nodes, node groups, replicas, and partitions in MySQL Cluster, see Section 15.2.1, “MySQL Cluster Nodes, Node Groups, Replicas, and Partitions”.

Configuration of a cluster involves configuring each individual node in the cluster and setting up individual communication links between nodes. MySQL Cluster is currently designed with the intention that data nodes are homogeneous in terms of processor power, memory space, and bandwidth. In addition, to provide a single point of configuration, all configuration data for the cluster as a whole is located in one configuration file.

The management server (MGM node) manages the cluster configuration file and the cluster log. Each node in the cluster retrieves the configuration data from the management server, and so requires a way to determine where the management server resides. When interesting events occur in the data nodes, the nodes transfer information about these events to the management server, which then writes the information to the cluster log.

In addition, there can be any number of cluster client processes or applications. These are of two types:

Standard MySQL clients: These are no different for MySQL Cluster than they are for standard (non-Cluster) MySQL. In other words, MySQL Cluster can be accessed from existing MySQL applications written in PHP, Perl, C, C++, Java, Python, Ruby, and so on.

Management clients: These clients connect to the management server and provide commands for starting and stopping nodes gracefully, starting and stopping message tracing (debug versions only), showing node versions and status, starting and stopping backups, and so on.

This section discusses the manner in which MySQL Cluster divides and duplicates data for storage.

Central to an understanding of this topic are the following concepts, listed here with brief definitions:

(Data) Node: An ndbd process, which stores a replica —that is, a copy of the partition (see below) assigned to the node group of which the node is a member.

Each data node should be located on a separate computer. While it is also possible to host multiple ndbd processes on a single computer, such a configuration is not supported.

It is common for the terms “node” and “data node” to be used interchangeably when referring to an ndbd process; where mentioned, management (MGM) nodes (ndb_mgmd processes) and SQL nodes (mysqld processes) are specified as such in this discussion.

Node Group: A node group consists of one or more nodes, and stores partitions, or sets of replicas (see next item).

Note: Currently, all node groups in a cluster must have the same number of nodes.

Partition: This is a portion of the data stored by the cluster. There are as many cluster partitions as nodes participating in the cluster. Each node is responsible for keeping at least one copy of any partitions assigned to it (that is, at least one replica) available to the cluster.

A replica belongs entirely to a single node; a node can (and usually does) store several replicas.

Replica: This is a copy of a cluster partition. Each node in a node group stores a replica. Also sometimes known as a partition replica. The number of replicas is equal to the number of nodes per node group.

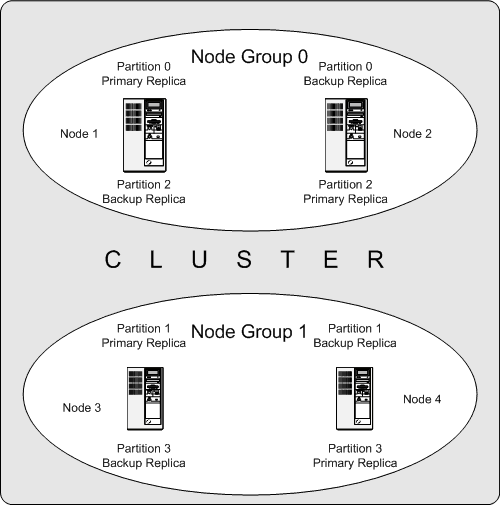

The following diagram illustrates a MySQL Cluster with four data nodes, arranged in two node groups of two nodes each; nodes 1 and 2 belong to node group 0, and nodes 3 and 4 belong to node group 1. Note that only data (ndbd) nodes are shown here; although a working cluster requires an ndb_mgm process for cluster management and at least one SQL node to access the data stored by the cluster, these have been omitted in the figure for clarity.

The data stored by the cluster is divided into four partitions, numbered 0, 1, 2, and 3. Each partition is stored — in multiple copies — on the same node group. Partitions are stored on alternate node groups:

Partition 0 is stored on node group 0; a primary replica (primary copy) is stored on node 1, and a backup replica (backup copy of the partition) is stored on node 2.

Partition 1 is stored on the other node group (node group 1); this partition's primary replica is on node 3, and its backup replica is on node 4.

Partition 2 is stored on node group 0. However, the placing of its two replicas is reversed from that of Partition 0; for Partition 2, the primary replica is stored on node 2, and the backup on node 1.

Partition 3 is stored on node group 1, and the placement of its two replicas are reversed from those of partition 1. That is, its primary replica is located on node 4, with the backup on node 3.

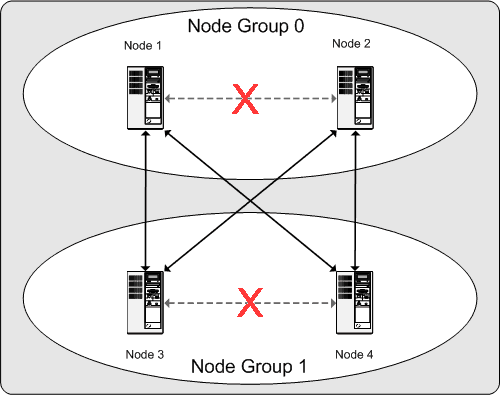

What this means regarding the continued operation of a MySQL Cluster is this: so long as each node group participating in the cluster has at least one node operating, the cluster has a complete copy of all data and remains viable. This is illustrated in the next diagram.

In this example, where the cluster consists of two node groups of two nodes each, any combination of at least one node in node group 0 and at least one node in node group 1 is sufficient to keep the cluster “alive” (indicated by arrows in the diagram). However, if both nodes from either node group fail, the remaining two nodes are not sufficient (shown by the arrows marked out with an X); in either case, the cluster has lost an entire partition and so can no longer provide access to a complete set of all cluster data.

This section is a “How-To” that describes the basics for how to plan, install, configure, and run a MySQL Cluster. Whereas the examples in Section 15.4, “MySQL Cluster Configuration” provide more in-depth information on a variety of clustering options and configuration, the result of following the guidelines and procedures outlined here should be a usable MySQL Cluster which meets the minimum requirements for availability and safeguarding of data.

This section covers hardware and software requirements; networking issues; installation of MySQL Cluster; configuration issues; starting, stopping, and restarting the cluster; loading of a sample database; and performing queries.

Basic Assumptions

This How-To makes the following assumptions:

The cluster setup has four nodes, each on a separate host, and each with a fixed network address on a typical Ethernet as shown here:

Node IP Address Management (MGM) node 192.168.0.10 MySQL server (SQL) node 192.168.0.20 Data (NDBD) node "A" 192.168.0.30 Data (NDBD) node "B" 192.168.0.40 This may be made clearer in the following diagram:

Note: In the interest of simplicity (and reliability), this How-To uses only numeric IP addresses. However, if DNS resolution is available on your network, it is possible to use hostnames in lieu of IP addresses in configuring Cluster. Alternatively, you can use the

/etc/hostsfile or your operating system's equivalent for providing a means to do host lookup if such is available.Each host in our scenario is an Intel-based desktop PC running a common, generic Linux distribution installed to disk in a standard configuration, and running no unnecessary services. The core OS with standard TCP/IP networking capabilities should be sufficient. Also for the sake of simplicity, we also assume that the filesystems on all hosts are set up identically. In the event that they are not, you will need to adapt these instructions accordingly.

Standard 100 Mbps or 1 gigabit Ethernet cards are installed on each machine, along with the proper drivers for the cards, and that all four hosts are connected via a standard-issue Ethernet networking appliance such as a switch. (All machines should use network cards with the same throughout. That is, all four machines in the cluster should have 100 Mbps cards or all four machines should have 1 Gbps cards.) MySQL Cluster will work in a 100 Mbps network; however, gigabit Ethernet will provide better performance.

Note that MySQL Cluster is not intended for use in a network for which throughput is less than 100 Mbps. For this reason (among others), attempting to run a MySQL Cluster over a public network such as the Internet is not likely to be successful, and is not recommended.

For our sample data, we will use the

worlddatabase which is available for download from the MySQL AB Web site. As this database takes up a relatively small amount of space, we assume that each machine has 256MB RAM, which should be sufficient for running the operating system, host NDB process, and (for the data nodes) for storing the database.

Although we refer to a Linux operating system in this How-To, the instructions and procedures that we provide here should be easily adaptable to other supported operating systems. We also assume that you already know how to perform a minimal installation and configuration of the operating system with networking capability, or that you are able to obtain assistance in this elsewhere if needed.

We discuss MySQL Cluster hardware, software, and networking requirements in somewhat greater detail in the next section. (See Section 15.3.1, “Hardware, Software, and Networking”.)

One of the strengths of MySQL Cluster is that it can be run on commodity hardware and has no unusual requirements in this regard, other than for large amounts of RAM, due to the fact that all live data storage is done in memory. (Note that this is not the case with Disk Data tables — see Section 15.11, “MySQL Cluster Disk Data Storage”, for more information about these.) Naturally, multiple and faster CPUs will enhance performance. Memory requirements for other Cluster processes are relatively small.

The software requirements for Cluster are also modest. Host operating systems do not require any unusual modules, services, applications, or configuration to support MySQL Cluster. For supported operating systems, a standard installation should be sufficient. The MySQL software requirements are simple: all that is needed is a production release of MySQL 5.1 to have Cluster support. It is not necessary to compile MySQL yourself merely to be able to use Cluster. In this How-To, we assume that you are using the server binary appropriate to your operating system, available via the MySQL software downloads page at http://dev.mysql.com/downloads/.

For inter-node communication, Cluster supports TCP/IP networking in any standard topology, and the minimum expected for each host is a standard 100 Mbps Ethernet card, plus a switch, hub, or router to provide network connectivity for the cluster as a whole. We strongly recommend that a MySQL Cluster be run on its own subnet which is not shared with non-Cluster machines for the following reasons:

Security: Communications between Cluster nodes are not encrypted or shielded in any way. The only means of protecting transmissions within a MySQL Cluster is to run your Cluster on a protected network. If you intend to use MySQL Cluster for Web applications, the cluster should definitely reside behind your firewall and not in your network's De-Militarized Zone (DMZ) or elsewhere.

Efficiency: Setting up a MySQL Cluster on a private or protected network allows the cluster to make exclusive use of bandwidth between cluster hosts. Using a separate switch for your MySQL Cluster not only helps protect against unauthorized access to Cluster data, it also ensures that Cluster nodes are shielded from interference caused by transmissions between other computers on the network. For enhanced reliability, you can use dual switches and dual cards to remove the network as a single point of failure; many device drivers support failover for such communication links.

It is also possible to use the high-speed Scalable Coherent Interface (SCI) with MySQL Cluster, but this is not a requirement. See Section 15.12, “Using High-Speed Interconnects with MySQL Cluster”, for more about this protocol and its use with MySQL Cluster.

Each MySQL Cluster host computer running data or SQL nodes must have installed on it a MySQL server binary. For management nodes, it is not necessary to install the MySQL server binary, but you do have to install the MGM server daemon and client binaries (ndb_mgmd and ndb_mgm, respectively). This section covers the steps necessary to install the correct binaries for each type of Cluster node.

MySQL AB provides precompiled binaries that support Cluster, and

there is generally no need to compile these yourself. Therefore,

the first step in the installation process for each cluster host

is to download the file

mysql-5.1.15-beta-pc-linux-gnu-i686.tar.gz

from the MySQL downloads

area. We assume that you have placed it in each

machine's /var/tmp directory. (If you do

require a custom binary, see

Section 2.9.3, “Installing from the Development Source Tree”.)

RPMs are also available for both 32-bit and 64-bit Linux platforms. For a MySQL Cluster, four RPMs are required:

The Server RPM (for example,

MySQL-server-5.1.15-beta-0.glibc23.i386.rpm), which supplies the core files needed to run a MySQL Server.The Server/Max RPM (for example,

MySQL-Max-5.1.15-beta-0.glibc23.i386.rpm), which provides a MySQL Server binary with clustering support.The NDB Cluster - Storage engine RPM (for example,

MySQL-ndb-storage-5.1.15-beta-0.glibc23.i386.rpm), which supplies the MySQL Cluster data node binary (ndbd).The NDB Cluster - Storage engine management RPM (for example,

MySQL-ndb-management-5.1.15-beta-0.glibc23.i386.rpm), which provides the MySQL Cluster management server binary (ndb_mgmd).

In addition, you should also obtain the

NDB Cluster - Storage engine basic

tools RPM (for example,

MySQL-ndb-tools-5.1.15-beta-0.glibc23.i386.rpm),

which supplies several useful applications for working with a

MySQL Cluster. The most important of the these is the MySQL

Cluster management client (ndb_mgm). The

NDB Cluster - Storage engine extra

tools RPM (for example,

MySQL-ndb-extra-5.1.15-beta-0.glibc23.i386.rpm)

contains some additional testing and monitoring programs, but is

not required to install a MySQL Cluster. (For more information

about these additional programs, see

Section 15.9, “Cluster Utility Programs”.)

The MySQL version number in the RPM filenames (shown here as

5.1.15-beta) can vary according to the

version which you are actually using. It is very

important that all of the Cluster RPMs to be installed have the

same MySQL version number. The

glibc version number (if present —

shown here as glibc23), and architecture

designation (shown here as i386) should be

appropriate to the machine on which the RPM is to be installed.

See Section 2.4, “Installing MySQL on Linux”, for general information about installing MySQL using RPMs supplied by MySQL AB.

After installing from RPM, you still need to configure the cluster as discussed in Section 15.3.3, “Multi-Computer Configuration”.

Note: After completing the installation, do not yet start any of the binaries. We show you how to do so following the configuration of all nodes.

Data and SQL Node Installation —

.tar.gz Binary

On each of the machines designated to host data or SQL nodes,

perform the following steps as the system

root user:

Check your

/etc/passwdand/etc/groupfiles (or use whatever tools are provided by your operating system for managing users and groups) to see whether there is already amysqlgroup andmysqluser on the system. Some OS distributions create these as part of the operating system installation process. If they are not already present, create a newmysqluser group, and then add amysqluser to this group:shell>

groupadd mysqlshell>useradd -g mysql mysqlThe syntax for useradd and groupadd may differ slightly on different versions of Unix, or they may have different names such as adduser and addgroup.

Change location to the directory containing the downloaded file, unpack the archive, and create a symlink to the

mysql. Note that the actual file and directory names will vary according to the MySQL version number.shell>

cd /var/tmpshell>tar -C /usr/local -xzvf mysql-5.1.15-beta-pc-linux-gnu-i686.tar.gzshell>ln -s /usr/local/mysql-5.1.15-beta-pc-linux-gnu-i686 /usr/local/mysqlChange location to the

mysqldirectory and run the supplied script for creating the system databases:shell>

cd mysqlshell>scripts/mysql_install_db --user=mysqlSet the necessary permissions for the MySQL server and data directories:

shell>

chown -R root .shell>chown -R mysql datashell>chgrp -R mysql .Note that the data directory on each machine hosting a data node is

/usr/local/mysql/data. We will use this piece of information when we configure the management node. (See Section 15.3.3, “Multi-Computer Configuration”.)Copy the MySQL startup script to the appropriate directory, make it executable, and set it to start when the operating system is booted up:

shell>

cp support-files/mysql.server /etc/rc.d/init.d/shell>chmod +x /etc/rc.d/init.d/mysql.servershell>chkconfig --add mysql.server(The startup scripts directory may vary depending on your operating system and version — for example, in some Linux distributions, it is

/etc/init.d.)Here we use Red Hat's chkconfig for creating links to the startup scripts; use whatever means is appropriate for this purpose on your operating system and distribution, such as update-rc.d on Debian.

Remember that the preceding steps must be performed separately on each machine where a data node or SQL node is to reside.

SQL Node Installation — RPM Files

On each machine to be used for hosting a cluster SQL node, install the MySQL Max RPM by executing the following command as the system root user, replacing the name shown for the RPM as necessary to match the name of the RPM downloaded from the MySQL AB web site:

shell>rpm -Uhv MySQL-server-5.1.15-beta-0.glibc23.i386.rpmshell>rpm -Uhv MySQL-Max-5.1.15-beta-0.glibc23.i386.rpm

This installs the MySQL server binary

(mysqld) in the

/usr/sbin directory, as well as all needed

MySQL Server support files. It also installs the

mysql.server and

mysqld_safe startup scripts in

/usr/share/mysql and

/usr/bin, respectively. The RPM installer

should take care of general configuration issues (such as

creating the mysql user and group, if needed)

automatically.

Data Node Installation — RPM Files

On a computer that is to host a cluster data node it is necessary to install only the NDB Cluster - Storage engine RPM. To do so, copy this RPM to the data node host, and run the following command as the system root user, replacing the name shown for the RPM as necessary to match that of the RPM downloaded from the MySQL AB web site:

shell> rpm -Uhv MySQL-ndb-storage-5.1.15-beta-0.glibc23.i386.rpm

The previous command installs the MySQL Cluster data node binary

(ndbd) in the /usr/sbin

directory.

Management Node Installation —

.tar.gz Binary

Installation for the management (MGM) node does not require

installation of the mysqld binary. Only the

binaries for the MGM server and client are required, which can

be found in the downloaded archive. Again, we assume that you

have placed this file in /var/tmp.

As system root (that is, after using

sudo, su root, or your

system's equivalent for temporarily assuming the system

administrator account's privileges), perform the following steps

to install ndb_mgmd and

ndb_mgm on the Cluster management node host:

Change location to the

/var/tmpdirectory, and extract the ndb_mgm and ndb_mgmd from the archive into a suitable directory such as/usr/local/bin:shell>

cd /var/tmpshell>tar -zxvf mysql-5.1.15-beta-pc-linux-gnu-i686.tar.gzshell>cd mysql-5.1.15-beta-pc-linux-gnu-i686shell>cp /bin/ndb_mgm* /usr/local/bin(You can safely delete the directory created by unpacking the downloaded archive, and the files it contains, from

/var/tmponce ndb_mgm and ndb_mgmd have been copied to the executables directory.)Change location to the directory into which you copied the files, and then make both of them executable:

shell>

cd /usr/local/binshell>chmod +x ndb_mgm*

Management Node Installation — RPM File

To install the MySQL Cluster management server, it is necessary only to use the NDB Cluster - Storage engine management RPM. Copy this RPM to the computer intended to host the management node, and then install it by running the following command as the system root user (replace the name shown for the RPM as necessary to match that of the Storage engine management RPM downloaded from the MySQL AB web site):

shell> rpm -Uhv MySQL-ndb-management-5.1.15-beta-0.glibc23.i386.rpm

This installs the management server binary

(ndb_mgmd) to the

/usr/sbin directory.

You should also install the NDB management

client, which is supplied by the Storage

engine basic tools RPM. Copy this RPM to the same

computer as the management node, and then install it by running

the following command as the system root user (again, replace

the name shown for the RPM as necessary to match that of the

Storage engine basic tools RPM

downloaded from the MySQL AB web site):

shell> rpm -Uhv MySQL-ndb-tools-5.1.15-beta-0.glibc23.i386.rpm

The Storage engine basic tools

RPM installs the MySQL Cluster management client

(ndb_mgm) to the

/usr/bin directory.

In Section 15.3.3, “Multi-Computer Configuration”, we create configuration files for all of the nodes in our example Cluster.

For our four-node, four-host MySQL Cluster, it is necessary to write four configuration files, one per node host.

Each data node or SQL node requires a

my.cnffile that provides two pieces of information: a connectstring that tells the node where to find the MGM node, and a line telling the MySQL server on this host (the machine hosting the data node) to run in NDB mode.For more information on connectstrings, see Section 15.4.4.2, “The Cluster

connectstring”.The management node needs a

config.inifile telling it how many replicas to maintain, how much memory to allocate for data and indexes on each data node, where to find the data nodes, where to save data to disk on each data node, and where to find any SQL nodes.

Configuring the Storage and SQL Nodes

The my.cnf file needed for the data nodes

is fairly simple. The configuration file should be located in

the /etc directory and can be edited using

any text editor. (Create the file if it does not exist.) For

example:

shell> vi /etc/my.cnf

We show vi being used here to create the file, but any text editor should work just as well.

For each data node and SQL node in our example setup,

my.cnf should look like this:

# Options for mysqld process: [MYSQLD] ndbcluster # run NDB storage engine ndb-connectstring=192.168.0.10 # location of management server # Options for ndbd process: [MYSQL_CLUSTER] ndb-connectstring=192.168.0.10 # location of management server

After entering the preceding information, save this file and exit the text editor. Do this for the machines hosting data node “A”, data node “B”, and the SQL node.

Important: Once you have

started a mysqld process with the

ndbcluster and

ndb-connectstring parameters in the

[MYSQLD] in the my.cnf

file as shown previously, you cannot execute any CREATE

TABLE or ALTER TABLE statements

without having actually started the cluster. Otherwise, these

statements will fail with an error. This is by

design.

Configuring the Management Node

The first step in configuring the MGM node is to create the

directory in which the configuration file can be found and then

to create the file itself. For example (running as

root):

shell>mkdir /var/lib/mysql-clustershell>cd /var/lib/mysql-clustershell>vi config.ini

For our representative setup, the

config.ini file should read as follows:

# Options affecting ndbd processes on all data nodes:

[NDBD DEFAULT]

NoOfReplicas=2 # Number of replicas

DataMemory=80M # How much memory to allocate for data storage

IndexMemory=18M # How much memory to allocate for index storage

# For DataMemory and IndexMemory, we have used the

# default values. Since the "world" database takes up

# only about 500KB, this should be more than enough for

# this example Cluster setup.

# TCP/IP options:

[TCP DEFAULT]

portnumber=2202 # This the default; however, you can use any

# port that is free for all the hosts in cluster

# Note: It is recommended beginning with MySQL 5.0 that

# you do not specify the portnumber at all and simply allow

# the default value to be used instead

# Management process options:

[NDB_MGMD]

hostname=192.168.0.10 # Hostname or IP address of MGM node

datadir=/var/lib/mysql-cluster # Directory for MGM node log files

# Options for data node "A":

[NDBD]

# (one [NDBD] section per data node)

hostname=192.168.0.30 # Hostname or IP address

datadir=/usr/local/mysql/data # Directory for this data node's data files

# Options for data node "B":

[NDBD]

hostname=192.168.0.40 # Hostname or IP address

datadir=/usr/local/mysql/data # Directory for this data node's data files

# SQL node options:

[MYSQLD]

hostname=192.168.0.20 # Hostname or IP address

# (additional mysqld connections can be

# specified for this node for various

# purposes such as running ndb_restore)

(Note: The

world database can be downloaded from

http://dev.mysql.com/doc/, where it can be found listed

under “Examples”.)

After all the configuration files have been created and these minimal options have been specified, you are ready to proceed with starting the cluster and verifying that all processes are running. We discuss how this is done in Section 15.3.4, “Initial Startup”.

For more detailed information about the available MySQL Cluster configuration parameters and their uses, see Section 15.4.4, “Configuration File”, and Section 15.4, “MySQL Cluster Configuration”. For configuration of MySQL Cluster as relates to making backups, see Section 15.8.4, “Configuration for Cluster Backup”.

Note: The default port for Cluster management nodes is 1186; the default port for data nodes is 2202. Beginning with MySQL 5.0.3, this restriction is lifted, and the cluster automatically allocates ports for data nodes from those that are already free.

Starting the cluster is not very difficult after it has been configured. Each cluster node process must be started separately, and on the host where it resides. The management node ahould be started first, followed by the data nodes, and then finally by any SQL nodes:

On the management host, issue the following command from the system shell to start the MGM node process:

shell>

ndb_mgmd -f /var/lib/mysql-cluster/config.iniNote that ndb_mgmd must be told where to find its configuration file, using the

-for--config-fileoption. (See Section 15.6.3, “ndb_mgmd, the Management Server Process”, for details.)On each of the data node hosts, run this command to start the ndbd process for the first time:

shell>

ndbd --initialNote that it is very important to use the

--initialparameter only when starting ndbd for the first time, or when restarting after a backup/restore operation or a configuration change. This is because the--initialoption causes the node to delete any files created by earlier ndbd instances that are needed for recovery, including the recovery log files.An exception to this is that

--initialdoes not delete Disk Data files. If you do need to perform an initial restart of the cluster, you must delete any existing Disk Data log files and data files manually.If you used RPM files to install MySQL on the cluster host where the SQL node is to reside, you can (and should) use the supplied startup script to start the MySQL server process on the SQL node.

If all has gone well, and the cluster has been set up correctly, the cluster should now be operational. You can test this by invoking the ndb_mgm management node client. The output should look like that shown here, although you might see some slight differences in the output depending upon the exact version of MySQL that you are using:

shell>ndb_mgm-- NDB Cluster -- Management Client -- ndb_mgm>SHOWConnected to Management Server at: localhost:1186 Cluster Configuration --------------------- [ndbd(NDB)] 2 node(s) id=2 @192.168.0.30 (Version: 5.1.15-beta, Nodegroup: 0, Master) id=3 @192.168.0.40 (Version: 5.1.15-beta, Nodegroup: 0) [ndb_mgmd(MGM)] 1 node(s) id=1 @192.168.0.10 (Version: 5.1.15-beta) [mysqld(SQL)] 1 node(s) id=4 (Version: 5.1.15-beta)

Note: The SQL node is

referenced here as [mysqld(API)]. This is

perfectly normal, and reflects the fact that the

mysqld process is acting as a cluster API

node.

You should now be ready to work with databases, tables, and data in MySQL Cluster. See Section 15.3.5, “Loading Sample Data and Performing Queries”, for a brief discussion.

Working with data in MySQL Cluster is not much different from doing so in MySQL without Cluster. There are two points to keep in mind:

For a table to be replicated in the cluster, it must use the

NDB Clusterstorage engine. To specify this, use theENGINE=NDBorENGINE=NDBCLUSTERtable option. You can add this option when creating the table:CREATE TABLE

tbl_name( ... ) ENGINE=NDBCLUSTER;Alternatively, for an existing table that uses a different storage engine, use

ALTER TABLEto change the table to useNDB Cluster:ALTER TABLE

tbl_nameENGINE=NDBCLUSTER;Each

NDBtable must have a primary key. If no primary key is defined by the user when a table is created, theNDB Clusterstorage engine automatically generates a hidden one. (Note: This hidden key takes up space just as does any other table index. It is not uncommon to encounter problems due to insufficient memory for accommodating these automatically created indexes.)

If you are importing tables from an existing database using the

output of mysqldump, you can open the SQL

script in a text editor and add the ENGINE

option to any table creation statements, or replace any existing

ENGINE (or TYPE) options.

Suppose that you have the world sample

database on another MySQL server that does not support MySQL

Cluster, and you want to export the City

table:

shell> mysqldump --add-drop-table world City > city_table.sql

The resulting city_table.sql file will

contain this table creation statement (and the

INSERT statements necessary to import the

table data):

DROP TABLE IF EXISTS `City`;

CREATE TABLE `City` (

`ID` int(11) NOT NULL auto_increment,

`Name` char(35) NOT NULL default '',

`CountryCode` char(3) NOT NULL default '',

`District` char(20) NOT NULL default '',

`Population` int(11) NOT NULL default '0',

PRIMARY KEY (`ID`)

) ENGINE=MyISAM DEFAULT CHARSET=latin1;

INSERT INTO `City` VALUES (1,'Kabul','AFG','Kabol',1780000);

INSERT INTO `City` VALUES (2,'Qandahar','AFG','Qandahar',237500);

INSERT INTO `City` VALUES (3,'Herat','AFG','Herat',186800);

(remaining INSERT statements omitted)

You will need to make sure that MySQL uses the NDB storage

engine for this table. There are two ways that this can be

accomplished. One of these is to modify the table definition

before importing it into the Cluster

database. Using the City table as an example,

modify the ENGINE option of the definition as

follows:

DROP TABLE IF EXISTS `City`;

CREATE TABLE `City` (

`ID` int(11) NOT NULL auto_increment,

`Name` char(35) NOT NULL default '',

`CountryCode` char(3) NOT NULL default '',

`District` char(20) NOT NULL default '',

`Population` int(11) NOT NULL default '0',

PRIMARY KEY (`ID`)

) ENGINE=NDBCLUSTER DEFAULT CHARSET=latin1;

INSERT INTO `City` VALUES (1,'Kabul','AFG','Kabol',1780000);

INSERT INTO `City` VALUES (2,'Qandahar','AFG','Qandahar',237500);

INSERT INTO `City` VALUES (3,'Herat','AFG','Herat',186800);

(remaining INSERT statements omitted)

This must be done for the definition of each table that is to be

part of the clustered database. The easiest way to accomplish

this is to do a search-and-replace on the file that contains the

definitions and replace all instances of

TYPE=

or

engine_nameENGINE=

with engine_nameENGINE=NDBCLUSTER. If you do not want to

modify the file, you can use the unmodified file to create the

tables, and then use ALTER TABLE to change

their storage engine. The particulars are given later in this

section.

Assuming that you have already created a database named

world on the SQL node of the cluster, you can

then use the mysql command-line client to

read city_table.sql, and create and

populate the corresponding table in the usual manner:

shell> mysql world < city_table.sql

It is very important to keep in mind that the preceding command

must be executed on the host where the SQL node is running (in

this case, on the machine with the IP address

192.168.0.20).

To create a copy of the entire world database

on the SQL node, use mysqldump on the

non-cluster server to export the database to a file named

world.sql; for example, in the

/tmp directory. Then modify the table

definitions as just described and import the file into the SQL

node of the cluster like this:

shell> mysql world < /tmp/world.sql

If you save the file to a different location, adjust the preceding instructions accordingly.

It is important to note that NDB Cluster in

MySQL 5.1 does not support autodiscovery of

databases. (See Section 15.13, “Known Limitations of MySQL Cluster”.)

This means that, once the world database and

its tables have been created on one data node, you need to issue

the CREATE SCHEMA world statement.

Running SELECT queries on the SQL node is no

different from running them on any other instance of a MySQL

server. To run queries from the command line, you first need to

log in to the MySQL Monitor in the usual way (specify the

root password at the Enter

password: prompt):

shell> mysql -u root -p

Enter password:

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 1 to server version: 5.1.15-beta

Type 'help;' or '\h' for help. Type '\c' to clear the buffer.

mysql>

We simply use the MySQL server's root account

and assume that you have followed the standard security

precautions for installing a MySQL server, including setting a

strong root password. For more information,

see Section 2.10.3, “Securing the Initial MySQL Accounts”.

It is worth taking into account that Cluster nodes do not make

use of the MySQL privilege system when accessing one another.

Setting or changing MySQL user accounts (including the

root account) effects only applications that

access the SQL node, not interaction between nodes.

If you did not modify the ENGINE clauses in

the table definitions prior to importing the SQL script, you

should run the following statements at this point:

mysql>USE world;mysql>ALTER TABLE City ENGINE=NDBCLUSTER;mysql>ALTER TABLE Country ENGINE=NDBCLUSTER;mysql>ALTER TABLE CountryLanguage ENGINE=NDBCLUSTER;

Selecting a database and running a SELECT query against a table in that database is also accomplished in the usual manner, as is exiting the MySQL Monitor:

mysql>USE world;mysql>SELECT Name, Population FROM City ORDER BY Population DESC LIMIT 5;+-----------+------------+ | Name | Population | +-----------+------------+ | Bombay | 10500000 | | Seoul | 9981619 | | São Paulo | 9968485 | | Shanghai | 9696300 | | Jakarta | 9604900 | +-----------+------------+ 5 rows in set (0.34 sec) mysql>\qBye shell>

Applications that use MySQL can employ standard APIs to access

NDB tables. It is important to remember that your application

must access the SQL node, and not the MGM or data nodes. This

brief example shows how we might execute the

SELECT statement just shown by using PHP 5's

mysqli extension running on a Web server

elsewhere on the network:

<!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN"

"http://www.w3.org/TR/html4/loose.dtd">

<html>

<head>

<meta http-equiv="Content-Type"

content="text/html; charset=iso-8859-1">

<title>SIMPLE mysqli SELECT</title>

</head>

<body>

<?php

# connect to SQL node:

$link = new mysqli('192.168.0.20', 'root', 'root_password', 'world');

# parameters for mysqli constructor are:

# host, user, password, database

if( mysqli_connect_errno() )

die("Connect failed: " . mysqli_connect_error());

$query = "SELECT Name, Population

FROM City

ORDER BY Population DESC

LIMIT 5";

# if no errors...

if( $result = $link->query($query) )

{

?>

<table border="1" width="40%" cellpadding="4" cellspacing ="1">

<tbody>

<tr>

<th width="10%">City</th>

<th>Population</th>

</tr>

<?

# then display the results...

while($row = $result->fetch_object())

printf(<tr>\n <td align=\"center\">%s</td><td>%d</td>\n</tr>\n",

$row->Name, $row->Population);

?>

</tbody

</table>

<?

# ...and verify the number of rows that were retrieved

printf("<p>Affected rows: %d</p>\n", $link->affected_rows);

}

else

# otherwise, tell us what went wrong

echo mysqli_error();

# free the result set and the mysqli connection object

$result->close();

$link->close();

?>

</body>

</html>

We assume that the process running on the Web server can reach the IP address of the SQL node.

In a similar fashion, you can use the MySQL C API, Perl-DBI, Python-mysql, or MySQL AB's own Connectors to perform the tasks of data definition and manipulation just as you would normally with MySQL.

To shut down the cluster, enter the following command in a shell on the machine hosting the MGM node:

shell> ndb_mgm -e shutdown

The -e option here is used to pass a command to

the ndb_mgm client from the shell. See

Section 4.3.1, “Using Options on the Command Line”. The command causes the

ndb_mgm, ndb_mgmd, and any

ndbd processes to terminate gracefully. Any

SQL nodes can be terminated using mysqladmin

shutdown and other means.

To restart the cluster, run these commands:

On the management host (

192.168.0.10in our example setup):shell>

ndb_mgmd -f /var/lib/mysql-cluster/config.iniOn each of the data node hosts (

192.168.0.30and192.168.0.40):shell>

ndbdRemember not to invoke this command with the

--initialoption when restarting an NDBD node normally.On the SQL host (

192.168.0.20):shell>

mysqld &

For information on making Cluster backups, see Section 15.8.2, “Using The Management Client to Create a Backup”.

To restore the cluster from backup requires the use of the ndb_restore command. This is covered in Section 15.8.3, “How to Restore a Cluster Backup”.

More information on configuring MySQL Cluster can be found in Section 15.4, “MySQL Cluster Configuration”.

A MySQL server that is part of a MySQL Cluster differs in only one

respect from a normal (non-clustered) MySQL server, in that it

employs the NDB Cluster storage engine. This

engine is also referred to simply as NDB, and

the two forms of the name are synonymous.

To avoid unnecessary allocation of resources, the server is

configured by default with the NDB storage

engine disabled. To enable NDB, you must modify

the server's my.cnf configuration file, or

start the server with the --ndbcluster option.

The MySQL server is a part of the cluster, so it also must know

how to access an MGM node to obtain the cluster configuration

data. The default behavior is to look for the MGM node on

localhost. However, should you need to specify

that its location is elsewhere, this can be done in

my.cnf or on the MySQL server command line.

Before the NDB storage engine can be used, at

least one MGM node must be operational, as well as any desired

data nodes.

NDB, the Cluster storage engine, is available

in binary distributions for Linux, Mac OS X, and Solaris. We are

working to make Cluster run on all operating systems supported

by MySQL, including Windows.

If you choose to build from a source tarball or the MySQL

5.1 BitKeeper tree, be sure to use the

--with-ndbcluster option when running

configure. You can also use the

BUILD/compile-pentium-max build script. Note

that this script includes OpenSSL, so you must either have or

obtain OpenSSL to build successfully, or else modify

compile-pentium-max to exclude this

requirement. Of course, you can also just follow the standard

instructions for compiling your own binaries, and then perform

the usual tests and installation procedure. See

Section 2.9.3, “Installing from the Development Source Tree”.

In the next few sections, we assume that you are already familiar with installing MySQL, and here we cover only the differences between configuring MySQL Cluster and configuring MySQL without clustering. (See Chapter 2, Installing and Upgrading MySQL, if you require more information about the latter.)

You will find Cluster configuration easiest if you have already

have all management and data nodes running first; this is likely

to be the most time-consuming part of the configuration. Editing

the my.cnf file is fairly straightforward,

and this section will cover only any differences from

configuring MySQL without clustering.

To familiarize you with the basics, we will describe the simplest possible configuration for a functional MySQL Cluster. After this, you should be able to design your desired setup from the information provided in the other relevant sections of this chapter.

First, you need to create a configuration directory such as

/var/lib/mysql-cluster, by executing the

following command as the system root user:

shell> mkdir /var/lib/mysql-cluster

In this directory, create a file named

config.ini that contains the following

information. Substitute appropriate values for

HostName and DataDir as

necessary for your system.

# file "config.ini" - showing minimal setup consisting of 1 data node, # 1 management server, and 3 MySQL servers. # The empty default sections are not required, and are shown only for # the sake of completeness. # Data nodes must provide a hostname but MySQL Servers are not required # to do so. # If you don't know the hostname for your machine, use localhost. # The DataDir parameter also has a default value, but it is recommended to # set it explicitly. # Note: DB, API, and MGM are aliases for NDBD, MYSQLD, and NDB_MGMD # respectively. DB and API are deprecated and should not be used in new # installations. [NDBD DEFAULT] NoOfReplicas= 1 [MYSQLD DEFAULT] [NDB_MGMD DEFAULT] [TCP DEFAULT] [NDB_MGMD] HostName= myhost.example.com [NDBD] HostName= myhost.example.com DataDir= /var/lib/mysql-cluster [MYSQLD] [MYSQLD] [MYSQLD]

You can now start the ndb_mgmd management

server. By default, it atttempts to read the

config.ini file in its current working

directory, so change location into the directory where the file

is located and then invoke ndb_mgmd:

shell>cd /var/lib/mysql-clustershell>ndb_mgmd

Then start a single data node by running

ndbd. When starting ndbd

for a given data node for the very first time, you should use

the --initial option as shown here:

shell> ndbd --initial

For subsequent ndbd starts, you will

generally want to omit the

--initial option:

shell> ndbd

The reason for omitting --initial on subsequent

restarts is that this option causes ndbd to

delete and re-create all existing data and log files (as well as

all table metadata) for this data node. One exception to this

rule about not using --initial except for the

first ndbd invocation is that you use it when

restarting the cluster and restoring from backup after adding

new data nodes.

By default, ndbd looks for the management

server at localhost on port 1186.

Note: If you have installed

MySQL from a binary tarball, you will need to specify the path

of the ndb_mgmd and ndbd

servers explicitly. (Normally, these will be found in

/usr/local/mysql/bin.)

Finally, change location to the MySQL data directory (usually

/var/lib/mysql or

/usr/local/mysql/data), and make sure that

the my.cnf file contains the option

necessary to enable the NDB storage engine:

[mysqld] ndbcluster

You can now start the MySQL server as usual:

shell> mysqld_safe --user=mysql &

Wait a moment to make sure the MySQL server is running properly.

If you see the notice mysql ended, check the

server's .err file to find out what went

wrong.

If all has gone well so far, you now can start using the

cluster. Connect to the server and verify that the

NDBCLUSTER storage engine is enabled:

shell>mysqlWelcome to the MySQL monitor. Commands end with ; or \g. Your MySQL connection id is 1 to server version: 5.1.15-beta Type 'help;' or '\h' for help. Type '\c' to clear the buffer. mysql>SHOW ENGINES\G... *************************** 12. row *************************** Engine: NDBCLUSTER Support: YES Comment: Clustered, fault-tolerant, memory-based tables *************************** 13. row *************************** Engine: NDB Support: YES Comment: Alias for NDBCLUSTER ...

The row numbers shown in the preceding example output may be different from those shown on your system, depending upon how your server is configured.

Try to create an NDBCLUSTER table:

shell>mysqlmysql>USE test;Database changed mysql>CREATE TABLE ctest (i INT) ENGINE=NDBCLUSTER;Query OK, 0 rows affected (0.09 sec) mysql>SHOW CREATE TABLE ctest \G*************************** 1. row *************************** Table: ctest Create Table: CREATE TABLE `ctest` ( `i` int(11) default NULL ) ENGINE=ndbcluster DEFAULT CHARSET=latin1 1 row in set (0.00 sec)

To check that your nodes were set up properly, start the management client:

shell> ndb_mgm

Use the SHOW command from within the management client to obtain a report on the cluster's status:

NDB> SHOW

Cluster Configuration

---------------------

[ndbd(NDB)] 1 node(s)

id=2 @127.0.0.1 (Version: 3.5.3, Nodegroup: 0, Master)

[ndb_mgmd(MGM)] 1 node(s)

id=1 @127.0.0.1 (Version: 3.5.3)

[mysqld(API)] 3 node(s)

id=3 @127.0.0.1 (Version: 3.5.3)

id=4 (not connected, accepting connect from any host)

id=5 (not connected, accepting connect from any host)

At this point, you have successfully set up a working MySQL

Cluster. You can now store data in the cluster by using any

table created with ENGINE=NDBCLUSTER or its

alias ENGINE=NDB.

- 15.4.4.1. Basic Example Configuration

- 15.4.4.2. The Cluster

connectstring - 15.4.4.3. Defining Cluster Computers

- 15.4.4.4. Defining the Management Server

- 15.4.4.5. Defining Data Nodes

- 15.4.4.6. Defining SQL and Other API Nodes

- 15.4.4.7. Cluster TCP/IP Connections

- 15.4.4.8. TCP/IP Connections Using Direct Connections

- 15.4.4.9. Shared-Memory Connections

- 15.4.4.10. SCI Transport Connections

Configuring MySQL Cluster requires working with two files:

my.cnf: Specifies options for all MySQL Cluster executables. This file, with which you should be familiar with from previous work with MySQL, must be accessible by each executable running in the cluster.config.ini: This file is read only by the MySQL Cluster management server, which then distributes the information contained therein to all processes participating in the cluster.config.inicontains a description of each node involved in the cluster. This includes configuration parameters for data nodes and configuration parameters for connections between all nodes in the cluster. For a quick reference to the sections that can appear in this file, and what sorts of configuration parameters may be placed in each section, see Sections of theconfig.iniFile.

We are continuously making improvements in Cluster configuration and attempting to simplify this process. Although we strive to maintain backward compatibility, there may be times when introduce an incompatible change. In such cases we will try to let Cluster users know in advance if a change is not backward compatible. If you find such a change and we have not documented it, please report it in the MySQL bugs database using the instructions given in Section 1.8, “How to Report Bugs or Problems”.

To support MySQL Cluster, you will need to update

my.cnf as shown in the following example.

Note that the options shown here should not be confused with

those that are used in config.ini files.

You may also specify these parameters on the command line when

invoking the executables.

# my.cnf # example additions to my.cnf for MySQL Cluster # (valid in MySQL 5.1) # enable ndbcluster storage engine, and provide connectstring for # management server host (default port is 1186) [mysqld] ndbcluster ndb-connectstring=ndb_mgmd.mysql.com # provide connectstring for management server host (default port: 1186) [ndbd] connect-string=ndb_mgmd.mysql.com # provide connectstring for management server host (default port: 1186) [ndb_mgm] connect-string=ndb_mgmd.mysql.com # provide location of cluster configuration file [ndb_mgmd] config-file=/etc/config.ini

(For more information on connectstrings, see

Section 15.4.4.2, “The Cluster connectstring”.)

# my.cnf # example additions to my.cnf for MySQL Cluster # (will work on all versions) # enable ndbcluster storage engine, and provide connectstring for management # server host to the default port 1186 [mysqld] ndbcluster ndb-connectstring=ndb_mgmd.mysql.com:1186

Important: Once you have

started a mysqld process with the

ndbcluster and

ndb-connectstring parameters in the

[MYSQLD] in the my.cnf

file as shown previously, you cannot execute any

CREATE TABLE or ALTER

TABLE statements without having actually started the

cluster. Otherwise, these statements will fail with an error.

This is by design.

You may also use a separate [mysql_cluster]

section in the cluster my.cnf file for

settings to be read and used by all executables:

# cluster-specific settings [mysql_cluster] ndb-connectstring=ndb_mgmd.mysql.com:1186

For additional NDB variables that can be

set in the my.cnf file, see

Section 5.2.3, “System Variables”.

The configuration file is named

config.ini by default. It is read by

ndb_mgmd at startup and can be placed

anywhere. Its location and name are specified by using

--config-file=

on the ndb_mgmd command line. If the

configuration file is not specified,

ndb_mgmd by default tries to read a file

named path_nameconfig.ini located in the current

working directory.

Currently, the configuration file is in INI format, which

consists of sections preceded by section headings (surrounded

by square brackets), followed by the appropriate parameter

names and values. One deviation from the standard INI format

is that the parameter name and value can be separated by a

colon (‘:’) as well as the

equals sign (‘=’). Another

deviation is that sections are not uniquely identified by

section name. Instead, unique sections (such as two different

nodes of the same type) are identified by a unique ID

specified as a parameter within the section.

Default values are defined for most parameters, and can also

be specified in config.ini. To create a

default value section, simply add the word

DEFAULT to the section name. For example,

an [NDBD] section contains parameters that

apply to a particular data node, whereas an [NDBD

DEFAULT] section contains parameters that apply to

all data nodes. Suppose that all data nodes should use the

same data memory size. To configure them all, create an

[NDBD DEFAULT] section that contains a

DataMemory line to specify the data memory

size.

At a minimum, the configuration file must define the computers and nodes involved in the cluster and on which computers these nodes are located. An example of a simple configuration file for a cluster consisting of one management server, two data nodes and two MySQL servers is shown here:

# file "config.ini" - 2 data nodes and 2 SQL nodes # This file is placed in the startup directory of ndb_mgmd (the # management server) # The first MySQL Server can be started from any host. The second # can be started only on the host mysqld_5.mysql.com [NDBD DEFAULT] NoOfReplicas= 2 DataDir= /var/lib/mysql-cluster [NDB_MGMD] Hostname= ndb_mgmd.mysql.com DataDir= /var/lib/mysql-cluster [NDBD] HostName= ndbd_2.mysql.com [NDBD] HostName= ndbd_3.mysql.com [MYSQLD] [MYSQLD] HostName= mysqld_5.mysql.com

Note that each node has its own section in the

config.ini. For instance, this cluster

has two data nodes, so the preceding configuration file

contains two [NDBD] sections defining these

nodes.

Sections of the

config.ini File

There are six different sections that you can use in the

config.ini configuration file, as

described in the following list:

[COMPUTER]: Defines cluster hosts. This is not required to configure a viable MySQL Cluster, but be may used as a convenience when setting up a large cluster. See Section 15.4.4.3, “Defining Cluster Computers”, for more information.[NDBD]: Defines a cluster data node (ndbd process). See Section 15.4.4.5, “Defining Data Nodes”, for details.[MYSQLD]: Defines the cluster's MySQL server nodes (also called SQL or API nodes). For a discussion of SQL node configuration, see Section 15.4.4.6, “Defining SQL and Other API Nodes”.[MGM]or[NDB_MGMD]: Defines a cluster management server (MGM) node. For information concerning the configuration of MGM nodes, see Section 15.4.4.4, “Defining the Management Server”.[TCP]: Defines a TCP/IP connection between cluster nodes, with TCP/IP being the default connection protocol. Normally,[TCP]or[TCP DEFAULT]sections are not required to set up a MySQL Cluster, as the cluster handles this automatically; however, it may be necessary in some situations to override the defaults provided by the cluster. See Section 15.4.4.7, “Cluster TCP/IP Connections”, for information about available TCP/IP configuration parameters and how to use them. (You may also find Section 15.4.4.8, “TCP/IP Connections Using Direct Connections” to be of interest in some cases.)[SHM]: Defines shared-memory connections between nodes. In MySQL 5.1, it is enabled by default, but should still be considered experimental. For a discussion of SHM interconnects, see Section 15.4.4.9, “Shared-Memory Connections”.[SCI]:Defines Scalable Coherent Interface connections between cluster data nodes. Such connections require software which, while freely available, is not part of the MySQL Cluster distribution, as well as specialised hardware. See Section 15.4.4.10, “SCI Transport Connections” for detailed information about SCI interconnects.

You can define DEFAULT values for each

section. All Cluster parameter names are case-insensitive,

which differs from parameters specified in

my.cnf or my.ini

files.

With the exception of the MySQL Cluster management server (ndb_mgmd), each node that is part of a MySQL Cluster requires a connectstring that points to the management server's location. This connectstring is used in establishing a connection to the management server as well as in performing other tasks depending on the node's role in the cluster. The syntax for a connectstring is as follows:

<connectstring> :=

[<nodeid-specification>,]<host-specification>[,<host-specification>]

<nodeid-specification> := node_id

<host-specification> := host_name[:port_num]

node_id is an integer larger than 1 which

identifies a node in config.ini.

host_name is a string representing

a valid Internet host name or IP address.

port_num is an integer referring to

a TCP/IP port number.

example 1 (long): "nodeid=2,myhost1:1100,myhost2:1100,192.168.0.3:1200" example 2 (short): "myhost1"

All nodes will use localhost:1186 as the

default connectstring value if none is provided. If

port_num is omitted from the

connectstring, the default port is 1186. This port should

always be available on the network because it has been

assigned by IANA for this purpose (see

http://www.iana.org/assignments/port-numbers

for details).

By listing multiple

<host-specification> values, it is

possible to designate several redundant management servers. A

cluster node will attempt to contact successive management

servers on each host in the order specified, until a

successful connection has been established.

There are a number of different ways to specify the connectstring:

Each executable has its own command-line option which enables specifying the management server at startup. (See the documentation for the respective executable.)

It is also possible to set the connectstring for all nodes in the cluster at once by placing it in a

[mysql_cluster]section in the management server'smy.cnffile.For backward compatibility, two other options are available, using the same syntax:

Set the

NDB_CONNECTSTRINGenvironment variable to contain the connectstring.Write the connectstring for each executable into a text file named

Ndb.cfgand place this file in the executable's startup directory.

However, these are now deprecated and should not be used for new installations.

The recommended method for specifying the connectstring is to

set it on the command line or in the

my.cnf file for each executable.

The [COMPUTER] section has no real

significance other than serving as a way to avoid the need of

defining host names for each node in the system. All

parameters mentioned here are required.

The [NDB_MGMD] section is used to configure

the behavior of the management server.

[MGM] can be used as an alias; the two

section names are equivalent. All parameters in the following

list are optional and assume their default values if omitted.

Note: If neither the

ExecuteOnComputer nor the

HostName parameter is present, the default

value localhost will be assumed for both.

Each node in the cluster has a unique identity, which is represented by an integer value in the range 1 to 63 inclusive. This ID is used by all internal cluster messages for addressing the node.

This refers to the

Idset for one of the computers defined in a[COMPUTER]section of theconfig.inifile.This is the port number on which the management server listens for configuration requests and management commands.

Specifying this parameter defines the hostname of the computer on which the management node is to reside. To specify a hostname other than

localhost, either this parameter orExecuteOnComputeris required.This parameter specifies where to send cluster logging information. There are three options in this regard —

CONSOLE,SYSLOG, andFILE— withFILEbeing the default:CONSOLEoutputs the log tostdout:CONSOLE

SYSLOGsends the log to asyslogfacility, possible values being one ofauth,authpriv,cron,daemon,ftp,kern,lpr,mail,news,syslog,user,uucp,local0,local1,local2,local3,local4,local5,local6, orlocal7.Note: Not every facility is necessarily supported by every operating system.

SYSLOG:facility=syslog

FILEpipes the cluster log output to a regular file on the same machine. The following values can be specified:filename: The name of the log file.maxsize: The maximum size (in bytes) to which the file can grow before logging rolls over to a new file. When this occurs, the old log file is renamed by appending.Nto the filename, whereNis the next number not yet used with this name.maxfiles: The maximum number of log files.

FILE:filename=cluster.log,maxsize=1000000,maxfiles=6

The default value for the

FILEparameter isFILE:filename=ndb_, wherenode_id_cluster.log,maxsize=1000000,maxfiles=6node_idis the ID of the node.

It is possible to specify multiple log destinations separated by semicolons as shown here:

CONSOLE;SYSLOG:facility=local0;FILE:filename=/var/log/mgmd

This parameter is used to define which nodes can act as arbitrators. Only MGM nodes and SQL nodes can be arbitrators.

ArbitrationRankcan take one of the following values:0: The node will never be used as an arbitrator.1: The node has high priority; that is, it will be preferred as an arbitrator over low-priority nodes.2: Indicates a low-priority node which be used as an arbitrator only if a node with a higher priority is not available for that purpose.

Normally, the management server should be configured as an arbitrator by setting its

ArbitrationRankto 1 (the default value) and that of all SQL nodes to 0.An integer value which causes the management server's responses to arbitration requests to be delayed by that number of milliseconds. By default, this value is 0; it is normally not necessary to change it.

This specifies the directory where output files from the management server will be placed. These files include cluster log files, process output files, and the daemon's process ID (PID) file. (For log files, this location can be overridden by setting the

FILEparameter forLogDestinationas discussed previously in this section.)The default value for this parameter is the directory in which ndb_mgmd is located.

The [NDBD] and [NDBD DEFAULT] sections are

used to configure the behavior of the cluster's data nodes.

There are many parameters which control buffer sizes, pool

sizes, timeouts, and so forth. The only mandatory parameters

are:

Either

ExecuteOnComputerorHostName, which must be defined in the local[NDBD]section.The parameter

NoOfReplicas, which must be defined in the [NDBD DEFAULT] section, as it is common to all Cluster data nodes.

Most data node parameters are set in the [NDBD

DEFAULT] section. Only those parameters explicitly

stated as being able to set local values are allowed to be

changed in the [NDBD] section. Where

present, HostName, Id

and ExecuteOnComputer

must be defined in the local

[NDBD] section, and not in any other

section of config.ini. In other words,

settings for these parameters are specific to one data node.

For those parameters affecting memory usage or buffer sizes,

it is possible to use K,

M, or G as a suffix to

indicate units of 1024, 1024×1024, or

1024×1024×1024. (For example,

100K means 100 × 1024 = 102400.)

Parameter names and values are currently case-sensitive.

The Id value (that is, the data node

identifier) can be allocated on the command line when the node

is started or in the configuration file.

This is the node ID used as the address of the node for all cluster internal messages. This is an integer in the range 1 to 63 inclusive. Each node in the cluster must have a unique identity.

This refers to the

Idset for one of the computers defined in a[COMPUTER]section.Specifying this parameter defines the hostname of the computer on which the data node is to reside. To specify a hostname other than

localhost, either this parameter orExecuteOnComputeris required.Each node in the cluster uses a port to connect to other nodes. This port is used also for non-TCP transporters in the connection setup phase. The default port is allocated dynamically in such a way as to ensure that no two nodes on the same computer receive the same port number, so it should not normally be necessary to specify a value for this parameter.

This global parameter can be set only in the

[NDBD DEFAULT]section, and defines the number of replicas for each table stored in the cluster. This parameter also specifies the size of node groups. A node group is a set of nodes all storing the same information.Node groups are formed implicitly. The first node group is formed by the set of data nodes with the lowest node IDs, the next node group by the set of the next lowest node identities, and so on. By way of example, assume that we have 4 data nodes and that

NoOfReplicasis set to 2. The four data nodes have node IDs 2, 3, 4 and 5. Then the first node group is formed from nodes 2 and 3, and the second node group by nodes 4 and 5. It is important to configure the cluster in such a manner that nodes in the same node groups are not placed on the same computer because a single hardware failure would cause the entire cluster to crash.If no node IDs are provided, the order of the data nodes will be the determining factor for the node group. Whether or not explicit assignments are made, they can be viewed in the output of the management client's

SHOWstatement.There is no default value for

NoOfReplicas; the maximum possible value is 4.Important: The value for this parameter must divide evenly into the number of data nodes in the cluster. For example, if there are two data nodes, then

NoOfReplicasmust be equal to either 1 or 2, since 2/3 and 2/4 both yield fractional values; if there are four data nodes, thenNoOfReplicasmust be equal to 1, 2, or 4.This parameter specifies the directory where trace files, log files, pid files and error logs are placed.

This parameter specifies the directory where all files created for metadata, REDO logs, UNDO logs and data files are placed. The default is the directory specified by

DataDir. Note: This directory must exist before the ndbd process is initiated.The recommended directory hierarchy for MySQL Cluster includes

/var/lib/mysql-cluster, under which a directory for the node's filesystem is created. The name of this subdirectory contains the node ID. For example, if the node ID is 2, this subdirectory is namedndb_2_fs.This parameter specifies the directory in which backups are placed. If omitted, the default backup location is the directory named

BACKUPunder the location specified by theFileSystemPathparameter. (See above.)

Data Memory, Index Memory, and String Memory

DataMemory and

IndexMemory are [NDBD]

parameters specifying the size of memory segments used to

store the actual records and their indexes. In setting values

for these, it is important to understand how

DataMemory and

IndexMemory are used, as they usually need

to be updated to reflect actual usage by the cluster:

This parameter defines the amount of space (in bytes) available for storing database records. The entire amount specified by this value is allocated in memory, so it is extremely important that the machine has sufficient physical memory to accommodate it.

The memory allocated by

DataMemoryis used to store both the actual records and indexes. There is a 16-byte overhead on each record; an additional amount for each record is incurred because it is stored in a 32KB page with 128 byte page overhead (see below). There is also a small amount wasted per page due to the fact that each record is stored in only one page.For variable-size table attributes in MySQL 5.1, the data is stored on separate datapages, allocated from

DataMemory. Variable-length records use a fixed-size part with an extra overhead of 4 bytes to reference the variable-size part. The variable-size part has 2 bytes overhead plus 2 bytes per attribute.The maximum record size is currently 8052 bytes.

The memory space defined by

DataMemoryis also used to store ordered indexes, which use about 10 bytes per record. Each table row is represented in the ordered index. A common error among users is to assume that all indexes are stored in the memory allocated byIndexMemory, but this is not the case: Only primary key and unique hash indexes use this memory; ordered indexes use the memory allocated byDataMemory. However, creating a primary key or unique hash index also creates an ordered index on the same keys, unless you specifyUSING HASHin the index creation statement. This can be verified by running ndb_desc -ddb_nametable_namein the management client.The memory space allocated by