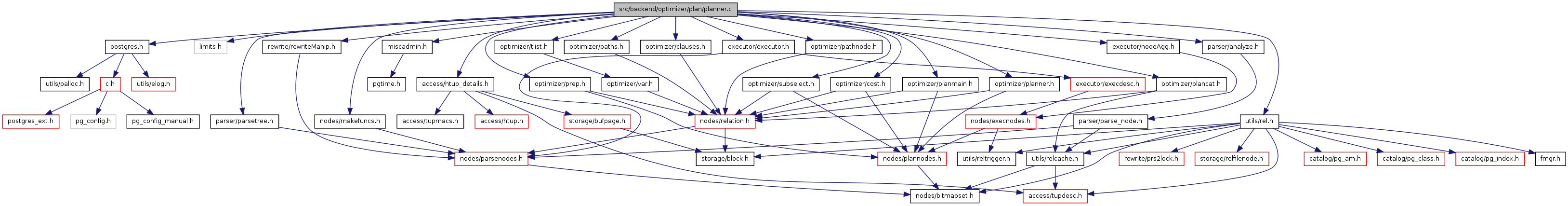

#include "postgres.h"
#include <limits.h>
#include "access/htup_details.h"
#include "executor/executor.h"
#include "executor/nodeAgg.h"
#include "miscadmin.h"
#include "nodes/makefuncs.h"
#include "optimizer/clauses.h"
#include "optimizer/cost.h"
#include "optimizer/pathnode.h"
#include "optimizer/paths.h"
#include "optimizer/plancat.h"
#include "optimizer/planmain.h"
#include "optimizer/planner.h"
#include "optimizer/prep.h"
#include "optimizer/subselect.h"
#include "optimizer/tlist.h"
#include "parser/analyze.h"
#include "parser/parsetree.h"
#include "rewrite/rewriteManip.h"
#include "utils/rel.h"

| Data Structures | |
| --- | --- |
| struct | standard_qp_extra |

| Defines | |
| --- | --- |
| #define | EXPRKIND_QUAL 0 |
| #define | EXPRKIND_TARGET 1 |
| #define | EXPRKIND_RTFUNC 2 |
| #define | EXPRKIND_RTFUNC_LATERAL 3 |
| #define | EXPRKIND_VALUES 4 |
| #define | EXPRKIND_VALUES_LATERAL 5 |
| #define | EXPRKIND_LIMIT 6 |
| #define | EXPRKIND_APPINFO 7 |
| #define | EXPRKIND_PHV 8 |

| Functions | |
| --- | --- |
| static Node * | preprocess_expression (PlannerInfo *root, Node *expr, int kind) |
| static void | preprocess_qual_conditions (PlannerInfo *root, Node *jtnode) |
| static Plan * | inheritance_planner (PlannerInfo *root) |
| static Plan * | grouping_planner (PlannerInfo *root, double tuple_fraction) |
| static void | preprocess_rowmarks (PlannerInfo *root) |
| static double | preprocess_limit (PlannerInfo *root, double tuple_fraction, int64 *offset_est, int64 *count_est) |
| static bool | limit_needed (Query *parse) |
| static void | preprocess_groupclause (PlannerInfo *root) |
| static void | standard_qp_callback (PlannerInfo *root, void *extra) |
| static bool | choose_hashed_grouping (PlannerInfo *root, double tuple_fraction, double limit_tuples, double path_rows, int path_width, Path *cheapest_path, Path *sorted_path, double dNumGroups, AggClauseCosts *agg_costs) |
| static bool | choose_hashed_distinct (PlannerInfo *root, double tuple_fraction, double limit_tuples, double path_rows, int path_width, Cost cheapest_startup_cost, Cost cheapest_total_cost, Cost sorted_startup_cost, Cost sorted_total_cost, List *sorted_pathkeys, double dNumDistinctRows) |
| static List * | make_subplanTargetList (PlannerInfo *root, List *tlist, AttrNumber **groupColIdx, bool *need_tlist_eval) |
| static int | get_grouping_column_index (Query *parse, TargetEntry *tle) |
| static void | locate_grouping_columns (PlannerInfo *root, List *tlist, List *sub_tlist, AttrNumber *groupColIdx) |
| static List * | postprocess_setop_tlist (List *new_tlist, List *orig_tlist) |
| static List * | select_active_windows (PlannerInfo *root, WindowFuncLists *wflists) |
| static List * | make_windowInputTargetList (PlannerInfo *root, List *tlist, List *activeWindows) |
| static List * | make_pathkeys_for_window (PlannerInfo *root, WindowClause *wc, List *tlist) |
| static void | get_column_info_for_window (PlannerInfo *root, WindowClause *wc, List *tlist, int numSortCols, AttrNumber *sortColIdx, int *partNumCols, AttrNumber **partColIdx, Oid **partOperators, int *ordNumCols, AttrNumber **ordColIdx, Oid **ordOperators) |
| PlannedStmt * | planner (Query *parse, int cursorOptions, ParamListInfo boundParams) |
| PlannedStmt * | standard_planner (Query *parse, int cursorOptions, ParamListInfo boundParams) |
| Plan * | subquery_planner (PlannerGlobal *glob, Query *parse, PlannerInfo *parent_root, bool hasRecursion, double tuple_fraction, PlannerInfo **subroot) |
| Expr * | preprocess_phv_expression (PlannerInfo *root, Expr *expr) |
| void | add_tlist_costs_to_plan (PlannerInfo *root, Plan *plan, List *tlist) |
| bool | is_dummy_plan (Plan *plan) |
| static Bitmapset * | get_base_rel_indexes (Node *jtnode) |
| Expr * | expression_planner (Expr *expr) |
| bool | plan_cluster_use_sort (Oid tableOid, Oid indexOid) |

| Variables | |
| --- | --- |
| double | cursor_tuple_fraction = DEFAULT_CURSOR_TUPLE_FRACTION |
| planner_hook_type | planner_hook = NULL |

| #define EXPRKIND_LIMIT 6 |

Definition at line 58 of file planner.c.

Referenced by subquery_planner().

| #define EXPRKIND_PHV 8 |

Definition at line 60 of file planner.c.

Referenced by preprocess_phv_expression().

| #define EXPRKIND_QUAL 0 |

Definition at line 52 of file planner.c.

Referenced by preprocess_expression(), preprocess_qual_conditions(), and subquery_planner().

| #define EXPRKIND_RTFUNC 2 |

Definition at line 54 of file planner.c.

Referenced by preprocess_expression().

| #define EXPRKIND_RTFUNC_LATERAL 3 |

Definition at line 55 of file planner.c.

Referenced by subquery_planner().

| #define EXPRKIND_VALUES 4 |

Definition at line 56 of file planner.c.

Referenced by preprocess_expression().

| #define EXPRKIND_VALUES_LATERAL 5 |

Definition at line 57 of file planner.c.

Referenced by subquery_planner().

void add_tlist_costs_to_plan(PlannerInfo *root, Plan *plan, List *tlist)

Definition at line 1811 of file planner.c.

References cost_qual_eval(), cpu_tuple_cost, QualCost::per_tuple, Plan::plan_rows, QualCost::startup, Plan::startup_cost, tlist_returns_set_rows(), and Plan::total_cost.

Referenced by grouping_planner(), make_agg(), make_group(), make_windowagg(), and optimize_minmax_aggregates().

{
    QualCost tlist_cost;
    double tlist_rows;

    cost_qual_eval(&tlist_cost, tlist, root);
    plan->startup_cost += tlist_cost.startup;
    plan->total_cost += tlist_cost.startup +
        tlist_cost.per_tuple * plan->plan_rows;

    tlist_rows = tlist_returns_set_rows(tlist);
    if (tlist_rows > 1)
    {
        /*
         * We assume that execution costs of the tlist proper were all
         * accounted for by cost_qual_eval. However, it still seems
         * appropriate to charge something more for the executor's general
         * costs of processing the added tuples. The cost is probably less
         * than cpu_tuple_cost, though, so we arbitrarily use half of that.
         */
        plan->total_cost += plan->plan_rows * (tlist_rows - 1) *
            cpu_tuple_cost / 2;

        plan->plan_rows *= tlist_rows;
    }
}
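The arithmetic in add_tlist_costs_to_plan() can be traced with a small standalone sketch. `SketchPlan`, `SketchQualCost` and `sketch_add_tlist_costs` are hypothetical stand-ins, not PostgreSQL names; the evaluation cost and the set-returning row count are passed in directly instead of being computed by cost_qual_eval() and tlist_returns_set_rows().

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-ins for the planner's Plan and QualCost structs. */
typedef struct SketchPlan
{
    double startup_cost;
    double total_cost;
    double plan_rows;
} SketchPlan;

typedef struct SketchQualCost
{
    double startup;
    double per_tuple;
} SketchQualCost;

/*
 * Apply the same cost adjustments as add_tlist_costs_to_plan().
 * tlist_cost and tlist_rows are supplied by the caller in this sketch.
 */
static void
sketch_add_tlist_costs(SketchPlan *plan, SketchQualCost tlist_cost,
                       double tlist_rows, double cpu_tuple_cost)
{
    assert(plan != NULL);

    plan->startup_cost += tlist_cost.startup;
    plan->total_cost += tlist_cost.startup +
        tlist_cost.per_tuple * plan->plan_rows;

    if (tlist_rows > 1)
    {
        /* Charge half of cpu_tuple_cost for each extra tuple produced. */
        plan->total_cost += plan->plan_rows * (tlist_rows - 1) *
            cpu_tuple_cost / 2;
        plan->plan_rows *= tlist_rows;
    }
}
```

With a plan of 100 rows and a target list that expands each row into 4, the row estimate becomes 400 and the extra charge covers the 300 added tuples at half of `cpu_tuple_cost` each.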

static bool choose_hashed_distinct(PlannerInfo *root, double tuple_fraction, double limit_tuples, double path_rows, int path_width, Cost cheapest_startup_cost, Cost cheapest_total_cost, Cost sorted_startup_cost, Cost sorted_total_cost, List *sorted_pathkeys, double dNumDistinctRows)

Definition at line 2636 of file planner.c.

References AGG_HASHED, compare_fractional_path_costs(), cost_agg(), cost_group(), cost_sort(), PlannerInfo::distinct_pathkeys, Query::distinctClause, enable_hashagg, ereport, errcode(), errdetail(), errmsg(), ERROR, grouping_is_hashable(), grouping_is_sortable(), Query::hasDistinctOn, list_length(), MAXALIGN, NULL, PlannerInfo::parse, parse(), pathkeys_contained_in(), PlannerInfo::sort_pathkeys, Query::sortClause, Path::startup_cost, Path::total_cost, and work_mem.

Referenced by grouping_planner().

{
    Query *parse = root->parse;
    int numDistinctCols = list_length(parse->distinctClause);
    bool can_sort;
    bool can_hash;
    Size hashentrysize;
    List *current_pathkeys;
    List *needed_pathkeys;
    Path hashed_p;
    Path sorted_p;

    /*
     * If we have a sortable DISTINCT ON clause, we always use sorting. This
     * enforces the expected behavior of DISTINCT ON.
     */
    can_sort = grouping_is_sortable(parse->distinctClause);
    if (can_sort && parse->hasDistinctOn)
        return false;

    can_hash = grouping_is_hashable(parse->distinctClause);

    /* Quick out if only one choice is workable */
    if (!(can_hash && can_sort))
    {
        if (can_hash)
            return true;
        else if (can_sort)
            return false;
        else
            ereport(ERROR,
                    (errcode(ERRCODE_FEATURE_NOT_SUPPORTED),
                     errmsg("could not implement DISTINCT"),
                     errdetail("Some of the datatypes only support hashing, while others only support sorting.")));
    }

    /* Prefer sorting when enable_hashagg is off */
    if (!enable_hashagg)
        return false;

    /*
     * Don't do it if it doesn't look like the hashtable will fit into
     * work_mem.
     */
    hashentrysize = MAXALIGN(path_width) + MAXALIGN(sizeof(MinimalTupleData));

    if (hashentrysize * dNumDistinctRows > work_mem * 1024L)
        return false;

    /*
     * See if the estimated cost is no more than doing it the other way. While
     * avoiding the need for sorted input is usually a win, the fact that the
     * output won't be sorted may be a loss; so we need to do an actual cost
     * comparison.
     *
     * We need to consider cheapest_path + hashagg [+ final sort] versus
     * sorted_path [+ sort] + group [+ final sort] where brackets indicate a
     * step that may not be needed.
     *
     * These path variables are dummies that just hold cost fields; we don't
     * make actual Paths for these steps.
     */
    cost_agg(&hashed_p, root, AGG_HASHED, NULL,
             numDistinctCols, dNumDistinctRows,
             cheapest_startup_cost, cheapest_total_cost,
             path_rows);

    /*
     * Result of hashed agg is always unsorted, so if ORDER BY is present we
     * need to charge for the final sort.
     */
    if (parse->sortClause)
        cost_sort(&hashed_p, root, root->sort_pathkeys, hashed_p.total_cost,
                  dNumDistinctRows, path_width,
                  0.0, work_mem, limit_tuples);

    /*
     * Now for the GROUP case. See comments in grouping_planner about the
     * sorting choices here --- this code should match that code.
     */
    sorted_p.startup_cost = sorted_startup_cost;
    sorted_p.total_cost = sorted_total_cost;
    current_pathkeys = sorted_pathkeys;
    if (parse->hasDistinctOn &&
        list_length(root->distinct_pathkeys) <
        list_length(root->sort_pathkeys))
        needed_pathkeys = root->sort_pathkeys;
    else
        needed_pathkeys = root->distinct_pathkeys;
    if (!pathkeys_contained_in(needed_pathkeys, current_pathkeys))
    {
        if (list_length(root->distinct_pathkeys) >=
            list_length(root->sort_pathkeys))
            current_pathkeys = root->distinct_pathkeys;
        else
            current_pathkeys = root->sort_pathkeys;
        cost_sort(&sorted_p, root, current_pathkeys, sorted_p.total_cost,
                  path_rows, path_width,
                  0.0, work_mem, -1.0);
    }
    cost_group(&sorted_p, root, numDistinctCols, dNumDistinctRows,
               sorted_p.startup_cost, sorted_p.total_cost,
               path_rows);
    if (parse->sortClause &&
        !pathkeys_contained_in(root->sort_pathkeys, current_pathkeys))
        cost_sort(&sorted_p, root, root->sort_pathkeys, sorted_p.total_cost,
                  dNumDistinctRows, path_width,
                  0.0, work_mem, limit_tuples);

    /*
     * Now make the decision using the top-level tuple fraction. First we
     * have to convert an absolute count (LIMIT) into fractional form.
     */
    if (tuple_fraction >= 1.0)
        tuple_fraction /= dNumDistinctRows;

    if (compare_fractional_path_costs(&hashed_p, &sorted_p,
                                      tuple_fraction) < 0)
    {
        /* Hashed is cheaper, so use it */
        return true;
    }
    return false;
}
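The work_mem test in choose_hashed_distinct() can be illustrated in isolation. The sketch below is not PostgreSQL code: `SKETCH_MAXALIGN` assumes an 8-byte maximum alignment, and the tuple header size is a caller-supplied parameter standing in for `sizeof(MinimalTupleData)`, whose real value depends on the platform and server version.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Assumption: 8-byte maximum alignment, as on most 64-bit platforms. */
#define SKETCH_MAXIMUM_ALIGNOF 8
#define SKETCH_MAXALIGN(len) \
    (((size_t) (len) + (SKETCH_MAXIMUM_ALIGNOF - 1)) & \
     ~((size_t) (SKETCH_MAXIMUM_ALIGNOF - 1)))

/*
 * Return true if a hash table holding num_distinct_rows entries is
 * estimated to fit in work_mem_kb kilobytes, using the same comparison
 * as choose_hashed_distinct().
 */
static bool
sketch_hash_table_fits(int path_width, size_t tuple_header_size,
                       double num_distinct_rows, int work_mem_kb)
{
    size_t hashentrysize;

    assert(path_width >= 0);

    hashentrysize = SKETCH_MAXALIGN(path_width) +
        SKETCH_MAXALIGN(tuple_header_size);

    return !(hashentrysize * num_distinct_rows > work_mem_kb * 1024L);
}
```

For a 36-byte row and an illustrative 16-byte header, each entry is estimated at 56 bytes, so 10000 distinct rows fit within 1024 kB while 20000 do not.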

static bool choose_hashed_grouping(PlannerInfo *root, double tuple_fraction, double limit_tuples, double path_rows, int path_width, Path *cheapest_path, Path *sorted_path, double dNumGroups, AggClauseCosts *agg_costs)

Definition at line 2466 of file planner.c.

References AGG_HASHED, AGG_SORTED, compare_fractional_path_costs(), cost_agg(), cost_group(), cost_sort(), PlannerInfo::distinct_pathkeys, enable_hashagg, ereport, errcode(), errdetail(), errmsg(), ERROR, PlannerInfo::group_pathkeys, Query::groupClause, grouping_is_hashable(), grouping_is_sortable(), Query::hasAggs, hash_agg_entry_size(), list_length(), MAXALIGN, AggClauseCosts::numAggs, AggClauseCosts::numOrderedAggs, PlannerInfo::parse, parse(), Path::pathkeys, pathkeys_contained_in(), PlannerInfo::sort_pathkeys, Path::startup_cost, Path::total_cost, AggClauseCosts::transitionSpace, and work_mem.

Referenced by grouping_planner().

{
    Query *parse = root->parse;
    int numGroupCols = list_length(parse->groupClause);
    bool can_hash;
    bool can_sort;
    Size hashentrysize;
    List *target_pathkeys;
    List *current_pathkeys;
    Path hashed_p;
    Path sorted_p;

    /*
     * Executor doesn't support hashed aggregation with DISTINCT or ORDER BY
     * aggregates. (Doing so would imply storing *all* the input values in
     * the hash table, and/or running many sorts in parallel, either of which
     * seems like a certain loser.)
     */
    can_hash = (agg_costs->numOrderedAggs == 0 &&
                grouping_is_hashable(parse->groupClause));
    can_sort = grouping_is_sortable(parse->groupClause);

    /* Quick out if only one choice is workable */
    if (!(can_hash && can_sort))
    {
        if (can_hash)
            return true;
        else if (can_sort)
            return false;
        else
            ereport(ERROR,
                    (errcode(ERRCODE_FEATURE_NOT_SUPPORTED),
                     errmsg("could not implement GROUP BY"),
                     errdetail("Some of the datatypes only support hashing, while others only support sorting.")));
    }

    /* Prefer sorting when enable_hashagg is off */
    if (!enable_hashagg)
        return false;

    /*
     * Don't do it if it doesn't look like the hashtable will fit into
     * work_mem.
     */

    /* Estimate per-hash-entry space at tuple width... */
    hashentrysize = MAXALIGN(path_width) + MAXALIGN(sizeof(MinimalTupleData));
    /* plus space for pass-by-ref transition values... */
    hashentrysize += agg_costs->transitionSpace;
    /* plus the per-hash-entry overhead */
    hashentrysize += hash_agg_entry_size(agg_costs->numAggs);

    if (hashentrysize * dNumGroups > work_mem * 1024L)
        return false;

    /*
     * When we have both GROUP BY and DISTINCT, use the more-rigorous of
     * DISTINCT and ORDER BY as the assumed required output sort order. This
     * is an oversimplification because the DISTINCT might get implemented via
     * hashing, but it's not clear that the case is common enough (or that our
     * estimates are good enough) to justify trying to solve it exactly.
     */
    if (list_length(root->distinct_pathkeys) >
        list_length(root->sort_pathkeys))
        target_pathkeys = root->distinct_pathkeys;
    else
        target_pathkeys = root->sort_pathkeys;

    /*
     * See if the estimated cost is no more than doing it the other way. While
     * avoiding the need for sorted input is usually a win, the fact that the
     * output won't be sorted may be a loss; so we need to do an actual cost
     * comparison.
     *
     * We need to consider cheapest_path + hashagg [+ final sort] versus
     * either cheapest_path [+ sort] + group or agg [+ final sort] or
     * presorted_path + group or agg [+ final sort] where brackets indicate a
     * step that may not be needed. We assume query_planner() will have
     * returned a presorted path only if it's a winner compared to
     * cheapest_path for this purpose.
     *
     * These path variables are dummies that just hold cost fields; we don't
     * make actual Paths for these steps.
     */
    cost_agg(&hashed_p, root, AGG_HASHED, agg_costs,
             numGroupCols, dNumGroups,
             cheapest_path->startup_cost, cheapest_path->total_cost,
             path_rows);
    /* Result of hashed agg is always unsorted */
    if (target_pathkeys)
        cost_sort(&hashed_p, root, target_pathkeys, hashed_p.total_cost,
                  dNumGroups, path_width,
                  0.0, work_mem, limit_tuples);

    if (sorted_path)
    {
        sorted_p.startup_cost = sorted_path->startup_cost;
        sorted_p.total_cost = sorted_path->total_cost;
        current_pathkeys = sorted_path->pathkeys;
    }
    else
    {
        sorted_p.startup_cost = cheapest_path->startup_cost;
        sorted_p.total_cost = cheapest_path->total_cost;
        current_pathkeys = cheapest_path->pathkeys;
    }
    if (!pathkeys_contained_in(root->group_pathkeys, current_pathkeys))
    {
        cost_sort(&sorted_p, root, root->group_pathkeys, sorted_p.total_cost,
                  path_rows, path_width,
                  0.0, work_mem, -1.0);
        current_pathkeys = root->group_pathkeys;
    }

    if (parse->hasAggs)
        cost_agg(&sorted_p, root, AGG_SORTED, agg_costs,
                 numGroupCols, dNumGroups,
                 sorted_p.startup_cost, sorted_p.total_cost,
                 path_rows);
    else
        cost_group(&sorted_p, root, numGroupCols, dNumGroups,
                   sorted_p.startup_cost, sorted_p.total_cost,
                   path_rows);
    /* The Agg or Group node will preserve ordering */
    if (target_pathkeys &&
        !pathkeys_contained_in(target_pathkeys, current_pathkeys))
        cost_sort(&sorted_p, root, target_pathkeys, sorted_p.total_cost,
                  dNumGroups, path_width,
                  0.0, work_mem, limit_tuples);

    /*
     * Now make the decision using the top-level tuple fraction. First we
     * have to convert an absolute count (LIMIT) into fractional form.
     */
    if (tuple_fraction >= 1.0)
        tuple_fraction /= dNumGroups;

    if (compare_fractional_path_costs(&hashed_p, &sorted_p,
                                      tuple_fraction) < 0)
    {
        /* Hashed is cheaper, so use it */
        return true;
    }
    return false;
}
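The final decision step, converting an absolute LIMIT count into a fraction and then comparing the two dummy paths, can be sketched as follows. This is a simplified model, not the planner's code: `SketchPath` and both functions are hypothetical, and the interpolation between startup and total cost is an assumption about how compare_fractional_path_costs() weighs a partial fetch.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-in for the cost fields of a Path. */
typedef struct SketchPath
{
    double startup_cost;
    double total_cost;
} SketchPath;

/*
 * Estimated cost of fetching the given fraction of a path's output.
 * Assumption: a fraction outside (0, 1) means "fetch everything";
 * otherwise cost grows linearly from startup_cost to total_cost.
 */
static double
sketch_fractional_cost(const SketchPath *p, double fraction)
{
    assert(p != NULL);

    if (fraction <= 0.0 || fraction >= 1.0)
        return p->total_cost;
    return p->startup_cost + fraction * (p->total_cost - p->startup_cost);
}

/* Return true if the hashed alternative is strictly cheaper. */
static bool
sketch_prefer_hashed(const SketchPath *hashed, const SketchPath *sorted,
                     double tuple_fraction, double num_groups)
{
    assert(num_groups > 0);

    /* An absolute row count (LIMIT) is converted to a fraction first. */
    if (tuple_fraction >= 1.0)
        tuple_fraction /= num_groups;

    return sketch_fractional_cost(hashed, tuple_fraction) <
        sketch_fractional_cost(sorted, tuple_fraction);
}
```

Under these assumptions a hashed plan with high startup cost can win when all groups are fetched yet lose under a small LIMIT, because the sorted plan can start returning groups early.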

Expr * expression_planner(Expr *expr)

Definition at line 3414 of file planner.c.

References eval_const_expressions(), fix_opfuncids(), and NULL.

Referenced by ATExecAddColumn(), ATPrepAlterColumnType(), BeginCopyFrom(), CheckMutability(), ExecPrepareExpr(), and GetDomainConstraints().

{
    Node *result;

    /*
     * Convert named-argument function calls, insert default arguments and
     * simplify constant subexprs
     */
    result = eval_const_expressions(NULL, (Node *) expr);

    /* Fill in opfuncid values if missing */
    fix_opfuncids(result);

    return (Expr *) result;
}

static Bitmapset * get_base_rel_indexes(Node *jtnode)

Definition at line 1877 of file planner.c.

References bms_join(), bms_make_singleton(), elog, ERROR, FromExpr::fromlist, IsA, JoinExpr::larg, lfirst, nodeTag, NULL, and JoinExpr::rarg.

Referenced by preprocess_rowmarks().

{
    Bitmapset *result;

    if (jtnode == NULL)
        return NULL;
    if (IsA(jtnode, RangeTblRef))
    {
        int varno = ((RangeTblRef *) jtnode)->rtindex;

        result = bms_make_singleton(varno);
    }
    else if (IsA(jtnode, FromExpr))
    {
        FromExpr *f = (FromExpr *) jtnode;
        ListCell *l;

        result = NULL;
        foreach(l, f->fromlist)
            result = bms_join(result,
                              get_base_rel_indexes(lfirst(l)));
    }
    else if (IsA(jtnode, JoinExpr))
    {
        JoinExpr *j = (JoinExpr *) jtnode;

        result = bms_join(get_base_rel_indexes(j->larg),
                          get_base_rel_indexes(j->rarg));
    }
    else
    {
        elog(ERROR, "unrecognized node type: %d",
             (int) nodeTag(jtnode));
        result = NULL; /* keep compiler quiet */
    }
    return result;
}
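The recursion in get_base_rel_indexes() can be modelled without the PostgreSQL node machinery. In the sketch below every type and function name is hypothetical: a tagged struct replaces `RangeTblRef`, `FromExpr` and `JoinExpr`, and a 32-bit mask replaces `Bitmapset`, so range table indexes are assumed to be below 32.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, simplified jointree node kinds. */
typedef enum SketchTag
{
    SK_RANGETBLREF,
    SK_FROMEXPR,
    SK_JOINEXPR
} SketchTag;

typedef struct SketchNode
{
    SketchTag tag;
    int rtindex;                    /* used by SK_RANGETBLREF */
    struct SketchNode **children;   /* used by SK_FROMEXPR */
    size_t nchildren;
    struct SketchNode *larg;        /* used by SK_JOINEXPR */
    struct SketchNode *rarg;
} SketchNode;

/* Collect the range table indexes of all leaves under jtnode. */
static uint32_t
sketch_base_rel_indexes(const SketchNode *jtnode)
{
    uint32_t result = 0;
    size_t i;

    if (jtnode == NULL)
        return 0;

    switch (jtnode->tag)
    {
        case SK_RANGETBLREF:
            assert(jtnode->rtindex >= 0 && jtnode->rtindex < 32);
            result = UINT32_C(1) << jtnode->rtindex;
            break;
        case SK_FROMEXPR:
            for (i = 0; i < jtnode->nchildren; i++)
                result |= sketch_base_rel_indexes(jtnode->children[i]);
            break;
        case SK_JOINEXPR:
            result = sketch_base_rel_indexes(jtnode->larg) |
                sketch_base_rel_indexes(jtnode->rarg);
            break;
    }
    return result;
}
```

A FROM list containing a join of relations 1 and 2 plus a separate relation 4 yields a mask with bits 1, 2 and 4 set.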

static void get_column_info_for_window(PlannerInfo *root, WindowClause *wc, List *tlist, int numSortCols, AttrNumber *sortColIdx, int *partNumCols, AttrNumber **partColIdx, Oid **partOperators, int *ordNumCols, AttrNumber **ordColIdx, Oid **ordOperators)

Definition at line 3309 of file planner.c.

References elog, SortGroupClause::eqop, ERROR, extract_grouping_ops(), lappend(), lfirst, list_length(), make_pathkeys_for_sortclauses(), WindowClause::orderClause, palloc(), and WindowClause::partitionClause.

Referenced by grouping_planner().

{
    int numPart = list_length(wc->partitionClause);
    int numOrder = list_length(wc->orderClause);

    if (numSortCols == numPart + numOrder)
    {
        /* easy case */
        *partNumCols = numPart;
        *partColIdx = sortColIdx;
        *partOperators = extract_grouping_ops(wc->partitionClause);
        *ordNumCols = numOrder;
        *ordColIdx = sortColIdx + numPart;
        *ordOperators = extract_grouping_ops(wc->orderClause);
    }
    else
    {
        List *sortclauses;
        List *pathkeys;
        int scidx;
        ListCell *lc;

        /* first, allocate what's certainly enough space for the arrays */
        *partNumCols = 0;
        *partColIdx = (AttrNumber *) palloc(numPart * sizeof(AttrNumber));
        *partOperators = (Oid *) palloc(numPart * sizeof(Oid));
        *ordNumCols = 0;
        *ordColIdx = (AttrNumber *) palloc(numOrder * sizeof(AttrNumber));
        *ordOperators = (Oid *) palloc(numOrder * sizeof(Oid));
        sortclauses = NIL;
        pathkeys = NIL;
        scidx = 0;
        foreach(lc, wc->partitionClause)
        {
            SortGroupClause *sgc = (SortGroupClause *) lfirst(lc);
            List *new_pathkeys;

            sortclauses = lappend(sortclauses, sgc);
            new_pathkeys = make_pathkeys_for_sortclauses(root,
                                                         sortclauses,
                                                         tlist);
            if (list_length(new_pathkeys) > list_length(pathkeys))
            {
                /* this sort clause is actually significant */
                (*partColIdx)[*partNumCols] = sortColIdx[scidx++];
                (*partOperators)[*partNumCols] = sgc->eqop;
                (*partNumCols)++;
                pathkeys = new_pathkeys;
            }
        }
        foreach(lc, wc->orderClause)
        {
            SortGroupClause *sgc = (SortGroupClause *) lfirst(lc);
            List *new_pathkeys;

            sortclauses = lappend(sortclauses, sgc);
            new_pathkeys = make_pathkeys_for_sortclauses(root,
                                                         sortclauses,
                                                         tlist);
            if (list_length(new_pathkeys) > list_length(pathkeys))
            {
                /* this sort clause is actually significant */
                (*ordColIdx)[*ordNumCols] = sortColIdx[scidx++];
                (*ordOperators)[*ordNumCols] = sgc->eqop;
                (*ordNumCols)++;
                pathkeys = new_pathkeys;
            }
        }
        /* complain if we didn't eat exactly the right number of sort cols */
        if (scidx != numSortCols)
            elog(ERROR, "failed to deconstruct sort operators into partitioning/ordering operators");
    }
}
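The harder branch of get_column_info_for_window() hands out sort columns only to clauses that actually lengthen the pathkey list. The sketch below models that idea with plain integers and is not the planner's algorithm: a clause counts as significant only if its key has not been seen earlier, which is a deliberately simplified stand-in for the redundancy detection in make_pathkeys_for_sortclauses(). All names, and the `SKETCH_MAX_KEYS` bound, are hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

#define SKETCH_MAX_KEYS 32

/* Return true if key appears among the first n entries of seen[]. */
static bool
sketch_key_seen(const int *seen, int n, int key)
{
    int i;

    for (i = 0; i < n; i++)
        if (seen[i] == key)
            return true;
    return false;
}

/*
 * Split sort_col_idx[] between partition and ordering columns.
 * Output arrays must have room for num_part and num_ord entries.
 * Returns false if the significant clauses do not consume exactly
 * num_sort_cols entries (the planner raises an error in that case).
 */
static bool
sketch_split_sort_cols(const int *part_keys, int num_part,
                       const int *ord_keys, int num_ord,
                       const int *sort_col_idx, int num_sort_cols,
                       int *part_col_idx, int *part_num_cols,
                       int *ord_col_idx, int *ord_num_cols)
{
    int seen[SKETCH_MAX_KEYS];
    int nseen = 0;
    int scidx = 0;
    int i;

    assert(num_part >= 0 && num_ord >= 0);
    assert(num_part + num_ord <= SKETCH_MAX_KEYS);

    *part_num_cols = 0;
    *ord_num_cols = 0;

    for (i = 0; i < num_part; i++)
    {
        if (sketch_key_seen(seen, nseen, part_keys[i]))
            continue;           /* redundant clause, no sort column */
        if (scidx >= num_sort_cols)
            return false;
        seen[nseen++] = part_keys[i];
        part_col_idx[(*part_num_cols)++] = sort_col_idx[scidx++];
    }
    for (i = 0; i < num_ord; i++)
    {
        if (sketch_key_seen(seen, nseen, ord_keys[i]))
            continue;           /* redundant clause, no sort column */
        if (scidx >= num_sort_cols)
            return false;
        seen[nseen++] = ord_keys[i];
        ord_col_idx[(*ord_num_cols)++] = sort_col_idx[scidx++];
    }
    return scidx == num_sort_cols;
}
```

With a partition on keys (10, 20) and an ordering on keys (20, 30), the second occurrence of key 20 is redundant, so three sort columns are split into two partition columns and one ordering column.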

static int get_grouping_column_index(Query *parse, TargetEntry *tle)

Definition at line 2936 of file planner.c.

References Query::groupClause, lfirst, TargetEntry::ressortgroupref, and SortGroupClause::tleSortGroupRef.

Referenced by make_subplanTargetList().

{
    int colno = 0;
    Index ressortgroupref = tle->ressortgroupref;
    ListCell *gl;

    /* No need to search groupClause if TLE hasn't got a sortgroupref */
    if (ressortgroupref == 0)
        return -1;

    foreach(gl, parse->groupClause)
    {
        SortGroupClause *grpcl = (SortGroupClause *) lfirst(gl);

        if (grpcl->tleSortGroupRef == ressortgroupref)
            return colno;
        colno++;
    }

    return -1;
}
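The lookup in get_grouping_column_index() is a linear search keyed on the sort/group reference number. A minimal standalone sketch, assuming the GROUP BY list is flattened into an array of reference numbers (the function name and array representation are hypothetical):

```c
#include <assert.h>
#include <stddef.h>

/*
 * Return the zero-based position of ressortgroupref in group_refs[],
 * or -1 if it is zero (no reference assigned) or not present.
 */
static int
sketch_grouping_column_index(const unsigned *group_refs, size_t ngroup,
                             unsigned ressortgroupref)
{
    size_t colno;

    assert(group_refs != NULL || ngroup == 0);

    /* A zero reference can never match a grouping clause. */
    if (ressortgroupref == 0)
        return -1;

    for (colno = 0; colno < ngroup; colno++)
    {
        if (group_refs[colno] == ressortgroupref)
            return (int) colno;
    }
    return -1;
}
```

The early return for a zero reference mirrors the original's shortcut: target entries that are not referenced by any sort or group clause skip the search entirely.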

static Plan * grouping_planner(PlannerInfo *root, double tuple_fraction)

Definition at line 1007 of file planner.c.

References standard_qp_extra::activeWindows, add_tlist_costs_to_plan(), add_to_flat_tlist(), AGG_HASHED, Assert, choose_hashed_distinct(), choose_hashed_grouping(), CMD_SELECT, Query::commandType, copyObject(), count_agg_clauses(), create_plan(), PlannerInfo::distinct_pathkeys, Query::distinctClause, WindowClause::endOffset, ereport, errcode(), errmsg(), ERROR, extract_grouping_cols(), extract_grouping_ops(), find_window_functions(), WindowClause::frameOptions, get_column_info_for_window(), PlannerInfo::group_pathkeys, Query::groupClause, Query::hasAggs, Query::hasDistinctOn, PlannerInfo::hasHavingQual, PlannerInfo::hasRecursion, Query::hasWindowFuncs, Query::havingQual, is_projection_capable_plan(), lfirst, Query::limitCount, Query::limitOffset, list_length(), lnext, locate_grouping_columns(), make_agg(), make_group(), make_pathkeys_for_sortclauses(), make_pathkeys_for_window(), make_result(), make_sort_from_groupcols(), make_sort_from_pathkeys(), make_subplanTargetList(), make_unique(), make_windowagg(), make_windowInputTargetList(), MemSet, Min, NIL, NULL, Sort::numCols, WindowFuncLists::numWindowFuncs, optimize_minmax_aggregates(), Path::parent, PlannerInfo::parse, parse(), Path::pathkeys, pathkeys_contained_in(), Plan::plan_rows, plan_set_operations(), Plan::plan_width, postprocess_setop_tlist(), preprocess_groupclause(), preprocess_limit(), preprocess_minmax_aggregates(), preprocess_targetlist(), query_planner(), Query::rowMarks, RelOptInfo::rows, select_active_windows(), Query::setOperations, PlannerInfo::sort_pathkeys, Query::sortClause, Sort::sortColIdx, standard_qp_callback(), WindowClause::startOffset, Plan::startup_cost, Path::startup_cost, Plan::targetlist, Query::targetList, standard_qp_extra::tlist, tlist_same_exprs(), Plan::total_cost, Path::total_cost, RelOptInfo::width, Query::windowClause, WindowFuncLists::windowFuncs, and WindowClause::winref.

Referenced by inheritance_planner(), and subquery_planner().

{

Query *parse = root->parse;

List *tlist = parse->targetList;

int64 offset_est = 0;

int64 count_est = 0;

double limit_tuples = -1.0;

Plan *result_plan;

List *current_pathkeys;

double dNumGroups = 0;

bool use_hashed_distinct = false;

bool tested_hashed_distinct = false;

/* Tweak caller-supplied tuple_fraction if have LIMIT/OFFSET */

if (parse->limitCount || parse->limitOffset)

{

tuple_fraction = preprocess_limit(root, tuple_fraction,

&offset_est, &count_est);

/*

* If we have a known LIMIT, and don't have an unknown OFFSET, we can

* estimate the effects of using a bounded sort.

*/

if (count_est > 0 && offset_est >= 0)

limit_tuples = (double) count_est + (double) offset_est;

}

if (parse->setOperations)

{

List *set_sortclauses;

/*

* If there's a top-level ORDER BY, assume we have to fetch all the

* tuples. This might be too simplistic given all the hackery below

* to possibly avoid the sort; but the odds of accurate estimates here

* are pretty low anyway.

*/

if (parse->sortClause)

tuple_fraction = 0.0;

/*

* Construct the plan for set operations. The result will not need

* any work except perhaps a top-level sort and/or LIMIT. Note that

* any special work for recursive unions is the responsibility of

* plan_set_operations.

*/

result_plan = plan_set_operations(root, tuple_fraction,

&set_sortclauses);

/*

* Calculate pathkeys representing the sort order (if any) of the set

* operation's result. We have to do this before overwriting the sort

* key information...

*/

current_pathkeys = make_pathkeys_for_sortclauses(root,

set_sortclauses,

result_plan->targetlist);

/*

* We should not need to call preprocess_targetlist, since we must be

* in a SELECT query node. Instead, use the targetlist returned by

* plan_set_operations (since this tells whether it returned any

* resjunk columns!), and transfer any sort key information from the

* original tlist.

*/

Assert(parse->commandType == CMD_SELECT);

tlist = postprocess_setop_tlist(copyObject(result_plan->targetlist),

tlist);

/*

* Can't handle FOR [KEY] UPDATE/SHARE here (parser should have checked

* already, but let's make sure).

*/

if (parse->rowMarks)

ereport(ERROR,

(errcode(ERRCODE_FEATURE_NOT_SUPPORTED),

errmsg("row-level locks are not allowed with UNION/INTERSECT/EXCEPT")));

/*

* Calculate pathkeys that represent result ordering requirements

*/

Assert(parse->distinctClause == NIL);

root->sort_pathkeys = make_pathkeys_for_sortclauses(root,

parse->sortClause,

tlist);

}

else

{

/* No set operations, do regular planning */

List *sub_tlist;

double sub_limit_tuples;

AttrNumber *groupColIdx = NULL;

bool need_tlist_eval = true;

standard_qp_extra qp_extra;

Path *cheapest_path;

Path *sorted_path;

Path *best_path;

long numGroups = 0;

AggClauseCosts agg_costs;

int numGroupCols;

double path_rows;

int path_width;

bool use_hashed_grouping = false;

WindowFuncLists *wflists = NULL;

List *activeWindows = NIL;

MemSet(&agg_costs, 0, sizeof(AggClauseCosts));

/* A recursive query should always have setOperations */

Assert(!root->hasRecursion);

/* Preprocess GROUP BY clause, if any */

if (parse->groupClause)

preprocess_groupclause(root);

numGroupCols = list_length(parse->groupClause);

/* Preprocess targetlist */

tlist = preprocess_targetlist(root, tlist);

/*

* Locate any window functions in the tlist. (We don't need to look

* anywhere else, since expressions used in ORDER BY will be in there

* too.) Note that they could all have been eliminated by constant

* folding, in which case we don't need to do any more work.

*/

if (parse->hasWindowFuncs)

{

wflists = find_window_functions((Node *) tlist,

list_length(parse->windowClause));

if (wflists->numWindowFuncs > 0)

activeWindows = select_active_windows(root, wflists);

else

parse->hasWindowFuncs = false;

}

/*

* Generate appropriate target list for subplan; may be different from

* tlist if grouping or aggregation is needed.

*/

sub_tlist = make_subplanTargetList(root, tlist,

&groupColIdx, &need_tlist_eval);

/*

* Do aggregate preprocessing, if the query has any aggs.

*

* Note: think not that we can turn off hasAggs if we find no aggs. It

* is possible for constant-expression simplification to remove all

* explicit references to aggs, but we still have to follow the

* aggregate semantics (eg, producing only one output row).

*/

if (parse->hasAggs)

{

/*

* Collect statistics about aggregates for estimating costs. Note:

* we do not attempt to detect duplicate aggregates here; a

* somewhat-overestimated cost is okay for our present purposes.

*/

count_agg_clauses(root, (Node *) tlist, &agg_costs);

count_agg_clauses(root, parse->havingQual, &agg_costs);

/*

* Preprocess MIN/MAX aggregates, if any. Note: be careful about

* adding logic between here and the optimize_minmax_aggregates

* call. Anything that is needed in MIN/MAX-optimizable cases

* will have to be duplicated in planagg.c.

*/

preprocess_minmax_aggregates(root, tlist);

}

/*

* Figure out whether there's a hard limit on the number of rows that

* query_planner's result subplan needs to return. Even if we know a

* hard limit overall, it doesn't apply if the query has any

* grouping/aggregation operations.

*/

if (parse->groupClause ||

parse->distinctClause ||

parse->hasAggs ||

parse->hasWindowFuncs ||

root->hasHavingQual)

sub_limit_tuples = -1.0;

else

sub_limit_tuples = limit_tuples;

/* Set up data needed by standard_qp_callback */

qp_extra.tlist = tlist;

qp_extra.activeWindows = activeWindows;

/*

* Generate the best unsorted and presorted paths for this Query (but

* note there may not be any presorted path). We also generate (in

* standard_qp_callback) pathkey representations of the query's sort

* clause, distinct clause, etc. query_planner will also estimate the

* number of groups in the query.

*/

query_planner(root, sub_tlist, tuple_fraction, sub_limit_tuples,

standard_qp_callback, &qp_extra,

&cheapest_path, &sorted_path, &dNumGroups);

/*

* Extract rowcount and width estimates for possible use in grouping

* decisions. Beware here of the possibility that

* cheapest_path->parent is NULL (ie, there is no FROM clause).

*/

if (cheapest_path->parent)

{

path_rows = cheapest_path->parent->rows;

path_width = cheapest_path->parent->width;

}

else

{

path_rows = 1; /* assume non-set result */

path_width = 100; /* arbitrary */

}

if (parse->groupClause)

{

/*

* If grouping, decide whether to use sorted or hashed grouping.

*/

use_hashed_grouping =

choose_hashed_grouping(root,

tuple_fraction, limit_tuples,

path_rows, path_width,

cheapest_path, sorted_path,

dNumGroups, &agg_costs);

/* Also convert # groups to long int --- but 'ware overflow! */

numGroups = (long) Min(dNumGroups, (double) LONG_MAX);

}

else if (parse->distinctClause && sorted_path &&

!root->hasHavingQual && !parse->hasAggs && !activeWindows)

{

/*

* We'll reach the DISTINCT stage without any intermediate

* processing, so figure out whether we will want to hash or not

* so we can choose whether to use cheapest or sorted path.

*/

use_hashed_distinct =

choose_hashed_distinct(root,

tuple_fraction, limit_tuples,

path_rows, path_width,

cheapest_path->startup_cost,

cheapest_path->total_cost,

sorted_path->startup_cost,

sorted_path->total_cost,

sorted_path->pathkeys,

dNumGroups);

tested_hashed_distinct = true;

}

/*

* Select the best path. If we are doing hashed grouping, we will

* always read all the input tuples, so use the cheapest-total path.

* Otherwise, trust query_planner's decision about which to use.

*/

if (use_hashed_grouping || use_hashed_distinct || !sorted_path)

best_path = cheapest_path;

else

best_path = sorted_path;

/*

* Check to see if it's possible to optimize MIN/MAX aggregates. If

* so, we will forget all the work we did so far to choose a "regular"

* path ... but we had to do it anyway to be able to tell which way is

* cheaper.

*/

result_plan = optimize_minmax_aggregates(root,

tlist,

&agg_costs,

best_path);

if (result_plan != NULL)

{

/*

* optimize_minmax_aggregates generated the full plan, with the

* right tlist, and it has no sort order.

*/

current_pathkeys = NIL;

}

else

{

/*

* Normal case --- create a plan according to query_planner's

* results.

*/

bool need_sort_for_grouping = false;

result_plan = create_plan(root, best_path);

current_pathkeys = best_path->pathkeys;

/* Detect if we'll need an explicit sort for grouping */

if (parse->groupClause && !use_hashed_grouping &&

!pathkeys_contained_in(root->group_pathkeys, current_pathkeys))

{

need_sort_for_grouping = true;

/*

* Always override create_plan's tlist, so that we don't sort

* useless data from a "physical" tlist.

*/

need_tlist_eval = true;

}

/*

* create_plan returns a plan with just a "flat" tlist of required

* Vars. Usually we need to insert the sub_tlist as the tlist of

* the top plan node. However, we can skip that if we determined

* that whatever create_plan chose to return will be good enough.

*/

if (need_tlist_eval)

{

/*

* If the top-level plan node is one that cannot do expression

* evaluation and its existing target list isn't already what

* we need, we must insert a Result node to project the

* desired tlist.

*/

if (!is_projection_capable_plan(result_plan) &&

!tlist_same_exprs(sub_tlist, result_plan->targetlist))

{

result_plan = (Plan *) make_result(root,

sub_tlist,

NULL,

result_plan);

}

else

{

/*

* Otherwise, just replace the subplan's flat tlist with

* the desired tlist.

*/

result_plan->targetlist = sub_tlist;

}

/*

* Also, account for the cost of evaluation of the sub_tlist.

* See comments for add_tlist_costs_to_plan() for more info.

*/

add_tlist_costs_to_plan(root, result_plan, sub_tlist);

}

else

{

/*

* Since we're using create_plan's tlist and not the one

* make_subplanTargetList calculated, we have to refigure any

* grouping-column indexes make_subplanTargetList computed.

*/

locate_grouping_columns(root, tlist, result_plan->targetlist,

groupColIdx);

}

/*

* Insert AGG or GROUP node if needed, plus an explicit sort step

* if necessary.

*

* HAVING clause, if any, becomes qual of the Agg or Group node.

*/

if (use_hashed_grouping)

{

/* Hashed aggregate plan --- no sort needed */

result_plan = (Plan *) make_agg(root,

tlist,

(List *) parse->havingQual,

AGG_HASHED,

&agg_costs,

numGroupCols,

groupColIdx,

extract_grouping_ops(parse->groupClause),

numGroups,

result_plan);

/* Hashed aggregation produces randomly-ordered results */

current_pathkeys = NIL;

}

else if (parse->hasAggs)

{

/* Plain aggregate plan --- sort if needed */

AggStrategy aggstrategy;

if (parse->groupClause)

{

if (need_sort_for_grouping)

{

result_plan = (Plan *)

make_sort_from_groupcols(root,

parse->groupClause,

groupColIdx,

result_plan);

current_pathkeys = root->group_pathkeys;

}

aggstrategy = AGG_SORTED;

/*

* The AGG node will not change the sort ordering of its

* groups, so current_pathkeys describes the result too.

*/

}

else

{

aggstrategy = AGG_PLAIN;

/* Result will be only one row anyway; no sort order */

current_pathkeys = NIL;

}

result_plan = (Plan *) make_agg(root,

tlist,

(List *) parse->havingQual,

aggstrategy,

&agg_costs,

numGroupCols,

groupColIdx,

extract_grouping_ops(parse->groupClause),

numGroups,

result_plan);

}

else if (parse->groupClause)

{

/*

* GROUP BY without aggregation, so insert a group node (plus

* the appropriate sort node, if necessary).

*

* Add an explicit sort if we couldn't make the path come out

* the way the GROUP node needs it.

*/

if (need_sort_for_grouping)

{

result_plan = (Plan *)

make_sort_from_groupcols(root,

parse->groupClause,

groupColIdx,

result_plan);

current_pathkeys = root->group_pathkeys;

}

result_plan = (Plan *) make_group(root,

tlist,

(List *) parse->havingQual,

numGroupCols,

groupColIdx,

extract_grouping_ops(parse->groupClause),

dNumGroups,

result_plan);

/* The Group node won't change sort ordering */

}

else if (root->hasHavingQual)

{

/*

* No aggregates, and no GROUP BY, but we have a HAVING qual.

* This is a degenerate case in which we are supposed to emit

* either 0 or 1 row depending on whether HAVING succeeds.

* Furthermore, there cannot be any variables in either HAVING

* or the targetlist, so we actually do not need the FROM

* table at all! We can just throw away the plan-so-far and

* generate a Result node. This is a sufficiently unusual

* corner case that it's not worth contorting the structure of

* this routine to avoid having to generate the plan in the

* first place.

*/

result_plan = (Plan *) make_result(root,

tlist,

parse->havingQual,

NULL);

}

} /* end of non-minmax-aggregate case */

/*

* Since each window function could require a different sort order, we

* stack up a WindowAgg node for each window, with sort steps between

* them as needed.

*/

if (activeWindows)

{

List *window_tlist;

ListCell *l;

/*

* If the top-level plan node is one that cannot do expression

* evaluation, we must insert a Result node to project the desired

* tlist. (In some cases this might not really be required, but

* it's not worth trying to avoid it. In particular, do not

* skip adding the Result if the initial window_tlist matches the

* top-level plan node's output, because we might change the tlist

* inside the following loop.) Note that on second and subsequent

* passes through the following loop, the top-level node will be a

* WindowAgg which we know can project; so we only need to check

* once.

*/

if (!is_projection_capable_plan(result_plan))

{

result_plan = (Plan *) make_result(root,

NIL,

NULL,

result_plan);

}

/*

* The "base" targetlist for all steps of the windowing process is

* a flat tlist of all Vars and Aggs needed in the result. (In

* some cases we wouldn't need to propagate all of these all the

* way to the top, since they might only be needed as inputs to

* WindowFuncs. It's probably not worth trying to optimize that

* though.) We also add window partitioning and sorting

* expressions to the base tlist, to ensure they're computed only

* once at the bottom of the stack (that's critical for volatile

* functions). As we climb up the stack, we'll add outputs for

* the WindowFuncs computed at each level.

*/

window_tlist = make_windowInputTargetList(root,

tlist,

activeWindows);

/*

* The copyObject steps here are needed to ensure that each plan

* node has a separately modifiable tlist. (XXX wouldn't a

* shallow list copy do for that?)

*/

result_plan->targetlist = (List *) copyObject(window_tlist);

foreach(l, activeWindows)

{

WindowClause *wc = (WindowClause *) lfirst(l);

List *window_pathkeys;

int partNumCols;

AttrNumber *partColIdx;

Oid *partOperators;

int ordNumCols;

AttrNumber *ordColIdx;

Oid *ordOperators;

window_pathkeys = make_pathkeys_for_window(root,

wc,

tlist);

/*

* This is a bit tricky: we build a sort node even if we don't

* really have to sort. Even when no explicit sort is needed,

* we need to have suitable resjunk items added to the input

* plan's tlist for any partitioning or ordering columns that

* aren't plain Vars. (In theory, make_windowInputTargetList

* should have provided all such columns, but let's not assume

* that here.) Furthermore, this way we can use existing

* infrastructure to identify which input columns are the

* interesting ones.

*/

if (window_pathkeys)

{

Sort *sort_plan;

sort_plan = make_sort_from_pathkeys(root,

result_plan,

window_pathkeys,

-1.0);

if (!pathkeys_contained_in(window_pathkeys,

current_pathkeys))

{

/* we do indeed need to sort */

result_plan = (Plan *) sort_plan;

current_pathkeys = window_pathkeys;

}

/* In either case, extract the per-column information */

get_column_info_for_window(root, wc, tlist,

sort_plan->numCols,

sort_plan->sortColIdx,

&partNumCols,

&partColIdx,

&partOperators,

&ordNumCols,

&ordColIdx,

&ordOperators);

}

else

{

/* empty window specification, nothing to sort */

partNumCols = 0;

partColIdx = NULL;

partOperators = NULL;

ordNumCols = 0;

ordColIdx = NULL;

ordOperators = NULL;

}

if (lnext(l))

{

/* Add the current WindowFuncs to the running tlist */

window_tlist = add_to_flat_tlist(window_tlist,

wflists->windowFuncs[wc->winref]);

}

else

{

/* Install the original tlist in the topmost WindowAgg */

window_tlist = tlist;

}

/* ... and make the WindowAgg plan node */

result_plan = (Plan *)

make_windowagg(root,

(List *) copyObject(window_tlist),

wflists->windowFuncs[wc->winref],

wc->winref,

partNumCols,

partColIdx,

partOperators,

ordNumCols,

ordColIdx,

ordOperators,

wc->frameOptions,

wc->startOffset,

wc->endOffset,

result_plan);

}

}

} /* end of if (setOperations) */

/*

* If there is a DISTINCT clause, add the necessary node(s).

*/

if (parse->distinctClause)

{

double dNumDistinctRows;

long numDistinctRows;

/*

* If there was grouping or aggregation, use the current number of

* rows as the estimated number of DISTINCT rows (ie, assume the

* result was already mostly unique). If not, use the number of

* distinct-groups calculated by query_planner.

*/

if (parse->groupClause || root->hasHavingQual || parse->hasAggs)

dNumDistinctRows = result_plan->plan_rows;

else

dNumDistinctRows = dNumGroups;

/* Also convert to long int --- but beware of overflow! */

numDistinctRows = (long) Min(dNumDistinctRows, (double) LONG_MAX);

/* Choose implementation method if we didn't already */

if (!tested_hashed_distinct)

{

/*

* At this point, either hashed or sorted grouping will have to

* work from result_plan, so we pass that as both "cheapest" and

* "sorted".

*/

use_hashed_distinct =

choose_hashed_distinct(root,

tuple_fraction, limit_tuples,

result_plan->plan_rows,

result_plan->plan_width,

result_plan->startup_cost,

result_plan->total_cost,

result_plan->startup_cost,

result_plan->total_cost,

current_pathkeys,

dNumDistinctRows);

}

if (use_hashed_distinct)

{

/* Hashed aggregate plan --- no sort needed */

result_plan = (Plan *) make_agg(root,

result_plan->targetlist,

NIL,

AGG_HASHED,

NULL,

list_length(parse->distinctClause),

extract_grouping_cols(parse->distinctClause,

result_plan->targetlist),

extract_grouping_ops(parse->distinctClause),

numDistinctRows,

result_plan);

/* Hashed aggregation produces randomly-ordered results */

current_pathkeys = NIL;

}

else

{

/*

* Use a Unique node to implement DISTINCT. Add an explicit sort

* if we couldn't make the path come out the way the Unique node

* needs it. If we do have to sort, always sort by the more

* rigorous of DISTINCT and ORDER BY, to avoid a second sort

* below. However, for regular DISTINCT, don't sort now if we

* don't have to --- sorting afterwards will likely be cheaper,

* and also has the possibility of optimizing via LIMIT. But for

* DISTINCT ON, we *must* force the final sort now, else it won't

* have the desired behavior.

*/

List *needed_pathkeys;

if (parse->hasDistinctOn &&

list_length(root->distinct_pathkeys) <

list_length(root->sort_pathkeys))

needed_pathkeys = root->sort_pathkeys;

else

needed_pathkeys = root->distinct_pathkeys;

if (!pathkeys_contained_in(needed_pathkeys, current_pathkeys))

{

if (list_length(root->distinct_pathkeys) >=

list_length(root->sort_pathkeys))

current_pathkeys = root->distinct_pathkeys;

else

{

current_pathkeys = root->sort_pathkeys;

/* Assert checks that parser didn't mess up... */

Assert(pathkeys_contained_in(root->distinct_pathkeys,

current_pathkeys));

}

result_plan = (Plan *) make_sort_from_pathkeys(root,

result_plan,

current_pathkeys,

-1.0);

}

result_plan = (Plan *) make_unique(result_plan,

parse->distinctClause);

result_plan->plan_rows = dNumDistinctRows;

/* The Unique node won't change sort ordering */

}

}

/*

* If ORDER BY was given and we were not able to make the plan come out in

* the right order, add an explicit sort step.

*/

if (parse->sortClause)

{

if (!pathkeys_contained_in(root->sort_pathkeys, current_pathkeys))

{

result_plan = (Plan *) make_sort_from_pathkeys(root,

result_plan,

root->sort_pathkeys,

limit_tuples);

current_pathkeys = root->sort_pathkeys;

}

}

/*

* If there is a FOR [KEY] UPDATE/SHARE clause, add the LockRows node. (Note: we

* intentionally test parse->rowMarks not root->rowMarks here. If there

* are only non-locking rowmarks, they should be handled by the

* ModifyTable node instead.)

*/

if (parse->rowMarks)

{

result_plan = (Plan *) make_lockrows(result_plan,

root->rowMarks,

SS_assign_special_param(root));

/*

* The result can no longer be assumed sorted, since locking might

* cause the sort key columns to be replaced with new values.

*/

current_pathkeys = NIL;

}

/*

* Finally, if there is a LIMIT/OFFSET clause, add the LIMIT node.

*/

if (limit_needed(parse))

{

result_plan = (Plan *) make_limit(result_plan,

parse->limitOffset,

parse->limitCount,

offset_est,

count_est);

}

/*

* Return the actual output ordering in query_pathkeys for possible use by

* an outer query level.

*/

root->query_pathkeys = current_pathkeys;

return result_plan;

}
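Several steps in the function above (grouping, window sorting, DISTINCT, and ORDER BY) decide whether to add a Sort node by testing `pathkeys_contained_in(required, current_pathkeys)`. The sketch below is a simplified model of that test, not the planner's code: it assumes a sort ordering can be represented as an array of integer key ids, and treats the required ordering as satisfied when it is a leading prefix of the current one. `SortOrder`, `order_contained_in`, and `needs_explicit_sort` are hypothetical names introduced for illustration.

```c
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-in for a pathkeys list: sort keys in major-to-minor order */
typedef struct SortOrder
{
    const int  *keys;
    size_t      nkeys;
} SortOrder;

/* True if "required" is a leading prefix of "actual" (or equal to it) */
bool
order_contained_in(SortOrder required, SortOrder actual)
{
    size_t      i;

    if (required.nkeys > actual.nkeys)
        return false;
    for (i = 0; i < required.nkeys; i++)
    {
        if (required.keys[i] != actual.keys[i])
            return false;
    }
    return true;
}

/* Mirrors the "if (!pathkeys_contained_in(...)) add a sort" pattern */
bool
needs_explicit_sort(SortOrder required, SortOrder actual)
{
    return !order_contained_in(required, actual);
}
```

Under this model, input sorted by (1, 2, 3) already satisfies a requirement of (1, 2), while input sorted by (2, 1) does not. An empty requirement never forces a sort.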

| static Plan * inheritance_planner | ( | PlannerInfo * | root | ) | [static] |

Definition at line 764 of file planner.c.

References adjust_appendrel_attrs(), PlannerInfo::append_rel_list, Assert, Query::canSetTag, ChangeVarNodes(), AppendRelInfo::child_relid, Query::commandType, copyObject(), grouping_planner(), PlannerInfo::hasInheritedTarget, PlannerInfo::init_plans, is_dummy_plan(), PlannerInfo::join_info_list, lappend(), lappend_int(), PlannerInfo::lateral_info_list, lfirst, list_concat(), list_copy_tail(), list_length(), list_make1, make_modifytable(), make_result(), makeBoolConst(), makeNode, NIL, NULL, AppendRelInfo::parent_relid, PlannerInfo::parse, parse(), PlannerInfo::placeholder_list, preprocess_targetlist(), PlannerInfo::query_pathkeys, Query::resultRelation, Query::returningList, Query::rowMarks, PlannerInfo::rowMarks, Query::rtable, RTE_SUBQUERY, RangeTblEntry::rtekind, PlannerInfo::simple_rel_array, PlannerInfo::simple_rel_array_size, SS_assign_special_param(), and Query::targetList.

Referenced by subquery_planner().

{

Query *parse = root->parse;

int parentRTindex = parse->resultRelation;

List *final_rtable = NIL;

int save_rel_array_size = 0;

RelOptInfo **save_rel_array = NULL;

List *subplans = NIL;

List *resultRelations = NIL;

List *returningLists = NIL;

List *rowMarks;

ListCell *lc;

/*

* We generate a modified instance of the original Query for each target

* relation, plan that, and put all the plans into a list that will be

* controlled by a single ModifyTable node. All the instances share the

* same rangetable, but each instance must have its own set of subquery

* RTEs within the finished rangetable because (1) they are likely to get

* scribbled on during planning, and (2) it's not inconceivable that

* subqueries could get planned differently in different cases. We need

* not create duplicate copies of other RTE kinds, in particular not the

* target relations, because they don't have either of those issues. Not

* having to duplicate the target relations is important because doing so

* (1) would result in a rangetable of length O(N^2) for N targets, with

* at least O(N^3) work expended here; and (2) would greatly complicate

* management of the rowMarks list.

*/

foreach(lc, root->append_rel_list)

{

AppendRelInfo *appinfo = (AppendRelInfo *) lfirst(lc);

PlannerInfo subroot;

Plan *subplan;

Index rti;

/* append_rel_list contains all append rels; ignore others */

if (appinfo->parent_relid != parentRTindex)

continue;

/*

* We need a working copy of the PlannerInfo so that we can control

* propagation of information back to the main copy.

*/

memcpy(&subroot, root, sizeof(PlannerInfo));

/*

* Generate modified query with this rel as target. We first apply

* adjust_appendrel_attrs, which copies the Query and changes

* references to the parent RTE to refer to the current child RTE,

* then fool around with subquery RTEs.

*/

subroot.parse = (Query *)

adjust_appendrel_attrs(root,

(Node *) parse,

appinfo);

/*

* The rowMarks list might contain references to subquery RTEs, so

* make a copy that we can apply ChangeVarNodes to. (Fortunately, the

* executor doesn't need to see the modified copies --- we can just

* pass it the original rowMarks list.)

*/

subroot.rowMarks = (List *) copyObject(root->rowMarks);

/*

* Add placeholders to the child Query's rangetable list to fill the

* RT indexes already reserved for subqueries in previous children.

* These won't be referenced, so there's no need to make them very

* valid-looking.

*/

while (list_length(subroot.parse->rtable) < list_length(final_rtable))

subroot.parse->rtable = lappend(subroot.parse->rtable,

makeNode(RangeTblEntry));

/*

* If this isn't the first child Query, generate duplicates of all

* subquery RTEs, and adjust Var numbering to reference the

* duplicates. To simplify the loop logic, we scan the original rtable

* not the copy just made by adjust_appendrel_attrs; that should be OK

* since subquery RTEs couldn't contain any references to the target

* rel.

*/

if (final_rtable != NIL)

{

ListCell *lr;

rti = 1;

foreach(lr, parse->rtable)

{

RangeTblEntry *rte = (RangeTblEntry *) lfirst(lr);

if (rte->rtekind == RTE_SUBQUERY)

{

Index newrti;

/*

* The RTE can't contain any references to its own RT

* index, so we can save a few cycles by applying

* ChangeVarNodes before we append the RTE to the

* rangetable.

*/

newrti = list_length(subroot.parse->rtable) + 1;

ChangeVarNodes((Node *) subroot.parse, rti, newrti, 0);

ChangeVarNodes((Node *) subroot.rowMarks, rti, newrti, 0);

rte = copyObject(rte);

subroot.parse->rtable = lappend(subroot.parse->rtable,

rte);

}

rti++;

}

}

/* We needn't modify the child's append_rel_list */

/* There shouldn't be any OJ or LATERAL info to translate, as yet */

Assert(subroot.join_info_list == NIL);

Assert(subroot.lateral_info_list == NIL);

/* and we haven't created PlaceHolderInfos, either */

Assert(subroot.placeholder_list == NIL);

/* hack to mark target relation as an inheritance partition */

subroot.hasInheritedTarget = true;

/* Generate plan */

subplan = grouping_planner(&subroot, 0.0 /* retrieve all tuples */ );

/*

* If this child rel was excluded by constraint exclusion, exclude it

* from the result plan.

*/

if (is_dummy_plan(subplan))

continue;

subplans = lappend(subplans, subplan);

/*

* If this is the first non-excluded child, its post-planning rtable

* becomes the initial contents of final_rtable; otherwise, append

* just its modified subquery RTEs to final_rtable.

*/

if (final_rtable == NIL)

final_rtable = subroot.parse->rtable;

else

final_rtable = list_concat(final_rtable,

list_copy_tail(subroot.parse->rtable,

list_length(final_rtable)));

/*

* We need to collect all the RelOptInfos from all child plans into

* the main PlannerInfo, since setrefs.c will need them. We use the

* last child's simple_rel_array (previous ones are too short), so we

* have to propagate forward the RelOptInfos that were already built

* in previous children.

*/

Assert(subroot.simple_rel_array_size >= save_rel_array_size);

for (rti = 1; rti < save_rel_array_size; rti++)

{

RelOptInfo *brel = save_rel_array[rti];

if (brel)

subroot.simple_rel_array[rti] = brel;

}

save_rel_array_size = subroot.simple_rel_array_size;

save_rel_array = subroot.simple_rel_array;

/* Make sure any initplans from this rel get into the outer list */

root->init_plans = subroot.init_plans;

/* Build list of target-relation RT indexes */

resultRelations = lappend_int(resultRelations, appinfo->child_relid);

/* Build list of per-relation RETURNING targetlists */

if (parse->returningList)

returningLists = lappend(returningLists,

subroot.parse->returningList);

}

/* Mark result as unordered (probably unnecessary) */

root->query_pathkeys = NIL;

/*

* If we managed to exclude every child rel, return a dummy plan; it

* doesn't even need a ModifyTable node.

*/

if (subplans == NIL)

{

/* although dummy, it must have a valid tlist for executor */

List *tlist;

tlist = preprocess_targetlist(root, parse->targetList);

return (Plan *) make_result(root,

tlist,

(Node *) list_make1(makeBoolConst(false,

false)),

NULL);

}

/*

* Put back the final adjusted rtable into the master copy of the Query.

*/

parse->rtable = final_rtable;

root->simple_rel_array_size = save_rel_array_size;

root->simple_rel_array = save_rel_array;

/*

* If there was a FOR [KEY] UPDATE/SHARE clause, the LockRows node will have

* dealt with fetching non-locked marked rows, else we need to have

* ModifyTable do that.

*/

if (parse->rowMarks)

rowMarks = NIL;

else

rowMarks = root->rowMarks;

/* And last, tack on a ModifyTable node to do the UPDATE/DELETE work */

return (Plan *) make_modifytable(root,

parse->commandType,

parse->canSetTag,

resultRelations,

subplans,

returningLists,

rowMarks,

SS_assign_special_param(root));

}
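The way `final_rtable` grows in the loop above can be hard to picture: the first non-excluded child contributes its whole rangetable, and each later child contributes only the entries past the current end (its duplicated subquery RTEs). The following sketch models that with plain integer arrays. `MiniRTable` and `merge_child_rtable` are hypothetical names, and the fixed capacity is an assumption made to keep the example self-contained.

```c
#include <stddef.h>
#include <string.h>

#define RTABLE_CAPACITY 32

/* Hypothetical stand-in for a rangetable: each int represents one RTE */
typedef struct MiniRTable
{
    int         entries[RTABLE_CAPACITY];
    size_t      len;
} MiniRTable;

/*
 * Append to final_rt only the part of child_rt that lies beyond final_rt's
 * current length.  With an empty final_rt this copies the whole child, which
 * corresponds to the "first non-excluded child" case.  Returns 0 on success,
 * or -1 if the child is shorter than final_rt or exceeds the capacity.
 */
int
merge_child_rtable(MiniRTable *final_rt, const MiniRTable *child_rt)
{
    size_t      tail;

    if (child_rt->len < final_rt->len || child_rt->len > RTABLE_CAPACITY)
        return -1;
    tail = child_rt->len - final_rt->len;
    memcpy(final_rt->entries + final_rt->len,
           child_rt->entries + final_rt->len,
           tail * sizeof(int));
    final_rt->len = child_rt->len;
    return 0;
}
```

For example, if the third child's table is `{10, 20, 30, -1, 32}`, where `-1` stands for a placeholder filling the slot reserved by the second child, only `32` is appended to a four-entry `final_rt`.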

| bool is_dummy_plan | ( | Plan * | plan | ) |

Definition at line 1848 of file planner.c.

References Const::constisnull, Const::constvalue, DatumGetBool, IsA, linitial, and list_length().

Referenced by create_append_plan(), inheritance_planner(), and set_subquery_pathlist().

{

if (IsA(plan, Result))

{

List *rcqual = (List *) ((Result *) plan)->resconstantqual;

if (list_length(rcqual) == 1)

{

Const *constqual = (Const *) linitial(rcqual);

if (constqual && IsA(constqual, Const))

{

if (!constqual->constisnull &&

!DatumGetBool(constqual->constvalue))

return true;

}

}

}

return false;

}
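The test above reduces to three conditions: the node is a Result, it carries exactly one constant qual, and that qual is a non-null `false`. A minimal sketch of the same decision with simplified types follows. `MiniPlan`, `MiniBoolConst`, and `mini_is_dummy_plan` are hypothetical names, and the model assumes every qual is already a boolean constant, so the `IsA(constqual, Const)` check has no counterpart here.

```c
#include <stdbool.h>
#include <stddef.h>

typedef enum MiniNodeKind
{
    MINI_RESULT,
    MINI_SEQSCAN
} MiniNodeKind;

/* Hypothetical stand-in for a boolean Const node */
typedef struct MiniBoolConst
{
    bool        isnull;
    bool        value;
} MiniBoolConst;

/* Hypothetical stand-in for a Plan with a constant-qual list */
typedef struct MiniPlan
{
    MiniNodeKind kind;
    const MiniBoolConst *const_quals;
    size_t      nquals;
} MiniPlan;

/* True only for a Result node whose single constant qual is non-null false */
bool
mini_is_dummy_plan(const MiniPlan *plan)
{
    if (plan->kind != MINI_RESULT)
        return false;
    if (plan->nquals != 1)
        return false;
    return !plan->const_quals[0].isnull && !plan->const_quals[0].value;
}
```

A NULL constant is deliberately not treated as dummy in this sketch, matching the `!constqual->constisnull` test in the listing.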

| static bool limit_needed | ( | Query * | parse | ) | [static] |

Definition at line 2251 of file planner.c.

References DatumGetInt64, IsA, Query::limitCount, and Query::limitOffset.

{

Node *node;

node = parse->limitCount;

if (node)

{

if (IsA(node, Const))

{

/* NULL indicates LIMIT ALL, ie, no limit */

if (!((Const *) node)->constisnull)

return true; /* LIMIT with a constant value */

}

else

return true; /* non-constant LIMIT */

}

node = parse->limitOffset;

if (node)

{

if (IsA(node, Const))

{

/* Treat NULL as no offset; the executor would too */

if (!((Const *) node)->constisnull)

{

int64 offset = DatumGetInt64(((Const *) node)->constvalue);

/* Executor would treat less-than-zero same as zero */

if (offset > 0)

return true; /* OFFSET with a positive value */

}

}

else

return true; /* non-constant OFFSET */

}

return false; /* don't need a Limit plan node */

}
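The decision above can be read as a small truth table over the LIMIT and OFFSET expressions. The sketch below restates it with a simplified expression type. `MiniLimitExpr`, its kinds, and `mini_limit_needed` are hypothetical names for this example. The four kinds are an assumption standing in for "no node", "NULL Const", "non-null Const", and "anything else".

```c
#include <stdbool.h>
#include <stdint.h>

typedef enum MiniExprKind
{
    EXPR_ABSENT,                /* clause not given */
    EXPR_CONST_NULL,            /* constant NULL, e.g. LIMIT ALL */
    EXPR_CONST_VALUE,           /* constant with a known value */
    EXPR_NON_CONST              /* parameter or other run-time expression */
} MiniExprKind;

typedef struct MiniLimitExpr
{
    MiniExprKind kind;
    int64_t     value;          /* meaningful only for EXPR_CONST_VALUE */
} MiniLimitExpr;

/* Returns true if a Limit plan node would be required */
bool
mini_limit_needed(MiniLimitExpr count, MiniLimitExpr offset)
{
    /* Any non-null LIMIT, constant or not, needs a node (even LIMIT 0) */
    if (count.kind == EXPR_CONST_VALUE || count.kind == EXPR_NON_CONST)
        return true;

    /* A non-constant OFFSET cannot be ruled out at plan time */
    if (offset.kind == EXPR_NON_CONST)
        return true;

    /* A constant OFFSET matters only when it is positive */
    if (offset.kind == EXPR_CONST_VALUE && offset.value > 0)
        return true;

    return false;
}
```

Under this model `LIMIT ALL OFFSET 0` and `OFFSET -3` need no Limit node, while `LIMIT 0` does, because only the OFFSET value is inspected.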

| static void locate_grouping_columns | ( | PlannerInfo * | root, | |

| List * | tlist, | |||

| List * | sub_tlist, | |||

| AttrNumber * | groupColIdx | |||

| ) | [static] |

Definition at line 2967 of file planner.c.

References Assert, elog, ERROR, get_sortgroupclause_expr(), Query::groupClause, lfirst, NULL, PlannerInfo::parse, TargetEntry::resno, and tlist_member().

Referenced by grouping_planner().

{

int keyno = 0;

ListCell *gl;

/*

* No work unless grouping.

*/

if (!root->parse->groupClause)

{

Assert(groupColIdx == NULL);

return;

}

Assert(groupColIdx != NULL);

foreach(gl, root->parse->groupClause)

{

SortGroupClause *grpcl = (SortGroupClause *) lfirst(gl);

Node *groupexpr = get_sortgroupclause_expr(grpcl, tlist);

TargetEntry *te = tlist_member(groupexpr, sub_tlist);

if (!te)

elog(ERROR, "failed to locate grouping columns");

groupColIdx[keyno++] = te->resno;

}

}
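As an illustration of the loop above, the sketch below looks up each grouping expression in a target list and records its 1-based position, which is what `te->resno` supplies in the real code. It assumes expressions can be compared as strings, which is a simplification of `tlist_member()`, and it returns `false` where the original raises an error. `mini_locate_grouping_columns` is a hypothetical name.

```c
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/*
 * For each grouping expression, find the matching entry in sub_tlist and
 * store its 1-based position in group_col_idx[].  Returns false as soon as
 * one grouping expression cannot be found.
 */
bool
mini_locate_grouping_columns(const char *const *group_exprs, size_t ngroups,
                             const char *const *sub_tlist, size_t ntlist,
                             int *group_col_idx)
{
    size_t      keyno;
    size_t      pos;

    for (keyno = 0; keyno < ngroups; keyno++)
    {
        bool        found = false;

        for (pos = 0; pos < ntlist; pos++)
        {
            if (strcmp(group_exprs[keyno], sub_tlist[pos]) == 0)
            {
                group_col_idx[keyno] = (int) pos + 1;
                found = true;
                break;
            }
        }
        if (!found)
            return false;
    }
    return true;
}
```

With grouping expressions `{"b", "a"}` and a target list `{"a", "x", "b"}`, the recorded indexes are 3 and 1.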

| static List * make_pathkeys_for_window | ( | PlannerInfo * | root, | |

| WindowClause * | wc, | |||

| List * | tlist | |||

| ) | [static] |

Definition at line 3255 of file planner.c.

References ereport, errcode(), errdetail(), errmsg(), ERROR, grouping_is_sortable(), list_concat(), list_copy(), list_free(), make_pathkeys_for_sortclauses(), WindowClause::orderClause, and WindowClause::partitionClause.

Referenced by grouping_planner(), and standard_qp_callback().

{

List *window_pathkeys;

List *window_sortclauses;

/* Throw error if can't sort */

if (!grouping_is_sortable(wc->partitionClause))

ereport(ERROR,

(errcode(ERRCODE_FEATURE_NOT_SUPPORTED),

errmsg("could not implement window PARTITION BY"),

errdetail("Window partitioning columns must be of sortable datatypes.")));

if (!grouping_is_sortable(wc->orderClause))

ereport(ERROR,

(errcode(ERRCODE_FEATURE_NOT_SUPPORTED),

errmsg("could not implement window ORDER BY"),

errdetail("Window ordering columns must be of sortable datatypes.")));

/* Okay, make the combined pathkeys */

window_sortclauses = list_concat(list_copy(wc->partitionClause),

list_copy(wc->orderClause));

window_pathkeys = make_pathkeys_for_sortclauses(root,

window_sortclauses,

tlist);

list_free(window_sortclauses);

return window_pathkeys;

}
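The key point of the function above is the order of concatenation: PARTITION BY items come first and ORDER BY items second, so rows of one partition are adjacent and sorted within it. A rough sketch of that combination step, using integer ids in place of SortGroupClause nodes, is shown below. `mini_window_sort_keys` is a hypothetical name, and unlike `make_pathkeys_for_sortclauses()` this sketch does not attempt to drop redundant keys.

```c
#include <stddef.h>

/*
 * Write the partition keys followed by the ordering keys into out[].
 * Returns the number of keys written, or 0 if they do not fit in
 * out_capacity (0 is also the result for an empty window specification).
 */
size_t
mini_window_sort_keys(const int *partition, size_t npartition,
                      const int *order, size_t norder,
                      int *out, size_t out_capacity)
{
    size_t      n = 0;
    size_t      i;

    if (npartition + norder > out_capacity)
        return 0;

    /* Partition columns are the major sort keys */
    for (i = 0; i < npartition; i++)
        out[n++] = partition[i];

    /* Ordering columns break ties within a partition */
    for (i = 0; i < norder; i++)
        out[n++] = order[i];

    return n;
}
```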

| static List * make_subplanTargetList | ( | PlannerInfo * | root, | |

| List * | tlist, | |||

| AttrNumber ** | groupColIdx, | |||

| bool * | need_tlist_eval | |||

| ) | [static] |

Definition at line 2807 of file planner.c.

References add_to_flat_tlist(), Assert, TargetEntry::expr, get_grouping_column_index(), Query::groupClause, Query::hasAggs, PlannerInfo::hasHavingQual, Query::hasWindowFuncs, Query::havingQual, IsA, lappend(), lfirst, list_copy(), list_free(), list_length(), makeTargetEntry(), NULL, palloc0(), PlannerInfo::parse, parse(), pull_var_clause(), PVC_INCLUDE_PLACEHOLDERS, PVC_RECURSE_AGGREGATES, and TargetEntry::resno.

Referenced by grouping_planner().

{

Query *parse = root->parse;

List *sub_tlist;

List *non_group_cols;

List *non_group_vars;

int numCols;

*groupColIdx = NULL;

/*

* If we're not grouping or aggregating, there's nothing to do here;

* query_planner should receive the unmodified target list.

*/

if (!parse->hasAggs && !parse->groupClause && !root->hasHavingQual &&

!parse->hasWindowFuncs)

{

*need_tlist_eval = true;

return tlist;

}

/*

* Otherwise, we must build a tlist containing all grouping columns, plus

* any other Vars mentioned in the targetlist and HAVING qual.

*/

sub_tlist = NIL;

non_group_cols = NIL;

*need_tlist_eval = false; /* only eval if not flat tlist */

numCols = list_length(parse->groupClause);

if (numCols > 0)

{

/*

* If grouping, create sub_tlist entries for all GROUP BY columns, and

* make an array showing where the group columns are in the sub_tlist.

*

* Note: with this implementation, the array entries will always be

* 1..N, but we don't want callers to assume that.

*/

AttrNumber *grpColIdx;

ListCell *tl;

grpColIdx = (AttrNumber *) palloc0(sizeof(AttrNumber) * numCols);

*groupColIdx = grpColIdx;

foreach(tl, tlist)

{

TargetEntry *tle = (TargetEntry *) lfirst(tl);

int colno;

colno = get_grouping_column_index(parse, tle);

if (colno >= 0)

{

/*

* It's a grouping column, so add it to the result tlist and

* remember its resno in grpColIdx[].

*/

TargetEntry *newtle;

newtle = makeTargetEntry(tle->expr,

list_length(sub_tlist) + 1,

NULL,

false);

sub_tlist = lappend(sub_tlist, newtle);

Assert(grpColIdx[colno] == 0); /* no dups expected */

grpColIdx[colno] = newtle->resno;

if (!(newtle->expr && IsA(newtle->expr, Var)))

*need_tlist_eval = true; /* tlist contains non Vars */

}

else

{

/*

* Non-grouping column, so just remember the expression for

* later call to pull_var_clause. There's no need for

* pull_var_clause to examine the TargetEntry node itself.

*/

non_group_cols = lappend(non_group_cols, tle->expr);

}

}

}

else

{

/*

* With no grouping columns, just pass whole tlist to pull_var_clause.

* Need (shallow) copy to avoid damaging input tlist below.

*/

non_group_cols = list_copy(tlist);

}

/*

* If there's a HAVING clause, we'll need the Vars it uses, too.

*/

if (parse->havingQual)

non_group_cols = lappend(non_group_cols, parse->havingQual);

/*

* Pull out all the Vars mentioned in non-group cols (plus HAVING), and

* add them to the result tlist if not already present. (A Var used

* directly as a GROUP BY item will be present already.) Note this

* includes Vars used in resjunk items, so we are covering the needs of

* ORDER BY and window specifications. Vars used within Aggrefs will be

* pulled out here, too.

*/

non_group_vars = pull_var_clause((Node *) non_group_cols,

PVC_RECURSE_AGGREGATES,

PVC_INCLUDE_PLACEHOLDERS);

sub_tlist = add_to_flat_tlist(sub_tlist, non_group_vars);

/* clean up cruft */

list_free(non_group_vars);

list_free(non_group_cols);

return sub_tlist;

}
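The grouping branch of the function above does two things at once: it assigns each GROUP BY column a position in the sub-targetlist, and it sets `*need_tlist_eval` when any grouping column is something other than a plain Var. The sketch below isolates that bookkeeping. `MiniTargetEntry` and `mini_assign_group_columns` are hypothetical names, and each entry is assumed to already know its GROUP BY position, which `get_grouping_column_index()` computes in the real code.

```c
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-in for a TargetEntry */
typedef struct MiniTargetEntry
{
    int         group_index;    /* 0-based GROUP BY position, or -1 if none */
    bool        is_plain_var;   /* true if the expression is a bare column */
} MiniTargetEntry;

/*
 * Walk the target list in order.  Each grouping column gets the next
 * sub-targetlist position (1-based), stored in grp_col_idx[group_index].
 * Returns how many sub-targetlist entries were created for grouping columns.
 */
size_t
mini_assign_group_columns(const MiniTargetEntry *tlist, size_t ntlist,
                          int *grp_col_idx, size_t ngroups,
                          bool *need_tlist_eval)
{
    size_t      i;
    size_t      sub_len = 0;

    for (i = 0; i < ngroups; i++)
        grp_col_idx[i] = 0;     /* 0 means "not assigned yet" */
    *need_tlist_eval = false;

    for (i = 0; i < ntlist; i++)
    {
        int         colno = tlist[i].group_index;

        if (colno < 0 || (size_t) colno >= ngroups)
            continue;           /* non-grouping column, handled elsewhere */

        sub_len++;
        grp_col_idx[colno] = (int) sub_len;

        /* A computed grouping expression forces evaluation below the Agg */
        if (!tlist[i].is_plain_var)
            *need_tlist_eval = true;
    }
    return sub_len;
}
```

The positions follow target-list order rather than GROUP BY order, so the array is a permutation of 1..N. The comment in the listing asks callers not to rely on any particular arrangement.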

| static List * make_windowInputTargetList | ( | PlannerInfo * | root, | |

| List * | tlist, | |||

| List * | activeWindows | |||

| ) | [static] |

Definition at line 3138 of file planner.c.

References add_to_flat_tlist(), Assert, bms_add_member(), bms_is_member(), TargetEntry::expr, Query::groupClause, Query::hasWindowFuncs, lappend(), lfirst, list_free(), list_length(), makeTargetEntry(), NULL, WindowClause::orderClause, PlannerInfo::parse, parse(), WindowClause::partitionClause, pull_var_clause(), PVC_INCLUDE_AGGREGATES, PVC_INCLUDE_PLACEHOLDERS, TargetEntry::ressortgroupref, and SortGroupClause::tleSortGroupRef.

Referenced by grouping_planner().

{

Query *parse = root->parse;

Bitmapset *sgrefs;

List *new_tlist;

List *flattenable_cols;

List *flattenable_vars;

ListCell *lc;

Assert(parse->hasWindowFuncs);

/*

* Collect the sortgroupref numbers of window PARTITION/ORDER BY clauses

* into a bitmapset for convenient reference below.

*/

sgrefs = NULL;

foreach(lc, activeWindows)

{

WindowClause *wc = (WindowClause *) lfirst(lc);

ListCell *lc2;

foreach(lc2, wc->partitionClause)

{

SortGroupClause *sortcl = (SortGroupClause *) lfirst(lc2);

sgrefs = bms_add_member(sgrefs, sortcl->tleSortGroupRef);

}

foreach(lc2, wc->orderClause)

{

SortGroupClause *sortcl = (SortGroupClause *) lfirst(lc2);

sgrefs = bms_add_member(sgrefs, sortcl->tleSortGroupRef);

}

}

/* Add in sortgroupref numbers of GROUP BY clauses, too */

foreach(lc, parse->groupClause)

{

SortGroupClause *grpcl = (SortGroupClause *) lfirst(lc);

sgrefs = bms_add_member(sgrefs, grpcl->tleSortGroupRef);

}

/*

* Construct a tlist containing all the non-flattenable tlist items, and

* save aside the others for a moment.

*/

new_tlist = NIL;

flattenable_cols = NIL;

foreach(lc, tlist)

{

TargetEntry *tle = (TargetEntry *) lfirst(lc);

/*

* Don't want to deconstruct window clauses or GROUP BY items. (Note

* that such items can't contain window functions, so it's okay to

* compute them below the WindowAgg nodes.)

*/

if (tle->ressortgroupref != 0 &&

bms_is_member(tle->ressortgroupref, sgrefs))

{

/* Don't want to deconstruct this value, so add to new_tlist */

TargetEntry *newtle;

newtle = makeTargetEntry(tle->expr,

list_length(new_tlist) + 1,

NULL,

false);

/* Preserve its sortgroupref marking, in case it's volatile */

newtle->ressortgroupref = tle->ressortgroupref;

new_tlist = lappend(new_tlist, newtle);

}

else

{

/*

* Column is to be flattened, so just remember the expression for

* later call to pull_var_clause. There's no need for

* pull_var_clause to examine the TargetEntry node itself.

*/

flattenable_cols = lappend(flattenable_cols, tle->expr);

}

}

/*

* Pull out all the Vars and Aggrefs mentioned in flattenable columns, and

* add them to the result tlist if not already present. (Some might be

* there already because they're used directly as window/group clauses.)

*

* Note: it's essential to use PVC_INCLUDE_AGGREGATES here, so that the

* Aggrefs are placed in the Agg node's tlist and not left to be computed

* at higher levels.

*/

flattenable_vars = pull_var_clause((Node *) flattenable_cols,

PVC_INCLUDE_AGGREGATES,

PVC_INCLUDE_PLACEHOLDERS);

new_tlist = add_to_flat_tlist(new_tlist, flattenable_vars);

/* clean up cruft */

list_free(flattenable_vars);

list_free(flattenable_cols);

return new_tlist;

}
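The function above first collects the sortgroupref numbers used by window PARTITION/ORDER BY and GROUP BY clauses, then splits the target list into entries kept whole and entries to be flattened into Vars and Aggrefs. The sketch below models both steps with a 64-bit mask in place of a Bitmapset. `mini_collect_sgrefs` and `mini_split_tlist` are hypothetical names, and the limit of 63 on reference numbers is an assumption of this example only.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Build a bit set from sortgroupref numbers; refs of 64 or more are ignored */
uint64_t
mini_collect_sgrefs(const unsigned *refs, size_t nrefs)
{
    uint64_t    set = 0;
    size_t      i;

    for (i = 0; i < nrefs; i++)
    {
        if (refs[i] < 64)
            set |= (UINT64_C(1) << refs[i]);
    }
    return set;
}

/*
 * For each target entry, set keep_whole[i] to true if its sortgroupref is
 * nonzero and present in sgrefs; otherwise the entry would be flattened.
 * Returns the number of entries kept whole.
 */
size_t
mini_split_tlist(const unsigned *tle_refs, size_t ntle,
                 uint64_t sgrefs, bool *keep_whole)
{
    size_t      kept = 0;
    size_t      i;

    for (i = 0; i < ntle; i++)
    {
        unsigned    ref = tle_refs[i];

        keep_whole[i] = (ref != 0 && ref < 64 &&
                         ((sgrefs >> ref) & 1u) != 0);
        if (keep_whole[i])
            kept++;
    }
    return kept;
}
```

An entry with sortgroupref 0 is always flattened, mirroring the `tle->ressortgroupref != 0` guard in the listing.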

| bool plan_cluster_use_sort | ( | Oid | tableOid, | |

| Oid | indexOid | |||

| ) |

Definition at line 3443 of file planner.c.

References build_simple_rel(), Query::commandType, cost_qual_eval(), cost_sort(), create_index_path(), create_seqscan_path(), CurrentMemoryContext, ForwardScanDirection, get_relation_data_width(), PlannerInfo::glob, RelOptInfo::indexlist, IndexOptInfo::indexoid, IndexOptInfo::indexprs, RangeTblEntry::inFromCl, RangeTblEntry::inh, RangeTblEntry::lateral, lfirst, list_make1, maintenance_work_mem, makeNode, NIL, NULL, RelOptInfo::pages, PlannerInfo::parse, IndexPath::path, QualCost::per_tuple, PlannerInfo::planner_cxt, PlannerInfo::query_level, RangeTblEntry::relid, RangeTblEntry::relkind, RELOPT_BASEREL, RelOptInfo::rows, Query::rtable, RangeTblEntry::rtekind, setup_simple_rel_arrays(), QualCost::startup, Path::total_cost, PlannerInfo::total_table_pages, RelOptInfo::tuples, RelOptInfo::width, and PlannerInfo::wt_param_id.

Referenced by copy_heap_data().

{
    PlannerInfo *root;
    Query *query;
    PlannerGlobal *glob;
    RangeTblEntry *rte;
    RelOptInfo *rel;
    IndexOptInfo *indexInfo;
    QualCost indexExprCost;
    Cost comparisonCost;
    Path *seqScanPath;
    Path seqScanAndSortPath;
    IndexPath *indexScanPath;
    ListCell *lc;

    /* Set up mostly-dummy planner state */
    query = makeNode(Query);
    query->commandType = CMD_SELECT;

    glob = makeNode(PlannerGlobal);

    root = makeNode(PlannerInfo);
    root->parse = query;
    root->glob = glob;
    root->query_level = 1;
    root->planner_cxt = CurrentMemoryContext;
    root->wt_param_id = -1;

    /* Build a minimal RTE for the rel */
    rte = makeNode(RangeTblEntry);
    rte->rtekind = RTE_RELATION;
    rte->relid = tableOid;
    rte->relkind = RELKIND_RELATION; /* Don't be too picky. */
    rte->lateral = false;
    rte->inh = false;
    rte->inFromCl = true;
    query->rtable = list_make1(rte);

    /* Set up RTE/RelOptInfo arrays */
    setup_simple_rel_arrays(root);

    /* Build RelOptInfo */
    rel = build_simple_rel(root, 1, RELOPT_BASEREL);

    /* Locate IndexOptInfo for the target index */
    indexInfo = NULL;
    foreach(lc, rel->indexlist)
    {
        indexInfo = (IndexOptInfo *) lfirst(lc);
        if (indexInfo->indexoid == indexOid)
            break;
    }

    /*
     * It's possible that get_relation_info did not generate an IndexOptInfo
     * for the desired index; this could happen if it's not yet reached its
     * indcheckxmin usability horizon, or if it's a system index and we're
     * ignoring system indexes. In such cases we should tell CLUSTER to not
     * trust the index contents but use seqscan-and-sort.
     */
    if (lc == NULL) /* not in the list? */
        return true; /* use sort */

    /*
     * Rather than doing all the pushups that would be needed to use
     * set_baserel_size_estimates, just do a quick hack for rows and width.
     */
    rel->rows = rel->tuples;
    rel->width = get_relation_data_width(tableOid, NULL);

    root->total_table_pages = rel->pages;

    /*
     * Determine eval cost of the index expressions, if any. We need to
     * charge twice that amount for each tuple comparison that happens during
     * the sort, since tuplesort.c will have to re-evaluate the index
     * expressions each time. (XXX that's pretty inefficient...)
     */
    cost_qual_eval(&indexExprCost, indexInfo->indexprs, root);
    comparisonCost = 2.0 * (indexExprCost.startup + indexExprCost.per_tuple);

    /* Estimate the cost of seq scan + sort */
    seqScanPath = create_seqscan_path(root, rel, NULL);
    cost_sort(&seqScanAndSortPath, root, NIL,
              seqScanPath->total_cost, rel->tuples, rel->width,
              comparisonCost, maintenance_work_mem, -1.0);

    /* Estimate the cost of index scan */
    indexScanPath = create_index_path(root, indexInfo,
                                      NIL, NIL, NIL, NIL, NIL,
                                      ForwardScanDirection, false,
                                      NULL, 1.0);

    return (seqScanAndSortPath.total_cost < indexScanPath->path.total_cost);
}
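
The decision made above reduces to two steps: derive a per-comparison charge from the index-expression cost (doubled, as the source comment explains), then compare the estimated seqscan-plus-sort total against the index scan total. The following is a minimal sketch of that arithmetic only. It is not PostgreSQL's `cost_sort()`: the `N * log2(N)` sort model, the `base_cmp_cost` parameter, and every `toy_`/`Toy` name are assumptions made for illustration.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical stand-in for QualCost: one-time and per-tuple evaluation cost. */
typedef struct ToyQualCost
{
    double startup;
    double per_tuple;
} ToyQualCost;

/* The expression cost is charged twice per comparison, mirroring comparisonCost. */
double toy_comparison_cost(ToyQualCost expr_cost)
{
    return 2.0 * (expr_cost.startup + expr_cost.per_tuple);
}

/* Number of halvings needed to bring n down to 1, i.e. log2(n) rounded up. */
double toy_log2_ceil(double n)
{
    double steps = 0.0;

    while (n > 1.0)
    {
        n /= 2.0;
        steps += 1.0;
    }
    return steps;
}

/*
 * ASSUMPTION: a toy sort model, input cost plus tuples * log2(tuples)
 * comparisons. The real cost_sort() also accounts for memory limits and I/O.
 */
double toy_sort_total_cost(double input_cost, double tuples,
                           double base_cmp_cost, double extra_cmp_cost)
{
    assert(tuples >= 0.0);
    return input_cost +
        (base_cmp_cost + extra_cmp_cost) * tuples * toy_log2_ceil(tuples);
}

/* Same shape as the final return statement: true means "use sort". */
bool toy_use_sort(double sort_total_cost, double indexscan_total_cost)
{
    return sort_total_cost < indexscan_total_cost;
}
```

In this toy model, more expensive index expressions raise the per-comparison charge and therefore push the decision toward the index scan, which is the effect the doubled `comparisonCost` has in the real function.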

PlannedStmt* planner(Query *parse, int cursorOptions, ParamListInfo boundParams)

Definition at line 131 of file planner.c.

References planner_hook, and standard_planner().

Referenced by BeginCopy(), and pg_plan_query().

{
    PlannedStmt *result;

    if (planner_hook)
        result = (*planner_hook) (parse, cursorOptions, boundParams);
    else
        result = standard_planner(parse, cursorOptions, boundParams);
    return result;
}
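
The body above is a function-pointer hook: if an extension has installed `planner_hook`, it is called instead of `standard_planner()`. The pattern can be sketched in isolation as follows. All types and names here (`ToyQuery`, `ToyPlan`, `toy_planner_hook`, and so on) are hypothetical stand-ins, not PostgreSQL definitions, and the example hook simply delegates to the standard routine and tags the result.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-ins for Query and PlannedStmt. */
typedef struct ToyQuery
{
    int id;
} ToyQuery;

typedef struct ToyPlan
{
    int query_id;
    int via_hook;               /* 1 if a hook produced this plan */
} ToyPlan;

typedef ToyPlan (*toy_planner_hook_type) (const ToyQuery *query);

/* NULL means no extension has installed a hook. */
toy_planner_hook_type toy_planner_hook = NULL;

/* Default planning routine. */
ToyPlan toy_standard_planner(const ToyQuery *query)
{
    ToyPlan plan = { query->id, 0 };

    return plan;
}

/* Entry point: dispatch to the hook if set, else to the default routine. */
ToyPlan toy_planner(const ToyQuery *query)
{
    assert(query != NULL);
    if (toy_planner_hook)
        return (*toy_planner_hook) (query);
    return toy_standard_planner(query);
}

/* Example hook: reuse the default planner, then mark the result. */
ToyPlan toy_tagging_hook(const ToyQuery *query)
{
    ToyPlan plan = toy_standard_planner(query);

    plan.via_hook = 1;
    return plan;
}
```

Assigning `toy_planner_hook = toy_tagging_hook;` reroutes every later `toy_planner()` call through the hook. Because the hook can still call the default routine itself, it can wrap the standard behavior rather than replace it.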

static List * postprocess_setop_tlist(List *new_tlist, List *orig_tlist)

Definition at line 3008 of file planner.c.

References Assert, elog, ERROR, lfirst, list_head(), lnext, NULL, TargetEntry::resjunk, TargetEntry::resno, and TargetEntry::ressortgroupref.

Referenced by grouping_planner().

{
    ListCell *l;
    ListCell *orig_tlist_item = list_head(orig_tlist);

    foreach(l, new_tlist)
    {
        TargetEntry *new_tle = (TargetEntry *) lfirst(l);
        TargetEntry *orig_tle;

        /* ignore resjunk columns in setop result */
        if (new_tle->resjunk)
            continue;

        Assert(orig_tlist_item != NULL);
        orig_tle = (TargetEntry *) lfirst(orig_tlist_item);
        orig_tlist_item = lnext(orig_tlist_item);
        if (orig_tle->resjunk) /* should not happen */
            elog(ERROR, "resjunk output columns are not implemented");
        Assert(new_tle->resno == orig_tle->resno);
        new_tle->ressortgroupref = orig_tle->ressortgroupref;
    }
    if (orig_tlist_item != NULL)
        elog(ERROR, "resjunk output columns are not implemented");
    return new_tlist;
}
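
The loop above walks two target lists in step: junk entries in the new list are skipped, each remaining entry is paired with the next original entry, and the sort/group reference is copied across. The sketch below shows that pairing on plain arrays. `ToyTargetEntry` and `toy_copy_sortgrouprefs` are hypothetical, and where the real code uses `Assert` or `elog(ERROR, ...)` this version returns -1, which is a simplification made for the example.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical cut-down TargetEntry with only the fields the loop touches. */
typedef struct ToyTargetEntry
{
    int      resno;
    unsigned ressortgroupref;
    bool     resjunk;
} ToyTargetEntry;

/*
 * Copy ressortgroupref from orig_tlist into the non-junk entries of
 * new_tlist, pairing them up in order.  Returns 0 on success, or -1 if the
 * lists do not line up (the real code raises an error instead).
 */
int toy_copy_sortgrouprefs(ToyTargetEntry *new_tlist, size_t n_new,
                           const ToyTargetEntry *orig_tlist, size_t n_orig)
{
    size_t oi = 0;              /* cursor into orig_tlist */

    assert(new_tlist != NULL || n_new == 0);

    for (size_t i = 0; i < n_new; i++)
    {
        const ToyTargetEntry *orig;

        if (new_tlist[i].resjunk)
            continue;           /* junk columns have no original counterpart */
        if (oi >= n_orig)
            return -1;          /* ran out of original entries */
        orig = &orig_tlist[oi++];
        if (orig->resjunk || new_tlist[i].resno != orig->resno)
            return -1;          /* unexpected junk column or position mismatch */
        new_tlist[i].ressortgroupref = orig->ressortgroupref;
    }
    /* Leftover original entries mean the lists did not match. */
    return (oi == n_orig) ? 0 : -1;
}
```

The final check mirrors the test after the loop in the original: every original entry must have been consumed, otherwise the two lists were not the same shape.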

static Node * preprocess_expression(PlannerInfo *root, Node *expr, int kind)

Definition at line 617 of file planner.c.

References canonicalize_qual(), eval_const_expressions(), EXPRKIND_QUAL, EXPRKIND_RTFUNC, EXPRKIND_VALUES, flatten_join_alias_vars(), PlannerInfo::hasJoinRTEs, Query::hasSubLinks, make_ands_implicit(), NULL, PlannerInfo::parse, pprint(), PlannerInfo::query_level, SS_process_sublinks(), and SS_replace_correlation_vars().

Referenced by preprocess_phv_expression(), preprocess_qual_conditions(), and subquery_planner().

{

/*

* Fall out quickly if expression is empty. This occurs often enough to

* be worth checking. Note that null->null is the correct conversion for

* implicit-AND result format, too.

*/

if (expr == NULL)

return NULL;

/*

* If the query has any join RTEs, replace join alias variables with

* base-relation variables. We must do this before sublink processing,

* else sublinks expanded out from join aliases would not get processed.

* We can skip it in non-lateral RTE functions and VALUES lists, however,

* since they can't contain any Vars of the current query level.

*/

if (root->hasJoinRTEs &&

!(kind == EXPRKIND_RTFUNC || kind == EXPRKIND_VALUES))

expr = flatten_join_alias_vars(root, expr);

/*

* Simplify constant expressions.

*

* Note: an essential effect of this is to convert named-argument function

* calls to positional notation and insert the current actual values of