Network Diagram

Publicly editable image source at https://docs.google.com/drawings/d/1GX3FXmkz3c_tUDpZXUVMpyIxicWuHs5fNsHvYNjwNNk/edit?usp=sharing

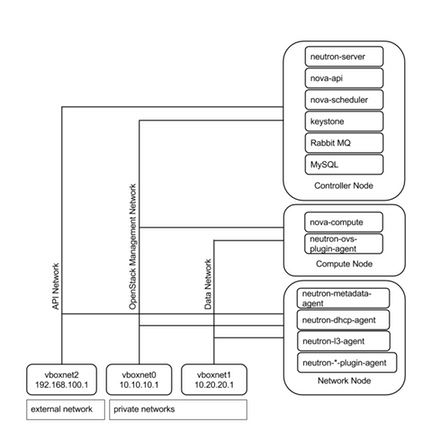

vboxnet0, vboxnet1 and vboxnet2 are virtual networks that VirtualBox sets up between your host machine and the virtual machines; they are how the host communicates with the VMs. The VirtualBox VMs in turn use these networks as the OpenStack networks, so that the OpenStack services can communicate with each other.
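Each Controller Node address used later in this section has to fall inside the subnet of the network it is attached to: 10.10.10.51 on vboxnet0 and 192.168.100.51 on vboxnet2. A minimal Python 3 sketch of that check follows. It assumes the /24 subnets implied by the netmask 255.255.255.0 in the interfaces file; the dictionary and function names are hypothetical.

```python
import ipaddress

# Subnets of the host-only networks used by the Controller Node (assumed /24,
# from the netmask 255.255.255.0 in /etc/network/interfaces).
SUBNETS = {
    "vboxnet0": ipaddress.ip_network("10.10.10.0/24"),     # OpenStack management network
    "vboxnet2": ipaddress.ip_network("192.168.100.0/24"),  # OpenStack API / external network
}

# Static addresses the guide assigns to the Controller Node on each network.
CONTROLLER_ADDRESSES = {
    "vboxnet0": ipaddress.ip_address("10.10.10.51"),
    "vboxnet2": ipaddress.ip_address("192.168.100.51"),
}


def misplaced_addresses(subnets, addresses):
    """Return the (network, address) pairs whose address lies outside its subnet."""
    return [
        (net, addr)
        for net, addr in addresses.items()
        if addr not in subnets[net]
    ]


if __name__ == "__main__":
    problems = misplaced_addresses(SUBNETS, CONTROLLER_ADDRESSES)
    print("address plan OK" if not problems else problems)
```

The same check works for the other nodes once their addresses are added to a dictionary of the same shape.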

Controller Node

Start the Controller Node that you set up in the previous section.

Preparing Ubuntu 13.04/12.04

After installing Ubuntu Server, switch to the root user:

$ sudo su

Add the Icehouse repository from the Ubuntu Cloud Archive:

# apt-get install ubuntu-cloud-keyring python-software-properties software-properties-common python-keyring

# echo deb http://ubuntu-cloud.archive.canonical.com/ubuntu precise-updates/icehouse main >> /etc/apt/sources.list.d/icehouse.list

Update your system:

# apt-get update

# apt-get upgrade

# apt-get dist-upgrade

Networking

Configure your network by editing the /etc/network/interfaces file as follows:

# This file describes the network interfaces available on your system
# and how to activate them. For more information, see interfaces(5).
# This file is configured for OpenStack Control Node by dguitarbite.
# Note: The choice of IP addresses is important. Changing them may break some OpenStack services,
# as these IP addresses are essential for communication between them.

# The loopback network interface
auto lo
iface lo inet loopback

# Virtual Box vboxnet0 - OpenStack Management Network
# (Virtual Box Network Adapter 1)
auto eth0
iface eth0 inet static
address 10.10.10.51
netmask 255.255.255.0
gateway 10.10.10.1

# Virtual Box vboxnet2 - for exposing OpenStack API over external network
# (Virtual Box Network Adapter 2)
auto eth1
iface eth1 inet static
address 192.168.100.51
netmask 255.255.255.0
gateway 192.168.100.1

# The primary network interface - Virtual Box NAT connection
# (Virtual Box Network Adapter 3)
auto eth2
iface eth2 inet dhcp
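Before restarting networking, you can double-check the static addresses in the edited file. The Python 3 sketch below is a deliberately minimal parser: it assumes the simple layout used here (an `iface <name> inet static` line followed by an `address <ip>` line) and ignores every other ifupdown feature. The function name and the EXPECTED mapping are hypothetical.

```python
def static_addresses(interfaces_text):
    """Map each statically configured interface to its 'address' value.

    Minimal sketch: it only understands 'iface <name> inet static' stanzas
    followed by an 'address <ip>' line and ignores all other ifupdown syntax.
    """
    addresses = {}
    current = None  # interface whose stanza is currently being read
    for raw_line in interfaces_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and indentation
        if not line:
            continue
        words = line.split()
        if words[0] == "iface":
            # A new stanza starts; track it only if it is 'inet static'.
            is_static = len(words) >= 4 and words[2] == "inet" and words[3] == "static"
            current = words[1] if is_static else None
        elif words[0] == "address" and current is not None and len(words) >= 2:
            addresses[current] = words[1]
    return addresses


# Addresses this guide expects on the Controller Node.
EXPECTED = {"eth0": "10.10.10.51", "eth1": "192.168.100.51"}

if __name__ == "__main__":
    try:
        with open("/etc/network/interfaces") as f:
            found = static_addresses(f.read())
    except OSError as exc:
        print("cannot read /etc/network/interfaces:", exc)
    else:
        print("OK" if found == EXPECTED else {"expected": EXPECTED, "found": found})
```

eth2 is left out on purpose: it gets its address from the VirtualBox NAT DHCP server.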

After saving the interfaces file, restart the networking service and check the result:

# service networking restart

# ifconfig

Each network interface card should now show the IP address assigned to it in the interfaces file.

SSH from the Host

Create an SSH key pair for your Control Node, following the same steps you used for your host machine at the start of this article.

To SSH into the Control Node from the host machine, run the command below, replacing <username> with the user account you created when installing Ubuntu Server:

$ ssh <username>@10.10.10.51

$ sudo su

Because you are now working over SSH from the host, you can paste commands and configuration from your host's clipboard.

MySQL

Install MySQL:

# apt-get install -y mysql-server python-mysqldb

Configure MySQL to listen on all interfaces, so that it accepts connections from other hosts:

# sed -i 's/127.0.0.1/0.0.0.0/g' /etc/mysql/my.cnf

# service mysql restart
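The sed command above replaces every occurrence of 127.0.0.1 in my.cnf; the line that matters for remote access is bind-address in the [mysqld] section. A short Python 3 sketch to confirm the value after the restart is shown below. It assumes the single-file layout of Ubuntu's /etc/mysql/my.cnf and does not follow `!includedir` directives; the function name is hypothetical.

```python
def mysqld_bind_address(cnf_text):
    """Return the bind-address value from the [mysqld] section, or None if it is not set."""
    section = None
    for raw_line in cnf_text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith(("#", ";", "!")):
            continue  # skip blanks, comments and !include directives
        if line.startswith("[") and line.endswith("]"):
            section = line[1:-1].strip()
        elif section == "mysqld" and "=" in line:
            key, value = (part.strip() for part in line.split("=", 1))
            # MySQL treats '-' and '_' in option names as equivalent.
            if key.replace("_", "-") == "bind-address":
                return value
    return None


if __name__ == "__main__":
    try:
        with open("/etc/mysql/my.cnf") as f:
            print("bind-address =", mysqld_bind_address(f.read()))
    except OSError as exc:
        print("cannot read /etc/mysql/my.cnf:", exc)
```

On the Controller Node this should report 0.0.0.0.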

RabbitMQ

Install RabbitMQ:

# apt-get install -y rabbitmq-server

Install the NTP service:

# apt-get install -y ntp

Create a database and a user for each OpenStack service:

$ mysql -u root -p

mysql> CREATE DATABASE keystone;

mysql> GRANT ALL ON keystone.* TO 'keystoneUser'@'%' IDENTIFIED BY 'keystonePass';

mysql> CREATE DATABASE glance;

mysql> GRANT ALL ON glance.* TO 'glanceUser'@'%' IDENTIFIED BY 'glancePass';

mysql> CREATE DATABASE neutron;

mysql> GRANT ALL ON neutron.* TO 'neutronUser'@'%' IDENTIFIED BY 'neutronPass';

mysql> CREATE DATABASE nova;

mysql> GRANT ALL ON nova.* TO 'novaUser'@'%' IDENTIFIED BY 'novaPass';

mysql> CREATE DATABASE cinder;

mysql> GRANT ALL ON cinder.* TO 'cinderUser'@'%' IDENTIFIED BY 'cinderPass';

mysql> quit;
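The ten statements above follow one pattern per service: database `<svc>`, user `'<svc>User'` and password `'<svc>Pass'`, with the `'%'` host allowing connections from any machine. The Python 3 sketch below generates the same statements, which is handy if you add services later. The script name in the usage comment is hypothetical, and the passwords are the guide's sample values.

```python
# Services that get their own database in this guide.
SERVICES = ["keystone", "glance", "neutron", "nova", "cinder"]


def database_statements(services):
    """Return the CREATE DATABASE and GRANT statements for each service, in order."""
    statements = []
    for svc in services:
        statements.append("CREATE DATABASE {0};".format(svc))
        statements.append(
            "GRANT ALL ON {0}.* TO '{0}User'@'%' IDENTIFIED BY '{0}Pass';".format(svc)
        )
    return statements


if __name__ == "__main__":
    # Example (hypothetical file name): python3 make_databases.py | mysql -u root -p
    print("\n".join(database_statements(SERVICES)))
```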

Other

Install the VLAN and bridge utilities:

# apt-get install -y vlan bridge-utils

Enable IP forwarding:

# sed -i 's/#net.ipv4.ip_forward=1/net.ipv4.ip_forward=1/' /etc/sysctl.conf

Also add the following two lines to /etc/sysctl.conf:

net.ipv4.conf.all.rp_filter=0
net.ipv4.conf.default.rp_filter=0

To apply these settings without rebooting, run the following:

# sysctl net.ipv4.ip_forward=1

# sysctl net.ipv4.conf.all.rp_filter=0

# sysctl net.ipv4.conf.default.rp_filter=0

# sysctl -p
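Each sysctl name maps to a file under /proc/sys, with the dots replaced by directory separators (net.ipv4.ip_forward is /proc/sys/net/ipv4/ip_forward). A small Python 3 sketch can use that mapping to confirm that the three values above are active. The function names are hypothetical, and the dot-to-slash mapping is only valid for names without dots inside a component, which holds for these three.

```python
import os


def read_sysctl(name, proc_root="/proc/sys"):
    """Read a kernel parameter such as 'net.ipv4.ip_forward' from /proc/sys."""
    path = os.path.join(proc_root, *name.split("."))
    with open(path) as f:
        return f.read().strip()


# Values this guide expects after running the sysctl commands above.
EXPECTED = {
    "net.ipv4.ip_forward": "1",
    "net.ipv4.conf.all.rp_filter": "0",
    "net.ipv4.conf.default.rp_filter": "0",
}


def sysctl_mismatches(expected, proc_root="/proc/sys"):
    """Return {name: actual value} for every parameter that differs from the expected value."""
    actual = {name: read_sysctl(name, proc_root) for name in expected}
    return {name: value for name, value in actual.items() if value != expected[name]}


if __name__ == "__main__":
    try:
        print(sysctl_mismatches(EXPECTED) or "kernel parameters OK")
    except OSError as exc:  # e.g. not running on Linux
        print("cannot read /proc/sys:", exc)
```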

Keystone

Keystone is an OpenStack project that provides Identity, Token, Catalog and Policy services for use specifically by projects in the OpenStack family. It implements OpenStack’s Identity API.

Install Keystone packages:

# apt-get install -y keystone

Point the connection attribute in /etc/keystone/keystone.conf to the new database:

connection = mysql://keystoneUser:keystonePass@10.10.10.51/keystone

Restart the identity service then synchronize the database:

# service keystone restart

# keystone-manage db_sync

Populate the Keystone database by running the two scripts keystone_basic.sh and keystone_endpoints_basic.sh:

$ chmod +x keystone_basic.sh

$ chmod +x keystone_endpoints_basic.sh

$ ./keystone_basic.sh

$ ./keystone_endpoints_basic.sh

Create a simple credentials file:

$ nano Credentials.sh

Paste the following into it:

export OS_TENANT_NAME=admin
export OS_USERNAME=admin
export OS_PASSWORD=admin_pass
export OS_AUTH_URL="http://192.168.100.51:5000/v2.0/"

Load the above credentials:

$ source Credentials.sh

To test Keystone, we use a simple CLI command:

$ keystone user-list
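The keystone client talks to Keystone's v2.0 API at OS_AUTH_URL. To see what happens underneath, the Python 3 sketch below requests a token directly: it POSTs a passwordCredentials body to `<OS_AUTH_URL>/tokens` and prints the token id and the public endpoints from the service catalog. It reads the OS_* variables exported by your credentials file; the function names are hypothetical, and it is an illustration rather than a replacement for the keystone client.

```python
import json
import os
import urllib.request


def token_request_body(tenant, username, password):
    """Build the JSON body of a Keystone v2.0 password-credentials token request."""
    return {
        "auth": {
            "tenantName": tenant,
            "passwordCredentials": {"username": username, "password": password},
        }
    }


def get_token(auth_url, tenant, username, password, timeout=10):
    """POST to <auth_url>/tokens and return the decoded JSON reply."""
    url = auth_url.rstrip("/") + "/tokens"
    data = json.dumps(token_request_body(tenant, username, password)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    env = os.environ
    if "OS_AUTH_URL" in env:
        reply = get_token(env["OS_AUTH_URL"], env["OS_TENANT_NAME"],
                          env["OS_USERNAME"], env["OS_PASSWORD"])
        # A v2.0 reply carries the token and the service catalog under "access".
        print(reply["access"]["token"]["id"])
        for service in reply["access"]["serviceCatalog"]:
            for endpoint in service["endpoints"]:
                print(service["type"], endpoint["publicURL"])
    else:
        print("OS_AUTH_URL is not set; source your credentials file first")
```

If the endpoint scripts ran correctly, the catalog lists one entry per registered service.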

Glance

The OpenStack Glance project provides services for discovering, registering, and retrieving virtual machine images. Glance has a RESTful API that allows querying of VM image metadata as well as retrieval of the actual image.

VM images made available through Glance can be stored in a variety of locations from simple file systems to object-storage systems like the OpenStack Swift project.

Glance, as with all OpenStack projects, is written with the following design guidelines in mind:

Component based architecture: Quickly adds new behaviors

Highly available: Scales to very serious workloads

Fault tolerant: Isolated processes avoid cascading failures

Recoverable: Failures should be easy to diagnose, debug, and rectify

Open standards: Be a reference implementation for a community-driven API

Install Glance

# apt-get install -y glance

Update /etc/glance/glance-api-paste.ini:

[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
delay_auth_decision = true
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = glance
admin_password = service_pass

Update /etc/glance/glance-registry-paste.ini:

[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = glance
admin_password = service_pass

Update /etc/glance/glance-api.conf:

sql_connection = mysql://glanceUser:glancePass@10.10.10.51/glance

[keystone_authtoken]
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = glance
admin_password = service_pass

[paste_deploy]
flavor = keystone

Update /etc/glance/glance-registry.conf:

sql_connection = mysql://glanceUser:glancePass@10.10.10.51/glance

[keystone_authtoken]
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = glance
admin_password = service_pass

[paste_deploy]
flavor = keystone

Restart the glance-api and glance-registry services:

# service glance-api restart; service glance-registry restart

Synchronize the Glance database:

# glance-manage db_sync

To test Glance, register the “cirros cloud image” directly from its download URL on the internet:

$ glance image-create --name OS4Y_Cirros --is-public true --container-format bare --disk-format qcow2 --location https://launchpad.net/cirros/trunk/0.3.0/+download/cirros-0.3.0-x86_64-disk.img

Check that the image was registered successfully:

$ glance image-list
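glance image-list is a client for Glance's RESTful API. The Python 3 sketch below makes the equivalent v1 call, `GET /v1/images` on port 9292 with the token in the X-Auth-Token header, and prints the image names. It assumes the API address 10.10.10.51:9292 that nova.conf uses for glance_api_servers; the OS_TOKEN environment variable (a Keystone token id) is a hypothetical convention for this sketch, as are the function names.

```python
import json
import os
import urllib.request

GLANCE_URL = "http://10.10.10.51:9292"  # assumed Glance API endpoint on the Controller Node


def list_images(token, glance_url=GLANCE_URL, timeout=10):
    """Call the Glance v1 API (GET /v1/images) and return the decoded JSON."""
    request = urllib.request.Request(
        glance_url + "/v1/images", headers={"X-Auth-Token": token}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def image_names(listing):
    """Extract the image names from a /v1/images response."""
    return [image["name"] for image in listing.get("images", [])]


if __name__ == "__main__":
    token = os.environ.get("OS_TOKEN")  # hypothetical: a token id obtained from Keystone
    if token:
        print(image_names(list_images(token)))
    else:
        print("set OS_TOKEN to a Keystone token id first")
```

After the step above, the list should contain OS4Y_Cirros.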

Neutron

Neutron is an OpenStack project to provide “network connectivity as a service” between interface devices (e.g., vNICs) managed by other OpenStack services (e.g., nova).

Install the Neutron Server and the Open vSwitch package collection:

# apt-get install -y neutron-server

Edit /etc/neutron/plugins/openvswitch/ovs_neutron_plugin.ini:

[database]
connection = mysql://neutronUser:neutronPass@10.10.10.51/neutron

#Under the OVS section
[ovs]
tenant_network_type = gre
tunnel_id_ranges = 1:1000
enable_tunneling = True

[agent]
tunnel_types = gre

#Firewall driver for realizing neutron security group function
[securitygroup]
firewall_driver = neutron.agent.linux.iptables_firewall.OVSHybridIptablesFirewallDriver
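The GRE settings in this file depend on each other: a GRE tenant network type needs tunnelling enabled, a tunnel ID range, and gre among the agent's tunnel types. The Python 3 sketch below reads the file with configparser and reports settings that disagree. The checks are this sketch's own reading of the settings above, not an official Neutron validator, and the function name is hypothetical.

```python
import configparser


def gre_settings_problems(ini_text):
    """Return a list of inconsistencies among the GRE settings of the OVS plugin file."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(ini_text)
    problems = []
    if parser.get("ovs", "tenant_network_type", fallback="") != "gre":
        return problems  # nothing to check for non-GRE setups
    if not parser.getboolean("ovs", "enable_tunneling", fallback=False):
        problems.append("[ovs] enable_tunneling must be True for GRE tenant networks")
    if not parser.get("ovs", "tunnel_id_ranges", fallback=""):
        problems.append("[ovs] tunnel_id_ranges is empty")
    tunnel_types = parser.get("agent", "tunnel_types", fallback="")
    if "gre" not in [t.strip() for t in tunnel_types.split(",")]:
        problems.append("[agent] tunnel_types does not include gre")
    return problems


if __name__ == "__main__":
    path = "/etc/neutron/plugins/openvswitch/ovs_neutron_plugin.ini"
    try:
        with open(path) as f:
            print(gre_settings_problems(f.read()) or "GRE settings look consistent")
    except (OSError, configparser.Error) as exc:
        print("cannot check", path, "-", exc)
```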

Edit /etc/neutron/api-paste.ini:

[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = neutron
admin_password = service_pass

Edit /etc/neutron/neutron.conf:

rabbit_host = 10.10.10.51

[keystone_authtoken]
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = neutron
admin_password = service_pass
signing_dir = /var/lib/neutron/keystone-signing

[database]
connection = mysql://neutronUser:neutronPass@10.10.10.51/neutron

Restart Neutron services:

# service neutron-server restart

Nova

Nova is the project name for OpenStack Compute, a cloud computing fabric controller and the main part of an IaaS system. Individuals and organizations can use Nova to host and manage their own cloud computing systems. Nova originated as a project out of NASA Ames Research Center.

Nova is written with the following design guidelines in mind:

Component based architecture: Quickly adds new behaviors.

Highly available: Scales to very serious workloads.

Fault-Tolerant: Isolated processes avoid cascading failures.

Recoverable: Failures should be easy to diagnose, debug, and rectify.

Open standards: Be a reference implementation for a community-driven API.

API compatibility: Nova strives to be API-compatible with popular systems like Amazon EC2.

Install nova components:

# apt-get install -y nova-novncproxy novnc nova-api nova-ajax-console-proxy nova-cert nova-conductor nova-consoleauth nova-doc nova-scheduler python-novaclient

Edit /etc/nova/api-paste.ini:

[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = nova
admin_password = service_pass
signing_dir = /tmp/keystone-signing-nova
# Workaround for https://bugs.launchpad.net/nova/+bug/1154809
auth_version = v2.0

Edit /etc/nova/nova.conf:

[DEFAULT]
logdir=/var/log/nova
state_path=/var/lib/nova
lock_path=/run/lock/nova
verbose=True
api_paste_config=/etc/nova/api-paste.ini
compute_scheduler_driver=nova.scheduler.simple.SimpleScheduler
rabbit_host=10.10.10.51
nova_url=http://10.10.10.51:8774/v1.1/
sql_connection=mysql://novaUser:novaPass@10.10.10.51/nova
root_helper=sudo nova-rootwrap /etc/nova/rootwrap.conf

# Auth
use_deprecated_auth=false
auth_strategy=keystone

# Imaging service
glance_api_servers=10.10.10.51:9292
image_service=nova.image.glance.GlanceImageService

# Vnc configuration
novnc_enabled=true
novncproxy_base_url=http://192.168.100.51:6080/vnc_auto.html
novncproxy_port=6080
vncserver_proxyclient_address=10.10.10.51
vncserver_listen=0.0.0.0

# Network settings
network_api_class=nova.network.neutronv2.api.API
neutron_url=http://10.10.10.51:9696
neutron_auth_strategy=keystone
neutron_admin_tenant_name=service
neutron_admin_username=neutron
neutron_admin_password=service_pass
neutron_admin_auth_url=http://10.10.10.51:35357/v2.0
libvirt_vif_driver=nova.virt.libvirt.vif.LibvirtHybridOVSBridgeDriver
linuxnet_interface_driver=nova.network.linux_net.LinuxOVSInterfaceDriver
#If you want Neutron + Nova Security groups
firewall_driver=nova.virt.firewall.NoopFirewallDriver
security_group_api=neutron
#If you want Nova Security groups only, comment the two lines above and uncomment line -1-.
#-1-firewall_driver=nova.virt.libvirt.firewall.IptablesFirewallDriver

#Metadata
service_neutron_metadata_proxy = True
neutron_metadata_proxy_shared_secret = helloOpenStack

# Compute #
compute_driver=libvirt.LibvirtDriver

# Cinder #
volume_api_class=nova.volume.cinder.API
osapi_volume_listen_port=5900
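In this single-controller setup, every URL in nova.conf should point at one of the Controller Node's two addresses (10.10.10.51 or 192.168.100.51), so a mistyped address can be caught mechanically. The Python 3 sketch below parses the file with configparser and reports URL-valued options whose host is not one of those addresses. The option list covers the URL settings shown above; the names are hypothetical.

```python
import configparser
from urllib.parse import urlsplit

# Addresses of the Controller Node in this guide (management and API networks).
CONTROLLER_IPS = {"10.10.10.51", "192.168.100.51"}

# nova.conf options from the file above whose values are URLs.
URL_OPTIONS = ["nova_url", "sql_connection", "novncproxy_base_url",
               "neutron_url", "neutron_admin_auth_url"]


def foreign_hosts(nova_conf_text, allowed=CONTROLLER_IPS):
    """Return {option: host} for URL options whose host is not an allowed address."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(nova_conf_text)
    result = {}
    for option in URL_OPTIONS:
        value = parser.get("DEFAULT", option, fallback=None)
        if value is None:
            continue  # option not set in this file
        host = urlsplit(value).hostname
        if host not in allowed:
            result[option] = host
    return result


if __name__ == "__main__":
    try:
        with open("/etc/nova/nova.conf") as f:
            print(foreign_hosts(f.read()) or "all URL options point at the Controller Node")
    except (OSError, configparser.Error) as exc:
        print("cannot check /etc/nova/nova.conf:", exc)
```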

Synchronize your database:

# nova-manage db sync

Restart nova-* services (all nova services):

# cd /etc/init.d/; for i in $( ls nova-* ); do service $i restart; done

Check for the smiley faces (:-)) next to the nova-* services to confirm your installation:

# nova-manage service list
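The same check can be automated. The Python 3 sketch below runs `nova-manage service list` and reports services that are down. It assumes the usual column layout, in which the first two columns are the binary and the host and the State column shows :-) for a live service and XXX for one that has stopped reporting. The function name is hypothetical, and the script must run as root like the command itself.

```python
import subprocess


def service_states(listing):
    """Map 'binary@host' to True (':-)') or False ('XXX') from nova-manage service list output."""
    states = {}
    for line in listing.splitlines():
        fields = line.split()
        if len(fields) < 2 or not fields[0].startswith("nova-"):
            continue  # skip the header row and blank lines
        key = fields[0] + "@" + fields[1]
        if ":-)" in fields:
            states[key] = True
        elif "XXX" in fields:
            states[key] = False
    return states


if __name__ == "__main__":
    try:
        output = subprocess.check_output(["nova-manage", "service", "list"],
                                         universal_newlines=True)
    except (OSError, subprocess.CalledProcessError) as exc:
        print("could not run nova-manage:", exc)
    else:
        down = [name for name, alive in service_states(output).items() if not alive]
        print("all nova services are up" if not down else "down: " + ", ".join(down))
```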

Cinder

Cinder is an OpenStack project to provide “block storage as a service”. Like Nova, it is written with the following design guidelines in mind:

Component based architecture: Quickly adds new behavior.

Highly available: Scales to very serious workloads.

Fault-Tolerant: Isolated processes avoid cascading failures.

Recoverable: Failures should be easy to diagnose, debug and rectify.

Open standards: Be a reference implementation for a community-driven API.

API compatibility: Cinder strives to be API-compatible with popular systems like Amazon EC2.

Install Cinder components:

# apt-get install -y cinder-api cinder-scheduler cinder-volume iscsitarget open-iscsi iscsitarget-dkms

Configure the iSCSI services:

# sed -i 's/false/true/g' /etc/default/iscsitarget

Start the services:

# service iscsitarget start

# service open-iscsi start

Edit /etc/cinder/api-paste.ini:

[filter:authtoken]
paste.filter_factory = keystoneclient.middleware.auth_token:filter_factory
service_protocol = http
service_host = 192.168.100.51
service_port = 5000
auth_host = 10.10.10.51
auth_port = 35357
auth_protocol = http
admin_tenant_name = service
admin_user = cinder
admin_password = service_pass
signing_dir = /var/lib/cinder

Edit /etc/cinder/cinder.conf:

[DEFAULT]
rootwrap_config=/etc/cinder/rootwrap.conf
sql_connection = mysql://cinderUser:cinderPass@10.10.10.51/cinder
api_paste_config = /etc/cinder/api-paste.ini
iscsi_helper=ietadm
volume_name_template = volume-%s
volume_group = cinder-volumes
verbose = True
auth_strategy = keystone
iscsi_ip_address=10.10.10.51
rpc_backend = cinder.openstack.common.rpc.impl_kombu
rabbit_host = 10.10.10.51
rabbit_port = 5672

Then synchronize the Cinder database:

# cinder-manage db sync

Finally, create a volume group named cinder-volumes. Start by creating a 2 GB backing file and attaching it to the loop device /dev/loop2:

# dd if=/dev/zero of=cinder-volumes bs=1 count=0 seek=2G

# losetup /dev/loop2 cinder-volumes

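# fdisk /dev/loop2

At the fdisk prompts, create one primary partition of type Linux LVM by entering the following answers in order, pressing Enter to accept the default first and last sectors:

n (new partition)
p (primary partition)
1 (partition number)
t (change the partition type)
8e (Linux LVM)
w (write the partition table and exit)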

Proceed to create the physical volume then the volume group:

# pvcreate /dev/loop2

# vgcreate cinder-volumes /dev/loop2
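The dd command used to create the backing file does not write 2 GB of zeros: with count=0 it copies nothing and only seeks 2G into the output file, so the file gets its full length but, on filesystems that support sparse files, no data blocks are allocated. The same idea in Python 3 is a single truncate call, shown below as a hypothetical helper. Note that GNU dd reads the suffix G as 1024**3 bytes.

```python
# GNU dd reads the size suffix "G" as 1024**3 bytes, so seek=2G means 2 GiB.
TWO_GIB = 2 * 1024 ** 3


def create_sparse_file(path, size_bytes):
    """Create `path` with an apparent size of `size_bytes` without writing any data.

    For a file that does not exist yet, this has the same effect as
    `dd if=/dev/zero of=<path> bs=1 count=0 seek=<size>`. Opening with "wb"
    empties an existing file first, unlike dd, which keeps data before the seek offset.
    """
    with open(path, "wb") as f:
        f.truncate(size_bytes)
```

For example, `create_sparse_file("cinder-volumes", TWO_GIB)` produces a file equivalent to the one created above, ready for losetup.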

Note: This volume group becomes unavailable after a system reboot, because the loop device set up with losetup does not persist across reboots. If you do not want to perform this step again, save the machine state instead of shutting it down.

Restart the Cinder services:

# cd /etc/init.d/; for i in $( ls cinder-* ); do service $i restart; done

Verify that the Cinder services are running:

# cd /etc/init.d/; for i in $( ls cinder-* ); do service $i status; done
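Reading the status loop's output by eye is easy to get wrong when several services are listed. The Python 3 sketch below runs the same `service <name> status` command for every cinder-* script in /etc/init.d and lists the services that are not running. It assumes the Cinder services are Upstart jobs, as installed by the Ubuntu packages, so that each status line looks like "cinder-api start/running, process 1234"; the function name is hypothetical.

```python
import glob
import os
import subprocess


def not_running(status_lines):
    """Return the job names whose Upstart status is not 'start/running'."""
    down = []
    for line in status_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # empty or unrecognised output
        name, state = parts[0], parts[1].rstrip(",")
        if state != "start/running":
            down.append(name)
    return down


if __name__ == "__main__":
    lines = []
    for script in sorted(glob.glob("/etc/init.d/cinder-*")):
        job = os.path.basename(script)
        result = subprocess.run(["service", job, "status"], stdout=subprocess.PIPE,
                                stderr=subprocess.STDOUT, universal_newlines=True)
        lines.append(result.stdout.strip())
    if not lines:
        print("no cinder-* scripts found in /etc/init.d")
    else:
        print(not_running(lines) or "all cinder services are running")
```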

Horizon

Horizon is the canonical implementation of OpenStack’s dashboard, which provides a web-based user interface to OpenStack services including Nova, Swift, Keystone, etc.

To install Horizon, proceed with the following steps:

# apt-get install -y openstack-dashboard memcached

If you do not want the Ubuntu theme for the OpenStack dashboard, remove it with the following command:

# dpkg --purge openstack-dashboard-ubuntu-theme

Restart Apache and memcached:

# service apache2 restart; service memcached restart