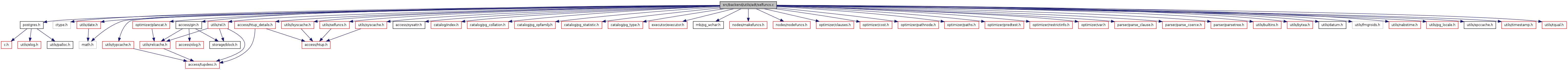

    #include "postgres.h"
    #include <ctype.h>
    #include <math.h>
    #include "access/gin.h"
    #include "access/htup_details.h"
    #include "access/sysattr.h"
    #include "catalog/index.h"
    #include "catalog/pg_collation.h"
    #include "catalog/pg_opfamily.h"
    #include "catalog/pg_statistic.h"
    #include "catalog/pg_type.h"
    #include "executor/executor.h"
    #include "mb/pg_wchar.h"
    #include "nodes/makefuncs.h"
    #include "nodes/nodeFuncs.h"
    #include "optimizer/clauses.h"
    #include "optimizer/cost.h"
    #include "optimizer/pathnode.h"
    #include "optimizer/paths.h"
    #include "optimizer/plancat.h"
    #include "optimizer/predtest.h"
    #include "optimizer/restrictinfo.h"
    #include "optimizer/var.h"
    #include "parser/parse_clause.h"
    #include "parser/parse_coerce.h"
    #include "parser/parsetree.h"
    #include "utils/builtins.h"
    #include "utils/bytea.h"
    #include "utils/date.h"
    #include "utils/datum.h"
    #include "utils/fmgroids.h"
    #include "utils/lsyscache.h"
    #include "utils/nabstime.h"
    #include "utils/pg_locale.h"
    #include "utils/rel.h"
    #include "utils/selfuncs.h"
    #include "utils/spccache.h"
    #include "utils/syscache.h"
    #include "utils/timestamp.h"
    #include "utils/tqual.h"
    #include "utils/typcache.h"


Data Structures | |

| struct | GroupVarInfo |

| struct | GenericCosts |

| struct | GinQualCounts |

Defines | |

| #define | FIXED_CHAR_SEL 0.20 |

| #define | CHAR_RANGE_SEL 0.25 |

| #define | ANY_CHAR_SEL 0.9 |

| #define | FULL_WILDCARD_SEL 5.0 |

| #define | PARTIAL_WILDCARD_SEL 2.0 |

Functions | |

| static double | var_eq_const (VariableStatData *vardata, Oid operator, Datum constval, bool constisnull, bool varonleft) |

| static double | var_eq_non_const (VariableStatData *vardata, Oid operator, Node *other, bool varonleft) |

| static double | ineq_histogram_selectivity (PlannerInfo *root, VariableStatData *vardata, FmgrInfo *opproc, bool isgt, Datum constval, Oid consttype) |

| static double | eqjoinsel_inner (Oid operator, VariableStatData *vardata1, VariableStatData *vardata2) |

| static double | eqjoinsel_semi (Oid operator, VariableStatData *vardata1, VariableStatData *vardata2, RelOptInfo *inner_rel) |

| static bool | convert_to_scalar (Datum value, Oid valuetypid, double *scaledvalue, Datum lobound, Datum hibound, Oid boundstypid, double *scaledlobound, double *scaledhibound) |

| static double | convert_numeric_to_scalar (Datum value, Oid typid) |

| static void | convert_string_to_scalar (char *value, double *scaledvalue, char *lobound, double *scaledlobound, char *hibound, double *scaledhibound) |

| static void | convert_bytea_to_scalar (Datum value, double *scaledvalue, Datum lobound, double *scaledlobound, Datum hibound, double *scaledhibound) |

| static double | convert_one_string_to_scalar (char *value, int rangelo, int rangehi) |

| static double | convert_one_bytea_to_scalar (unsigned char *value, int valuelen, int rangelo, int rangehi) |

| static char * | convert_string_datum (Datum value, Oid typid) |

| static double | convert_timevalue_to_scalar (Datum value, Oid typid) |

| static void | examine_simple_variable (PlannerInfo *root, Var *var, VariableStatData *vardata) |

| static bool | get_variable_range (PlannerInfo *root, VariableStatData *vardata, Oid sortop, Datum *min, Datum *max) |

| static bool | get_actual_variable_range (PlannerInfo *root, VariableStatData *vardata, Oid sortop, Datum *min, Datum *max) |

| static RelOptInfo * | find_join_input_rel (PlannerInfo *root, Relids relids) |

| static Selectivity | prefix_selectivity (PlannerInfo *root, VariableStatData *vardata, Oid vartype, Oid opfamily, Const *prefixcon) |

| static Selectivity | like_selectivity (const char *patt, int pattlen, bool case_insensitive) |

| static Selectivity | regex_selectivity (const char *patt, int pattlen, bool case_insensitive, int fixed_prefix_len) |

| static Datum | string_to_datum (const char *str, Oid datatype) |

| static Const * | string_to_const (const char *str, Oid datatype) |

| static Const * | string_to_bytea_const (const char *str, size_t str_len) |

| static List * | add_predicate_to_quals (IndexOptInfo *index, List *indexQuals) |

| Datum | eqsel (PG_FUNCTION_ARGS) |

| Datum | neqsel (PG_FUNCTION_ARGS) |

| static double | scalarineqsel (PlannerInfo *root, Oid operator, bool isgt, VariableStatData *vardata, Datum constval, Oid consttype) |

| double | mcv_selectivity (VariableStatData *vardata, FmgrInfo *opproc, Datum constval, bool varonleft, double *sumcommonp) |

| double | histogram_selectivity (VariableStatData *vardata, FmgrInfo *opproc, Datum constval, bool varonleft, int min_hist_size, int n_skip, int *hist_size) |

| Datum | scalarltsel (PG_FUNCTION_ARGS) |

| Datum | scalargtsel (PG_FUNCTION_ARGS) |

| static double | patternsel (PG_FUNCTION_ARGS, Pattern_Type ptype, bool negate) |

| Datum | regexeqsel (PG_FUNCTION_ARGS) |

| Datum | icregexeqsel (PG_FUNCTION_ARGS) |

| Datum | likesel (PG_FUNCTION_ARGS) |

| Datum | iclikesel (PG_FUNCTION_ARGS) |

| Datum | regexnesel (PG_FUNCTION_ARGS) |

| Datum | icregexnesel (PG_FUNCTION_ARGS) |

| Datum | nlikesel (PG_FUNCTION_ARGS) |

| Datum | icnlikesel (PG_FUNCTION_ARGS) |

| Selectivity | booltestsel (PlannerInfo *root, BoolTestType booltesttype, Node *arg, int varRelid, JoinType jointype, SpecialJoinInfo *sjinfo) |

| Selectivity | nulltestsel (PlannerInfo *root, NullTestType nulltesttype, Node *arg, int varRelid, JoinType jointype, SpecialJoinInfo *sjinfo) |

| static Node * | strip_array_coercion (Node *node) |

| Selectivity | scalararraysel (PlannerInfo *root, ScalarArrayOpExpr *clause, bool is_join_clause, int varRelid, JoinType jointype, SpecialJoinInfo *sjinfo) |

| int | estimate_array_length (Node *arrayexpr) |

| Selectivity | rowcomparesel (PlannerInfo *root, RowCompareExpr *clause, int varRelid, JoinType jointype, SpecialJoinInfo *sjinfo) |

| Datum | eqjoinsel (PG_FUNCTION_ARGS) |

| Datum | neqjoinsel (PG_FUNCTION_ARGS) |

| Datum | scalarltjoinsel (PG_FUNCTION_ARGS) |

| Datum | scalargtjoinsel (PG_FUNCTION_ARGS) |

| static double | patternjoinsel (PG_FUNCTION_ARGS, Pattern_Type ptype, bool negate) |

| Datum | regexeqjoinsel (PG_FUNCTION_ARGS) |

| Datum | icregexeqjoinsel (PG_FUNCTION_ARGS) |

| Datum | likejoinsel (PG_FUNCTION_ARGS) |

| Datum | iclikejoinsel (PG_FUNCTION_ARGS) |

| Datum | regexnejoinsel (PG_FUNCTION_ARGS) |

| Datum | icregexnejoinsel (PG_FUNCTION_ARGS) |

| Datum | nlikejoinsel (PG_FUNCTION_ARGS) |

| Datum | icnlikejoinsel (PG_FUNCTION_ARGS) |

| void | mergejoinscansel (PlannerInfo *root, Node *clause, Oid opfamily, int strategy, bool nulls_first, Selectivity *leftstart, Selectivity *leftend, Selectivity *rightstart, Selectivity *rightend) |

| static List * | add_unique_group_var (PlannerInfo *root, List *varinfos, Node *var, VariableStatData *vardata) |

| double | estimate_num_groups (PlannerInfo *root, List *groupExprs, double input_rows) |

| Selectivity | estimate_hash_bucketsize (PlannerInfo *root, Node *hashkey, double nbuckets) |

| bool | get_restriction_variable (PlannerInfo *root, List *args, int varRelid, VariableStatData *vardata, Node **other, bool *varonleft) |

| void | get_join_variables (PlannerInfo *root, List *args, SpecialJoinInfo *sjinfo, VariableStatData *vardata1, VariableStatData *vardata2, bool *join_is_reversed) |

| void | examine_variable (PlannerInfo *root, Node *node, int varRelid, VariableStatData *vardata) |

| double | get_variable_numdistinct (VariableStatData *vardata, bool *isdefault) |

| static int | pattern_char_isalpha (char c, bool is_multibyte, pg_locale_t locale, bool locale_is_c) |

| static Pattern_Prefix_Status | like_fixed_prefix (Const *patt_const, bool case_insensitive, Oid collation, Const **prefix_const, Selectivity *rest_selec) |

| static Pattern_Prefix_Status | regex_fixed_prefix (Const *patt_const, bool case_insensitive, Oid collation, Const **prefix_const, Selectivity *rest_selec) |

| Pattern_Prefix_Status | pattern_fixed_prefix (Const *patt, Pattern_Type ptype, Oid collation, Const **prefix, Selectivity *rest_selec) |

| static Selectivity | regex_selectivity_sub (const char *patt, int pattlen, bool case_insensitive) |

| static bool | byte_increment (unsigned char *ptr, int len) |

| Const * | make_greater_string (const Const *str_const, FmgrInfo *ltproc, Oid collation) |

| static void | genericcostestimate (PlannerInfo *root, IndexPath *path, double loop_count, GenericCosts *costs) |

| Datum | btcostestimate (PG_FUNCTION_ARGS) |

| Datum | hashcostestimate (PG_FUNCTION_ARGS) |

| Datum | gistcostestimate (PG_FUNCTION_ARGS) |

| Datum | spgcostestimate (PG_FUNCTION_ARGS) |

| static int | find_index_column (Node *op, IndexOptInfo *index) |

| static bool | gincost_pattern (IndexOptInfo *index, int indexcol, Oid clause_op, Datum query, GinQualCounts *counts) |

| static bool | gincost_opexpr (IndexOptInfo *index, OpExpr *clause, GinQualCounts *counts) |

| static bool | gincost_scalararrayopexpr (IndexOptInfo *index, ScalarArrayOpExpr *clause, double numIndexEntries, GinQualCounts *counts) |

| Datum | gincostestimate (PG_FUNCTION_ARGS) |

Variables | |

| get_relation_stats_hook_type | get_relation_stats_hook = NULL |

| get_index_stats_hook_type | get_index_stats_hook = NULL |

| #define ANY_CHAR_SEL 0.9 |

Definition at line 5474 of file selfuncs.c.

| #define CHAR_RANGE_SEL 0.25 |

Definition at line 5473 of file selfuncs.c.

Referenced by regex_selectivity_sub().

| #define FIXED_CHAR_SEL 0.20 |

Definition at line 5472 of file selfuncs.c.

Referenced by regex_selectivity().

| #define FULL_WILDCARD_SEL 5.0 |

Definition at line 5475 of file selfuncs.c.

| #define PARTIAL_WILDCARD_SEL 2.0 |

Definition at line 5476 of file selfuncs.c.

| static List * | add_predicate_to_quals (IndexOptInfo *index, List *indexQuals) |

Definition at line 6172 of file selfuncs.c.

References IndexOptInfo::indpred, lfirst, list_concat(), list_make1, NIL, and predicate_implied_by().

Referenced by btcostestimate(), and genericcostestimate().

    {
        List *predExtraQuals = NIL;
        ListCell *lc;

        if (index->indpred == NIL)
            return indexQuals;

        foreach(lc, index->indpred)
        {
            Node *predQual = (Node *) lfirst(lc);
            List *oneQual = list_make1(predQual);

            if (!predicate_implied_by(oneQual, indexQuals))
                predExtraQuals = list_concat(predExtraQuals, oneQual);
        }
        /* list_concat avoids modifying the passed-in indexQuals list */
        return list_concat(predExtraQuals, indexQuals);
    }
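
The filtering step in add_predicate_to_quals() can be sketched outside PostgreSQL. This is a minimal model under stated assumptions, not the planner's implementation: clauses are plain strings, and `is_implied` is a hypothetical stand-in for predicate_implied_by() that only recognizes exact textual matches. The names `is_implied` and `add_predicate_sketch` are invented for illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical stand-in for predicate_implied_by(): a predicate clause counts
 * as "implied" only if the same text already appears among the quals. */
int
is_implied(const char *pred, const char *const *quals, size_t nquals)
{
    for (size_t i = 0; i < nquals; i++)
        if (strcmp(pred, quals[i]) == 0)
            return 1;
    return 0;
}

/* Write the non-implied predicate clauses, followed by all quals, into out.
 * Returns the number of entries written; out must hold npreds + nquals. */
size_t
add_predicate_sketch(const char *const *preds, size_t npreds,
                     const char *const *quals, size_t nquals,
                     const char **out)
{
    size_t n = 0;

    assert(out != NULL);
    for (size_t i = 0; i < npreds; i++)
        if (!is_implied(preds[i], quals, nquals))
            out[n++] = preds[i];
    for (size_t i = 0; i < nquals; i++)
        out[n++] = quals[i];
    return n;
}
```

With predicate clauses `{"active", "x > 0"}` and quals `{"x > 0", "y = 5"}`, only `"active"` is added in front of the quals, because `"x > 0"` is already covered by them.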

| static List * | add_unique_group_var (PlannerInfo *root, List *varinfos, Node *var, VariableStatData *vardata) |

Definition at line 3090 of file selfuncs.c.

References equal(), exprs_known_equal(), get_variable_numdistinct(), lappend(), lfirst, list_delete_ptr(), list_head(), lnext, GroupVarInfo::ndistinct, palloc(), GroupVarInfo::rel, VariableStatData::rel, and GroupVarInfo::var.

Referenced by estimate_num_groups().

    {
        GroupVarInfo *varinfo;
        double ndistinct;
        bool isdefault;
        ListCell *lc;

        ndistinct = get_variable_numdistinct(vardata, &isdefault);

        /* cannot use foreach here because of possible list_delete */
        lc = list_head(varinfos);
        while (lc)
        {
            varinfo = (GroupVarInfo *) lfirst(lc);
            /* must advance lc before list_delete possibly pfree's it */
            lc = lnext(lc);

            /* Drop exact duplicates */
            if (equal(var, varinfo->var))
                return varinfos;

            /*
             * Drop known-equal vars, but only if they belong to different
             * relations (see comments for estimate_num_groups)
             */
            if (vardata->rel != varinfo->rel &&
                exprs_known_equal(root, var, varinfo->var))
            {
                if (varinfo->ndistinct <= ndistinct)
                {
                    /* Keep older item, forget new one */
                    return varinfos;
                }
                else
                {
                    /* Delete the older item */
                    varinfos = list_delete_ptr(varinfos, varinfo);
                }
            }
        }

        varinfo = (GroupVarInfo *) palloc(sizeof(GroupVarInfo));

        varinfo->var = var;
        varinfo->rel = vardata->rel;
        varinfo->ndistinct = ndistinct;
        varinfos = lappend(varinfos, varinfo);
        return varinfos;
    }
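
The deduplication rule in add_unique_group_var() can be illustrated with a simplified array-based model. Everything here is an assumption made for the sketch: `VarSketch` is a hypothetical stand-in for GroupVarInfo, integer ids replace equal() and exprs_known_equal(), and deletion swaps in the last element, so list order is not preserved as it would be with list_delete_ptr().

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical, simplified stand-in for GroupVarInfo. */
typedef struct
{
    int    var;        /* identifies the expression */
    int    eqclass;    /* expressions sharing this id are "known equal" */
    int    rel;        /* relation the expression belongs to */
    double ndistinct;  /* estimated number of distinct values */
} VarSketch;

/* Add cand to items[0..n) unless it is redundant; returns the new count.
 * items must have room for one more entry. */
size_t
add_unique_sketch(VarSketch *items, size_t n, VarSketch cand)
{
    size_t i = 0;

    assert(items != NULL);
    while (i < n)
    {
        if (items[i].var == cand.var)
            return n;                 /* exact duplicate: list unchanged */
        if (items[i].rel != cand.rel && items[i].eqclass == cand.eqclass)
        {
            if (items[i].ndistinct <= cand.ndistinct)
                return n;             /* keep older item, forget new one */
            items[i] = items[n - 1];  /* delete the older item */
            n--;
            continue;                 /* re-check the entry moved into slot i */
        }
        i++;
    }
    items[n] = cand;
    return n + 1;
}
```

The effect is that, among known-equal expressions from different relations, only the one with the smallest distinct-value estimate survives.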

| Selectivity | booltestsel (PlannerInfo *root, BoolTestType booltesttype, Node *arg, int varRelid, JoinType jointype, SpecialJoinInfo *sjinfo) |

Definition at line 1446 of file selfuncs.c.

References VariableStatData::atttype, VariableStatData::atttypmod, CLAMP_PROBABILITY, clause_selectivity(), DatumGetBool, elog, ERROR, examine_variable(), free_attstatsslot(), get_attstatsslot(), GETSTRUCT, HeapTupleIsValid, InvalidOid, IS_FALSE, IS_NOT_FALSE, IS_NOT_TRUE, IS_NOT_UNKNOWN, IS_TRUE, IS_UNKNOWN, NULL, ReleaseVariableStats, STATISTIC_KIND_MCV, VariableStatData::statsTuple, and values.

Referenced by clause_selectivity().

{

VariableStatData vardata;

double selec;

examine_variable(root, arg, varRelid, &vardata);

if (HeapTupleIsValid(vardata.statsTuple))

{

Form_pg_statistic stats;

double freq_null;

Datum *values;

int nvalues;

float4 *numbers;

int nnumbers;

stats = (Form_pg_statistic) GETSTRUCT(vardata.statsTuple);

freq_null = stats->stanullfrac;

if (get_attstatsslot(vardata.statsTuple,

vardata.atttype, vardata.atttypmod,

STATISTIC_KIND_MCV, InvalidOid,

NULL,

&values, &nvalues,

&numbers, &nnumbers)

&& nnumbers > 0)

{

double freq_true;

double freq_false;

/*

* Get first MCV frequency and derive frequency for true.

*/

if (DatumGetBool(values[0]))

freq_true = numbers[0];

else

freq_true = 1.0 - numbers[0] - freq_null;

/*

* Next derive frequency for false. Then use these as appropriate

* to derive frequency for each case.

*/

freq_false = 1.0 - freq_true - freq_null;

switch (booltesttype)

{

case IS_UNKNOWN:

/* select only NULL values */

selec = freq_null;

break;

case IS_NOT_UNKNOWN:

/* select non-NULL values */

selec = 1.0 - freq_null;

break;

case IS_TRUE:

/* select only TRUE values */

selec = freq_true;

break;

case IS_NOT_TRUE:

/* select non-TRUE values */

selec = 1.0 - freq_true;

break;

case IS_FALSE:

/* select only FALSE values */

selec = freq_false;

break;

case IS_NOT_FALSE:

/* select non-FALSE values */

selec = 1.0 - freq_false;

break;

default:

elog(ERROR, "unrecognized booltesttype: %d",

(int) booltesttype);

selec = 0.0; /* Keep compiler quiet */

break;

}

free_attstatsslot(vardata.atttype, values, nvalues,

numbers, nnumbers);

}

else

{

/*

* No most-common-value info available. Still have null fraction

* information, so use it for IS [NOT] UNKNOWN. Otherwise adjust

* for null fraction and assume an even split for boolean tests.

*/

switch (booltesttype)

{

case IS_UNKNOWN:

/*

* Use freq_null directly.

*/

selec = freq_null;

break;

case IS_NOT_UNKNOWN:

/*

* Select not unknown (not null) values. Calculate from

* freq_null.

*/

selec = 1.0 - freq_null;

break;

case IS_TRUE:

case IS_NOT_TRUE:

case IS_FALSE:

case IS_NOT_FALSE:

selec = (1.0 - freq_null) / 2.0;

break;

default:

elog(ERROR, "unrecognized booltesttype: %d",

(int) booltesttype);

selec = 0.0; /* Keep compiler quiet */

break;

}

}

}

else

{

/*

* If we can't get variable statistics for the argument, perhaps

* clause_selectivity can do something with it. We ignore the

* possibility of a NULL value when using clause_selectivity, and just

* assume the value is either TRUE or FALSE.

*/

switch (booltesttype)

{

case IS_UNKNOWN:

selec = DEFAULT_UNK_SEL;

break;

case IS_NOT_UNKNOWN:

selec = DEFAULT_NOT_UNK_SEL;

break;

case IS_TRUE:

case IS_NOT_FALSE:

selec = (double) clause_selectivity(root, arg,

varRelid,

jointype, sjinfo);

break;

case IS_FALSE:

case IS_NOT_TRUE:

selec = 1.0 - (double) clause_selectivity(root, arg,

varRelid,

jointype, sjinfo);

break;

default:

elog(ERROR, "unrecognized booltesttype: %d",

(int) booltesttype);

selec = 0.0; /* Keep compiler quiet */

break;

}

}

ReleaseVariableStats(vardata);

/* result should be in range, but make sure... */

CLAMP_PROBABILITY(selec);

return (Selectivity) selec;

}
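
The MCV branch of booltestsel() boils down to a small piece of arithmetic: the first most-common value and the null fraction determine the frequencies of TRUE and FALSE. The following standalone sketch reproduces only that arithmetic. The enum `BoolTestSketch` and the function `booltest_sketch` are hypothetical names, and the statistics are passed in directly instead of being read from pg_statistic.

```c
#include <assert.h>

/* Hypothetical mirror of the six boolean test kinds. */
typedef enum
{
    SK_IS_TRUE, SK_IS_NOT_TRUE, SK_IS_FALSE,
    SK_IS_NOT_FALSE, SK_IS_UNKNOWN, SK_IS_NOT_UNKNOWN
} BoolTestSketch;

/* mcv_is_true and mcv_freq describe the most common value; null_frac is the
 * fraction of NULLs. Returns the estimated selectivity clamped to [0, 1]. */
double
booltest_sketch(BoolTestSketch test, int mcv_is_true,
                double mcv_freq, double null_frac)
{
    double freq_true;
    double freq_false;
    double selec;

    assert(mcv_freq >= 0.0 && null_frac >= 0.0);

    /* Derive the TRUE frequency from whichever value is most common. */
    freq_true = mcv_is_true ? mcv_freq : 1.0 - mcv_freq - null_frac;
    /* Whatever is neither TRUE nor NULL must be FALSE. */
    freq_false = 1.0 - freq_true - null_frac;

    switch (test)
    {
        case SK_IS_UNKNOWN:     selec = null_frac;        break;
        case SK_IS_NOT_UNKNOWN: selec = 1.0 - null_frac;  break;
        case SK_IS_TRUE:        selec = freq_true;        break;
        case SK_IS_NOT_TRUE:    selec = 1.0 - freq_true;  break;
        case SK_IS_FALSE:       selec = freq_false;       break;
        case SK_IS_NOT_FALSE:   selec = 1.0 - freq_false; break;
        default:                selec = 0.0;              break;
    }

    /* Same intent as CLAMP_PROBABILITY in the original. */
    if (selec < 0.0)
        selec = 0.0;
    else if (selec > 1.0)
        selec = 1.0;
    return selec;
}
```

For example, if TRUE is the most common value with frequency 0.6 and 10% of rows are NULL, the FALSE frequency works out to roughly 0.3, so `IS NOT FALSE` is estimated at roughly 0.7.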

| Datum | btcostestimate (PG_FUNCTION_ARGS) |

Definition at line 6194 of file selfuncs.c.

References add_predicate_to_quals(), NullTest::arg, ScalarArrayOpExpr::args, Assert, BoolGetDatum, BTEqualStrategyNumber, BTLessStrategyNumber, RestrictInfo::clause, clauselist_selectivity(), cpu_operator_cost, elog, ERROR, estimate_array_length(), forboth, free_attstatsslot(), VariableStatData::freefunc, genericcostestimate(), get_attstatsslot(), get_commutator(), get_index_stats_hook, get_leftop(), get_op_opfamily_strategy(), get_opfamily_member(), get_relation_stats_hook, get_rightop(), HeapTupleIsValid, GenericCosts::indexCorrelation, IndexPath::indexinfo, IndexOptInfo::indexkeys, IndexOptInfo::indexoid, IndexPath::indexqualcols, IndexPath::indexquals, GenericCosts::indexSelectivity, GenericCosts::indexStartupCost, GenericCosts::indexTotalCost, RangeTblEntry::inh, Int16GetDatum, InvalidOid, IS_NULL, IsA, JOIN_INNER, lappend(), RowCompareExpr::largs, lfirst, lfirst_int, linitial, linitial_oid, lsecond, match_index_to_operand(), MemSet, IndexOptInfo::ncolumns, nodeTag, NULL, NullTest::nulltesttype, GenericCosts::num_sa_scans, GenericCosts::numIndexTuples, ObjectIdGetDatum, OidIsValid, IndexOptInfo::opcintype, IndexOptInfo::opfamily, ScalarArrayOpExpr::opno, RowCompareExpr::opnos, PG_GETARG_FLOAT8, PG_GETARG_POINTER, PG_RETURN_VOID, planner_rt_fetch, RowCompareExpr::rargs, IndexOptInfo::rel, ReleaseVariableStats, RangeTblEntry::relid, RelOptInfo::relid, IndexOptInfo::reverse_sort, rint(), RTE_RELATION, RangeTblEntry::rtekind, SearchSysCache3, STATISTIC_KIND_CORRELATION, STATRELATTINH, VariableStatData::statsTuple, IndexOptInfo::tree_height, IndexOptInfo::tuples, RelOptInfo::tuples, and IndexOptInfo::unique.

{

PlannerInfo *root = (PlannerInfo *) PG_GETARG_POINTER(0);

IndexPath *path = (IndexPath *) PG_GETARG_POINTER(1);

double loop_count = PG_GETARG_FLOAT8(2);

Cost *indexStartupCost = (Cost *) PG_GETARG_POINTER(3);

Cost *indexTotalCost = (Cost *) PG_GETARG_POINTER(4);

Selectivity *indexSelectivity = (Selectivity *) PG_GETARG_POINTER(5);

double *indexCorrelation = (double *) PG_GETARG_POINTER(6);

IndexOptInfo *index = path->indexinfo;

GenericCosts costs;

Oid relid;

AttrNumber colnum;

VariableStatData vardata;

double numIndexTuples;

Cost descentCost;

List *indexBoundQuals;

int indexcol;

bool eqQualHere;

bool found_saop;

bool found_is_null_op;

double num_sa_scans;

ListCell *lcc,

*lci;

/*

* For a btree scan, only leading '=' quals plus inequality quals for the

* immediately next attribute contribute to index selectivity (these are

* the "boundary quals" that determine the starting and stopping points of

* the index scan). Additional quals can suppress visits to the heap, so

* it's OK to count them in indexSelectivity, but they should not count

* for estimating numIndexTuples. So we must examine the given indexquals

* to find out which ones count as boundary quals. We rely on the

* knowledge that they are given in index column order.

*

* For a RowCompareExpr, we consider only the first column, just as

* rowcomparesel() does.

*

* If there's a ScalarArrayOpExpr in the quals, we'll actually perform N

* index scans not one, but the ScalarArrayOpExpr's operator can be

* considered to act the same as it normally does.

*/

indexBoundQuals = NIL;

indexcol = 0;

eqQualHere = false;

found_saop = false;

found_is_null_op = false;

num_sa_scans = 1;

forboth(lcc, path->indexquals, lci, path->indexqualcols)

{

RestrictInfo *rinfo = (RestrictInfo *) lfirst(lcc);

Expr *clause;

Node *leftop,

*rightop PG_USED_FOR_ASSERTS_ONLY;

Oid clause_op;

int op_strategy;

bool is_null_op = false;

if (indexcol != lfirst_int(lci))

{

/* Beginning of a new column's quals */

if (!eqQualHere)

break; /* done if no '=' qual for indexcol */

eqQualHere = false;

indexcol++;

if (indexcol != lfirst_int(lci))

break; /* no quals at all for indexcol */

}

Assert(IsA(rinfo, RestrictInfo));

clause = rinfo->clause;

if (IsA(clause, OpExpr))

{

leftop = get_leftop(clause);

rightop = get_rightop(clause);

clause_op = ((OpExpr *) clause)->opno;

}

else if (IsA(clause, RowCompareExpr))

{

RowCompareExpr *rc = (RowCompareExpr *) clause;

leftop = (Node *) linitial(rc->largs);

rightop = (Node *) linitial(rc->rargs);

clause_op = linitial_oid(rc->opnos);

}

else if (IsA(clause, ScalarArrayOpExpr))

{

ScalarArrayOpExpr *saop = (ScalarArrayOpExpr *) clause;

leftop = (Node *) linitial(saop->args);

rightop = (Node *) lsecond(saop->args);

clause_op = saop->opno;

found_saop = true;

}

else if (IsA(clause, NullTest))

{

NullTest *nt = (NullTest *) clause;

leftop = (Node *) nt->arg;

rightop = NULL;

clause_op = InvalidOid;

if (nt->nulltesttype == IS_NULL)

{

found_is_null_op = true;

is_null_op = true;

}

}

else

{

elog(ERROR, "unsupported indexqual type: %d",

(int) nodeTag(clause));

continue; /* keep compiler quiet */

}

if (match_index_to_operand(leftop, indexcol, index))

{

/* clause_op is correct */

}

else

{

Assert(match_index_to_operand(rightop, indexcol, index));

/* Must flip operator to get the opfamily member */

clause_op = get_commutator(clause_op);

}

/* check for equality operator */

if (OidIsValid(clause_op))

{

op_strategy = get_op_opfamily_strategy(clause_op,

index->opfamily[indexcol]);

Assert(op_strategy != 0); /* not a member of opfamily?? */

if (op_strategy == BTEqualStrategyNumber)

eqQualHere = true;

}

else if (is_null_op)

{

/* IS NULL is like = for purposes of selectivity determination */

eqQualHere = true;

}

/* count up number of SA scans induced by indexBoundQuals only */

if (IsA(clause, ScalarArrayOpExpr))

{

ScalarArrayOpExpr *saop = (ScalarArrayOpExpr *) clause;

int alength = estimate_array_length(lsecond(saop->args));

if (alength > 1)

num_sa_scans *= alength;

}

indexBoundQuals = lappend(indexBoundQuals, rinfo);

}

/*

* If index is unique and we found an '=' clause for each column, we can

* just assume numIndexTuples = 1 and skip the expensive

* clauselist_selectivity calculations. However, a ScalarArrayOp or

* NullTest invalidates that theory, even though it sets eqQualHere.

*/

if (index->unique &&

indexcol == index->ncolumns - 1 &&

eqQualHere &&

!found_saop &&

!found_is_null_op)

numIndexTuples = 1.0;

else

{

List *selectivityQuals;

Selectivity btreeSelectivity;

/*

* If the index is partial, AND the index predicate with the

* index-bound quals to produce a more accurate idea of the number of

* rows covered by the bound conditions.

*/

selectivityQuals = add_predicate_to_quals(index, indexBoundQuals);

btreeSelectivity = clauselist_selectivity(root, selectivityQuals,

index->rel->relid,

JOIN_INNER,

NULL);

numIndexTuples = btreeSelectivity * index->rel->tuples;

/*

* As in genericcostestimate(), we have to adjust for any

* ScalarArrayOpExpr quals included in indexBoundQuals, and then round

* to integer.

*/

numIndexTuples = rint(numIndexTuples / num_sa_scans);

}

/*

* Now do generic index cost estimation.

*/

MemSet(&costs, 0, sizeof(costs));

costs.numIndexTuples = numIndexTuples;

genericcostestimate(root, path, loop_count, &costs);

/*

* Add a CPU-cost component to represent the costs of initial btree

* descent. We don't charge any I/O cost for touching upper btree levels,

* since they tend to stay in cache, but we still have to do about log2(N)

* comparisons to descend a btree of N leaf tuples. We charge one

* cpu_operator_cost per comparison.

*

* If there are ScalarArrayOpExprs, charge this once per SA scan. The

* ones after the first one are not startup cost so far as the overall

* plan is concerned, so add them only to "total" cost.

*/

if (index->tuples > 1) /* avoid computing log(0) */

{

descentCost = ceil(log(index->tuples) / log(2.0)) * cpu_operator_cost;

costs.indexStartupCost += descentCost;

costs.indexTotalCost += costs.num_sa_scans * descentCost;

}

/*

* Even though we're not charging I/O cost for touching upper btree pages,

* it's still reasonable to charge some CPU cost per page descended

* through. Moreover, if we had no such charge at all, bloated indexes

* would appear to have the same search cost as unbloated ones, at least

* in cases where only a single leaf page is expected to be visited. This

* cost is somewhat arbitrarily set at 50x cpu_operator_cost per page

* touched. The number of such pages is btree tree height plus one (ie,

* we charge for the leaf page too). As above, charge once per SA scan.

*/

descentCost = (index->tree_height + 1) * 50.0 * cpu_operator_cost;

costs.indexStartupCost += descentCost;

costs.indexTotalCost += costs.num_sa_scans * descentCost;

/*

* If we can get an estimate of the first column's ordering correlation C

* from pg_statistic, estimate the index correlation as C for a

* single-column index, or C * 0.75 for multiple columns. (The idea here

* is that multiple columns dilute the importance of the first column's

* ordering, but don't negate it entirely. Before 8.0 we divided the

* correlation by the number of columns, but that seems too strong.)

*/

MemSet(&vardata, 0, sizeof(vardata));

if (index->indexkeys[0] != 0)

{

/* Simple variable --- look to stats for the underlying table */

RangeTblEntry *rte = planner_rt_fetch(index->rel->relid, root);

Assert(rte->rtekind == RTE_RELATION);

relid = rte->relid;

Assert(relid != InvalidOid);

colnum = index->indexkeys[0];

if (get_relation_stats_hook &&

(*get_relation_stats_hook) (root, rte, colnum, &vardata))

{

/*

* The hook took control of acquiring a stats tuple. If it did

* supply a tuple, it'd better have supplied a freefunc.

*/

if (HeapTupleIsValid(vardata.statsTuple) &&

!vardata.freefunc)

elog(ERROR, "no function provided to release variable stats with");

}

else

{

vardata.statsTuple = SearchSysCache3(STATRELATTINH,

ObjectIdGetDatum(relid),

Int16GetDatum(colnum),

BoolGetDatum(rte->inh));

vardata.freefunc = ReleaseSysCache;

}

}

else

{

/* Expression --- maybe there are stats for the index itself */

relid = index->indexoid;

colnum = 1;

if (get_index_stats_hook &&

(*get_index_stats_hook) (root, relid, colnum, &vardata))

{

/*

* The hook took control of acquiring a stats tuple. If it did

* supply a tuple, it'd better have supplied a freefunc.

*/

if (HeapTupleIsValid(vardata.statsTuple) &&

!vardata.freefunc)

elog(ERROR, "no function provided to release variable stats with");

}

else

{

vardata.statsTuple = SearchSysCache3(STATRELATTINH,

ObjectIdGetDatum(relid),

Int16GetDatum(colnum),

BoolGetDatum(false));

vardata.freefunc = ReleaseSysCache;

}

}

if (HeapTupleIsValid(vardata.statsTuple))

{

Oid sortop;

float4 *numbers;

int nnumbers;

sortop = get_opfamily_member(index->opfamily[0],

index->opcintype[0],

index->opcintype[0],

BTLessStrategyNumber);

if (OidIsValid(sortop) &&

get_attstatsslot(vardata.statsTuple, InvalidOid, 0,

STATISTIC_KIND_CORRELATION,

sortop,

NULL,

NULL, NULL,

&numbers, &nnumbers))

{

double varCorrelation;

Assert(nnumbers == 1);

varCorrelation = numbers[0];

if (index->reverse_sort[0])

varCorrelation = -varCorrelation;

if (index->ncolumns > 1)

costs.indexCorrelation = varCorrelation * 0.75;

else

costs.indexCorrelation = varCorrelation;

free_attstatsslot(InvalidOid, NULL, 0, numbers, nnumbers);

}

}

ReleaseVariableStats(vardata);

*indexStartupCost = costs.indexStartupCost;

*indexTotalCost = costs.indexTotalCost;

*indexSelectivity = costs.indexSelectivity;

*indexCorrelation = costs.indexCorrelation;

PG_RETURN_VOID();

}
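
The two descent charges that btcostestimate() adds on top of the generic estimate can be worked through in isolation. This is a sketch under assumptions, not the planner code: `SKETCH_CPU_OPERATOR_COST` is fixed at 0.0025 (the documented default of the cpu_operator_cost setting), all function names are hypothetical, and ceil(log2(N)) is computed with a doubling loop instead of the `log()` calls used in the original so that the example does not depend on libm.

```c
#include <assert.h>

/* Assumed value: the default of PostgreSQL's cpu_operator_cost setting. */
#define SKETCH_CPU_OPERATOR_COST 0.0025

/* Smallest k with 2^k >= n, i.e. ceil(log2(n)) for n >= 1. */
int
ceil_log2_sketch(double n)
{
    int    k = 0;
    double span = 1.0;

    while (span < n)
    {
        span *= 2.0;
        k++;
    }
    return k;
}

/* Descent charge for one scan: one operator cost per comparison, plus
 * 50 operator costs per page touched (tree height plus the leaf page). */
double
descent_cost_sketch(double index_tuples, int tree_height)
{
    double cost = 0.0;

    assert(tree_height >= 0);
    if (index_tuples > 1)       /* same guard as the original: avoid log(0) */
        cost += ceil_log2_sketch(index_tuples) * SKETCH_CPU_OPERATOR_COST;
    cost += (tree_height + 1) * 50.0 * SKETCH_CPU_OPERATOR_COST;
    return cost;
}

/* Contribution to total cost when the scan repeats num_sa_scans times. */
double
total_descent_cost_sketch(double index_tuples, int tree_height,
                          double num_sa_scans)
{
    return num_sa_scans * descent_cost_sketch(index_tuples, tree_height);
}
```

For an index of one million tuples with tree height 2, this gives 20 comparisons (0.05) plus three pages at 50 operator costs each (0.375), about 0.425 per scan. With a ScalarArrayOpExpr of three elements, the total-cost contribution is three times that, while the startup-cost contribution stays at one scan's worth.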

| static bool | byte_increment (unsigned char *ptr, int len) |

Definition at line 5643 of file selfuncs.c.

    {
        if (*ptr >= 255)
            return false;
        (*ptr)++;
        return true;
    }

| static void | convert_bytea_to_scalar (Datum value, double *scaledvalue, Datum lobound, double *scaledlobound, Datum hibound, double *scaledhibound) |

Definition at line 3974 of file selfuncs.c.

References convert_one_bytea_to_scalar(), DatumGetPointer, i, Min, VARDATA, and VARSIZE.

Referenced by convert_to_scalar().

    {
        int rangelo,
            rangehi,
            valuelen = VARSIZE(DatumGetPointer(value)) - VARHDRSZ,
            loboundlen = VARSIZE(DatumGetPointer(lobound)) - VARHDRSZ,
            hiboundlen = VARSIZE(DatumGetPointer(hibound)) - VARHDRSZ,
            i,
            minlen;
        unsigned char *valstr = (unsigned char *) VARDATA(DatumGetPointer(value)),
                      *lostr = (unsigned char *) VARDATA(DatumGetPointer(lobound)),
                      *histr = (unsigned char *) VARDATA(DatumGetPointer(hibound));

        /*
         * Assume bytea data is uniformly distributed across all byte values.
         */
        rangelo = 0;
        rangehi = 255;

        /*
         * Now strip any common prefix of the three strings.
         */
        minlen = Min(Min(valuelen, loboundlen), hiboundlen);
        for (i = 0; i < minlen; i++)
        {
            if (*lostr != *histr || *lostr != *valstr)
                break;
            lostr++, histr++, valstr++;
            loboundlen--, hiboundlen--, valuelen--;
        }

        /*
         * Now we can do the conversions.
         */
        *scaledvalue = convert_one_bytea_to_scalar(valstr, valuelen, rangelo, rangehi);
        *scaledlobound = convert_one_bytea_to_scalar(lostr, loboundlen, rangelo, rangehi);
        *scaledhibound = convert_one_bytea_to_scalar(histr, hiboundlen, rangelo, rangehi);
    }
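
The prefix-stripping step in convert_bytea_to_scalar() can be shown on its own. The helper below is a hypothetical sketch (the name `common_prefix_len` is not from PostgreSQL) that works on plain byte arrays instead of Datum values and returns how many leading bytes all three inputs share. Stripping those bytes before conversion leaves more of the limited precision for the bytes that actually differ.

```c
#include <assert.h>
#include <stddef.h>

/* Returns the length of the prefix shared by all three byte strings. */
size_t
common_prefix_len(const unsigned char *a, size_t alen,
                  const unsigned char *b, size_t blen,
                  const unsigned char *c, size_t clen)
{
    size_t minlen = alen < blen ? alen : blen;
    size_t i;

    assert(a != NULL && b != NULL && c != NULL);
    if (clen < minlen)
        minlen = clen;

    /* Stop at the first position where any of the three disagree. */
    for (i = 0; i < minlen; i++)
        if (a[i] != b[i] || a[i] != c[i])
            break;
    return i;
}
```

A caller would then advance each pointer by the returned count and reduce each length by the same amount before converting the remainders to scalars.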

| static double | convert_numeric_to_scalar (Datum value, Oid typid) |

Definition at line 3687 of file selfuncs.c.

References BOOLOID, DatumGetBool, DatumGetFloat4, DatumGetFloat8, DatumGetInt16, DatumGetInt32, DatumGetInt64, DatumGetObjectId, DirectFunctionCall1, elog, ERROR, FLOAT4OID, FLOAT8OID, INT2OID, INT4OID, INT8OID, numeric_float8_no_overflow(), NUMERICOID, OIDOID, REGCLASSOID, REGCONFIGOID, REGDICTIONARYOID, REGOPERATOROID, REGOPEROID, REGPROCEDUREOID, REGPROCOID, and REGTYPEOID.

Referenced by convert_to_scalar().

    {
        switch (typid)
        {
            case BOOLOID:
                return (double) DatumGetBool(value);
            case INT2OID:
                return (double) DatumGetInt16(value);
            case INT4OID:
                return (double) DatumGetInt32(value);
            case INT8OID:
                return (double) DatumGetInt64(value);
            case FLOAT4OID:
                return (double) DatumGetFloat4(value);
            case FLOAT8OID:
                return (double) DatumGetFloat8(value);
            case NUMERICOID:
                /* Note: out-of-range values will be clamped to +-HUGE_VAL */
                return (double)
                    DatumGetFloat8(DirectFunctionCall1(numeric_float8_no_overflow,
                                                       value));
            case OIDOID:
            case REGPROCOID:
            case REGPROCEDUREOID:
            case REGOPEROID:
            case REGOPERATOROID:
            case REGCLASSOID:
            case REGTYPEOID:
            case REGCONFIGOID:
            case REGDICTIONARYOID:
                /* we can treat OIDs as integers... */
                return (double) DatumGetObjectId(value);
        }

        /*
         * Can't get here unless someone tries to use scalarltsel/scalargtsel on
         * an operator with one numeric and one non-numeric operand.
         */
        elog(ERROR, "unsupported type: %u", typid);
        return 0;
    }

| static double | convert_one_bytea_to_scalar (unsigned char *value, int valuelen, int rangelo, int rangehi) |

Definition at line 4019 of file selfuncs.c.

Referenced by convert_bytea_to_scalar().

    {
        double num,
               denom,
               base;

        if (valuelen <= 0)
            return 0.0; /* empty string has scalar value 0 */

        /*
         * Since base is 256, need not consider more than about 10 chars (even
         * this many seems like overkill)
         */
        if (valuelen > 10)
            valuelen = 10;

        /* Convert initial characters to fraction */
        base = rangehi - rangelo + 1;
        num = 0.0;
        denom = base;
        while (valuelen-- > 0)
        {
            int ch = *value++;

            if (ch < rangelo)
                ch = rangelo - 1;
            else if (ch > rangehi)
                ch = rangehi + 1;
            num += ((double) (ch - rangelo)) / denom;
            denom *= base;
        }

        return num;
    }
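
The conversion in convert_one_bytea_to_scalar() treats the bytes as digits of a base-256 fraction in the range [0, 1). The sketch below is a simplified variant under one assumption: the range is fixed at 0..255, as convert_bytea_to_scalar() sets it, so the out-of-range clamping of the original never applies and is omitted. The name `bytes_to_fraction` is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Interpret up to the first 10 bytes as digits of a base-256 fraction. */
double
bytes_to_fraction(const unsigned char *value, int len)
{
    double num = 0.0;
    double denom = 256.0;

    assert(value != NULL || len <= 0);
    if (len > 10)
        len = 10;               /* later bytes are below double precision */

    while (len-- > 0)
    {
        num += (double) *value++ / denom;
        denom *= 256.0;         /* next byte is worth 1/256 as much */
    }
    return num;
}
```

A single byte 0x80 maps to 0.5, and the two bytes 0x80 0x80 map to 0.5 + 128/65536 = 0.501953125, so byte strings that compare lower also produce smaller scalars.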

| static double | convert_one_string_to_scalar (char *value, int rangelo, int rangehi) |

Definition at line 3830 of file selfuncs.c.

Referenced by convert_string_to_scalar().

{

int slen = strlen(value);

double num,

denom,

base;

if (slen <= 0)

return 0.0; /* empty string has scalar value 0 */

/*

* Since base is at least 10, need not consider more than about 20 chars

*/

if (slen > 20)

slen = 20;

/* Convert initial characters to fraction */

base = rangehi - rangelo + 1;

num = 0.0;

denom = base;

while (slen-- > 0)

{

int ch = (unsigned char) *value++;

if (ch < rangelo)

ch = rangelo - 1;

else if (ch > rangehi)

ch = rangehi + 1;

num += ((double) (ch - rangelo)) / denom;

denom *= base;

}

return num;

}
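
The base-N fraction computed by convert_one_string_to_scalar() can be tried outside PostgreSQL with a small standalone sketch. The name `string_to_fraction` is hypothetical and not part of selfuncs.c; the loop mirrors the listing above, with the range bounds passed in directly.

```c
#include <assert.h>
#include <string.h>

/*
 * Hypothetical standalone version of the conversion: each byte is
 * treated as one digit in base (rangehi - rangelo + 1), and the digits
 * are accumulated into a fraction.
 */
double
string_to_fraction(const char *value, int rangelo, int rangehi)
{
	size_t		slen = strlen(value);
	double		num = 0.0;
	double		base;
	double		denom;

	assert(rangehi >= rangelo);

	/* later digits contribute almost nothing, so cap the length */
	if (slen > 20)
		slen = 20;

	base = rangehi - rangelo + 1;
	denom = base;
	while (slen-- > 0)
	{
		int			ch = (unsigned char) *value++;

		/* bytes outside the range are pinned just beyond its ends */
		if (ch < rangelo)
			ch = rangelo - 1;
		else if (ch > rangehi)
			ch = rangehi + 1;
		num += ((double) (ch - rangelo)) / denom;
		denom *= base;
	}
	return num;
}
```

With the range 'a'..'z' the base is 26, so "a" maps to 0 and "b" to 1/26. Strings that sort earlier byte-wise map to smaller fractions, which is all the histogram interpolation needs.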

static char * convert_string_datum (Datum value, Oid typid) [static]

Definition at line 3872 of file selfuncs.c.

References Assert, BPCHAROID, CHAROID, DatumGetChar, DatumGetPointer, DEFAULT_COLLATION_OID, elog, ERROR, lc_collate_is_c(), NAMEOID, NameStr, NULL, palloc(), pfree(), pstrdup(), TextDatumGetCString, TEXTOID, val, and VARCHAROID.

Referenced by convert_to_scalar().

{

char *val;

switch (typid)

{

case CHAROID:

val = (char *) palloc(2);

val[0] = DatumGetChar(value);

val[1] = '\0';

break;

case BPCHAROID:

case VARCHAROID:

case TEXTOID:

val = TextDatumGetCString(value);

break;

case NAMEOID:

{

NameData *nm = (NameData *) DatumGetPointer(value);

val = pstrdup(NameStr(*nm));

break;

}

default:

/*

* Can't get here unless someone tries to use scalarltsel on an

* operator with one string and one non-string operand.

*/

elog(ERROR, "unsupported type: %u", typid);

return NULL;

}

if (!lc_collate_is_c(DEFAULT_COLLATION_OID))

{

char *xfrmstr;

size_t xfrmlen;

size_t xfrmlen2 PG_USED_FOR_ASSERTS_ONLY;

/*

* Note: originally we guessed at a suitable output buffer size, and

* only needed to call strxfrm twice if our guess was too small.

* However, it seems that some versions of Solaris have buggy strxfrm

* that can write past the specified buffer length in that scenario.

* So, do it the dumb way for portability.

*

* Yet other systems (e.g., glibc) sometimes return a smaller value

* from the second call than the first; thus the Assert must be <= not

* == as you'd expect. Can't any of these people program their way

* out of a paper bag?

*

* XXX: strxfrm doesn't support UTF-8 encoding on Win32, it can return

* bogus data or set an error. This is not really a problem unless it

* crashes since it will only give an estimation error and nothing

* fatal.

*/

#if _MSC_VER == 1400 /* VS.Net 2005 */

/*

*

* http://connect.microsoft.com/VisualStudio/feedback/ViewFeedback.aspx?

* FeedbackID=99694 */

{

char x[1];

xfrmlen = strxfrm(x, val, 0);

}

#else

xfrmlen = strxfrm(NULL, val, 0);

#endif

#ifdef WIN32

/*

* On Windows, strxfrm returns INT_MAX when an error occurs. Instead

* of trying to allocate this much memory (and fail), just return the

* original string unmodified as if we were in the C locale.

*/

if (xfrmlen == INT_MAX)

return val;

#endif

xfrmstr = (char *) palloc(xfrmlen + 1);

xfrmlen2 = strxfrm(xfrmstr, val, xfrmlen + 1);

Assert(xfrmlen2 <= xfrmlen);

pfree(val);

val = xfrmstr;

}

return val;

}

static void convert_string_to_scalar (char *value, double *scaledvalue, char *lobound, double *scaledlobound, char *hibound, double *scaledhibound) [static]

Definition at line 3750 of file selfuncs.c.

References convert_one_string_to_scalar().

Referenced by convert_to_scalar().

{

int rangelo,

rangehi;

char *sptr;

rangelo = rangehi = (unsigned char) hibound[0];

for (sptr = lobound; *sptr; sptr++)

{

if (rangelo > (unsigned char) *sptr)

rangelo = (unsigned char) *sptr;

if (rangehi < (unsigned char) *sptr)

rangehi = (unsigned char) *sptr;

}

for (sptr = hibound; *sptr; sptr++)

{

if (rangelo > (unsigned char) *sptr)

rangelo = (unsigned char) *sptr;

if (rangehi < (unsigned char) *sptr)

rangehi = (unsigned char) *sptr;

}

/* If range includes any upper-case ASCII chars, make it include all */

if (rangelo <= 'Z' && rangehi >= 'A')

{

if (rangelo > 'A')

rangelo = 'A';

if (rangehi < 'Z')

rangehi = 'Z';

}

/* Ditto lower-case */

if (rangelo <= 'z' && rangehi >= 'a')

{

if (rangelo > 'a')

rangelo = 'a';

if (rangehi < 'z')

rangehi = 'z';

}

/* Ditto digits */

if (rangelo <= '9' && rangehi >= '0')

{

if (rangelo > '0')

rangelo = '0';

if (rangehi < '9')

rangehi = '9';

}

/*

* If range includes less than 10 chars, assume we have not got enough

* data, and make it include regular ASCII set.

*/

if (rangehi - rangelo < 9)

{

rangelo = ' ';

rangehi = 127;

}

/*

* Now strip any common prefix of the three strings.

*/

while (*lobound)

{

if (*lobound != *hibound || *lobound != *value)

break;

lobound++, hibound++, value++;

}

/*

* Now we can do the conversions.

*/

*scaledvalue = convert_one_string_to_scalar(value, rangelo, rangehi);

*scaledlobound = convert_one_string_to_scalar(lobound, rangelo, rangehi);

*scaledhibound = convert_one_string_to_scalar(hibound, rangelo, rangehi);

}
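
The range-widening rules in convert_string_to_scalar() can be isolated into a small helper for experimentation. This is only a sketch: `widen_char_range` is a hypothetical name, and it takes an already-computed minimum and maximum byte rather than scanning the bound strings.

```c
#include <assert.h>

/*
 * Hypothetical helper: expand [*lo, *hi] using the same rules as the
 * listing above. Touching any part of a character class pulls in the
 * whole class; a very narrow range falls back to printable ASCII.
 */
void
widen_char_range(int *lo, int *hi)
{
	assert(*lo <= *hi);

	/* any upper-case ASCII letter in range: include all of 'A'..'Z' */
	if (*lo <= 'Z' && *hi >= 'A')
	{
		if (*lo > 'A')
			*lo = 'A';
		if (*hi < 'Z')
			*hi = 'Z';
	}
	/* likewise for lower-case letters */
	if (*lo <= 'z' && *hi >= 'a')
	{
		if (*lo > 'a')
			*lo = 'a';
		if (*hi < 'z')
			*hi = 'z';
	}
	/* likewise for digits */
	if (*lo <= '9' && *hi >= '0')
	{
		if (*lo > '0')
			*lo = '0';
		if (*hi < '9')
			*hi = '9';
	}
	/* fewer than 10 code points: assume too little data was seen */
	if (*hi - *lo < 9)
	{
		*lo = ' ';
		*hi = 127;
	}
}
```

For example, an observed range of 'c'..'f' widens to 'a'..'z', while '#'..'%' matches no class and falls back to ' '..127.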

static double convert_timevalue_to_scalar (Datum value, Oid typid) [static]

Definition at line 4059 of file selfuncs.c.

References abstime_timestamp(), ABSTIMEOID, TimeIntervalData::data, date2timestamp_no_overflow(), DATEOID, DatumGetDateADT, DatumGetIntervalP, DatumGetRelativeTime, DatumGetTimeADT, DatumGetTimeInterval, DatumGetTimestamp, DatumGetTimestampTz, DatumGetTimeTzADTP, Interval::day, DAYS_PER_YEAR, DirectFunctionCall1, elog, ERROR, INTERVALOID, Interval::month, MONTHS_PER_YEAR, RELTIMEOID, SECS_PER_DAY, TimeIntervalData::status, TimeTzADT::time, Interval::time, TIMEOID, TIMESTAMPOID, TIMESTAMPTZOID, TIMETZOID, TINTERVALOID, USECS_PER_DAY, and TimeTzADT::zone.

Referenced by convert_to_scalar().

{

switch (typid)

{

case TIMESTAMPOID:

return DatumGetTimestamp(value);

case TIMESTAMPTZOID:

return DatumGetTimestampTz(value);

case ABSTIMEOID:

return DatumGetTimestamp(DirectFunctionCall1(abstime_timestamp,

value));

case DATEOID:

return date2timestamp_no_overflow(DatumGetDateADT(value));

case INTERVALOID:

{

Interval *interval = DatumGetIntervalP(value);

/*

* Convert the month part of Interval to days using assumed

* average month length of 365.25/12.0 days. Not too

* accurate, but plenty good enough for our purposes.

*/

#ifdef HAVE_INT64_TIMESTAMP

return interval->time + interval->day * (double) USECS_PER_DAY +

interval->month * ((DAYS_PER_YEAR / (double) MONTHS_PER_YEAR) * USECS_PER_DAY);

#else

return interval->time + interval->day * SECS_PER_DAY +

interval->month * ((DAYS_PER_YEAR / (double) MONTHS_PER_YEAR) * (double) SECS_PER_DAY);

#endif

}

case RELTIMEOID:

#ifdef HAVE_INT64_TIMESTAMP

return (DatumGetRelativeTime(value) * 1000000.0);

#else

return DatumGetRelativeTime(value);

#endif

case TINTERVALOID:

{

TimeInterval tinterval = DatumGetTimeInterval(value);

#ifdef HAVE_INT64_TIMESTAMP

if (tinterval->status != 0)

return ((tinterval->data[1] - tinterval->data[0]) * 1000000.0);

#else

if (tinterval->status != 0)

return tinterval->data[1] - tinterval->data[0];

#endif

return 0; /* for lack of a better idea */

}

case TIMEOID:

return DatumGetTimeADT(value);

case TIMETZOID:

{

TimeTzADT *timetz = DatumGetTimeTzADTP(value);

/* use GMT-equivalent time */

#ifdef HAVE_INT64_TIMESTAMP

return (double) (timetz->time + (timetz->zone * 1000000.0));

#else

return (double) (timetz->time + timetz->zone);

#endif

}

}

/*

* Can't get here unless someone tries to use scalarltsel/scalargtsel on

* an operator with one timevalue and one non-timevalue operand.

*/

elog(ERROR, "unsupported type: %u", typid);

return 0;

}
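
The INTERVALOID arithmetic above (the HAVE_INT64_TIMESTAMP branch) can be checked with a standalone sketch. The function name `interval_to_usecs` is hypothetical, and the constant values below are assumptions chosen to match the 365.25/12.0 average month length mentioned in the comment.

```c
#include <stdint.h>

/* Assumed values, matching the constants named in the listing above */
#define DAYS_PER_YEAR	365.25
#define MONTHS_PER_YEAR 12
#define USECS_PER_DAY	INT64_C(86400000000)

/*
 * Hypothetical standalone version: flatten an interval's three fields
 * (microseconds, days, months) into one double, in microseconds.
 */
double
interval_to_usecs(int64_t time_usecs, int32_t day, int32_t month)
{
	return time_usecs + day * (double) USECS_PER_DAY +
		month * ((DAYS_PER_YEAR / (double) MONTHS_PER_YEAR) * USECS_PER_DAY);
}
```

Under these assumptions one month counts as 30.4375 days, so twelve months equal exactly 365.25 days. That is not calendar-accurate, but the result only feeds a selectivity estimate.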

static bool convert_to_scalar (Datum value, Oid valuetypid, double *scaledvalue, Datum lobound, Datum hibound, Oid boundstypid, double *scaledlobound, double *scaledhibound) [static]

Definition at line 3572 of file selfuncs.c.

References ABSTIMEOID, BOOLOID, BPCHAROID, BYTEAOID, CHAROID, CIDROID, convert_bytea_to_scalar(), convert_network_to_scalar(), convert_numeric_to_scalar(), convert_string_datum(), convert_string_to_scalar(), convert_timevalue_to_scalar(), DATEOID, FLOAT4OID, FLOAT8OID, INETOID, INT2OID, INT4OID, INT8OID, INTERVALOID, MACADDROID, NAMEOID, NUMERICOID, OIDOID, pfree(), REGCLASSOID, REGCONFIGOID, REGDICTIONARYOID, REGOPERATOROID, REGOPEROID, REGPROCEDUREOID, REGPROCOID, REGTYPEOID, RELTIMEOID, TEXTOID, TIMEOID, TIMESTAMPOID, TIMESTAMPTZOID, TIMETZOID, TINTERVALOID, and VARCHAROID.

Referenced by ineq_histogram_selectivity().

{

/*

* Both the valuetypid and the boundstypid should exactly match the

* declared input type(s) of the operator we are invoked for, so we just

* error out if either is not recognized.

*

* XXX The histogram we are interpolating between points of could belong

* to a column that's only binary-compatible with the declared type. In

* essence we are assuming that the semantics of binary-compatible types

* are enough alike that we can use a histogram generated with one type's

* operators to estimate selectivity for the other's. This is outright

* wrong in some cases --- in particular signed versus unsigned

* interpretation could trip us up. But it's useful enough in the

* majority of cases that we do it anyway. Should think about more

* rigorous ways to do it.

*/

switch (valuetypid)

{

/*

* Built-in numeric types

*/

case BOOLOID:

case INT2OID:

case INT4OID:

case INT8OID:

case FLOAT4OID:

case FLOAT8OID:

case NUMERICOID:

case OIDOID:

case REGPROCOID:

case REGPROCEDUREOID:

case REGOPEROID:

case REGOPERATOROID:

case REGCLASSOID:

case REGTYPEOID:

case REGCONFIGOID:

case REGDICTIONARYOID:

*scaledvalue = convert_numeric_to_scalar(value, valuetypid);

*scaledlobound = convert_numeric_to_scalar(lobound, boundstypid);

*scaledhibound = convert_numeric_to_scalar(hibound, boundstypid);

return true;

/*

* Built-in string types

*/

case CHAROID:

case BPCHAROID:

case VARCHAROID:

case TEXTOID:

case NAMEOID:

{

char *valstr = convert_string_datum(value, valuetypid);

char *lostr = convert_string_datum(lobound, boundstypid);

char *histr = convert_string_datum(hibound, boundstypid);

convert_string_to_scalar(valstr, scaledvalue,

lostr, scaledlobound,

histr, scaledhibound);

pfree(valstr);

pfree(lostr);

pfree(histr);

return true;

}

/*

* Built-in bytea type

*/

case BYTEAOID:

{

convert_bytea_to_scalar(value, scaledvalue,

lobound, scaledlobound,

hibound, scaledhibound);

return true;

}

/*

* Built-in time types

*/

case TIMESTAMPOID:

case TIMESTAMPTZOID:

case ABSTIMEOID:

case DATEOID:

case INTERVALOID:

case RELTIMEOID:

case TINTERVALOID:

case TIMEOID:

case TIMETZOID:

*scaledvalue = convert_timevalue_to_scalar(value, valuetypid);

*scaledlobound = convert_timevalue_to_scalar(lobound, boundstypid);

*scaledhibound = convert_timevalue_to_scalar(hibound, boundstypid);

return true;

/*

* Built-in network types

*/

case INETOID:

case CIDROID:

case MACADDROID:

*scaledvalue = convert_network_to_scalar(value, valuetypid);

*scaledlobound = convert_network_to_scalar(lobound, boundstypid);

*scaledhibound = convert_network_to_scalar(hibound, boundstypid);

return true;

}

/* Don't know how to convert */

*scaledvalue = *scaledlobound = *scaledhibound = 0;

return false;

}

Datum eqjoinsel (PG_FUNCTION_ARGS)

Definition at line 2133 of file selfuncs.c.

References CLAMP_PROBABILITY, elog, eqjoinsel_inner(), eqjoinsel_semi(), ERROR, find_join_input_rel(), get_commutator(), get_join_variables(), JOIN_ANTI, JOIN_FULL, JOIN_INNER, JOIN_LEFT, JOIN_SEMI, SpecialJoinInfo::jointype, SpecialJoinInfo::min_righthand, PG_GETARG_INT16, PG_GETARG_POINTER, PG_RETURN_FLOAT8, and ReleaseVariableStats.

Referenced by neqjoinsel().

{

PlannerInfo *root = (PlannerInfo *) PG_GETARG_POINTER(0);

Oid operator = PG_GETARG_OID(1);

List *args = (List *) PG_GETARG_POINTER(2);

#ifdef NOT_USED

JoinType jointype = (JoinType) PG_GETARG_INT16(3);

#endif

SpecialJoinInfo *sjinfo = (SpecialJoinInfo *) PG_GETARG_POINTER(4);

double selec;

VariableStatData vardata1;

VariableStatData vardata2;

bool join_is_reversed;

RelOptInfo *inner_rel;

get_join_variables(root, args, sjinfo,

&vardata1, &vardata2, &join_is_reversed);

switch (sjinfo->jointype)

{

case JOIN_INNER:

case JOIN_LEFT:

case JOIN_FULL:

selec = eqjoinsel_inner(operator, &vardata1, &vardata2);

break;

case JOIN_SEMI:

case JOIN_ANTI:

/*

* Look up the join's inner relation. min_righthand is sufficient

* information because neither SEMI nor ANTI joins permit any

* reassociation into or out of their RHS, so the righthand will

* always be exactly that set of rels.

*/

inner_rel = find_join_input_rel(root, sjinfo->min_righthand);

if (!join_is_reversed)

selec = eqjoinsel_semi(operator, &vardata1, &vardata2,

inner_rel);

else

selec = eqjoinsel_semi(get_commutator(operator),

&vardata2, &vardata1,

inner_rel);

break;

default:

/* other values not expected here */

elog(ERROR, "unrecognized join type: %d",

(int) sjinfo->jointype);

selec = 0; /* keep compiler quiet */

break;

}

ReleaseVariableStats(vardata1);

ReleaseVariableStats(vardata2);

CLAMP_PROBABILITY(selec);

PG_RETURN_FLOAT8((float8) selec);

}

static double eqjoinsel_inner (Oid operator, VariableStatData *vardata1, VariableStatData *vardata2) [static]

Definition at line 2201 of file selfuncs.c.

References VariableStatData::atttype, VariableStatData::atttypmod, CLAMP_PROBABILITY, DatumGetBool, DEFAULT_COLLATION_OID, fmgr_info(), free_attstatsslot(), FunctionCall2Coll(), get_attstatsslot(), get_opcode(), get_variable_numdistinct(), GETSTRUCT, HeapTupleIsValid, i, InvalidOid, NULL, palloc0(), pfree(), STATISTIC_KIND_MCV, and VariableStatData::statsTuple.

Referenced by eqjoinsel().

{

double selec;

double nd1;

double nd2;

bool isdefault1;

bool isdefault2;

Form_pg_statistic stats1 = NULL;

Form_pg_statistic stats2 = NULL;

bool have_mcvs1 = false;

Datum *values1 = NULL;

int nvalues1 = 0;

float4 *numbers1 = NULL;

int nnumbers1 = 0;

bool have_mcvs2 = false;

Datum *values2 = NULL;

int nvalues2 = 0;

float4 *numbers2 = NULL;

int nnumbers2 = 0;

nd1 = get_variable_numdistinct(vardata1, &isdefault1);

nd2 = get_variable_numdistinct(vardata2, &isdefault2);

if (HeapTupleIsValid(vardata1->statsTuple))

{

stats1 = (Form_pg_statistic) GETSTRUCT(vardata1->statsTuple);

have_mcvs1 = get_attstatsslot(vardata1->statsTuple,

vardata1->atttype,

vardata1->atttypmod,

STATISTIC_KIND_MCV,

InvalidOid,

NULL,

&values1, &nvalues1,

&numbers1, &nnumbers1);

}

if (HeapTupleIsValid(vardata2->statsTuple))

{

stats2 = (Form_pg_statistic) GETSTRUCT(vardata2->statsTuple);

have_mcvs2 = get_attstatsslot(vardata2->statsTuple,

vardata2->atttype,

vardata2->atttypmod,

STATISTIC_KIND_MCV,

InvalidOid,

NULL,

&values2, &nvalues2,

&numbers2, &nnumbers2);

}

if (have_mcvs1 && have_mcvs2)

{

/*

* We have most-common-value lists for both relations. Run through

* the lists to see which MCVs actually join to each other with the

* given operator. This allows us to determine the exact join

* selectivity for the portion of the relations represented by the MCV

* lists. We still have to estimate for the remaining population, but

* in a skewed distribution this gives us a big leg up in accuracy.

* For motivation see the analysis in Y. Ioannidis and S.

* Christodoulakis, "On the propagation of errors in the size of join

* results", Technical Report 1018, Computer Science Dept., University

* of Wisconsin, Madison, March 1991 (available from ftp.cs.wisc.edu).

*/

FmgrInfo eqproc;

bool *hasmatch1;

bool *hasmatch2;

double nullfrac1 = stats1->stanullfrac;

double nullfrac2 = stats2->stanullfrac;

double matchprodfreq,

matchfreq1,

matchfreq2,

unmatchfreq1,

unmatchfreq2,

otherfreq1,

otherfreq2,

totalsel1,

totalsel2;

int i,

nmatches;

fmgr_info(get_opcode(operator), &eqproc);

hasmatch1 = (bool *) palloc0(nvalues1 * sizeof(bool));

hasmatch2 = (bool *) palloc0(nvalues2 * sizeof(bool));

/*

* Note we assume that each MCV will match at most one member of the

* other MCV list. If the operator isn't really equality, there could

* be multiple matches --- but we don't look for them, both for speed

* and because the math wouldn't add up...

*/

matchprodfreq = 0.0;

nmatches = 0;

for (i = 0; i < nvalues1; i++)

{

int j;

for (j = 0; j < nvalues2; j++)

{

if (hasmatch2[j])

continue;

if (DatumGetBool(FunctionCall2Coll(&eqproc,

DEFAULT_COLLATION_OID,

values1[i],

values2[j])))

{

hasmatch1[i] = hasmatch2[j] = true;

matchprodfreq += numbers1[i] * numbers2[j];

nmatches++;

break;

}

}

}

CLAMP_PROBABILITY(matchprodfreq);

/* Sum up frequencies of matched and unmatched MCVs */

matchfreq1 = unmatchfreq1 = 0.0;

for (i = 0; i < nvalues1; i++)

{

if (hasmatch1[i])

matchfreq1 += numbers1[i];

else

unmatchfreq1 += numbers1[i];

}

CLAMP_PROBABILITY(matchfreq1);

CLAMP_PROBABILITY(unmatchfreq1);

matchfreq2 = unmatchfreq2 = 0.0;

for (i = 0; i < nvalues2; i++)

{

if (hasmatch2[i])

matchfreq2 += numbers2[i];

else

unmatchfreq2 += numbers2[i];

}

CLAMP_PROBABILITY(matchfreq2);

CLAMP_PROBABILITY(unmatchfreq2);

pfree(hasmatch1);

pfree(hasmatch2);

/*

* Compute total frequency of non-null values that are not in the MCV

* lists.

*/

otherfreq1 = 1.0 - nullfrac1 - matchfreq1 - unmatchfreq1;

otherfreq2 = 1.0 - nullfrac2 - matchfreq2 - unmatchfreq2;

CLAMP_PROBABILITY(otherfreq1);

CLAMP_PROBABILITY(otherfreq2);

/*

* We can estimate the total selectivity from the point of view of

* relation 1 as: the known selectivity for matched MCVs, plus

* unmatched MCVs that are assumed to match against random members of

* relation 2's non-MCV population, plus non-MCV values that are

* assumed to match against random members of relation 2's unmatched

* MCVs plus non-MCV values.

*/

totalsel1 = matchprodfreq;

if (nd2 > nvalues2)

totalsel1 += unmatchfreq1 * otherfreq2 / (nd2 - nvalues2);

if (nd2 > nmatches)

totalsel1 += otherfreq1 * (otherfreq2 + unmatchfreq2) /

(nd2 - nmatches);

/* Same estimate from the point of view of relation 2. */

totalsel2 = matchprodfreq;

if (nd1 > nvalues1)

totalsel2 += unmatchfreq2 * otherfreq1 / (nd1 - nvalues1);

if (nd1 > nmatches)

totalsel2 += otherfreq2 * (otherfreq1 + unmatchfreq1) /

(nd1 - nmatches);

/*

* Use the smaller of the two estimates. This can be justified in

* essentially the same terms as given below for the no-stats case: to

* a first approximation, we are estimating from the point of view of

* the relation with smaller nd.

*/

selec = (totalsel1 < totalsel2) ? totalsel1 : totalsel2;

}

else

{

/*

* We do not have MCV lists for both sides. Estimate the join

* selectivity as MIN(1/nd1,1/nd2)*(1-nullfrac1)*(1-nullfrac2). This

* is plausible if we assume that the join operator is strict and the

* non-null values are about equally distributed: a given non-null

* tuple of rel1 will join to either zero or N2*(1-nullfrac2)/nd2 rows

* of rel2, so total join rows are at most

* N1*(1-nullfrac1)*N2*(1-nullfrac2)/nd2 giving a join selectivity of

* not more than (1-nullfrac1)*(1-nullfrac2)/nd2. By the same logic it

* is not more than (1-nullfrac1)*(1-nullfrac2)/nd1, so the expression

* with MIN() is an upper bound. Using the MIN() means we estimate

* from the point of view of the relation with smaller nd (since the

* larger nd is determining the MIN). It is reasonable to assume that

* most tuples in this rel will have join partners, so the bound is

* probably reasonably tight and should be taken as-is.

*

* XXX Can we be smarter if we have an MCV list for just one side? It

* seems that if we assume equal distribution for the other side, we

* end up with the same answer anyway.

*/

double nullfrac1 = stats1 ? stats1->stanullfrac : 0.0;

double nullfrac2 = stats2 ? stats2->stanullfrac : 0.0;

selec = (1.0 - nullfrac1) * (1.0 - nullfrac2);

if (nd1 > nd2)

selec /= nd1;

else

selec /= nd2;

}

if (have_mcvs1)

free_attstatsslot(vardata1->atttype, values1, nvalues1,

numbers1, nnumbers1);

if (have_mcvs2)

free_attstatsslot(vardata2->atttype, values2, nvalues2,

numbers2, nnumbers2);

return selec;

}
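
The arithmetic of eqjoinsel_inner() is easier to follow on plain arrays. The sketch below is not the PostgreSQL code: `ColStats`, `mcv_join_selectivity` and `uniform_join_selectivity` are hypothetical names, MCVs are plain ints compared with `==` instead of through the operator's function, and only the final frequencies are clamped.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdlib.h>

/* Hypothetical container for one column's statistics */
typedef struct
{
	const int  *mcv_values;		/* most common values */
	const double *mcv_freqs;	/* their frequencies */
	int			nmcv;
	double		nullfrac;
	double		ndistinct;
} ColStats;

static double
clamp01(double p)
{
	return p < 0.0 ? 0.0 : (p > 1.0 ? 1.0 : p);
}

/* Case 1: both sides have MCV lists */
double
mcv_join_selectivity(const ColStats *a, const ColStats *b)
{
	bool	   *hit_a = calloc((size_t) a->nmcv + 1, sizeof(bool));
	bool	   *hit_b = calloc((size_t) b->nmcv + 1, sizeof(bool));
	double		matchprod = 0.0;
	double		match_a = 0.0, unmatch_a = 0.0;
	double		match_b = 0.0, unmatch_b = 0.0;
	double		other_a, other_b, sel_a, sel_b;
	int			i, j, nmatches = 0;

	assert(hit_a != NULL && hit_b != NULL);

	/* pair each MCV with at most one partner on the other side */
	for (i = 0; i < a->nmcv; i++)
		for (j = 0; j < b->nmcv; j++)
		{
			if (hit_b[j] || a->mcv_values[i] != b->mcv_values[j])
				continue;
			hit_a[i] = hit_b[j] = true;
			matchprod += a->mcv_freqs[i] * b->mcv_freqs[j];
			nmatches++;
			break;
		}

	/* split each MCV list into matched and unmatched frequency mass */
	for (i = 0; i < a->nmcv; i++)
	{
		if (hit_a[i])
			match_a += a->mcv_freqs[i];
		else
			unmatch_a += a->mcv_freqs[i];
	}
	for (i = 0; i < b->nmcv; i++)
	{
		if (hit_b[i])
			match_b += b->mcv_freqs[i];
		else
			unmatch_b += b->mcv_freqs[i];
	}
	free(hit_a);
	free(hit_b);

	/* non-null rows that are not covered by the MCV lists */
	other_a = clamp01(1.0 - a->nullfrac - match_a - unmatch_a);
	other_b = clamp01(1.0 - b->nullfrac - match_b - unmatch_b);

	/* estimate from side a's point of view, then from side b's */
	sel_a = matchprod;
	if (b->ndistinct > b->nmcv)
		sel_a += unmatch_a * other_b / (b->ndistinct - b->nmcv);
	if (b->ndistinct > nmatches)
		sel_a += other_a * (other_b + unmatch_b) / (b->ndistinct - nmatches);

	sel_b = matchprod;
	if (a->ndistinct > a->nmcv)
		sel_b += unmatch_b * other_a / (a->ndistinct - a->nmcv);
	if (a->ndistinct > nmatches)
		sel_b += other_b * (other_a + unmatch_a) / (a->ndistinct - nmatches);

	return sel_a < sel_b ? sel_a : sel_b;
}

/* Case 2: no MCV lists for both sides, i.e. the MIN(1/nd1, 1/nd2) bound */
double
uniform_join_selectivity(double nullfrac1, double nullfrac2,
						 double nd1, double nd2)
{
	return (1.0 - nullfrac1) * (1.0 - nullfrac2) / (nd1 > nd2 ? nd1 : nd2);
}
```

As a worked case, take side a with MCVs {1: 0.5, 2: 0.3} and 4 distinct values, and side b with MCVs {1: 0.4, 3: 0.2} and 5 distinct values, no nulls on either side. Only the value 1 matches, giving a matched product of 0.2. The estimate from side a is 0.2 + 0.04 + 0.03 = 0.27, the estimate from side b is about 0.287, and the smaller one is returned.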

static double eqjoinsel_semi (Oid operator, VariableStatData *vardata1, VariableStatData *vardata2, RelOptInfo *inner_rel) [static]

Definition at line 2427 of file selfuncs.c.

References VariableStatData::atttype, VariableStatData::atttypmod, CLAMP_PROBABILITY, DatumGetBool, DEFAULT_COLLATION_OID, fmgr_info(), free_attstatsslot(), FunctionCall2Coll(), get_attstatsslot(), get_opcode(), get_variable_numdistinct(), GETSTRUCT, HeapTupleIsValid, i, InvalidOid, Min, NULL, OidIsValid, palloc0(), pfree(), VariableStatData::rel, RelOptInfo::rows, STATISTIC_KIND_MCV, and VariableStatData::statsTuple.

Referenced by eqjoinsel().

{

double selec;

double nd1;

double nd2;

bool isdefault1;

bool isdefault2;

Form_pg_statistic stats1 = NULL;

bool have_mcvs1 = false;

Datum *values1 = NULL;

int nvalues1 = 0;

float4 *numbers1 = NULL;

int nnumbers1 = 0;

bool have_mcvs2 = false;

Datum *values2 = NULL;

int nvalues2 = 0;

float4 *numbers2 = NULL;

int nnumbers2 = 0;

nd1 = get_variable_numdistinct(vardata1, &isdefault1);

nd2 = get_variable_numdistinct(vardata2, &isdefault2);

/*

* We clamp nd2 to be not more than what we estimate the inner relation's

* size to be. This is intuitively somewhat reasonable since obviously

* there can't be more than that many distinct values coming from the

* inner rel. The reason for the asymmetry (ie, that we don't clamp nd1

* likewise) is that this is the only pathway by which restriction clauses

* applied to the inner rel will affect the join result size estimate,

* since set_joinrel_size_estimates will multiply SEMI/ANTI selectivity by

* only the outer rel's size. If we clamped nd1 we'd be double-counting

* the selectivity of outer-rel restrictions.

*

* We can apply this clamping both with respect to the base relation from

* which the join variable comes (if there is just one), and to the

* immediate inner input relation of the current join.

*/

if (vardata2->rel)

nd2 = Min(nd2, vardata2->rel->rows);

nd2 = Min(nd2, inner_rel->rows);

if (HeapTupleIsValid(vardata1->statsTuple))

{

stats1 = (Form_pg_statistic) GETSTRUCT(vardata1->statsTuple);

have_mcvs1 = get_attstatsslot(vardata1->statsTuple,

vardata1->atttype,

vardata1->atttypmod,

STATISTIC_KIND_MCV,

InvalidOid,

NULL,

&values1, &nvalues1,

&numbers1, &nnumbers1);

}

if (HeapTupleIsValid(vardata2->statsTuple))

{

have_mcvs2 = get_attstatsslot(vardata2->statsTuple,

vardata2->atttype,

vardata2->atttypmod,

STATISTIC_KIND_MCV,

InvalidOid,

NULL,

&values2, &nvalues2,

&numbers2, &nnumbers2);

}

if (have_mcvs1 && have_mcvs2 && OidIsValid(operator))

{

/*

* We have most-common-value lists for both relations. Run through

* the lists to see which MCVs actually join to each other with the

* given operator. This allows us to determine the exact join

* selectivity for the portion of the relations represented by the MCV

* lists. We still have to estimate for the remaining population, but

* in a skewed distribution this gives us a big leg up in accuracy.

*/

FmgrInfo eqproc;

bool *hasmatch1;

bool *hasmatch2;

double nullfrac1 = stats1->stanullfrac;

double matchfreq1,

uncertainfrac,

uncertain;

int i,

nmatches,

clamped_nvalues2;

/*

* The clamping above could have resulted in nd2 being less than

* nvalues2; in which case, we assume that precisely the nd2 most

* common values in the relation will appear in the join input, and so

* compare to only the first nd2 members of the MCV list. Of course

* this is frequently wrong, but it's the best bet we can make.

*/

clamped_nvalues2 = Min(nvalues2, nd2);

fmgr_info(get_opcode(operator), &eqproc);

hasmatch1 = (bool *) palloc0(nvalues1 * sizeof(bool));

hasmatch2 = (bool *) palloc0(clamped_nvalues2 * sizeof(bool));

/*

* Note we assume that each MCV will match at most one member of the

* other MCV list. If the operator isn't really equality, there could

* be multiple matches --- but we don't look for them, both for speed

* and because the math wouldn't add up...

*/

nmatches = 0;

for (i = 0; i < nvalues1; i++)

{

int j;

for (j = 0; j < clamped_nvalues2; j++)

{

if (hasmatch2[j])

continue;

if (DatumGetBool(FunctionCall2Coll(&eqproc,

DEFAULT_COLLATION_OID,

values1[i],

values2[j])))

{

hasmatch1[i] = hasmatch2[j] = true;

nmatches++;

break;

}

}

}

/* Sum up frequencies of matched MCVs */

matchfreq1 = 0.0;

for (i = 0; i < nvalues1; i++)

{

if (hasmatch1[i])

matchfreq1 += numbers1[i];

}

CLAMP_PROBABILITY(matchfreq1);

pfree(hasmatch1);

pfree(hasmatch2);

/*

* Now we need to estimate the fraction of relation 1 that has at

* least one join partner. We know for certain that the matched MCVs

* do, so that gives us a lower bound, but we're really in the dark

* about everything else. Our crude approach is: if nd1 <= nd2 then

* assume all non-null rel1 rows have join partners, else assume for

* the uncertain rows that a fraction nd2/nd1 have join partners. We

* can discount the known-matched MCVs from the distinct-values counts

* before doing the division.

*

* Crude as the above is, it's completely useless if we don't have

* reliable ndistinct values for both sides. Hence, if either nd1 or

* nd2 is default, punt and assume half of the uncertain rows have

* join partners.

*/

if (!isdefault1 && !isdefault2)

{

nd1 -= nmatches;

nd2 -= nmatches;

if (nd1 <= nd2 || nd2 < 0)

uncertainfrac = 1.0;

else

uncertainfrac = nd2 / nd1;

}

else

uncertainfrac = 0.5;

uncertain = 1.0 - matchfreq1 - nullfrac1;

CLAMP_PROBABILITY(uncertain);

selec = matchfreq1 + uncertainfrac * uncertain;

}

else

{

/*

* Without MCV lists for both sides, we can only use the heuristic

* about nd1 vs nd2.

*/

double nullfrac1 = stats1 ? stats1->stanullfrac : 0.0;

if (!isdefault1 && !isdefault2)

{

if (nd1 <= nd2 || nd2 < 0)

selec = 1.0 - nullfrac1;

else

selec = (nd2 / nd1) * (1.0 - nullfrac1);

}

else

selec = 0.5 * (1.0 - nullfrac1);

}

if (have_mcvs1)

free_attstatsslot(vardata1->atttype, values1, nvalues1,

numbers1, nnumbers1);

if (have_mcvs2)

free_attstatsslot(vardata2->atttype, values2, nvalues2,

numbers2, nnumbers2);

return selec;

}
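
The final step of the MCV branch in eqjoinsel_semi() reduces to a few lines of arithmetic. The sketch below assumes the matched-MCV frequency and match count have already been computed; `semi_join_selectivity` is a hypothetical name, and a single flag stands in for the two isdefault variables.

```c
#include <stdbool.h>

/*
 * Hypothetical standalone version: fraction of outer rows expected to
 * have at least one join partner. nd_is_default is true when either
 * distinct-value count is only a default guess.
 */
double
semi_join_selectivity(double matchfreq1, double nullfrac1,
					  double nd1, double nd2, int nmatches,
					  bool nd_is_default)
{
	double		uncertainfrac;
	double		uncertain;

	if (!nd_is_default)
	{
		/* matched MCVs are already accounted for on both sides */
		nd1 -= nmatches;
		nd2 -= nmatches;
		if (nd1 <= nd2 || nd2 < 0)
			uncertainfrac = 1.0;
		else
			uncertainfrac = nd2 / nd1;
	}
	else
		uncertainfrac = 0.5;	/* no reliable ndistinct: guess half */

	/* rows that are neither null nor a matched MCV */
	uncertain = 1.0 - matchfreq1 - nullfrac1;
	if (uncertain < 0.0)
		uncertain = 0.0;
	else if (uncertain > 1.0)
		uncertain = 1.0;

	return matchfreq1 + uncertainfrac * uncertain;
}
```

With matchfreq1 = 0.5, nullfrac1 = 0.1, nd1 = 100, nd2 = 40 and 10 matches, the uncertain rows make up 0.4 of the outer relation and a fraction 30/90 of them is assumed to match, so the estimate is about 0.633.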

Datum eqsel (PG_FUNCTION_ARGS)

Definition at line 216 of file selfuncs.c.

References DEFAULT_EQ_SEL, get_restriction_variable(), IsA, PG_GETARG_INT32, PG_GETARG_POINTER, PG_RETURN_FLOAT8, ReleaseVariableStats, var_eq_const(), and var_eq_non_const().

Referenced by neqsel().

{

PlannerInfo *root = (PlannerInfo *) PG_GETARG_POINTER(0);

Oid operator = PG_GETARG_OID(1);

List *args = (List *) PG_GETARG_POINTER(2);

int varRelid = PG_GETARG_INT32(3);

VariableStatData vardata;

Node *other;

bool varonleft;

double selec;

/*

* If expression is not variable = something or something = variable, then

* punt and return a default estimate.

*/

if (!get_restriction_variable(root, args, varRelid,

&vardata, &other, &varonleft))

PG_RETURN_FLOAT8(DEFAULT_EQ_SEL);

/*

* We can do a lot better if the something is a constant. (Note: the

* Const might result from estimation rather than being a simple constant

* in the query.)

*/

if (IsA(other, Const))

selec = var_eq_const(&vardata, operator,

((Const *) other)->constvalue,

((Const *) other)->constisnull,

varonleft);

else

selec = var_eq_non_const(&vardata, operator, other,

varonleft);

ReleaseVariableStats(vardata);

PG_RETURN_FLOAT8((float8) selec);

}

int estimate_array_length (Node *arrayexpr)

Definition at line 2028 of file selfuncs.c.

References ARR_DIMS, ARR_NDIM, ArrayGetNItems(), DatumGetArrayTypeP, IsA, list_length(), and strip_array_coercion().

Referenced by btcostestimate(), cost_qual_eval_walker(), cost_tidscan(), genericcostestimate(), and gincost_scalararrayopexpr().

{

/* look through any binary-compatible relabeling of arrayexpr */

arrayexpr = strip_array_coercion(arrayexpr);

if (arrayexpr && IsA(arrayexpr, Const))

{

Datum arraydatum = ((Const *) arrayexpr)->constvalue;

bool arrayisnull = ((Const *) arrayexpr)->constisnull;

ArrayType *arrayval;

if (arrayisnull)

return 0;

arrayval = DatumGetArrayTypeP(arraydatum);

return ArrayGetNItems(ARR_NDIM(arrayval), ARR_DIMS(arrayval));

}

else if (arrayexpr && IsA(arrayexpr, ArrayExpr) &&

!((ArrayExpr *) arrayexpr)->multidims)

{

return list_length(((ArrayExpr *) arrayexpr)->elements);

}

else

{

/* default guess --- see also scalararraysel */

return 10;

}

}

Selectivity estimate_hash_bucketsize (PlannerInfo *root, Node *hashkey, double nbuckets)

Definition at line 3430 of file selfuncs.c.

References VariableStatData::atttype, VariableStatData::atttypmod, examine_variable(), free_attstatsslot(), get_attstatsslot(), get_variable_numdistinct(), GETSTRUCT, HeapTupleIsValid, InvalidOid, NULL, VariableStatData::rel, ReleaseVariableStats, RelOptInfo::rows, STATISTIC_KIND_MCV, VariableStatData::statsTuple, and RelOptInfo::tuples.

Referenced by final_cost_hashjoin().

{

VariableStatData vardata;

double estfract,

ndistinct,

stanullfrac,

mcvfreq,

avgfreq;

bool isdefault;

float4 *numbers;

int nnumbers;

examine_variable(root, hashkey, 0, &vardata);

/* Get number of distinct values */

ndistinct = get_variable_numdistinct(&vardata, &isdefault);

/* If ndistinct isn't real, punt and return 0.1, per comments above */

if (isdefault)

{

ReleaseVariableStats(vardata);

return (Selectivity) 0.1;

}

/* Get fraction that are null */

if (HeapTupleIsValid(vardata.statsTuple))

{

Form_pg_statistic stats;

stats = (Form_pg_statistic) GETSTRUCT(vardata.statsTuple);

stanullfrac = stats->stanullfrac;

}

else

stanullfrac = 0.0;

/* Compute avg freq of all distinct data values in raw relation */

avgfreq = (1.0 - stanullfrac) / ndistinct;

/*

* Adjust ndistinct to account for restriction clauses. Observe we are

* assuming that the data distribution is affected uniformly by the

* restriction clauses!

*

* XXX Possibly better way, but much more expensive: multiply by

* selectivity of rel's restriction clauses that mention the target Var.

*/

if (vardata.rel)

ndistinct *= vardata.rel->rows / vardata.rel->tuples;

/*

* Initial estimate of bucketsize fraction is 1/nbuckets as long as the

* number of buckets is less than the expected number of distinct values;

* otherwise it is 1/ndistinct.

*/

if (ndistinct > nbuckets)

estfract = 1.0 / nbuckets;

else

estfract = 1.0 / ndistinct;

/*

* Look up the frequency of the most common value, if available.

*/

mcvfreq = 0.0;

if (HeapTupleIsValid(vardata.statsTuple))

{

if (get_attstatsslot(vardata.statsTuple,

vardata.atttype, vardata.atttypmod,

STATISTIC_KIND_MCV, InvalidOid,

NULL,

NULL, NULL,

&numbers, &nnumbers))

{

/*

* The first MCV stat is for the most common value.

*/

if (nnumbers > 0)

mcvfreq = numbers[0];

free_attstatsslot(vardata.atttype, NULL, 0,

numbers, nnumbers);

}

}

/*

* Adjust estimated bucketsize upward to account for skewed distribution.

*/

if (avgfreq > 0.0 && mcvfreq > avgfreq)

estfract *= mcvfreq / avgfreq;

/*

* Clamp bucketsize to sane range (the above adjustment could easily

* produce an out-of-range result). We set the lower bound a little above

* zero, since zero isn't a very sane result.

*/

if (estfract < 1.0e-6)

estfract = 1.0e-6;

else if (estfract > 1.0)

estfract = 1.0;

ReleaseVariableStats(vardata);

return (Selectivity) estfract;

}
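
The bucket-size arithmetic of estimate_hash_bucketsize() can be sketched with the statistics passed in as plain numbers. `bucket_fraction` is a hypothetical name; the catalog lookups and the early return of 0.1 for a default ndistinct are left out.

```c
#include <assert.h>

/*
 * Hypothetical standalone version: estimated fraction of the inner
 * relation that lands in the fullest hash bucket.
 */
double
bucket_fraction(double ndistinct, double nbuckets, double nullfrac,
				double mcvfreq, double rows, double tuples)
{
	double		avgfreq;
	double		estfract;

	assert(ndistinct > 0.0 && nbuckets > 0.0 && tuples > 0.0);

	/* average frequency of a distinct value in the raw relation */
	avgfreq = (1.0 - nullfrac) / ndistinct;

	/* scale ndistinct by the fraction of rows surviving restrictions */
	ndistinct *= rows / tuples;

	/* 1/nbuckets if values outnumber buckets, else 1/ndistinct */
	if (ndistinct > nbuckets)
		estfract = 1.0 / nbuckets;
	else
		estfract = 1.0 / ndistinct;

	/* a skewed distribution makes the fullest bucket bigger */
	if (avgfreq > 0.0 && mcvfreq > avgfreq)
		estfract *= mcvfreq / avgfreq;

	/* clamp to a sane range, keeping the result above zero */
	if (estfract < 1.0e-6)
		estfract = 1.0e-6;
	else if (estfract > 1.0)
		estfract = 1.0;

	return estfract;
}
```

For 1000 distinct values, 100 buckets and a most common value with frequency 0.01, the average frequency is 0.001, so the initial 1/100 estimate is multiplied by 10 to give roughly 0.1.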

double estimate_num_groups (PlannerInfo *root, List *groupExprs, double input_rows)

Definition at line 3206 of file selfuncs.c.

References add_unique_group_var(), Assert, contain_volatile_functions(), examine_variable(), exprType(), for_each_cell, HeapTupleIsValid, VariableStatData::isunique, lcons(), lfirst, linitial, list_head(), lnext, GroupVarInfo::ndistinct, NIL, pull_var_clause(), PVC_RECURSE_AGGREGATES, PVC_RECURSE_PLACEHOLDERS, GroupVarInfo::rel, ReleaseVariableStats, RELOPT_BASEREL, RelOptInfo::reloptkind, RelOptInfo::rows, VariableStatData::statsTuple, and RelOptInfo::tuples.

Referenced by create_unique_path(), query_planner(), and recurse_set_operations().

    {
        List *varinfos = NIL;
        double numdistinct;
        ListCell *l;

        /*
         * If no grouping columns, there's exactly one group. (This can't happen
         * for normal cases with GROUP BY or DISTINCT, but it is possible for
         * corner cases with set operations.)
         */
        if (groupExprs == NIL)
            return 1.0;

        /*
         * Count groups derived from boolean grouping expressions. For other
         * expressions, find the unique Vars used, treating an expression as a Var
         * if we can find stats for it. For each one, record the statistical
         * estimate of number of distinct values (total in its table, without
         * regard for filtering).
         */
        numdistinct = 1.0;

        foreach(l, groupExprs)
        {
            Node *groupexpr = (Node *) lfirst(l);
            VariableStatData vardata;
            List *varshere;
            ListCell *l2;

            /* Short-circuit for expressions returning boolean */
            if (exprType(groupexpr) == BOOLOID)
            {
                numdistinct *= 2.0;
                continue;
            }

            /*
             * If examine_variable is able to deduce anything about the GROUP BY
             * expression, treat it as a single variable even if it's really more
             * complicated.
             */
            examine_variable(root, groupexpr, 0, &vardata);
            if (HeapTupleIsValid(vardata.statsTuple) || vardata.isunique)
            {
                varinfos = add_unique_group_var(root, varinfos,
                                                groupexpr, &vardata);
                ReleaseVariableStats(vardata);
                continue;
            }
            ReleaseVariableStats(vardata);

            /*
             * Else pull out the component Vars. Handle PlaceHolderVars by
             * recursing into their arguments (effectively assuming that the
             * PlaceHolderVar doesn't change the number of groups, which boils
             * down to ignoring the possible addition of nulls to the result set).
             */
            varshere = pull_var_clause(groupexpr,
                                       PVC_RECURSE_AGGREGATES,
                                       PVC_RECURSE_PLACEHOLDERS);

            /*
             * If we find any variable-free GROUP BY item, then either it is a
             * constant (and we can ignore it) or it contains a volatile function;
             * in the latter case we punt and assume that each input row will
             * yield a distinct group.
             */
            if (varshere == NIL)
            {
                if (contain_volatile_functions(groupexpr))
                    return input_rows;
                continue;
            }

            /*
             * Else add variables to varinfos list
             */
            foreach(l2, varshere)
            {
                Node *var = (Node *) lfirst(l2);

                examine_variable(root, var, 0, &vardata);
                varinfos = add_unique_group_var(root, varinfos, var, &vardata);
                ReleaseVariableStats(vardata);
            }
        }

        /*
         * If now no Vars, we must have an all-constant or all-boolean GROUP BY
         * list.
         */
        if (varinfos == NIL)
        {
            /* Guard against out-of-range answers */
            if (numdistinct > input_rows)
                numdistinct = input_rows;
            return numdistinct;
        }

        /*
         * Group Vars by relation and estimate total numdistinct.
         *
         * For each iteration of the outer loop, we process the frontmost Var in
         * varinfos, plus all other Vars in the same relation. We remove these
         * Vars from the newvarinfos list for the next iteration. This is the
         * easiest way to group Vars of same rel together.
         */
        do
        {
            GroupVarInfo *varinfo1 = (GroupVarInfo *) linitial(varinfos);
            RelOptInfo *rel = varinfo1->rel;
            double reldistinct = varinfo1->ndistinct;
            double relmaxndistinct = reldistinct;
            int relvarcount = 1;
            List *newvarinfos = NIL;

            /*
             * Get the product of numdistinct estimates of the Vars for this rel.
             * Also, construct new varinfos list of remaining Vars.
             */
            for_each_cell(l, lnext(list_head(varinfos)))
            {
                GroupVarInfo *varinfo2 = (GroupVarInfo *) lfirst(l);

                if (varinfo2->rel == varinfo1->rel)
                {
                    reldistinct *= varinfo2->ndistinct;
                    if (relmaxndistinct < varinfo2->ndistinct)
                        relmaxndistinct = varinfo2->ndistinct;
                    relvarcount++;
                }
                else
                {
                    /* not time to process varinfo2 yet */
                    newvarinfos = lcons(varinfo2, newvarinfos);
                }
            }

            /*
             * Sanity check --- don't divide by zero if empty relation.
             */
            Assert(rel->reloptkind == RELOPT_BASEREL);
            if (rel->tuples > 0)
            {
                /*
                 * Clamp to size of rel, or size of rel / 10 if multiple Vars. The
                 * fudge factor is because the Vars are probably correlated but we
                 * don't know by how much. We should never clamp to less than the
                 * largest ndistinct value for any of the Vars, though, since
                 * there will surely be at least that many groups.
                 */
                double clamp = rel->tuples;

                if (relvarcount > 1)
                {
                    clamp *= 0.1;
                    if (clamp < relmaxndistinct)
                    {
                        clamp = relmaxndistinct;
                        /* for sanity in case some ndistinct is too large: */
                        if (clamp > rel->tuples)
                            clamp = rel->tuples;
                    }
                }
                if (reldistinct > clamp)
                    reldistinct = clamp;

                /*
                 * Multiply by restriction selectivity.
                 */
                reldistinct *= rel->rows / rel->tuples;

                /*
                 * Update estimate of total distinct groups.
                 */
                numdistinct *= reldistinct;
            }

            varinfos = newvarinfos;
        } while (varinfos != NIL);

        numdistinct = ceil(numdistinct);

        /* Guard against out-of-range answers */
        if (numdistinct > input_rows)
            numdistinct = input_rows;
        if (numdistinct < 1.0)
            numdistinct = 1.0;

        return numdistinct;
    }
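The per-relation clamp inside the `do` loop above is easier to follow with concrete numbers. The sketch below isolates that step under stated assumptions: `sketch_rel_groups` is a hypothetical name, and the caller supplies the already-multiplied `reldistinct`, the largest single-column `relmaxndistinct`, and the relation's `tuples` and `rows` as plain doubles rather than reading them from a `RelOptInfo`. It leaves out the final `ceil()` and the clamp against `input_rows`.

```c
#include <assert.h>

/*
 * Hypothetical helper (not part of selfuncs.c): the clamp and
 * restriction-selectivity scaling applied to one relation's Vars.
 */
double
sketch_rel_groups(double reldistinct, double relmaxndistinct,
                  int relvarcount, double tuples, double rows)
{
    double clamp = tuples;

    /* The listing skips empty relations; this sketch requires tuples > 0. */
    assert(tuples > 0.0);

    if (relvarcount > 1)
    {
        /* Several Vars: assume correlation and allow at most tuples / 10 ... */
        clamp *= 0.1;
        if (clamp < relmaxndistinct)
        {
            /* ... but never fewer groups than the largest single ndistinct, */
            clamp = relmaxndistinct;
            /* and never more than the relation has tuples. */
            if (clamp > tuples)
                clamp = tuples;
        }
    }
    if (reldistinct > clamp)
        reldistinct = clamp;

    /* Scale by the fraction of tuples that survive restriction clauses. */
    return reldistinct * (rows / tuples);
}
```

As a worked case: two columns with 100 and 50 distinct values in a 1000-tuple table give a raw product of 5000. The multi-Var clamp is 1000 × 0.1 = 100, which is not below the largest ndistinct (100), so the estimate becomes 100. If restrictions keep 500 of the 1000 tuples, the relation contributes 100 × 0.5 = 50 groups.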

static void examine_simple_variable(PlannerInfo *root, Var *var, VariableStatData *vardata) [static]

Definition at line 4462 of file selfuncs.c.