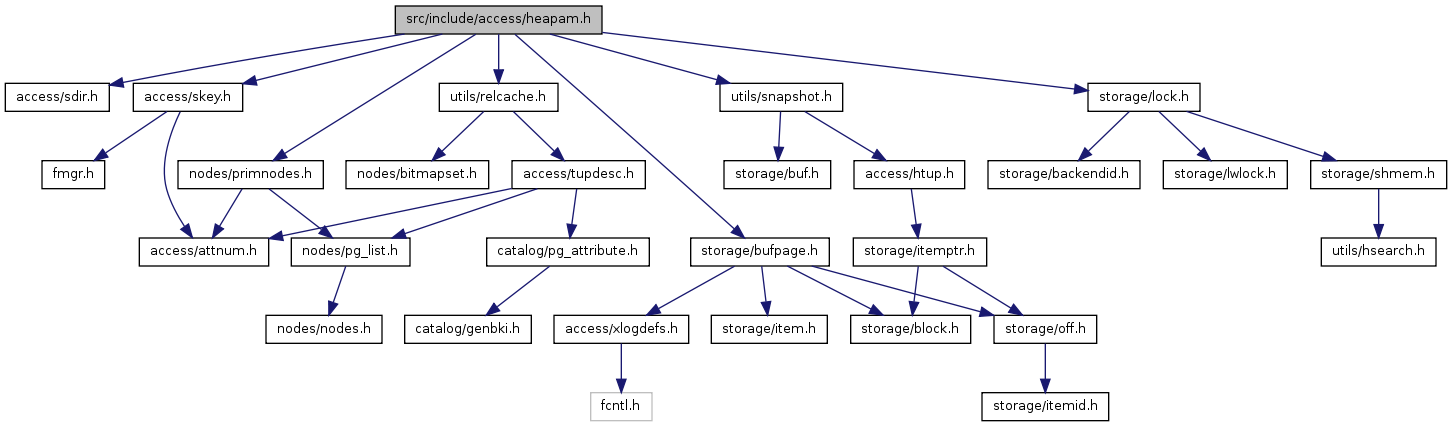

#include "access/sdir.h"

#include "access/skey.h"

#include "nodes/primnodes.h"

#include "storage/bufpage.h"

#include "storage/lock.h"

#include "utils/relcache.h"

#include "utils/snapshot.h"


| #define heap_close(r, l) relation_close(r,l) |

Definition at line 95 of file heapam.h.

Referenced by acquire_inherited_sample_rows(), AcquireRewriteLocks(), AddEnumLabel(), AddNewAttributeTuples(), addRangeTableEntry(), AddRoleMems(), afterTriggerInvokeEvents(), AfterTriggerSetState(), AggregateCreate(), AlterConstraintNamespaces(), AlterDatabase(), AlterDatabaseOwner(), AlterDomainAddConstraint(), AlterDomainDefault(), AlterDomainDropConstraint(), AlterDomainNotNull(), AlterDomainValidateConstraint(), AlterEventTrigger(), AlterEventTriggerOwner(), AlterEventTriggerOwner_oid(), AlterExtensionNamespace(), AlterForeignDataWrapper(), AlterForeignDataWrapperOwner(), AlterForeignDataWrapperOwner_oid(), AlterForeignServer(), AlterForeignServerOwner(), AlterForeignServerOwner_oid(), AlterFunction(), AlterObjectNamespace_oid(), AlterRole(), AlterSchemaOwner(), AlterSchemaOwner_oid(), AlterSetting(), AlterTableCreateToastTable(), AlterTableNamespaceInternal(), AlterTableSpaceOptions(), AlterTSConfiguration(), AlterTSDictionary(), AlterTypeNamespaceInternal(), AlterTypeOwner(), AlterTypeOwnerInternal(), AlterUserMapping(), AppendAttributeTuples(), ApplyExtensionUpdates(), AssignTypeArrayOid(), ATAddCheckConstraint(), ATAddForeignKeyConstraint(), ATExecAddColumn(), ATExecAddInherit(), ATExecAddOf(), ATExecAlterColumnGenericOptions(), ATExecAlterColumnType(), ATExecChangeOwner(), ATExecDropColumn(), ATExecDropConstraint(), ATExecDropInherit(), ATExecDropNotNull(), ATExecDropOf(), ATExecGenericOptions(), ATExecSetNotNull(), ATExecSetOptions(), ATExecSetRelOptions(), ATExecSetStatistics(), ATExecSetStorage(), ATExecSetTableSpace(), ATExecValidateConstraint(), ATRewriteTable(), ATRewriteTables(), AttrDefaultFetch(), boot_openrel(), BootstrapToastTable(), build_indices(), build_physical_tlist(), CatalogCacheInitializeCache(), change_owner_fix_column_acls(), changeDependencyFor(), changeDependencyOnOwner(), check_db_file_conflict(), check_functional_grouping(), check_selective_binary_conversion(), CheckConstraintFetch(), checkSharedDependencies(), 
ChooseConstraintName(), close_lo_relation(), closerel(), cluster(), CollationCreate(), ConstraintNameIsUsed(), ConversionCreate(), copy_heap_data(), copyTemplateDependencies(), create_proc_lang(), create_toast_table(), CreateCast(), CreateComments(), CreateConstraintEntry(), createdb(), CreateForeignDataWrapper(), CreateForeignServer(), CreateForeignTable(), CreateOpFamily(), CreateRole(), CreateSharedComments(), CreateTableSpace(), CreateTrigger(), CreateUserMapping(), currtid_byrelname(), currtid_byreloid(), currtid_for_view(), database_to_xmlschema_internal(), DefineIndex(), DefineOpClass(), DefineQueryRewrite(), DefineSequence(), DefineTSConfiguration(), DefineTSDictionary(), DefineTSParser(), DefineTSTemplate(), DeleteAttributeTuples(), DeleteComments(), deleteDependencyRecordsFor(), deleteDependencyRecordsForClass(), deleteOneObject(), DeleteRelationTuple(), DeleteSecurityLabel(), DeleteSharedComments(), deleteSharedDependencyRecordsFor(), DeleteSharedSecurityLabel(), DeleteSystemAttributeTuples(), deleteWhatDependsOn(), DelRoleMems(), deparseSelectSql(), do_autovacuum(), DoCopy(), drop_parent_dependency(), DropCastById(), dropDatabaseDependencies(), dropdb(), DropProceduralLanguageById(), DropRole(), DropSetting(), DropTableSpace(), EnableDisableRule(), EnableDisableTrigger(), enum_endpoint(), enum_range_internal(), EnumValuesCreate(), EnumValuesDelete(), EvalPlanQualEnd(), EventTriggerSQLDropAddObject(), exec_object_restorecon(), ExecAlterExtensionStmt(), ExecAlterObjectSchemaStmt(), ExecAlterOwnerStmt(), ExecCloseScanRelation(), ExecEndPlan(), ExecGrant_Database(), ExecGrant_Fdw(), ExecGrant_ForeignServer(), ExecGrant_Function(), ExecGrant_Language(), ExecGrant_Largeobject(), ExecGrant_Namespace(), ExecGrant_Relation(), ExecGrant_Tablespace(), ExecGrant_Type(), ExecRefreshMatView(), ExecRenameStmt(), ExecuteTruncate(), expand_inherited_rtentry(), expand_targetlist(), extension_config_remove(), find_inheritance_children(), find_language_template(), 
find_typed_table_dependencies(), fireRIRrules(), free_parsestate(), get_actual_variable_range(), get_constraint_index(), get_database_list(), get_database_oid(), get_db_info(), get_domain_constraint_oid(), get_extension_name(), get_extension_oid(), get_extension_schema(), get_file_fdw_attribute_options(), get_index_constraint(), get_object_address_relobject(), get_pkey_attnames(), get_rel_oids(), get_relation_constraint_oid(), get_relation_constraints(), get_relation_data_width(), get_relation_info(), get_rewrite_oid_without_relid(), get_tablespace_name(), get_tablespace_oid(), get_trigger_oid(), GetComment(), getConstraintTypeDescription(), GetDatabaseTuple(), GetDatabaseTupleByOid(), GetDefaultOpClass(), GetDomainConstraints(), getExtensionOfObject(), getObjectDescription(), getObjectIdentity(), getOwnedSequences(), getRelationsInNamespace(), GetSecurityLabel(), GetSharedSecurityLabel(), gettype(), GrantRole(), heap_create_with_catalog(), heap_drop_with_catalog(), heap_sync(), heap_truncate(), heap_truncate_find_FKs(), heap_truncate_one_rel(), index_build(), index_constraint_create(), index_create(), index_drop(), index_set_state_flags(), index_update_stats(), insert_event_trigger_tuple(), InsertExtensionTuple(), InsertRule(), intorel_shutdown(), isQueryUsingTempRelation_walker(), LargeObjectCreate(), LargeObjectDrop(), LargeObjectExists(), load_enum_cache_data(), lookup_ts_config_cache(), LookupOpclassInfo(), make_new_heap(), make_viewdef(), makeArrayTypeName(), mark_index_clustered(), MergeAttributes(), MergeAttributesIntoExisting(), MergeConstraintsIntoExisting(), MergeWithExistingConstraint(), movedb(), myLargeObjectExists(), NamespaceCreate(), objectsInSchemaToOids(), OperatorCreate(), OperatorShellMake(), OperatorUpd(), performDeletion(), performMultipleDeletions(), pg_extension_config_dump(), pg_extension_ownercheck(), pg_get_serial_sequence(), pg_get_triggerdef_worker(), pg_identify_object(), pg_largeobject_aclmask_snapshot(), pg_largeobject_ownercheck(), 
pgrowlocks(), pgstat_collect_oids(), postgresPlanForeignModify(), ProcedureCreate(), process_settings(), RangeCreate(), RangeDelete(), rebuild_relation(), recordMultipleDependencies(), recordSharedDependencyOn(), regclassin(), regoperin(), regprocin(), regtypein(), reindex_index(), reindex_relation(), ReindexDatabase(), RelationBuildRuleLock(), RelationBuildTriggers(), RelationBuildTupleDesc(), RelationGetExclusionInfo(), RelationGetIndexList(), RelationRemoveInheritance(), RelationSetNewRelfilenode(), remove_dbtablespaces(), RemoveAmOpEntryById(), RemoveAmProcEntryById(), RemoveAttrDefault(), RemoveAttrDefaultById(), RemoveAttributeById(), RemoveCollationById(), RemoveConstraintById(), RemoveConversionById(), RemoveDefaultACLById(), RemoveEventTriggerById(), RemoveExtensionById(), RemoveForeignDataWrapperById(), RemoveForeignServerById(), RemoveFunctionById(), RemoveObjects(), RemoveOpClassById(), RemoveOperatorById(), RemoveOpFamilyById(), RemoveRewriteRuleById(), RemoveRoleFromObjectACL(), RemoveSchemaById(), RemoveStatistics(), RemoveTriggerById(), RemoveTSConfigurationById(), RemoveTSDictionaryById(), RemoveTSParserById(), RemoveTSTemplateById(), RemoveTypeById(), RemoveUserMappingById(), renameatt_internal(), RenameConstraint(), RenameConstraintById(), RenameDatabase(), RenameRelationInternal(), RenameRewriteRule(), RenameRole(), RenameSchema(), RenameTableSpace(), renametrig(), RenameType(), RenameTypeInternal(), RewriteQuery(), rewriteTargetView(), RI_FKey_cascade_del(), RI_FKey_cascade_upd(), RI_FKey_check(), RI_FKey_setdefault_del(), RI_FKey_setdefault_upd(), RI_FKey_setnull_del(), RI_FKey_setnull_upd(), ri_restrict_del(), ri_restrict_upd(), ScanPgRelation(), schema_to_xmlschema_internal(), SearchCatCache(), SearchCatCacheList(), sepgsql_attribute_post_create(), sepgsql_database_post_create(), sepgsql_index_modify(), sepgsql_proc_post_create(), sepgsql_proc_setattr(), sepgsql_relation_post_create(), sepgsql_relation_setattr(), 
sepgsql_schema_post_create(), sequenceIsOwned(), SetDefaultACL(), SetFunctionArgType(), SetFunctionReturnType(), SetRelationHasSubclass(), SetRelationNumChecks(), SetRelationRuleStatus(), SetSecurityLabel(), SetSharedSecurityLabel(), setTargetTable(), shdepDropOwned(), shdepReassignOwned(), StoreAttrDefault(), StoreCatalogInheritance(), storeOperators(), storeProcedures(), swap_relation_files(), table_to_xml_and_xmlschema(), table_to_xmlschema(), ThereIsAtLeastOneRole(), toast_delete_datum(), toast_fetch_datum(), toast_fetch_datum_slice(), toast_save_datum(), toastid_valueid_exists(), transformIndexConstraint(), transformIndexStmt(), transformRuleStmt(), transformTableLikeClause(), transientrel_shutdown(), TypeCreate(), typeInheritsFrom(), TypeShellMake(), update_attstats(), updateAclDependencies(), UpdateIndexRelation(), vac_truncate_clog(), vac_update_datfrozenxid(), vac_update_relstats(), validate_index(), and validateDomainConstraint().

| #define HEAP_INSERT_FROZEN 0x0004 |

Definition at line 29 of file heapam.h.

Referenced by heap_prepare_insert().

| #define HEAP_INSERT_SKIP_FSM 0x0002 |

Definition at line 28 of file heapam.h.

Referenced by intorel_startup(), raw_heap_insert(), and transientrel_startup().

| #define HEAP_INSERT_SKIP_WAL 0x0001 |

Definition at line 27 of file heapam.h.

Referenced by ATRewriteTable(), CopyFrom(), heap_insert(), intorel_shutdown(), raw_heap_insert(), and transientrel_shutdown().
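The three `HEAP_INSERT_*` values above are distinct bits, so they can be OR-ed into a single options word and tested individually. The following is a minimal sketch of that pattern, assuming the flags travel as an `int` bitmask; the helper names `has_option` and `make_insert_options` are hypothetical and do not appear in heapam.h.

```c
#include <assert.h>
#include <stdbool.h>

/* Values copied from the definitions listed above. */
#define HEAP_INSERT_SKIP_WAL    0x0001
#define HEAP_INSERT_SKIP_FSM    0x0002
#define HEAP_INSERT_FROZEN      0x0004

/* Hypothetical helper: true when `flag` is set in `options`. */
static bool
has_option(int options, int flag)
{
    return (options & flag) != 0;
}

/* Hypothetical helper: always skips the FSM, optionally adds the others. */
static int
make_insert_options(bool skip_wal, bool freeze)
{
    int options = HEAP_INSERT_SKIP_FSM;

    if (skip_wal)
        options |= HEAP_INSERT_SKIP_WAL;
    if (freeze)
        options |= HEAP_INSERT_FROZEN;
    return options;
}
```

Because each flag occupies its own bit, setting one never disturbs another, and a zero options word means "no special behavior".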

| typedef struct BulkInsertStateData* BulkInsertState |

| typedef struct HeapScanDescData* HeapScanDesc |

| typedef struct HeapUpdateFailureData HeapUpdateFailureData |

| typedef enum LockTupleMode LockTupleMode |

| enum LockTupleMode |

Definition at line 36 of file heapam.h.

    {
        /* SELECT FOR KEY SHARE */
        LockTupleKeyShare,
        /* SELECT FOR SHARE */
        LockTupleShare,
        /* SELECT FOR NO KEY UPDATE, and UPDATEs that don't modify key columns */
        LockTupleNoKeyExclusive,
        /* SELECT FOR UPDATE, UPDATEs that modify key columns, and DELETE */
        LockTupleExclusive
    } LockTupleMode;
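The comments in the enum pair each mode with the SQL row-locking clause that requests it. As an illustration only, the sketch below restates that mapping as a lookup function and relies on the declaration order running from weakest to strongest lock. The function `lock_mode_clause` is hypothetical, and the enum is re-declared so the block compiles on its own.

```c
#include <assert.h>
#include <string.h>

/* Re-declared here so the sketch is self-contained. */
typedef enum LockTupleMode
{
    LockTupleKeyShare,
    LockTupleShare,
    LockTupleNoKeyExclusive,
    LockTupleExclusive
} LockTupleMode;

/* Hypothetical helper: the SELECT locking clause named in each comment. */
static const char *
lock_mode_clause(LockTupleMode mode)
{
    switch (mode)
    {
        case LockTupleKeyShare:
            return "FOR KEY SHARE";
        case LockTupleShare:
            return "FOR SHARE";
        case LockTupleNoKeyExclusive:
            return "FOR NO KEY UPDATE";
        case LockTupleExclusive:
            return "FOR UPDATE";
    }
    return "unknown";
}
```

Since the enumerators are declared in increasing strength, a plain integer comparison such as `a >= b` is enough in this model to ask whether mode `a` is at least as strong as mode `b`.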

| void FreeBulkInsertState(BulkInsertState bistate) |

Definition at line 1969 of file heapam.c.

References BulkInsertStateData::current_buf, FreeAccessStrategy(), InvalidBuffer, pfree(), ReleaseBuffer(), and BulkInsertStateData::strategy.

Referenced by ATRewriteTable(), CopyFrom(), intorel_shutdown(), and transientrel_shutdown().

    {
        if (bistate->current_buf != InvalidBuffer)
            ReleaseBuffer(bistate->current_buf);
        FreeAccessStrategy(bistate->strategy);
        pfree(bistate);
    }

| BulkInsertState GetBulkInsertState(void) |

Definition at line 1955 of file heapam.c.

References BAS_BULKWRITE, BulkInsertStateData::current_buf, GetAccessStrategy(), palloc(), and BulkInsertStateData::strategy.

Referenced by ATRewriteTable(), CopyFrom(), intorel_startup(), and transientrel_startup().

    {
        BulkInsertState bistate;

        bistate = (BulkInsertState) palloc(sizeof(BulkInsertStateData));
        bistate->strategy = GetAccessStrategy(BAS_BULKWRITE);
        bistate->current_buf = InvalidBuffer;
        return bistate;
    }
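`GetBulkInsertState()` and `FreeBulkInsertState()` form an allocate/release pair: the state starts with no pinned buffer, and freeing it releases a buffer only if one was recorded in the meantime. The sketch below models that lifecycle outside the backend. It is not PostgreSQL code: `malloc`/`free` stand in for `palloc`/`pfree`, the `Buffer` typedef and counters are hypothetical, and the access strategy is reduced to an unused pointer.

```c
#include <assert.h>
#include <stdlib.h>

typedef int Buffer;             /* stand-in for the real Buffer type */
#define InvalidBuffer 0

typedef struct BulkInsertStateData
{
    void   *strategy;           /* stand-in for the buffer access strategy */
    Buffer  current_buf;        /* buffer currently held, if any */
} BulkInsertStateData;

typedef BulkInsertStateData *BulkInsertState;

/* Hypothetical counters so the model's behavior can be observed. */
static int released_buffers = 0;
static int live_states = 0;

static BulkInsertState
model_get_bulk_insert_state(void)
{
    BulkInsertState bistate = malloc(sizeof(BulkInsertStateData));

    if (bistate == NULL)
        abort();
    bistate->strategy = NULL;
    bistate->current_buf = InvalidBuffer;
    live_states++;
    return bistate;
}

static void
model_free_bulk_insert_state(BulkInsertState bistate)
{
    /* Mirrors the conditional ReleaseBuffer() call in the listing above. */
    if (bistate->current_buf != InvalidBuffer)
        released_buffers++;
    free(bistate);
    live_states--;
}
```

The point of the model is the invariant: every state obtained must be freed exactly once, and the free path is responsible for any buffer still recorded in `current_buf`.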

| HeapScanDesc heap_beginscan(Relation relation, Snapshot snapshot, int nkeys, ScanKey key) |

Definition at line 1280 of file heapam.c.

References heap_beginscan_internal().

Referenced by AlterDomainNotNull(), AlterTableSpaceOptions(), ATRewriteTable(), boot_openrel(), check_db_file_conflict(), copy_heap_data(), CopyTo(), createdb(), DefineQueryRewrite(), do_autovacuum(), DropSetting(), DropTableSpace(), find_typed_table_dependencies(), get_database_list(), get_rel_oids(), get_rewrite_oid_without_relid(), get_tables_to_cluster(), get_tablespace_name(), get_tablespace_oid(), getRelationsInNamespace(), gettype(), index_update_stats(), InitScanRelation(), objectsInSchemaToOids(), pgrowlocks(), pgstat_collect_oids(), ReindexDatabase(), remove_dbtablespaces(), RemoveConversionById(), RenameTableSpace(), ThereIsAtLeastOneRole(), vac_truncate_clog(), validateCheckConstraint(), validateDomainConstraint(), and validateForeignKeyConstraint().

    {
        return heap_beginscan_internal(relation, snapshot, nkeys, key,
                                       true, true, false);
    }

| HeapScanDesc heap_beginscan_bm(Relation relation, Snapshot snapshot, int nkeys, ScanKey key) |

Definition at line 1297 of file heapam.c.

References heap_beginscan_internal().

Referenced by ExecInitBitmapHeapScan().

    {
        return heap_beginscan_internal(relation, snapshot, nkeys, key,
                                       false, false, true);
    }

| HeapScanDesc heap_beginscan_strat(Relation relation, Snapshot snapshot, int nkeys, ScanKey key, bool allow_strat, bool allow_sync) |

Definition at line 1288 of file heapam.c.

References heap_beginscan_internal().

Referenced by IndexBuildHeapScan(), IndexCheckExclusion(), pgstat_heap(), systable_beginscan(), and validate_index_heapscan().

    {
        return heap_beginscan_internal(relation, snapshot, nkeys, key,
                                       allow_strat, allow_sync, false);
    }
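The three `heap_beginscan*` entry points differ only in the last three arguments they forward to `heap_beginscan_internal()`. The sketch below records those argument triples side by side. It is a model, not backend code: the `ScanFlags` struct and the `flags_for_*` functions are hypothetical, and the third field name `is_bitmapscan` is an assumption, since the listings above show only the value passed in that position.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical record of the three trailing arguments. */
typedef struct ScanFlags
{
    bool allow_strat;
    bool allow_sync;
    bool is_bitmapscan;         /* assumed name for the third argument */
} ScanFlags;

/* heap_beginscan(): passes true, true, false. */
static ScanFlags
flags_for_beginscan(void)
{
    ScanFlags f = {true, true, false};

    return f;
}

/* heap_beginscan_strat(): the caller chooses the first two values. */
static ScanFlags
flags_for_beginscan_strat(bool allow_strat, bool allow_sync)
{
    ScanFlags f = {allow_strat, allow_sync, false};

    return f;
}

/* heap_beginscan_bm(): passes false, false, true. */
static ScanFlags
flags_for_beginscan_bm(void)
{
    ScanFlags f = {false, false, true};

    return f;
}
```

Read this way, `heap_beginscan()` is equivalent to `heap_beginscan_strat()` with both booleans set to true, while `heap_beginscan_bm()` is the only wrapper that sets the third flag.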

| HTSU_Result heap_delete(Relation relation, ItemPointer tid, CommandId cid, Snapshot crosscheck, bool wait, HeapUpdateFailureData *hufd) |

Definition at line 2523 of file heapam.c.

References xl_heap_delete::all_visible_cleared, Assert, XLogRecData::buffer, BUFFER_LOCK_EXCLUSIVE, BUFFER_LOCK_UNLOCK, XLogRecData::buffer_std, BufferGetBlockNumber(), BufferGetPage, CacheInvalidateHeapTuple(), CheckForSerializableConflictIn(), HeapUpdateFailureData::cmax, compute_infobits(), compute_new_xmax_infomask(), HeapUpdateFailureData::ctid, XLogRecData::data, elog, END_CRIT_SECTION, ERROR, GetCurrentTransactionId(), HEAP_XMAX_BITS, HEAP_XMAX_INVALID, HEAP_XMAX_IS_LOCKED_ONLY, HEAP_XMAX_IS_MULTI, HeapTupleBeingUpdated, HeapTupleHasExternal, HeapTupleHeaderAdjustCmax(), HeapTupleHeaderClearHotUpdated, HeapTupleHeaderGetCmax(), HeapTupleHeaderGetRawXmax, HeapTupleHeaderGetUpdateXid, HeapTupleHeaderIsOnlyLocked(), HeapTupleHeaderSetCmax, HeapTupleHeaderSetXmax, HeapTupleInvisible, HeapTupleMayBeUpdated, HeapTupleSatisfiesUpdate(), HeapTupleSatisfiesVisibility, HeapTupleSelfUpdated, HeapTupleUpdated, xl_heap_delete::infobits_set, InvalidBuffer, InvalidSnapshot, ItemIdGetLength, ItemIdIsNormal, ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, ItemPointerIsValid, XLogRecData::len, LockBuffer(), LockTupleExclusive, LockTupleTuplock, MarkBufferDirty(), MultiXactIdSetOldestMember(), MultiXactIdWait(), MultiXactStatusUpdate, XLogRecData::next, xl_heaptid::node, PageClearAllVisible, PageGetItem, PageGetItemId, PageIsAllVisible, PageSetLSN, PageSetPrunable, pgstat_count_heap_delete(), RelationData::rd_node, RelationData::rd_rel, ReadBuffer(), RelationNeedsWAL, ReleaseBuffer(), RELKIND_MATVIEW, RELKIND_RELATION, START_CRIT_SECTION, HeapTupleHeaderData::t_ctid, HeapTupleData::t_data, HeapTupleHeaderData::t_infomask, HeapTupleHeaderData::t_infomask2, HeapTupleData::t_len, HeapTupleData::t_self, xl_heap_delete::target, xl_heaptid::tid, toast_delete(), TransactionIdEquals, UnlockReleaseBuffer(), UnlockTupleTuplock, UpdateXmaxHintBits(), visibilitymap_clear(), visibilitymap_pin(), XactLockTableWait(), XLOG_HEAP_DELETE, XLogInsert(), xl_heap_delete::xmax, and 
HeapUpdateFailureData::xmax.

Referenced by ExecDelete(), and simple_heap_delete().

    {
        HTSU_Result result;
        TransactionId xid = GetCurrentTransactionId();
        ItemId lp;
        HeapTupleData tp;
        Page page;
        BlockNumber block;
        Buffer buffer;
        Buffer vmbuffer = InvalidBuffer;
        TransactionId new_xmax;
        uint16 new_infomask,
               new_infomask2;
        bool have_tuple_lock = false;
        bool iscombo;
        bool all_visible_cleared = false;

        Assert(ItemPointerIsValid(tid));

        block = ItemPointerGetBlockNumber(tid);
        buffer = ReadBuffer(relation, block);
        page = BufferGetPage(buffer);

        /*
         * Before locking the buffer, pin the visibility map page if it appears to
         * be necessary. Since we haven't got the lock yet, someone else might be
         * in the middle of changing this, so we'll need to recheck after we have
         * the lock.
         */
        if (PageIsAllVisible(page))
            visibilitymap_pin(relation, block, &vmbuffer);

        LockBuffer(buffer, BUFFER_LOCK_EXCLUSIVE);

        /*
         * If we didn't pin the visibility map page and the page has become all
         * visible while we were busy locking the buffer, we'll have to unlock and
         * re-lock, to avoid holding the buffer lock across an I/O. That's a bit
         * unfortunate, but hopefully shouldn't happen often.
         */
        if (vmbuffer == InvalidBuffer && PageIsAllVisible(page))
        {
            LockBuffer(buffer, BUFFER_LOCK_UNLOCK);
            visibilitymap_pin(relation, block, &vmbuffer);
            LockBuffer(buffer, BUFFER_LOCK_EXCLUSIVE);
        }

        lp = PageGetItemId(page, ItemPointerGetOffsetNumber(tid));
        Assert(ItemIdIsNormal(lp));

        tp.t_data = (HeapTupleHeader) PageGetItem(page, lp);
        tp.t_len = ItemIdGetLength(lp);
        tp.t_self = *tid;

    l1:
        result = HeapTupleSatisfiesUpdate(tp.t_data, cid, buffer);

        if (result == HeapTupleInvisible)
        {
            UnlockReleaseBuffer(buffer);
            elog(ERROR, "attempted to delete invisible tuple");
        }
        else if (result == HeapTupleBeingUpdated && wait)
        {
            TransactionId xwait;
            uint16 infomask;

            /* must copy state data before unlocking buffer */
            xwait = HeapTupleHeaderGetRawXmax(tp.t_data);
            infomask = tp.t_data->t_infomask;

            LockBuffer(buffer, BUFFER_LOCK_UNLOCK);

            /*
             * Acquire tuple lock to establish our priority for the tuple (see
             * heap_lock_tuple). LockTuple will release us when we are
             * next-in-line for the tuple.
             *
             * If we are forced to "start over" below, we keep the tuple lock;
             * this arranges that we stay at the head of the line while rechecking
             * tuple state.
             */
            if (!have_tuple_lock)
            {
                LockTupleTuplock(relation, &(tp.t_self), LockTupleExclusive);
                have_tuple_lock = true;
            }

            /*
             * Sleep until concurrent transaction ends. Note that we don't care
             * which lock mode the locker has, because we need the strongest one.
             */
            if (infomask & HEAP_XMAX_IS_MULTI)
            {
                /* wait for multixact */
                MultiXactIdWait((MultiXactId) xwait, MultiXactStatusUpdate,
                                NULL, infomask);
                LockBuffer(buffer, BUFFER_LOCK_EXCLUSIVE);

                /*
                 * If xwait had just locked the tuple then some other xact could
                 * update this tuple before we get to this point. Check for xmax
                 * change, and start over if so.
                 */
                if (!(tp.t_data->t_infomask & HEAP_XMAX_IS_MULTI) ||
                    !TransactionIdEquals(HeapTupleHeaderGetRawXmax(tp.t_data),
                                         xwait))
                    goto l1;

                /*
                 * You might think the multixact is necessarily done here, but not
                 * so: it could have surviving members, namely our own xact or
                 * other subxacts of this backend. It is legal for us to delete
                 * the tuple in either case, however (the latter case is
                 * essentially a situation of upgrading our former shared lock to
                 * exclusive). We don't bother changing the on-disk hint bits
                 * since we are about to overwrite the xmax altogether.
                 */
            }
            else
            {
                /* wait for regular transaction to end */
                XactLockTableWait(xwait);
                LockBuffer(buffer, BUFFER_LOCK_EXCLUSIVE);

                /*
                 * xwait is done, but if xwait had just locked the tuple then some
                 * other xact could update this tuple before we get to this point.
                 * Check for xmax change, and start over if so.
                 */
                if ((tp.t_data->t_infomask & HEAP_XMAX_IS_MULTI) ||
                    !TransactionIdEquals(HeapTupleHeaderGetRawXmax(tp.t_data),
                                         xwait))
                    goto l1;

                /* Otherwise check if it committed or aborted */
                UpdateXmaxHintBits(tp.t_data, buffer, xwait);
            }

            /*
             * We may overwrite if previous xmax aborted, or if it committed but
             * only locked the tuple without updating it.
             */
            if ((tp.t_data->t_infomask & HEAP_XMAX_INVALID) ||
                HEAP_XMAX_IS_LOCKED_ONLY(tp.t_data->t_infomask) ||
                HeapTupleHeaderIsOnlyLocked(tp.t_data))
                result = HeapTupleMayBeUpdated;
            else
                result = HeapTupleUpdated;
        }

        if (crosscheck != InvalidSnapshot && result == HeapTupleMayBeUpdated)
        {
            /* Perform additional check for transaction-snapshot mode RI updates */
            if (!HeapTupleSatisfiesVisibility(&tp, crosscheck, buffer))
                result = HeapTupleUpdated;
        }

        if (result != HeapTupleMayBeUpdated)
        {
            Assert(result == HeapTupleSelfUpdated ||
                   result == HeapTupleUpdated ||
                   result == HeapTupleBeingUpdated);
            Assert(!(tp.t_data->t_infomask & HEAP_XMAX_INVALID));
            hufd->ctid = tp.t_data->t_ctid;
            hufd->xmax = HeapTupleHeaderGetUpdateXid(tp.t_data);
            if (result == HeapTupleSelfUpdated)
                hufd->cmax = HeapTupleHeaderGetCmax(tp.t_data);
            else
                hufd->cmax = 0; /* for lack of an InvalidCommandId value */
            UnlockReleaseBuffer(buffer);
            if (have_tuple_lock)
                UnlockTupleTuplock(relation, &(tp.t_self), LockTupleExclusive);
            if (vmbuffer != InvalidBuffer)
                ReleaseBuffer(vmbuffer);
            return result;
        }

        /*
         * We're about to do the actual delete -- check for conflict first, to
         * avoid possibly having to roll back work we've just done.
         */
        CheckForSerializableConflictIn(relation, &tp, buffer);

        /* replace cid with a combo cid if necessary */
        HeapTupleHeaderAdjustCmax(tp.t_data, &cid, &iscombo);

        START_CRIT_SECTION();

        /*
         * If this transaction commits, the tuple will become DEAD sooner or
         * later. Set flag that this page is a candidate for pruning once our xid
         * falls below the OldestXmin horizon. If the transaction finally aborts,
         * the subsequent page pruning will be a no-op and the hint will be
         * cleared.
         */
        PageSetPrunable(page, xid);

        if (PageIsAllVisible(page))
        {
            all_visible_cleared = true;
            PageClearAllVisible(page);
            visibilitymap_clear(relation, BufferGetBlockNumber(buffer),
                                vmbuffer);
        }

        /*
         * If this is the first possibly-multixact-able operation in the
         * current transaction, set my per-backend OldestMemberMXactId setting.
         * We can be certain that the transaction will never become a member of
         * any older MultiXactIds than that. (We have to do this even if we
         * end up just using our own TransactionId below, since some other
         * backend could incorporate our XID into a MultiXact immediately
         * afterwards.)
         */
        MultiXactIdSetOldestMember();

        compute_new_xmax_infomask(HeapTupleHeaderGetRawXmax(tp.t_data),
                                  tp.t_data->t_infomask, tp.t_data->t_infomask2,
                                  xid, LockTupleExclusive, true,
                                  &new_xmax, &new_infomask, &new_infomask2);

        /* store transaction information of xact deleting the tuple */
        tp.t_data->t_infomask &= ~(HEAP_XMAX_BITS | HEAP_MOVED);
        tp.t_data->t_infomask2 &= ~HEAP_KEYS_UPDATED;
        tp.t_data->t_infomask |= new_infomask;
        tp.t_data->t_infomask2 |= new_infomask2;
        HeapTupleHeaderClearHotUpdated(tp.t_data);
        HeapTupleHeaderSetXmax(tp.t_data, new_xmax);
        HeapTupleHeaderSetCmax(tp.t_data, cid, iscombo);
        /* Make sure there is no forward chain link in t_ctid */
        tp.t_data->t_ctid = tp.t_self;

        MarkBufferDirty(buffer);

        /* XLOG stuff */
        if (RelationNeedsWAL(relation))
        {
            xl_heap_delete xlrec;
            XLogRecPtr recptr;
            XLogRecData rdata[2];

            xlrec.all_visible_cleared = all_visible_cleared;
            xlrec.infobits_set = compute_infobits(tp.t_data->t_infomask,
                                                  tp.t_data->t_infomask2);
            xlrec.target.node = relation->rd_node;
            xlrec.target.tid = tp.t_self;
            xlrec.xmax = new_xmax;
            rdata[0].data = (char *) &xlrec;
            rdata[0].len = SizeOfHeapDelete;
            rdata[0].buffer = InvalidBuffer;
            rdata[0].next = &(rdata[1]);

            rdata[1].data = NULL;
            rdata[1].len = 0;
            rdata[1].buffer = buffer;
            rdata[1].buffer_std = true;
            rdata[1].next = NULL;

            recptr = XLogInsert(RM_HEAP_ID, XLOG_HEAP_DELETE, rdata);

            PageSetLSN(page, recptr);
        }

        END_CRIT_SECTION();

        LockBuffer(buffer, BUFFER_LOCK_UNLOCK);

        if (vmbuffer != InvalidBuffer)
            ReleaseBuffer(vmbuffer);

        /*
         * If the tuple has toasted out-of-line attributes, we need to delete
         * those items too. We have to do this before releasing the buffer
         * because we need to look at the contents of the tuple, but it's OK to
         * release the content lock on the buffer first.
         */
        if (relation->rd_rel->relkind != RELKIND_RELATION &&
            relation->rd_rel->relkind != RELKIND_MATVIEW)
        {
            /* toast table entries should never be recursively toasted */
            Assert(!HeapTupleHasExternal(&tp));
        }
        else if (HeapTupleHasExternal(&tp))
            toast_delete(relation, &tp);

        /*
         * Mark tuple for invalidation from system caches at next command
         * boundary. We have to do this before releasing the buffer because we
         * need to look at the contents of the tuple.
         */
        CacheInvalidateHeapTuple(relation, &tp, NULL);

        /* Now we can release the buffer */
        ReleaseBuffer(buffer);

        /*
         * Release the lmgr tuple lock, if we had it.
         */
        if (have_tuple_lock)
            UnlockTupleTuplock(relation, &(tp.t_self), LockTupleExclusive);

        pgstat_count_heap_delete(relation);

        return HeapTupleMayBeUpdated;
    }
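Two small decisions inside `heap_delete()` are easy to lose in the full listing: how the result is recomputed after waiting for a concurrent transaction, and how the failure data is filled when the delete cannot proceed. The sketch below isolates both as a model. It assumes simplified inputs (plain booleans and unsigned integers instead of tuple headers); `FailureInfo`, `result_after_wait` and `fill_failure` are hypothetical names, and the order of the re-declared enumerators is arbitrary for this model.

```c
#include <assert.h>
#include <stdbool.h>

/* Result codes named in the listing above, re-declared for the model. */
typedef enum HTSU_Result
{
    HeapTupleMayBeUpdated,
    HeapTupleInvisible,
    HeapTupleSelfUpdated,
    HeapTupleUpdated,
    HeapTupleBeingUpdated
} HTSU_Result;

/* Simplified stand-in for HeapUpdateFailureData (ctid omitted). */
typedef struct FailureInfo
{
    unsigned xmax;
    unsigned cmax;
} FailureInfo;

/*
 * After the wait: the tuple may be overwritten if the previous xmax
 * aborted, or if it committed but only locked the tuple.
 */
static HTSU_Result
result_after_wait(bool xmax_invalid, bool xmax_only_locked)
{
    if (xmax_invalid || xmax_only_locked)
        return HeapTupleMayBeUpdated;
    return HeapTupleUpdated;
}

/* On failure: cmax is reported only for a self-update, otherwise 0. */
static void
fill_failure(HTSU_Result result, unsigned update_xid, unsigned tuple_cmax,
             FailureInfo *hufd)
{
    hufd->xmax = update_xid;
    hufd->cmax = (result == HeapTupleSelfUpdated) ? tuple_cmax : 0;
}
```

In the real function the failure path also releases the buffer, the tuple lock, and the visibility map pin before returning; the model leaves that cleanup out.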

| void heap_endscan(HeapScanDesc scan) |

Definition at line 1398 of file heapam.c.

References BufferIsValid, FreeAccessStrategy(), pfree(), RelationDecrementReferenceCount(), ReleaseBuffer(), HeapScanDescData::rs_cbuf, HeapScanDescData::rs_key, HeapScanDescData::rs_rd, and HeapScanDescData::rs_strategy.

Referenced by AlterDomainNotNull(), AlterTableSpaceOptions(), ATRewriteTable(), boot_openrel(), check_db_file_conflict(), copy_heap_data(), CopyTo(), createdb(), DefineQueryRewrite(), do_autovacuum(), DropSetting(), DropTableSpace(), ExecEndBitmapHeapScan(), ExecEndSeqScan(), find_typed_table_dependencies(), get_database_list(), get_rel_oids(), get_rewrite_oid_without_relid(), get_tables_to_cluster(), get_tablespace_name(), get_tablespace_oid(), getRelationsInNamespace(), gettype(), index_update_stats(), IndexBuildHeapScan(), IndexCheckExclusion(), objectsInSchemaToOids(), pgrowlocks(), pgstat_collect_oids(), pgstat_heap(), ReindexDatabase(), remove_dbtablespaces(), RemoveConversionById(), RenameTableSpace(), systable_endscan(), ThereIsAtLeastOneRole(), vac_truncate_clog(), validate_index_heapscan(), validateCheckConstraint(), validateDomainConstraint(), and validateForeignKeyConstraint().

    {
        /* Note: no locking manipulations needed */

        /*
         * unpin scan buffers
         */
        if (BufferIsValid(scan->rs_cbuf))
            ReleaseBuffer(scan->rs_cbuf);

        /*
         * decrement relation reference count and free scan descriptor storage
         */
        RelationDecrementReferenceCount(scan->rs_rd);

        if (scan->rs_key)
            pfree(scan->rs_key);

        if (scan->rs_strategy != NULL)
            FreeAccessStrategy(scan->rs_strategy);

        pfree(scan);
    }

| bool heap_fetch(Relation relation, Snapshot snapshot, HeapTuple tuple, Buffer *userbuf, bool keep_buf, Relation stats_relation) |

Definition at line 1515 of file heapam.c.

References BUFFER_LOCK_SHARE, BUFFER_LOCK_UNLOCK, BufferGetPage, CheckForSerializableConflictOut(), HeapTupleSatisfiesVisibility, ItemIdGetLength, ItemIdIsNormal, ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, LockBuffer(), PageGetItem, PageGetItemId, PageGetMaxOffsetNumber, pgstat_count_heap_fetch, PredicateLockTuple(), ReadBuffer(), RelationGetRelid, ReleaseBuffer(), HeapTupleData::t_data, HeapTupleData::t_len, HeapTupleData::t_self, and HeapTupleData::t_tableOid.

Referenced by AfterTriggerExecute(), EvalPlanQualFetch(), EvalPlanQualFetchRowMarks(), ExecDelete(), ExecLockRows(), heap_lock_updated_tuple_rec(), and TidNext().

    {
        ItemPointer tid = &(tuple->t_self);
        ItemId lp;
        Buffer buffer;
        Page page;
        OffsetNumber offnum;
        bool valid;

        /*
         * Fetch and pin the appropriate page of the relation.
         */
        buffer = ReadBuffer(relation, ItemPointerGetBlockNumber(tid));

        /*
         * Need share lock on buffer to examine tuple commit status.
         */
        LockBuffer(buffer, BUFFER_LOCK_SHARE);
        page = BufferGetPage(buffer);

        /*
         * We'd better check for out-of-range offnum in case of VACUUM since the
         * TID was obtained.
         */
        offnum = ItemPointerGetOffsetNumber(tid);
        if (offnum < FirstOffsetNumber || offnum > PageGetMaxOffsetNumber(page))
        {
            LockBuffer(buffer, BUFFER_LOCK_UNLOCK);
            if (keep_buf)
                *userbuf = buffer;
            else
            {
                ReleaseBuffer(buffer);
                *userbuf = InvalidBuffer;
            }
            tuple->t_data = NULL;
            return false;
        }

        /*
         * get the item line pointer corresponding to the requested tid
         */
        lp = PageGetItemId(page, offnum);

        /*
         * Must check for deleted tuple.
         */
        if (!ItemIdIsNormal(lp))
        {
            LockBuffer(buffer, BUFFER_LOCK_UNLOCK);
            if (keep_buf)
                *userbuf = buffer;
            else
            {
                ReleaseBuffer(buffer);
                *userbuf = InvalidBuffer;
            }
            tuple->t_data = NULL;
            return false;
        }

        /*
         * fill in *tuple fields
         */
        tuple->t_data = (HeapTupleHeader) PageGetItem(page, lp);
        tuple->t_len = ItemIdGetLength(lp);
        tuple->t_tableOid = RelationGetRelid(relation);

        /*
         * check time qualification of tuple, then release lock
         */
        valid = HeapTupleSatisfiesVisibility(tuple, snapshot, buffer);

        if (valid)
            PredicateLockTuple(relation, tuple, snapshot);

        CheckForSerializableConflictOut(valid, relation, tuple, buffer, snapshot);

        LockBuffer(buffer, BUFFER_LOCK_UNLOCK);

        if (valid)
        {
            /*
             * All checks passed, so return the tuple as valid. Caller is now
             * responsible for releasing the buffer.
             */
            *userbuf = buffer;

            /* Count the successful fetch against appropriate rel, if any */
            if (stats_relation != NULL)
                pgstat_count_heap_fetch(stats_relation);

            return true;
        }

        /* Tuple failed time qual, but maybe caller wants to see it anyway. */
        if (keep_buf)
            *userbuf = buffer;
        else
        {
            ReleaseBuffer(buffer);
            *userbuf = InvalidBuffer;
        }

        return false;
    }
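Every exit path of `heap_fetch()` follows the same buffer-ownership rule: the caller receives the pinned buffer in `*userbuf` when the tuple is visible or when `keep_buf` is true, and otherwise the function releases the pin itself and stores `InvalidBuffer`. The sketch below models just that rule. It is illustrative only: `FetchCase`, `model_heap_fetch` and the `pins` counter are hypothetical, and `Buffer` is a plain integer stand-in.

```c
#include <assert.h>
#include <stdbool.h>

typedef int Buffer;             /* stand-in for the real Buffer type */
#define InvalidBuffer 0

/* Hypothetical summary of what the page lookup found. */
typedef enum FetchCase
{
    FETCH_NO_SUCH_ITEM,         /* offset out of range or line pointer not normal */
    FETCH_NOT_VISIBLE,          /* tuple exists but fails the snapshot test */
    FETCH_VISIBLE               /* tuple exists and passes the snapshot test */
} FetchCase;

/*
 * Model of the exit paths. *pins is set to 1 (the pin taken when the page
 * is read) and drops to 0 if the function releases the buffer itself.
 */
static bool
model_heap_fetch(FetchCase outcome, bool keep_buf, Buffer pinned,
                 Buffer *userbuf, int *pins)
{
    *pins = 1;

    if (outcome == FETCH_VISIBLE || keep_buf)
    {
        *userbuf = pinned;      /* caller now owns the pin */
        return outcome == FETCH_VISIBLE;
    }

    *pins = 0;                  /* corresponds to ReleaseBuffer(buffer) */
    *userbuf = InvalidBuffer;
    return false;
}
```

One consequence worth noting for callers: a `false` return does not by itself mean there is nothing to release. With `keep_buf` set, the pin is handed over even on failure, so checking `*userbuf` against `InvalidBuffer` is the reliable test in this model.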

| bool heap_freeze_tuple(HeapTupleHeader tuple, TransactionId cutoff_xid, TransactionId cutoff_multi) |

| void heap_get_latest_tid(Relation relation, Snapshot snapshot, ItemPointer tid) |

Definition at line 1805 of file heapam.c.

References BUFFER_LOCK_SHARE, BufferGetPage, CheckForSerializableConflictOut(), elog, ERROR, HEAP_XMAX_INVALID, HeapTupleHeaderGetUpdateXid, HeapTupleHeaderGetXmin, HeapTupleHeaderIsOnlyLocked(), HeapTupleSatisfiesVisibility, ItemIdGetLength, ItemIdIsNormal, ItemPointerEquals(), ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, ItemPointerIsValid, LockBuffer(), PageGetItem, PageGetItemId, PageGetMaxOffsetNumber, ReadBuffer(), RelationGetNumberOfBlocks, RelationGetRelationName, HeapTupleHeaderData::t_ctid, HeapTupleData::t_data, HeapTupleHeaderData::t_infomask, HeapTupleData::t_len, HeapTupleData::t_self, TransactionIdEquals, TransactionIdIsValid, and UnlockReleaseBuffer().

Referenced by currtid_byrelname(), currtid_byreloid(), and TidNext().

{

BlockNumber blk;

ItemPointerData ctid;

TransactionId priorXmax;

/* this is to avoid Assert failures on bad input */

if (!ItemPointerIsValid(tid))

return;

/*

* Since this can be called with user-supplied TID, don't trust the input

* too much. (RelationGetNumberOfBlocks is an expensive check, so we

* don't check t_ctid links again this way. Note that it would not do to

* call it just once and save the result, either.)

*/

blk = ItemPointerGetBlockNumber(tid);

if (blk >= RelationGetNumberOfBlocks(relation))

elog(ERROR, "block number %u is out of range for relation \"%s\"",

blk, RelationGetRelationName(relation));

/*

* Loop to chase down t_ctid links. At top of loop, ctid is the tuple we

* need to examine, and *tid is the TID we will return if ctid turns out

* to be bogus.

*

* Note that we will loop until we reach the end of the t_ctid chain.

* Depending on the snapshot passed, there might be at most one visible

* version of the row, but we don't try to optimize for that.

*/

ctid = *tid;

priorXmax = InvalidTransactionId; /* cannot check first XMIN */

for (;;)

{

Buffer buffer;

Page page;

OffsetNumber offnum;

ItemId lp;

HeapTupleData tp;

bool valid;

/*

* Read, pin, and lock the page.

*/

buffer = ReadBuffer(relation, ItemPointerGetBlockNumber(&ctid));

LockBuffer(buffer, BUFFER_LOCK_SHARE);

page = BufferGetPage(buffer);

/*

* Check for bogus item number. This is not treated as an error

* condition because it can happen while following a t_ctid link. We

* just assume that the prior tid is OK and return it unchanged.

*/

offnum = ItemPointerGetOffsetNumber(&ctid);

if (offnum < FirstOffsetNumber || offnum > PageGetMaxOffsetNumber(page))

{

UnlockReleaseBuffer(buffer);

break;

}

lp = PageGetItemId(page, offnum);

if (!ItemIdIsNormal(lp))

{

UnlockReleaseBuffer(buffer);

break;

}

/* OK to access the tuple */

tp.t_self = ctid;

tp.t_data = (HeapTupleHeader) PageGetItem(page, lp);

tp.t_len = ItemIdGetLength(lp);

/*

* After following a t_ctid link, we might arrive at an unrelated

* tuple. Check for XMIN match.

*/

if (TransactionIdIsValid(priorXmax) &&

!TransactionIdEquals(priorXmax, HeapTupleHeaderGetXmin(tp.t_data)))

{

UnlockReleaseBuffer(buffer);

break;

}

/*

* Check time qualification of tuple; if visible, set it as the new

* result candidate.

*/

valid = HeapTupleSatisfiesVisibility(&tp, snapshot, buffer);

CheckForSerializableConflictOut(valid, relation, &tp, buffer, snapshot);

if (valid)

*tid = ctid;

/*

* If there's a valid t_ctid link, follow it, else we're done.

*/

if ((tp.t_data->t_infomask & HEAP_XMAX_INVALID) ||

HeapTupleHeaderIsOnlyLocked(tp.t_data) ||

ItemPointerEquals(&tp.t_self, &tp.t_data->t_ctid))

{

UnlockReleaseBuffer(buffer);

break;

}

ctid = tp.t_data->t_ctid;

priorXmax = HeapTupleHeaderGetUpdateXid(tp.t_data);

UnlockReleaseBuffer(buffer);

} /* end of loop */

}
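
The chain-following loop above can be hard to picture from the buffer-level code alone. As a rough illustration only, the following self-contained C sketch replays the same control flow on an in-memory array. `ToyTuple`, `toy_latest_tid`, and the precomputed `visible` flag are hypothetical names invented for this example; they stand in for heap tuples, the function itself, and the snapshot test, and none of them exist in PostgreSQL. Buffer pinning, locking, and the `HeapTupleHeaderIsOnlyLocked()` case are left out.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical stand-in for a heap tuple; array indices play the role of TIDs. */
typedef struct ToyTuple
{
    unsigned xmin;      /* inserting transaction id; 0 means invalid */
    unsigned xmax;      /* updating transaction id; 0 means "no updater" */
    int      next;      /* index of the successor version (like t_ctid) */
    bool     visible;   /* stand-in for the snapshot visibility test */
} ToyTuple;

/*
 * Follow next-links starting at 'start'. Return the index of the last
 * visible version seen, or 'start' unchanged if no version qualifies.
 */
static int
toy_latest_tid(const ToyTuple *tuples, size_t ntuples, int start)
{
    int      result = start;
    int      cur = start;
    unsigned prior_xmax = 0;    /* cannot check the first xmin */

    assert(tuples != NULL);

    for (;;)
    {
        const ToyTuple *tp;

        /* A bogus link ends the chase; keep the prior result. */
        if (cur < 0 || (size_t) cur >= ntuples)
            break;
        tp = &tuples[cur];

        /* The slot may have been reused by an unrelated tuple. */
        if (prior_xmax != 0 && tp->xmin != prior_xmax)
            break;

        /* A visible version becomes the new result candidate. */
        if (tp->visible)
            result = cur;

        /* No updater, or the link points at itself: end of chain. */
        if (tp->xmax == 0 || tp->next == cur)
            break;

        prior_xmax = tp->xmax;
        cur = tp->next;
    }
    return result;
}
```

As in the real function, the walk does not stop at the first visible version; it continues to the end of the chain and remembers the last visible version it passed.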

void heap_get_root_tuples(Page page, OffsetNumber *root_offsets)

Definition at line 705 of file pruneheap.c.

References Assert, FirstOffsetNumber, HeapTupleHeaderGetUpdateXid, HeapTupleHeaderGetXmin, HeapTupleHeaderIsHeapOnly, HeapTupleHeaderIsHotUpdated, ItemIdGetRedirect, ItemIdIsDead, ItemIdIsNormal, ItemIdIsRedirected, ItemIdIsUsed, ItemPointerGetOffsetNumber, MaxHeapTuplesPerPage, MemSet, OffsetNumberNext, PageGetItem, PageGetItemId, PageGetMaxOffsetNumber, HeapTupleHeaderData::t_ctid, TransactionIdEquals, and TransactionIdIsValid.

Referenced by IndexBuildHeapScan(), and validate_index_heapscan().

{

OffsetNumber offnum,

maxoff;

MemSet(root_offsets, 0, MaxHeapTuplesPerPage * sizeof(OffsetNumber));

maxoff = PageGetMaxOffsetNumber(page);

for (offnum = FirstOffsetNumber; offnum <= maxoff; offnum = OffsetNumberNext(offnum))

{

ItemId lp = PageGetItemId(page, offnum);

HeapTupleHeader htup;

OffsetNumber nextoffnum;

TransactionId priorXmax;

/* skip unused and dead items */

if (!ItemIdIsUsed(lp) || ItemIdIsDead(lp))

continue;

if (ItemIdIsNormal(lp))

{

htup = (HeapTupleHeader) PageGetItem(page, lp);

/*

* Check if this tuple is part of a HOT-chain rooted at some other

* tuple. If so, skip it for now; we'll process it when we find

* its root.

*/

if (HeapTupleHeaderIsHeapOnly(htup))

continue;

/*

* This is either a plain tuple or the root of a HOT-chain.

* Remember it in the mapping.

*/

root_offsets[offnum - 1] = offnum;

/* If it's not the start of a HOT-chain, we're done with it */

if (!HeapTupleHeaderIsHotUpdated(htup))

continue;

/* Set up to scan the HOT-chain */

nextoffnum = ItemPointerGetOffsetNumber(&htup->t_ctid);

priorXmax = HeapTupleHeaderGetUpdateXid(htup);

}

else

{

/* Must be a redirect item. We do not set its root_offsets entry */

Assert(ItemIdIsRedirected(lp));

/* Set up to scan the HOT-chain */

nextoffnum = ItemIdGetRedirect(lp);

priorXmax = InvalidTransactionId;

}

/*

* Now follow the HOT-chain and collect other tuples in the chain.

*

* Note: Even though this is a nested loop, the complexity of the

* function is O(N) because a tuple in the page should be visited not

* more than twice, once in the outer loop and once in HOT-chain

* chases.

*/

for (;;)

{

lp = PageGetItemId(page, nextoffnum);

/* Check for broken chains */

if (!ItemIdIsNormal(lp))

break;

htup = (HeapTupleHeader) PageGetItem(page, lp);

if (TransactionIdIsValid(priorXmax) &&

!TransactionIdEquals(priorXmax, HeapTupleHeaderGetXmin(htup)))

break;

/* Remember the root line pointer for this item */

root_offsets[nextoffnum - 1] = offnum;

/* Advance to next chain member, if any */

if (!HeapTupleHeaderIsHotUpdated(htup))

break;

nextoffnum = ItemPointerGetOffsetNumber(&htup->t_ctid);

priorXmax = HeapTupleHeaderGetUpdateXid(htup);

}

}

}
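
To make the root-offset mapping concrete, here is a small standalone sketch that applies the same two-level loop to a toy page. Everything in it (`ToyItem`, `ToyItemKind`, `TOY_MAX_ITEMS`, `toy_get_root_offsets`) is a hypothetical simplification written for this example, not a PostgreSQL API. Unlike the original, it also bounds-checks the next offset, which is an extra safety assumption for the toy model.

```c
#include <assert.h>
#include <stdbool.h>
#include <string.h>

#define TOY_MAX_ITEMS 8

typedef enum { TOY_UNUSED, TOY_NORMAL, TOY_REDIRECT, TOY_DEAD } ToyItemKind;

/* Hypothetical line pointer plus the tuple-header bits the algorithm reads. */
typedef struct ToyItem
{
    ToyItemKind kind;
    bool     heap_only;     /* member of a chain rooted at another item */
    bool     hot_updated;   /* has a successor on the same page */
    int      next;          /* 1-based offset of successor or redirect target */
    unsigned xmin;
    unsigned xmax;
} ToyItem;

/* items[0] is offset 1; root_offsets must have TOY_MAX_ITEMS entries. */
static void
toy_get_root_offsets(const ToyItem *items, int maxoff, int *root_offsets)
{
    int off;

    assert(maxoff <= TOY_MAX_ITEMS);
    memset(root_offsets, 0, TOY_MAX_ITEMS * sizeof(int));

    for (off = 1; off <= maxoff; off++)
    {
        const ToyItem *it = &items[off - 1];
        int      next;
        unsigned prior_xmax;

        if (it->kind == TOY_UNUSED || it->kind == TOY_DEAD)
            continue;

        if (it->kind == TOY_NORMAL)
        {
            if (it->heap_only)
                continue;               /* handled when its root is found */
            root_offsets[off - 1] = off;    /* plain tuple or chain root */
            if (!it->hot_updated)
                continue;
            next = it->next;
            prior_xmax = it->xmax;
        }
        else
        {
            /* Redirect: no entry for the redirect item itself. */
            next = it->next;
            prior_xmax = 0;
        }

        /* Walk the chain and record 'off' as the root of each member. */
        for (;;)
        {
            const ToyItem *m;

            if (next < 1 || next > maxoff)
                break;                  /* toy-only bounds check */
            m = &items[next - 1];
            if (m->kind != TOY_NORMAL)
                break;                  /* broken chain */
            if (prior_xmax != 0 && m->xmin != prior_xmax)
                break;                  /* unrelated tuple in reused slot */
            root_offsets[next - 1] = off;
            if (!m->hot_updated)
                break;
            prior_xmax = m->xmax;
            next = m->next;
        }
    }
}
```

An entry of 0 in the output means "no root recorded", which is why redirect, dead, and unused items keep their zero from the initial `memset`.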

HeapTuple heap_getnext(HeapScanDesc scan, ScanDirection direction)

Definition at line 1447 of file heapam.c.

References heapgettup(), heapgettup_pagemode(), pgstat_count_heap_getnext, HeapScanDescData::rs_ctup, HeapScanDescData::rs_key, HeapScanDescData::rs_nkeys, HeapScanDescData::rs_pageatatime, HeapScanDescData::rs_rd, and HeapTupleData::t_data.

Referenced by AlterDomainNotNull(), AlterTableSpaceOptions(), ATRewriteTable(), boot_openrel(), check_db_file_conflict(), copy_heap_data(), CopyTo(), createdb(), DefineQueryRewrite(), do_autovacuum(), DropSetting(), DropTableSpace(), find_typed_table_dependencies(), get_database_list(), get_rel_oids(), get_rewrite_oid_without_relid(), get_tables_to_cluster(), get_tablespace_name(), get_tablespace_oid(), getRelationsInNamespace(), gettype(), index_update_stats(), IndexBuildHeapScan(), IndexCheckExclusion(), objectsInSchemaToOids(), pgrowlocks(), pgstat_collect_oids(), pgstat_heap(), ReindexDatabase(), remove_dbtablespaces(), RemoveConversionById(), RenameTableSpace(), SeqNext(), systable_getnext(), ThereIsAtLeastOneRole(), vac_truncate_clog(), validate_index_heapscan(), validateCheckConstraint(), validateDomainConstraint(), and validateForeignKeyConstraint().

{

/* Note: no locking manipulations needed */

HEAPDEBUG_1; /* heap_getnext( info ) */

if (scan->rs_pageatatime)

heapgettup_pagemode(scan, direction,

scan->rs_nkeys, scan->rs_key);

else

heapgettup(scan, direction, scan->rs_nkeys, scan->rs_key);

if (scan->rs_ctup.t_data == NULL)

{

HEAPDEBUG_2; /* heap_getnext returning EOS */

return NULL;

}

/*

* if we get here it means we have a new current scan tuple, so point to

* the proper return buffer and return the tuple.

*/

HEAPDEBUG_3; /* heap_getnext returning tuple */

pgstat_count_heap_getnext(scan->rs_rd);

return &(scan->rs_ctup);

}

bool heap_hot_search(ItemPointer tid, Relation relation, Snapshot snapshot, bool *all_dead)

Definition at line 1777 of file heapam.c.

References BUFFER_LOCK_SHARE, BUFFER_LOCK_UNLOCK, heap_hot_search_buffer(), ItemPointerGetBlockNumber, LockBuffer(), ReadBuffer(), and ReleaseBuffer().

Referenced by _bt_check_unique().

{

bool result;

Buffer buffer;

HeapTupleData heapTuple;

buffer = ReadBuffer(relation, ItemPointerGetBlockNumber(tid));

LockBuffer(buffer, BUFFER_LOCK_SHARE);

result = heap_hot_search_buffer(tid, relation, buffer, snapshot,

&heapTuple, all_dead, true);

LockBuffer(buffer, BUFFER_LOCK_UNLOCK);

ReleaseBuffer(buffer);

return result;

}

bool heap_hot_search_buffer(ItemPointer tid, Relation relation, Buffer buffer, Snapshot snapshot, HeapTuple heapTuple, bool *all_dead, bool first_call)

Definition at line 1649 of file heapam.c.

References Assert, BufferGetBlockNumber(), BufferGetPage, CheckForSerializableConflictOut(), HeapTupleHeaderGetUpdateXid, HeapTupleHeaderGetXmin, HeapTupleIsHeapOnly, HeapTupleIsHotUpdated, HeapTupleIsSurelyDead(), HeapTupleSatisfiesVisibility, ItemIdGetLength, ItemIdGetRedirect, ItemIdIsNormal, ItemIdIsRedirected, ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, ItemPointerSetOffsetNumber, PageGetItem, PageGetItemId, PageGetMaxOffsetNumber, PredicateLockTuple(), RelationData::rd_id, RecentGlobalXmin, HeapTupleHeaderData::t_ctid, HeapTupleData::t_data, HeapTupleData::t_len, HeapTupleData::t_self, HeapTupleData::t_tableOid, TransactionIdEquals, and TransactionIdIsValid.

Referenced by bitgetpage(), heap_hot_search(), and index_fetch_heap().

{

Page dp = (Page) BufferGetPage(buffer);

TransactionId prev_xmax = InvalidTransactionId;

OffsetNumber offnum;

bool at_chain_start;

bool valid;

bool skip;

/* If this is not the first call, previous call returned a (live!) tuple */

if (all_dead)

*all_dead = first_call;

Assert(TransactionIdIsValid(RecentGlobalXmin));

Assert(ItemPointerGetBlockNumber(tid) == BufferGetBlockNumber(buffer));

offnum = ItemPointerGetOffsetNumber(tid);

at_chain_start = first_call;

skip = !first_call;

/* Scan through possible multiple members of HOT-chain */

for (;;)

{

ItemId lp;

/* check for bogus TID */

if (offnum < FirstOffsetNumber || offnum > PageGetMaxOffsetNumber(dp))

break;

lp = PageGetItemId(dp, offnum);

/* check for unused, dead, or redirected items */

if (!ItemIdIsNormal(lp))

{

/* We should only see a redirect at start of chain */

if (ItemIdIsRedirected(lp) && at_chain_start)

{

/* Follow the redirect */

offnum = ItemIdGetRedirect(lp);

at_chain_start = false;

continue;

}

/* else must be end of chain */

break;

}

heapTuple->t_data = (HeapTupleHeader) PageGetItem(dp, lp);

heapTuple->t_len = ItemIdGetLength(lp);

heapTuple->t_tableOid = relation->rd_id;

heapTuple->t_self = *tid;

/*

* Shouldn't see a HEAP_ONLY tuple at chain start.

*/

if (at_chain_start && HeapTupleIsHeapOnly(heapTuple))

break;

/*

* The xmin should match the previous xmax value, else chain is

* broken.

*/

if (TransactionIdIsValid(prev_xmax) &&

!TransactionIdEquals(prev_xmax,

HeapTupleHeaderGetXmin(heapTuple->t_data)))

break;

/*

* When first_call is true (and thus, skip is initially false) we'll

* return the first tuple we find. But on later passes, heapTuple

* will initially be pointing to the tuple we returned last time.

* Returning it again would be incorrect (and would loop forever), so

* we skip it and return the next match we find.

*/

if (!skip)

{

/* If it's visible per the snapshot, we must return it */

valid = HeapTupleSatisfiesVisibility(heapTuple, snapshot, buffer);

CheckForSerializableConflictOut(valid, relation, heapTuple,

buffer, snapshot);

if (valid)

{

ItemPointerSetOffsetNumber(tid, offnum);

PredicateLockTuple(relation, heapTuple, snapshot);

if (all_dead)

*all_dead = false;

return true;

}

}

skip = false;

/*

* If we can't see it, maybe no one else can either. At caller

* request, check whether all chain members are dead to all

* transactions.

*/

if (all_dead && *all_dead &&

!HeapTupleIsSurelyDead(heapTuple->t_data, RecentGlobalXmin))

*all_dead = false;

/*

* Check to see if HOT chain continues past this tuple; if so fetch

* the next offnum and loop around.

*/

if (HeapTupleIsHotUpdated(heapTuple))

{

Assert(ItemPointerGetBlockNumber(&heapTuple->t_data->t_ctid) ==

ItemPointerGetBlockNumber(tid));

offnum = ItemPointerGetOffsetNumber(&heapTuple->t_data->t_ctid);

at_chain_start = false;

prev_xmax = HeapTupleHeaderGetUpdateXid(heapTuple->t_data);

}

else

break; /* end of chain */

}

return false;

}
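
The interplay of `first_call`, `skip`, and `at_chain_start` is easier to follow on a reduced model. The sketch below is an assumption-laden toy, not PostgreSQL code: `ToyLp`, `ToyLpKind`, and `toy_hot_search` are hypothetical names, visibility is a precomputed flag, and the `all_dead` bookkeeping, predicate locking, and serializable-conflict checks are omitted.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

typedef enum { TOY_LP_UNUSED, TOY_LP_NORMAL, TOY_LP_REDIRECT } ToyLpKind;

/* Hypothetical line pointer merged with the tuple fields the search reads. */
typedef struct ToyLp
{
    ToyLpKind kind;
    bool     heap_only;     /* not a chain root */
    bool     hot_updated;   /* chain continues at 'next' */
    int      next;          /* 1-based successor or redirect target */
    unsigned xmin;
    unsigned xmax;
    bool     visible;       /* stand-in for the snapshot test */
} ToyLp;

/*
 * Search the chain starting at *tid_off. On success, store the offset of
 * the visible member in *tid_off and return true. When first_call is
 * false, *tid_off is the member returned last time and is skipped.
 */
static bool
toy_hot_search(const ToyLp *lps, int maxoff, int *tid_off, bool first_call)
{
    int      off;
    unsigned prev_xmax = 0;
    bool     at_chain_start = first_call;
    bool     skip = !first_call;

    assert(tid_off != NULL);
    off = *tid_off;

    for (;;)
    {
        const ToyLp *lp;

        if (off < 1 || off > maxoff)
            break;                      /* bogus offset */
        lp = &lps[off - 1];

        if (lp->kind != TOY_LP_NORMAL)
        {
            /* A redirect is only followed at the start of a chain. */
            if (lp->kind == TOY_LP_REDIRECT && at_chain_start)
            {
                off = lp->next;
                at_chain_start = false;
                continue;
            }
            break;
        }

        if (at_chain_start && lp->heap_only)
            break;                      /* a root must not be heap-only */
        if (prev_xmax != 0 && lp->xmin != prev_xmax)
            break;                      /* chain is broken */

        if (!skip && lp->visible)
        {
            *tid_off = off;
            return true;
        }
        skip = false;

        if (!lp->hot_updated)
            break;                      /* end of chain */
        off = lp->next;
        at_chain_start = false;
        prev_xmax = lp->xmax;
    }
    return false;
}
```

Calling the function repeatedly with `first_call` set to false and the previously returned offset enumerates the remaining visible members of the chain one at a time, which mirrors how a caller would resume after a returned tuple.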

void heap_inplace_update(Relation relation, HeapTuple tuple)

Definition at line 4968 of file heapam.c.

References XLogRecData::buffer, BUFFER_LOCK_EXCLUSIVE, XLogRecData::buffer_std, BufferGetPage, CacheInvalidateHeapTuple(), XLogRecData::data, elog, END_CRIT_SECTION, ERROR, IsBootstrapProcessingMode, ItemIdGetLength, ItemIdIsNormal, ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, XLogRecData::len, LockBuffer(), MarkBufferDirty(), XLogRecData::next, xl_heaptid::node, PageGetItem, PageGetItemId, PageGetMaxOffsetNumber, PageSetLSN, RelationData::rd_node, ReadBuffer(), RelationNeedsWAL, START_CRIT_SECTION, HeapTupleData::t_data, HeapTupleHeaderData::t_hoff, HeapTupleData::t_len, HeapTupleData::t_self, xl_heap_inplace::target, xl_heaptid::tid, UnlockReleaseBuffer(), XLOG_HEAP_INPLACE, and XLogInsert().

Referenced by create_toast_table(), index_set_state_flags(), index_update_stats(), vac_update_datfrozenxid(), and vac_update_relstats().

{

Buffer buffer;

Page page;

OffsetNumber offnum;

ItemId lp = NULL;

HeapTupleHeader htup;

uint32 oldlen;

uint32 newlen;

buffer = ReadBuffer(relation, ItemPointerGetBlockNumber(&(tuple->t_self)));

LockBuffer(buffer, BUFFER_LOCK_EXCLUSIVE);

page = (Page) BufferGetPage(buffer);

offnum = ItemPointerGetOffsetNumber(&(tuple->t_self));

if (PageGetMaxOffsetNumber(page) >= offnum)

lp = PageGetItemId(page, offnum);

if (PageGetMaxOffsetNumber(page) < offnum || !ItemIdIsNormal(lp))

elog(ERROR, "heap_inplace_update: invalid lp");

htup = (HeapTupleHeader) PageGetItem(page, lp);

oldlen = ItemIdGetLength(lp) - htup->t_hoff;

newlen = tuple->t_len - tuple->t_data->t_hoff;

if (oldlen != newlen || htup->t_hoff != tuple->t_data->t_hoff)

elog(ERROR, "heap_inplace_update: wrong tuple length");

/* NO EREPORT(ERROR) from here till changes are logged */

START_CRIT_SECTION();

memcpy((char *) htup + htup->t_hoff,

(char *) tuple->t_data + tuple->t_data->t_hoff,

newlen);

MarkBufferDirty(buffer);

/* XLOG stuff */

if (RelationNeedsWAL(relation))

{

xl_heap_inplace xlrec;

XLogRecPtr recptr;

XLogRecData rdata[2];

xlrec.target.node = relation->rd_node;

xlrec.target.tid = tuple->t_self;

rdata[0].data = (char *) &xlrec;

rdata[0].len = SizeOfHeapInplace;

rdata[0].buffer = InvalidBuffer;

rdata[0].next = &(rdata[1]);

rdata[1].data = (char *) htup + htup->t_hoff;

rdata[1].len = newlen;

rdata[1].buffer = buffer;

rdata[1].buffer_std = true;

rdata[1].next = NULL;

recptr = XLogInsert(RM_HEAP_ID, XLOG_HEAP_INPLACE, rdata);

PageSetLSN(page, recptr);

}

END_CRIT_SECTION();

UnlockReleaseBuffer(buffer);

/*

* Send out shared cache inval if necessary. Note that because we only

* pass the new version of the tuple, this mustn't be used for any

* operations that could change catcache lookup keys. But we aren't

* bothering with index updates either, so that's true a fortiori.

*/

if (!IsBootstrapProcessingMode())

CacheInvalidateHeapTuple(relation, tuple, NULL);

}
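
The length checks above are what make an in-place overwrite safe: only the data area after the header is copied, and only when both the header size and the data length match exactly. A minimal sketch of that rule follows, using a hypothetical `ToyStoredTuple` type and `toy_inplace_update` function invented for this example. Where the real code raises an error, the toy returns `false`, and WAL logging and cache invalidation are not modeled.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical flat tuple image: 'hoff' header bytes followed by data. */
typedef struct ToyStoredTuple
{
    size_t        hoff;         /* header length in bytes */
    size_t        len;          /* total length, header included */
    unsigned char bytes[64];
} ToyStoredTuple;

/*
 * Overwrite the data area of 'stored' with that of 'newtup'. Returns false
 * and leaves 'stored' untouched if header size or data length differ.
 */
static bool
toy_inplace_update(ToyStoredTuple *stored, const ToyStoredTuple *newtup)
{
    size_t oldlen;
    size_t newlen;

    assert(stored->hoff <= stored->len && stored->len <= sizeof(stored->bytes));
    assert(newtup->hoff <= newtup->len && newtup->len <= sizeof(newtup->bytes));

    oldlen = stored->len - stored->hoff;
    newlen = newtup->len - newtup->hoff;

    if (oldlen != newlen || stored->hoff != newtup->hoff)
        return false;

    /* The header of the stored tuple is deliberately left as it was. */
    memcpy(stored->bytes + stored->hoff,
           newtup->bytes + newtup->hoff,
           newlen);
    return true;
}
```

Because the stored header is never touched, transaction-visibility fields stay as they were, which is consistent with the function being used for non-transactional updates of fixed-width catalog fields.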

Oid heap_insert(Relation relation, HeapTuple tup, CommandId cid, int options, BulkInsertState bistate)

Definition at line 2012 of file heapam.c.

References xl_heap_insert::all_visible_cleared, XLogRecData::buffer, XLogRecData::buffer_std, BufferGetPage, CacheInvalidateHeapTuple(), CheckForSerializableConflictIn(), XLogRecData::data, END_CRIT_SECTION, FirstOffsetNumber, GetCurrentTransactionId(), heap_freetuple(), HEAP_INSERT_SKIP_WAL, heap_prepare_insert(), HeapTupleGetOid, InvalidBuffer, ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, XLogRecData::len, MarkBufferDirty(), XLogRecData::next, xl_heaptid::node, offsetof, PageClearAllVisible, PageGetMaxOffsetNumber, PageIsAllVisible, PageSetLSN, pgstat_count_heap_insert(), RelationData::rd_node, RelationGetBufferForTuple(), RelationNeedsWAL, RelationPutHeapTuple(), ReleaseBuffer(), START_CRIT_SECTION, HeapTupleData::t_data, HeapTupleHeaderData::t_hoff, xl_heap_header::t_hoff, HeapTupleHeaderData::t_infomask, xl_heap_header::t_infomask, HeapTupleHeaderData::t_infomask2, xl_heap_header::t_infomask2, HeapTupleData::t_len, HeapTupleData::t_self, xl_heap_insert::target, xl_heaptid::tid, UnlockReleaseBuffer(), visibilitymap_clear(), and XLogInsert().

Referenced by ATRewriteTable(), CopyFrom(), ExecInsert(), intorel_receive(), simple_heap_insert(), toast_save_datum(), and transientrel_receive().

{

TransactionId xid = GetCurrentTransactionId();

HeapTuple heaptup;

Buffer buffer;

Buffer vmbuffer = InvalidBuffer;

bool all_visible_cleared = false;

/*

* Fill in tuple header fields, assign an OID, and toast the tuple if

* necessary.

*

* Note: below this point, heaptup is the data we actually intend to store

* into the relation; tup is the caller's original untoasted data.

*/

heaptup = heap_prepare_insert(relation, tup, xid, cid, options);

/*

* We're about to do the actual insert -- but check for conflict first, to

* avoid possibly having to roll back work we've just done.

*

* For a heap insert, we only need to check for table-level SSI locks. Our

* new tuple can't possibly conflict with existing tuple locks, and heap

* page locks are only consolidated versions of tuple locks; they do not

* lock "gaps" as index page locks do. So we don't need to identify a

* buffer before making the call.

*/

CheckForSerializableConflictIn(relation, NULL, InvalidBuffer);

/*

* Find buffer to insert this tuple into. If the page is all visible,

* this will also pin the requisite visibility map page.

*/

buffer = RelationGetBufferForTuple(relation, heaptup->t_len,

InvalidBuffer, options, bistate,

&vmbuffer, NULL);

/* NO EREPORT(ERROR) from here till changes are logged */

START_CRIT_SECTION();

RelationPutHeapTuple(relation, buffer, heaptup);

if (PageIsAllVisible(BufferGetPage(buffer)))

{

all_visible_cleared = true;

PageClearAllVisible(BufferGetPage(buffer));

visibilitymap_clear(relation,

ItemPointerGetBlockNumber(&(heaptup->t_self)),

vmbuffer);

}

/*

* XXX Should we set PageSetPrunable on this page ?

*

* The inserting transaction may eventually abort thus making this tuple

* DEAD and hence available for pruning. Though we don't want to optimize

* for aborts, if no other tuple in this page is UPDATEd/DELETEd, the

* aborted tuple will never be pruned until next vacuum is triggered.

*

* If you do add PageSetPrunable here, add it in heap_xlog_insert too.

*/

MarkBufferDirty(buffer);

/* XLOG stuff */

if (!(options & HEAP_INSERT_SKIP_WAL) && RelationNeedsWAL(relation))

{

xl_heap_insert xlrec;

xl_heap_header xlhdr;

XLogRecPtr recptr;

XLogRecData rdata[3];

Page page = BufferGetPage(buffer);

uint8 info = XLOG_HEAP_INSERT;

xlrec.all_visible_cleared = all_visible_cleared;

xlrec.target.node = relation->rd_node;

xlrec.target.tid = heaptup->t_self;

rdata[0].data = (char *) &xlrec;

rdata[0].len = SizeOfHeapInsert;

rdata[0].buffer = InvalidBuffer;

rdata[0].next = &(rdata[1]);

xlhdr.t_infomask2 = heaptup->t_data->t_infomask2;

xlhdr.t_infomask = heaptup->t_data->t_infomask;

xlhdr.t_hoff = heaptup->t_data->t_hoff;

/*

* note we mark rdata[1] as belonging to buffer; if XLogInsert decides

* to write the whole page to the xlog, we don't need to store

* xl_heap_header in the xlog.

*/

rdata[1].data = (char *) &xlhdr;

rdata[1].len = SizeOfHeapHeader;

rdata[1].buffer = buffer;

rdata[1].buffer_std = true;

rdata[1].next = &(rdata[2]);

/* PG73FORMAT: write bitmap [+ padding] [+ oid] + data */

rdata[2].data = (char *) heaptup->t_data + offsetof(HeapTupleHeaderData, t_bits);

rdata[2].len = heaptup->t_len - offsetof(HeapTupleHeaderData, t_bits);

rdata[2].buffer = buffer;

rdata[2].buffer_std = true;

rdata[2].next = NULL;

/*

* If this is the single and first tuple on page, we can reinit the

* page instead of restoring the whole thing. Set flag, and hide

* buffer references from XLogInsert.

*/

if (ItemPointerGetOffsetNumber(&(heaptup->t_self)) == FirstOffsetNumber &&

PageGetMaxOffsetNumber(page) == FirstOffsetNumber)

{

info |= XLOG_HEAP_INIT_PAGE;

rdata[1].buffer = rdata[2].buffer = InvalidBuffer;

}

recptr = XLogInsert(RM_HEAP_ID, info, rdata);

PageSetLSN(page, recptr);

}

END_CRIT_SECTION();

UnlockReleaseBuffer(buffer);

if (vmbuffer != InvalidBuffer)

ReleaseBuffer(vmbuffer);

/*

* If tuple is cacheable, mark it for invalidation from the caches in case

* we abort. Note it is OK to do this after releasing the buffer, because

* the heaptup data structure is all in local memory, not in the shared

* buffer.

*/

CacheInvalidateHeapTuple(relation, heaptup, NULL);

pgstat_count_heap_insert(relation, 1);

/*

* If heaptup is a private copy, release it. Don't forget to copy t_self

* back to the caller's image, too.

*/

if (heaptup != tup)

{

tup->t_self = heaptup->t_self;

heap_freetuple(heaptup);

}

return HeapTupleGetOid(tup);

}
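
The comments in the XLOG section describe two cases: segments marked as belonging to a buffer need not be stored if the whole page is written to the log, and the init-page case hides the buffer references so the segments are always stored. The sketch below models only that bookkeeping. `ToyRecData` and `toy_payload_length` are hypothetical names for this example, and the single `full_page_image` flag is a simplifying assumption about what the log-insertion routine decides; the real `XLogRecData` and `XLogInsert()` carry considerably more state.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical, reduced version of a chained record segment. */
typedef struct ToyRecData
{
    size_t             len;             /* bytes in this segment */
    bool               buffer_backed;   /* segment is tied to a data page */
    struct ToyRecData *next;            /* next segment, or NULL */
} ToyRecData;

/*
 * Sum the bytes that would be stored for the record. Buffer-backed
 * segments are dropped when a full-page image already covers them.
 */
static size_t
toy_payload_length(const ToyRecData *rdata, bool full_page_image)
{
    size_t total = 0;

    assert(rdata != NULL);      /* a record has at least one segment */

    for (; rdata != NULL; rdata = rdata->next)
    {
        if (rdata->buffer_backed && full_page_image)
            continue;           /* page image makes this segment redundant */
        total += rdata->len;
    }
    return total;
}
```

Clearing `buffer_backed` on the header and tuple segments corresponds to the `XLOG_HEAP_INIT_PAGE` branch, where the buffer references are set to `InvalidBuffer` so that the tuple data is always part of the record.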

HTSU_Result heap_lock_tuple(Relation relation, HeapTuple tuple, CommandId cid, LockTupleMode mode, bool nowait, bool follow_updates, Buffer *buffer, HeapUpdateFailureData *hufd)

Definition at line 3895 of file heapam.c.

References Assert, XLogRecData::buffer, BUFFER_LOCK_EXCLUSIVE, BUFFER_LOCK_UNLOCK, XLogRecData::buffer_std, BufferGetPage, HeapUpdateFailureData::cmax, compute_infobits(), compute_new_xmax_infomask(), ConditionalLockTupleTuplock, ConditionalMultiXactIdWait(), ConditionalXactLockTableWait(), HeapUpdateFailureData::ctid, XLogRecData::data, elog, END_CRIT_SECTION, ereport, errcode(), errmsg(), ERROR, get_mxact_status_for_lock(), GetCurrentTransactionId(), GetMultiXactIdMembers(), HEAP_KEYS_UPDATED, heap_lock_updated_tuple(), HEAP_XMAX_COMMITTED, HEAP_XMAX_INVALID, HEAP_XMAX_IS_EXCL_LOCKED, HEAP_XMAX_IS_KEYSHR_LOCKED, HEAP_XMAX_IS_LOCKED_ONLY, HEAP_XMAX_IS_MULTI, HEAP_XMAX_IS_SHR_LOCKED, HeapTupleBeingUpdated, HeapTupleHeaderClearHotUpdated, HeapTupleHeaderGetCmax(), HeapTupleHeaderGetRawXmax, HeapTupleHeaderGetUpdateXid, HeapTupleHeaderIsOnlyLocked(), HeapTupleHeaderSetXmax, HeapTupleInvisible, HeapTupleMayBeUpdated, HeapTupleSatisfiesUpdate(), HeapTupleSelfUpdated, HeapTupleUpdated, i, xl_heap_lock::infobits_set, ItemIdGetLength, ItemIdIsNormal, ItemPointerCopy, ItemPointerGetBlockNumber, ItemPointerGetOffsetNumber, XLogRecData::len, LockBuffer(), xl_heap_lock::locking_xid, LockTupleKeyShare, LockTupleNoKeyExclusive, LockTupleShare, LockTupleTuplock, MarkBufferDirty(), MultiXactIdSetOldestMember(), MultiXactIdWait(), MultiXactStatusForKeyShare, MultiXactStatusNoKeyUpdate, XLogRecData::next, xl_heaptid::node, PageGetItem, PageGetItemId, PageSetLSN, pfree(), RelationData::rd_node, ReadBuffer(), RelationGetRelationName, RelationGetRelid, RelationNeedsWAL, START_CRIT_SECTION, status(), HeapTupleHeaderData::t_ctid, HeapTupleData::t_data, HeapTupleHeaderData::t_infomask, HeapTupleHeaderData::t_infomask2, HeapTupleData::t_len, HeapTupleData::t_self, HeapTupleData::t_tableOid, xl_heap_lock::target, xl_heaptid::tid, TransactionIdEquals, TransactionIdIsCurrentTransactionId(), TUPLOCK_from_mxstatus, UnlockReleaseBuffer(), UnlockTupleTuplock, UpdateXmaxHintBits(), 
XactLockTableWait(), XLOG_HEAP_LOCK, XLogInsert(), and HeapUpdateFailureData::xmax.

Referenced by EvalPlanQualFetch(), ExecLockRows(), and GetTupleForTrigger().

{

HTSU_Result result;

ItemPointer tid = &(tuple->t_self);

ItemId lp;

Page page;

TransactionId xid,

xmax;

uint16 old_infomask,

new_infomask,

new_infomask2;

bool have_tuple_lock = false;

*buffer = ReadBuffer(relation, ItemPointerGetBlockNumber(tid));

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

page = BufferGetPage(*buffer);

lp = PageGetItemId(page, ItemPointerGetOffsetNumber(tid));

Assert(ItemIdIsNormal(lp));

tuple->t_data = (HeapTupleHeader) PageGetItem(page, lp);

tuple->t_len = ItemIdGetLength(lp);

tuple->t_tableOid = RelationGetRelid(relation);

l3:

result = HeapTupleSatisfiesUpdate(tuple->t_data, cid, *buffer);

if (result == HeapTupleInvisible)

{

UnlockReleaseBuffer(*buffer);

elog(ERROR, "attempted to lock invisible tuple");

}

else if (result == HeapTupleBeingUpdated)

{

TransactionId xwait;

uint16 infomask;

uint16 infomask2;

bool require_sleep;

ItemPointerData t_ctid;

/* must copy state data before unlocking buffer */

xwait = HeapTupleHeaderGetRawXmax(tuple->t_data);

infomask = tuple->t_data->t_infomask;

infomask2 = tuple->t_data->t_infomask2;

ItemPointerCopy(&tuple->t_data->t_ctid, &t_ctid);

LockBuffer(*buffer, BUFFER_LOCK_UNLOCK);

/*

* If any subtransaction of the current top transaction already holds a

* lock as strong or stronger than what we're requesting, we

* effectively hold the desired lock already. We *must* succeed

* without trying to take the tuple lock, else we will deadlock against

* anyone wanting to acquire a stronger lock.

*/

if (infomask & HEAP_XMAX_IS_MULTI)

{

int i;

int nmembers;

MultiXactMember *members;

/*

* We don't need to allow old multixacts here; if that had been the

* case, HeapTupleSatisfiesUpdate would have returned MayBeUpdated

* and we wouldn't be here.

*/

nmembers = GetMultiXactIdMembers(xwait, &members, false);

for (i = 0; i < nmembers; i++)

{

if (TransactionIdIsCurrentTransactionId(members[i].xid))

{

LockTupleMode membermode;

membermode = TUPLOCK_from_mxstatus(members[i].status);

if (membermode >= mode)

{

if (have_tuple_lock)

UnlockTupleTuplock(relation, tid, mode);

pfree(members);

return HeapTupleMayBeUpdated;

}

}

}

pfree(members);

}

/*

* Acquire tuple lock to establish our priority for the tuple.

* LockTuple will release us when we are next-in-line for the tuple.

* We must do this even if we are share-locking.

*

* If we are forced to "start over" below, we keep the tuple lock;

* this arranges that we stay at the head of the line while rechecking

* tuple state.

*/

if (!have_tuple_lock)

{

if (nowait)

{

if (!ConditionalLockTupleTuplock(relation, tid, mode))

ereport(ERROR,

(errcode(ERRCODE_LOCK_NOT_AVAILABLE),

errmsg("could not obtain lock on row in relation \"%s\"",

RelationGetRelationName(relation))));

}

else

LockTupleTuplock(relation, tid, mode);

have_tuple_lock = true;

}

/*

* Initially assume that we will have to wait for the locking

* transaction(s) to finish. We check various cases below in which

* this can be turned off.

*/

require_sleep = true;

if (mode == LockTupleKeyShare)

{

/*

* If we're requesting KeyShare, and there's no update present, we

* don't need to wait. Even if there is an update, we can still

* continue if the key hasn't been modified.

*

* However, if there are updates, we need to walk the update chain

* to mark future versions of the row as locked, too. That way, if

* somebody deletes that future version, we're protected against

* the key going away. This locking of future versions could block

* momentarily, if a concurrent transaction is deleting a key; or

* it could return a value to the effect that the transaction

* deleting the key has already committed. So we do this before

* re-locking the buffer; otherwise this would be prone to

* deadlocks.

*

* Note that the TID we're locking was grabbed before we unlocked

* the buffer. For it to change while we're not looking, the other

* properties we're testing for below after re-locking the buffer

* would also change, in which case we would restart this loop

* above.

*/

if (!(infomask2 & HEAP_KEYS_UPDATED))

{

bool updated;

updated = !HEAP_XMAX_IS_LOCKED_ONLY(infomask);

/*

* If there are updates, follow the update chain; bail out

* if that cannot be done.

*/

if (follow_updates && updated)

{

HTSU_Result res;

res = heap_lock_updated_tuple(relation, tuple, &t_ctid,

GetCurrentTransactionId(),

mode);

if (res != HeapTupleMayBeUpdated)

{

result = res;

/* recovery code expects to have buffer lock held */

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

goto failed;

}

}

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

/*

* Make sure it's still an appropriate lock, else start over.

* Also, if it wasn't updated before we released the lock, but

* is updated now, we start over too; the reason is that we now

* need to follow the update chain to lock the new versions.

*/

if (!HeapTupleHeaderIsOnlyLocked(tuple->t_data) &&

((tuple->t_data->t_infomask2 & HEAP_KEYS_UPDATED) ||

!updated))

goto l3;

/* Things look okay, so we can skip sleeping */

require_sleep = false;

/*

* Note we allow Xmax to change here; other updaters/lockers

* could have modified it before we grabbed the buffer lock.

* However, this is not a problem, because with the recheck we

* just did we ensure that they still don't conflict with the

* lock we want.

*/

}

}

else if (mode == LockTupleShare)

{

/*

* If we're requesting Share, we can similarly avoid sleeping if

* there's no update and no exclusive lock present.

*/

if (HEAP_XMAX_IS_LOCKED_ONLY(infomask) &&

!HEAP_XMAX_IS_EXCL_LOCKED(infomask))

{

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

/*

* Make sure it's still an appropriate lock, else start over.

* See above about allowing xmax to change.

*/

if (!HEAP_XMAX_IS_LOCKED_ONLY(tuple->t_data->t_infomask) ||

HEAP_XMAX_IS_EXCL_LOCKED(tuple->t_data->t_infomask))

goto l3;

require_sleep = false;

}

}

else if (mode == LockTupleNoKeyExclusive)

{

/*

* If we're requesting NoKeyExclusive, we might also be able to

* avoid sleeping; just ensure that there's no other lock type than

* KeyShare. Note that this is a bit more involved than just

* checking hint bits -- we need to expand the multixact to figure

* out lock modes for each one (unless there was only one such

* locker).

*/

if (infomask & HEAP_XMAX_IS_MULTI)

{

int nmembers;

MultiXactMember *members;

/*

* We don't need to allow old multixacts here; if that had been

* the case, HeapTupleSatisfiesUpdate would have returned

* MayBeUpdated and we wouldn't be here.

*/

nmembers = GetMultiXactIdMembers(xwait, &members, false);

if (nmembers <= 0)

{

/*

* No need to keep the previous xmax here. This is unlikely

* to happen.

*/

require_sleep = false;

}

else

{

int i;

bool allowed = true;

for (i = 0; i < nmembers; i++)

{

if (members[i].status != MultiXactStatusForKeyShare)

{

allowed = false;

break;

}

}

if (allowed)

{

/*

* if the xmax changed under us in the meantime, start

* over.

*/

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

if (!(tuple->t_data->t_infomask & HEAP_XMAX_IS_MULTI) ||

!TransactionIdEquals(HeapTupleHeaderGetRawXmax(tuple->t_data),

xwait))

{

pfree(members);

goto l3;

}

/* otherwise, we're good */

require_sleep = false;

}

pfree(members);

}

}

else if (HEAP_XMAX_IS_KEYSHR_LOCKED(infomask))

{

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

/* if the xmax changed in the meantime, start over */

if ((tuple->t_data->t_infomask & HEAP_XMAX_IS_MULTI) ||

!TransactionIdEquals(HeapTupleHeaderGetRawXmax(tuple->t_data),

xwait))

goto l3;

/* otherwise, we're good */

require_sleep = false;

}

}

/*

* By here, we either have already acquired the buffer exclusive lock,

* or we must wait for the locking transaction or multixact; so below

* we ensure that we grab buffer lock after the sleep.

*/

if (require_sleep)

{

if (infomask & HEAP_XMAX_IS_MULTI)

{

MultiXactStatus status = get_mxact_status_for_lock(mode, false);

/* We only ever lock tuples, never update them */

if (status >= MultiXactStatusNoKeyUpdate)

elog(ERROR, "invalid lock mode in heap_lock_tuple");

/* wait for multixact to end */

if (nowait)

{

if (!ConditionalMultiXactIdWait((MultiXactId) xwait,

status, NULL, infomask))

ereport(ERROR,

(errcode(ERRCODE_LOCK_NOT_AVAILABLE),

errmsg("could not obtain lock on row in relation \"%s\"",

RelationGetRelationName(relation))));

}

else

MultiXactIdWait((MultiXactId) xwait, status, NULL, infomask);

/* if there are updates, follow the update chain */

if (follow_updates &&

!HEAP_XMAX_IS_LOCKED_ONLY(infomask))

{

HTSU_Result res;

res = heap_lock_updated_tuple(relation, tuple, &t_ctid,

GetCurrentTransactionId(),

mode);

if (res != HeapTupleMayBeUpdated)

{

result = res;

/* recovery code expects to have buffer lock held */

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

goto failed;

}

}

LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

/*

* If xwait had just locked the tuple then some other xact

* could update this tuple before we get to this point. Check

* for xmax change, and start over if so.

*/

if (!(tuple->t_data->t_infomask & HEAP_XMAX_IS_MULTI) ||

!TransactionIdEquals(HeapTupleHeaderGetRawXmax(tuple->t_data),

xwait))

goto l3;

/*

* Of course, the multixact might not be done here: if we're

* requesting a light lock mode, other transactions with light

* locks could still be alive, as well as locks owned by our

* own xact or other subxacts of this backend. We need to

* preserve the surviving MultiXact members. Note that it

* isn't absolutely necessary in the latter case, but doing so

* is simpler.

*/

}

            else
            {
                /* wait for regular transaction to end */
                if (nowait)
                {
                    if (!ConditionalXactLockTableWait(xwait))
                        ereport(ERROR,
                                (errcode(ERRCODE_LOCK_NOT_AVAILABLE),
                                 errmsg("could not obtain lock on row in relation \"%s\"",
                                        RelationGetRelationName(relation))));
                }
                else
                    XactLockTableWait(xwait);

                /* if there are updates, follow the update chain */
                if (follow_updates &&
                    !HEAP_XMAX_IS_LOCKED_ONLY(infomask))
                {
                    HTSU_Result res;

                    res = heap_lock_updated_tuple(relation, tuple, &t_ctid,
                                                  GetCurrentTransactionId(),
                                                  mode);
                    if (res != HeapTupleMayBeUpdated)
                    {
                        result = res;
                        /* recovery code expects to have buffer lock held */
                        LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);
                        goto failed;
                    }
                }

                LockBuffer(*buffer, BUFFER_LOCK_EXCLUSIVE);

                /*
                 * xwait is done, but if xwait had just locked the tuple then
                 * some other xact could update this tuple before we get to
                 * this point. Check for xmax change, and start over if so.
                 */
                if ((tuple->t_data->t_infomask & HEAP_XMAX_IS_MULTI) ||
                    !TransactionIdEquals(HeapTupleHeaderGetRawXmax(tuple->t_data),
                                         xwait))
                    goto l3;

                /*
                 * Otherwise check if it committed or aborted. Note we cannot
                 * be here if the tuple was only locked by somebody who didn't
                 * conflict with us; that should have been handled above. So
                 * that transaction must necessarily be gone by now.
                 */
                UpdateXmaxHintBits(tuple->t_data, *buffer, xwait);
            }
        }

        /* By here, we're certain that we hold buffer exclusive lock again */

        /*
         * We may lock if previous xmax aborted, or if it committed but only
         * locked the tuple without updating it; or if we didn't have to wait
         * at all for whatever reason.
         */
        if (!require_sleep ||
            (tuple->t_data->t_infomask & HEAP_XMAX_INVALID) ||
            HEAP_XMAX_IS_LOCKED_ONLY(tuple->t_data->t_infomask) ||
            HeapTupleHeaderIsOnlyLocked(tuple->t_data))
            result = HeapTupleMayBeUpdated;
        else
            result = HeapTupleUpdated;
    }

failed:
    if (result != HeapTupleMayBeUpdated)
    {
        Assert(result == HeapTupleSelfUpdated || result == HeapTupleUpdated);
        Assert(!(tuple->t_data->t_infomask & HEAP_XMAX_INVALID));
        hufd->ctid = tuple->t_data->t_ctid;
        hufd->xmax = HeapTupleHeaderGetUpdateXid(tuple->t_data);
        if (result == HeapTupleSelfUpdated)
            hufd->cmax = HeapTupleHeaderGetCmax(tuple->t_data);
        else
            hufd->cmax = 0;     /* for lack of an InvalidCommandId value */
        LockBuffer(*buffer, BUFFER_LOCK_UNLOCK);
        if (have_tuple_lock)
            UnlockTupleTuplock(relation, tid, mode);
        return result;
    }

    xmax = HeapTupleHeaderGetRawXmax(tuple->t_data);
    old_infomask = tuple->t_data->t_infomask;

    /*
     * We might already hold the desired lock (or stronger), possibly under a
     * different subtransaction of the current top transaction. If so, there
     * is no need to change state or issue a WAL record. We already handled
     * the case where this is true for xmax being a MultiXactId, so now check
     * for cases where it is a plain TransactionId.
     *
     * Note in particular that this covers the case where we already hold
     * exclusive lock on the tuple and the caller only wants key share or share
     * lock. It would certainly not do to give up the exclusive lock.
     */
    if (!(old_infomask & (HEAP_XMAX_INVALID |
                          HEAP_XMAX_COMMITTED |
                          HEAP_XMAX_IS_MULTI)) &&
        (mode == LockTupleKeyShare ?
         (HEAP_XMAX_IS_KEYSHR_LOCKED(old_infomask) ||
          HEAP_XMAX_IS_SHR_LOCKED(old_infomask) ||
          HEAP_XMAX_IS_EXCL_LOCKED(old_infomask)) :
         mode == LockTupleShare ?
         (HEAP_XMAX_IS_SHR_LOCKED(old_infomask) ||
          HEAP_XMAX_IS_EXCL_LOCKED(old_infomask)) :
         (HEAP_XMAX_IS_EXCL_LOCKED(old_infomask))) &&
        TransactionIdIsCurrentTransactionId(xmax))
    {
        LockBuffer(*buffer, BUFFER_LOCK_UNLOCK);
        /* Probably can't hold tuple lock here, but may as well check */
        if (have_tuple_lock)
            UnlockTupleTuplock(relation, tid, mode);
        return HeapTupleMayBeUpdated;
    }

    /*
     * If this is the first possibly-multixact-able operation in the
     * current transaction, set my per-backend OldestMemberMXactId setting.
     * We can be certain that the transaction will never become a member of
     * any older MultiXactIds than that. (We have to do this even if we
     * end up just using our own TransactionId below, since some other
     * backend could incorporate our XID into a MultiXact immediately
     * afterwards.)
     */
    MultiXactIdSetOldestMember();

    /*
     * Compute the new xmax and infomask to store into the tuple. Note we do
     * not modify the tuple just yet, because that would leave it in the wrong
     * state if multixact.c elogs.
     */
    compute_new_xmax_infomask(xmax, old_infomask, tuple->t_data->t_infomask2,
                              GetCurrentTransactionId(), mode, false,
                              &xid, &new_infomask, &new_infomask2);

    START_CRIT_SECTION();

    /*
     * Store transaction information of xact locking the tuple.
     *
     * Note: Cmax is meaningless in this context, so don't set it; this avoids
     * possibly generating a useless combo CID. Moreover, if we're locking a
     * previously updated tuple, it's important to preserve the Cmax.
     *
     * Also reset the HOT UPDATE bit, but only if there's no update; otherwise
     * we would break the HOT chain.
     */
    tuple->t_data->t_infomask &= ~HEAP_XMAX_BITS;
    tuple->t_data->t_infomask2 &= ~HEAP_KEYS_UPDATED;
    tuple->t_data->t_infomask |= new_infomask;
    tuple->t_data->t_infomask2 |= new_infomask2;
    if (HEAP_XMAX_IS_LOCKED_ONLY(new_infomask))
        HeapTupleHeaderClearHotUpdated(tuple->t_data);
    HeapTupleHeaderSetXmax(tuple->t_data, xid);

    /*
     * Make sure there is no forward chain link in t_ctid. Note that in the
     * cases where the tuple has been updated, we must not overwrite t_ctid,
     * because it was set by the updater. Moreover, if the tuple has been
     * updated, we need to follow the update chain to lock the new versions
     * of the tuple as well.
     */
    if (HEAP_XMAX_IS_LOCKED_ONLY(new_infomask))
        tuple->t_data->t_ctid = *tid;

    MarkBufferDirty(*buffer);

    /*
     * XLOG stuff. You might think that we don't need an XLOG record because
     * there is no state change worth restoring after a crash. You would be
     * wrong however: we have just written either a TransactionId or a
     * MultiXactId that may never have been seen on disk before, and we need
     * to make sure that there are XLOG entries covering those ID numbers.
     * Else the same IDs might be re-used after a crash, which would be
     * disastrous if this page made it to disk before the crash. Essentially
     * we have to enforce the WAL log-before-data rule even in this case.
     * (Also, in a PITR log-shipping or 2PC environment, we have to have XLOG
     * entries for everything anyway.)
     */
    if (RelationNeedsWAL(relation))
    {
        xl_heap_lock xlrec;
        XLogRecPtr  recptr;
        XLogRecData rdata[2];

        xlrec.target.node = relation->rd_node;
        xlrec.target.tid = tuple->t_self;
        xlrec.locking_xid = xid;
        xlrec.infobits_set = compute_infobits(new_infomask,
                                              tuple->t_data->t_infomask2);
        rdata[0].data = (char *) &xlrec;
        rdata[0].len = SizeOfHeapLock;
        rdata[0].buffer = InvalidBuffer;
        rdata[0].next = &(rdata[1]);

        rdata[1].data = NULL;
        rdata[1].len = 0;
        rdata[1].buffer = *buffer;
        rdata[1].buffer_std = true;
        rdata[1].next = NULL;

        recptr = XLogInsert(RM_HEAP_ID, XLOG_HEAP_LOCK, rdata);

        PageSetLSN(page, recptr);
    }

    END_CRIT_SECTION();

    LockBuffer(*buffer, BUFFER_LOCK_UNLOCK);

    /*
     * Don't update the visibility map here. Locking a tuple doesn't change
     * visibility info.
     */

    /*
     * Now that we have successfully marked the tuple as locked, we can
     * release the lmgr tuple lock, if we had it.
     */
    if (have_tuple_lock)
        UnlockTupleTuplock(relation, tid, mode);

    return HeapTupleMayBeUpdated;
}
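
The "already hold the desired lock (or stronger)" test in the listing above is a nested conditional over the requested mode, which can be hard to read at a glance. The following is a minimal standalone sketch of the same ordering idea, not the PostgreSQL implementation: the enum, the flag bits, and `sketch_already_holds()` are hypothetical names invented for illustration, the bit values are not the real `t_infomask` layout, and the sketch leaves out the other conditions the real check applies (that xmax is a plain, uncommitted, valid TransactionId belonging to the current transaction).

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical stand-ins for the three cases the listing distinguishes. */
typedef enum
{
    SKETCH_LOCK_KEY_SHARE,
    SKETCH_LOCK_SHARE,
    SKETCH_LOCK_EXCLUSIVE
} SketchLockMode;

/* Hypothetical flag bits; these are NOT the real t_infomask values. */
#define SKETCH_KEYSHR_LOCKED 0x01u
#define SKETCH_SHR_LOCKED    0x02u
#define SKETCH_EXCL_LOCKED   0x04u

/*
 * Return true if the lock bits already held satisfy the wanted mode,
 * i.e. the held lock is the same strength or stronger.
 */
static bool
sketch_already_holds(unsigned held_bits, SketchLockMode wanted)
{
    switch (wanted)
    {
        case SKETCH_LOCK_KEY_SHARE:
            /* any of the three held locks is at least as strong */
            return (held_bits & (SKETCH_KEYSHR_LOCKED |
                                 SKETCH_SHR_LOCKED |
                                 SKETCH_EXCL_LOCKED)) != 0;
        case SKETCH_LOCK_SHARE:
            /* share or exclusive suffices; key share does not */
            return (held_bits & (SKETCH_SHR_LOCKED |
                                 SKETCH_EXCL_LOCKED)) != 0;
        case SKETCH_LOCK_EXCLUSIVE:
            /* only an exclusive lock suffices */
            return (held_bits & SKETCH_EXCL_LOCKED) != 0;
    }
    assert(!"unknown lock mode");
    return false;
}
```

With these assumptions, a holder of the exclusive bit satisfies a key share request, while a holder of only the key share bit does not satisfy a share request. That matches the comment's point that an existing exclusive lock must not be given up when a weaker lock is requested.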

void heap_markpos(HeapScanDesc scan)

Definition at line 5486 of file heapam.c.

References ItemPointerSetInvalid, HeapScanDescData::rs_cindex, HeapScanDescData::rs_ctup, HeapScanDescData::rs_mctid, HeapScanDescData::rs_mindex, HeapScanDescData::rs_pageatatime, HeapTupleData::t_data, and HeapTupleData::t_self.

Referenced by ExecSeqMarkPos().
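
Judging only from the fields listed under References, marking a scan position presumably means copying the current tuple's identifier (and, in page-at-a-time mode, the current index) into the "mark" fields, or invalidating the mark when there is no current tuple. The sketch below illustrates that reading with a toy structure. `SketchTid`, `SketchScan`, and `sketch_markpos()` are hypothetical names, and the behavior is an assumption inferred from the field names rather than taken from `heapam.c`.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical tuple identifier: block number, offset, validity flag. */
typedef struct
{
    unsigned block;
    unsigned offset;
    bool     valid;
} SketchTid;

/* Toy scan state; the comments name the fields each member imitates. */
typedef struct
{
    const int *cur_data;        /* NULL if no current tuple (cf. rs_ctup.t_data) */
    SketchTid  cur_tid;         /* cf. rs_ctup.t_self */
    int        cur_index;       /* cf. rs_cindex */
    SketchTid  mark_tid;        /* cf. rs_mctid */
    int        mark_index;      /* cf. rs_mindex */
    bool       page_at_a_time;  /* cf. rs_pageatatime */
} SketchScan;

/* Remember the current position, or invalidate the mark if there is none. */
static void
sketch_markpos(SketchScan *scan)
{
    assert(scan != NULL);

    if (scan->cur_data != NULL)
    {
        scan->mark_tid = scan->cur_tid;
        if (scan->page_at_a_time)
            scan->mark_index = scan->cur_index;
    }
    else
        scan->mark_tid.valid = false;   /* cf. ItemPointerSetInvalid */
}
```

A matching restore step would read the mark fields back into the current-position fields; it is omitted here because nothing in this entry describes it.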

| void heap_multi_insert | ( | Relation | relation, | |

| HeapTuple * | tuples, | |||

| int | ntuples, | |||

| CommandId | cid, | |||

| int | options, | |||

| BulkInsertState | bistate | |||