- AggregateInstanceExtraSpecsFilter

- AggregateMultiTenancyIsolation

- AllHostsFilter

- AvailabilityZoneFilter

- ComputeCapabilitiesFilter

- ComputeFilter

- CoreFilter

- DifferentHostFilter

- DiskFilter

- GroupAntiAffinityFilter

- ImagePropertiesFilter

- IsolatedHostsFilter

- JsonFilter

- RamFilter

- RetryFilter

- SameHostFilter

- SimpleCIDRAffinityFilter

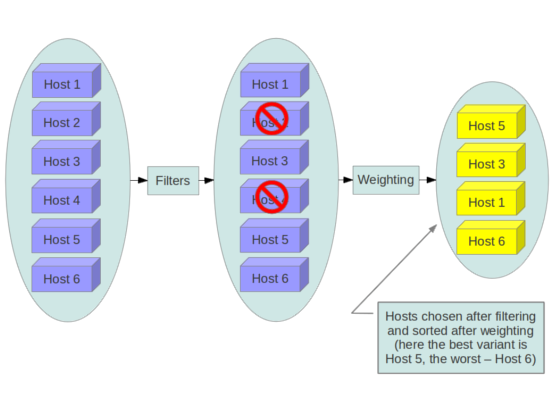

When the Filter Scheduler receives a request for a resource, it first applies filters to determine which hosts are eligible for consideration when dispatching a resource. Filters are binary: either a host is accepted by the filter, or it is rejected. Hosts that are accepted by the filter are then processed by a different algorithm to decide which hosts to use for that request, described in the Weights section.
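The accept-or-reject behaviour described above can be sketched in a few lines of Python. This is only an illustration, not the Compute service's code: the Host record, the two predicate functions, and filter_hosts are hypothetical names introduced here.

```python
from collections import namedtuple

# Hypothetical host record; the real scheduler tracks far more state.
Host = namedtuple("Host", ["name", "free_ram_mb", "zone"])


def has_enough_ram(host, request):
    """Binary decision: accept the host only if it has the requested RAM."""
    return host.free_ram_mb >= request["ram_mb"]


def in_requested_zone(host, request):
    """Accept any host when no zone is requested, else require a match."""
    return request.get("zone") is None or host.zone == request["zone"]


def filter_hosts(hosts, request, filters):
    """Keep only the hosts that every filter accepts."""
    return [h for h in hosts if all(f(h, request) for f in filters)]


hosts = [
    Host("node1", 2048, "az1"),
    Host("node2", 512, "az1"),
    Host("node3", 4096, "az2"),
]
request = {"ram_mb": 1024, "zone": "az1"}
eligible = filter_hosts(hosts, request, [has_enough_ram, in_requested_zone])
print([h.name for h in eligible])
```

Only the hosts that survive every filter would then be handed to the weighting step.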

The scheduler_available_filters configuration option in nova.conf provides the Compute service with the list of the filters that will be used by the scheduler. The default setting specifies all of the filters that are included with the Compute service:

scheduler_available_filters=nova.scheduler.filters.all_filters

This configuration option can be specified multiple times. For example, if you implemented your own custom filter in Python called myfilter.MyFilter and you wanted to use both the built-in filters and your custom filter, your nova.conf file would contain:

    scheduler_available_filters=nova.scheduler.filters.all_filters
    scheduler_available_filters=myfilter.MyFilter
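As a rough idea of what a module such as myfilter might contain, here is a minimal sketch. It assumes the convention used by the built-in filters, where a filter class implements host_passes(host_state, filter_properties) and returns True or False. So that the example runs without a Compute installation, BaseHostFilter and HostState below are stand-ins defined locally; the "gpu-" naming rule is purely hypothetical.

```python
from collections import namedtuple


class BaseHostFilter(object):
    """Local stand-in (assumption): in a real deployment you would derive
    from the base filter class shipped in nova.scheduler.filters."""

    def host_passes(self, host_state, filter_properties):
        raise NotImplementedError()


# Stand-in for the scheduler's per-host state object; only 'host' is used here.
HostState = namedtuple("HostState", ["host"])


class MyFilter(BaseHostFilter):
    """Hypothetical rule: accept only hosts whose name starts with 'gpu-'."""

    def host_passes(self, host_state, filter_properties):
        return host_state.host.startswith("gpu-")


print(MyFilter().host_passes(HostState("gpu-01"), {}))
print(MyFilter().host_passes(HostState("cpu-07"), {}))
```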

The scheduler_default_filters configuration option in nova.conf defines the list of filters that will be applied by the nova-scheduler service. As mentioned above, the default filters are:

scheduler_default_filters=AvailabilityZoneFilter,RamFilter,ComputeFilter

The available filters are described below.

Matches properties defined in an instance type's extra specs against admin-defined properties on a host aggregate. See the host aggregates section for documentation on how to use this filter.

Isolates tenants to specific host aggregates.

If a host is in an aggregate that has the metadata key filter_tenant_id, it will only create instances from that tenant (or list of tenants). A host can be in different aggregates. If a host does not belong to an aggregate with the metadata key, it can create instances from all tenants.
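The rule above can be sketched as follows. This is an illustration only: tenant_allowed is a hypothetical helper, each aggregate is represented as a plain metadata dict, and the comma-separated encoding of a tenant list is an assumption, since the text does not say how a list is written.

```python
def tenant_allowed(host_aggregates, tenant_id):
    """Decide whether a host may create instances for tenant_id.

    host_aggregates holds one metadata dict per aggregate the host is in.
    """
    allowed = set()
    for metadata in host_aggregates:
        value = metadata.get("filter_tenant_id")
        if value:
            # Assumption: several tenants are written as "t1,t2".
            allowed.update(t.strip() for t in value.split(","))
    if not allowed:
        # No aggregate carries the key: the host accepts every tenant.
        return True
    return tenant_id in allowed


print(tenant_allowed([{"filter_tenant_id": "t1,t2"}], "t2"))
print(tenant_allowed([{"filter_tenant_id": "t1"}], "t3"))
print(tenant_allowed([{"other_key": "x"}], "t3"))
```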

Filters hosts by availability zone. This filter must be enabled for the scheduler to respect availability zones in requests.

Matches properties defined in an instance type's extra specs against compute capabilities.

If an extra specs key contains a colon ":", anything before the colon is treated as a namespace, and anything after the colon is treated as the key to be matched. If a namespace is present and is not 'capabilities', it is ignored by this filter.

Note: Disable the ComputeCapabilitiesFilter when using a Bare Metal configuration, due to bug 1129485.
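The namespace rule for extra specs keys can be illustrated with a small helper. The function name capability_key is hypothetical and the code is a sketch of the rule as stated in the text, not the filter's implementation.

```python
def capability_key(extra_spec_key):
    """Return the key to match, or None if the filter ignores this entry."""
    namespace, colon, key = extra_spec_key.partition(":")
    if not colon:
        # No colon: the whole string is the key.
        return extra_spec_key
    if namespace != "capabilities":
        # A namespace other than 'capabilities' is not handled by this filter.
        return None
    return key


print(capability_key("capabilities:hypervisor_type"))
print(capability_key("other_namespace:some_key"))
print(capability_key("free_ram_mb"))
```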

Filters hosts by flavor (also known as instance type) and image properties. The scheduler will check to ensure that a compute host has sufficient capabilities to run a virtual machine instance that corresponds to the specified flavor. If the image has properties specified, this filter will also check that the host can support them. The image properties that the filter checks for are:

- architecture: Architecture describes the machine architecture required by the image. Examples are i686, x86_64, arm, and ppc64.

- hypervisor_type: Hypervisor type describes the hypervisor required by the image. Examples are xen, kvm, qemu, xenapi, and powervm.

- vm_mode: Virtual machine mode describes the hypervisor application binary interface (ABI) required by the image. Examples are 'xen' for Xen 3.0 paravirtual ABI, 'hvm' for native ABI, 'uml' for User Mode Linux paravirtual ABI, and 'exe' for container virt executable ABI.

In general, this filter should always be enabled.

Only schedule instances on hosts if there are sufficient CPU cores available. If this filter is not set, the scheduler may overprovision a host based on cores (that is, the virtual cores allocated to instances may exceed the physical cores).

This filter can be configured to allow a fixed amount of vCPU overcommitment by using the cpu_allocation_ratio configuration option in nova.conf. The default setting is:

cpu_allocation_ratio=16.0

With this setting, if there are 8 vCPUs on a node, the scheduler will allow instances totaling up to 128 vCPUs (8 × 16.0) to be run on that node.

To disallow vCPU overcommitment, set:

cpu_allocation_ratio=1.0
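The overcommitment arithmetic can be checked with a short sketch. The function names are hypothetical, and comparing "vCPUs already in use plus vCPUs requested" against the limit is an assumption about how the limit is applied, not a copy of the filter's code.

```python
def vcpu_limit(host_vcpus, cpu_allocation_ratio=16.0):
    """Number of virtual cores the scheduler may place on a node."""
    return host_vcpus * cpu_allocation_ratio


def core_check_passes(host_vcpus, vcpus_used, vcpus_requested,
                      cpu_allocation_ratio=16.0):
    """True if the request still fits under the overcommitted limit."""
    limit = vcpu_limit(host_vcpus, cpu_allocation_ratio)
    return vcpus_used + vcpus_requested <= limit


print(vcpu_limit(8))                       # default ratio of 16.0
print(core_check_passes(8, 127, 1))        # exactly reaches the limit
print(core_check_passes(8, 8, 1, 1.0))     # ratio 1.0: no overcommitment
```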

Schedule the instance on a different host from a set of instances. To take advantage of this filter, the requester must pass a scheduler hint, using different_host as the key and a list of instance UUIDs as the value. This filter is the opposite of the SameHostFilter. Using the nova command-line tool, use the --hint flag. For example:

$ nova boot --image cedef40a-ed67-4d10-800e-17455edce175 --flavor 1 --hint different_host=a0cf03a5-d921-4877-bb5c-86d26cf818e1 --hint different_host=8c19174f-4220-44f0-824a-cd1eeef10287 server-1

With the API, use the os:scheduler_hints key. For example:

    {
        "server":{
            "name":"server-1",
            "imageRef":"cedef40a-ed67-4d10-800e-17455edce175",
            "flavorRef":"1"
        },
        "os:scheduler_hints":{
            "different_host":[
                "a0cf03a5-d921-4877-bb5c-86d26cf818e1",
                "8c19174f-4220-44f0-824a-cd1eeef10287"
            ]
        }
    }

Only schedule instances on hosts if there is sufficient disk space available for ephemeral storage.

This filter can be configured to allow a fixed amount of disk overcommitment by using the disk_allocation_ratio configuration option in nova.conf. The default setting is:

disk_allocation_ratio=1.0

Adjusting this value to be greater than 1.0 will allow scheduling instances while overcommitting disk resources on the node. This may be desirable if you use an image format that is sparse or copy-on-write, such that each virtual instance does not require a 1:1 allocation of virtual disk to physical storage.

The GroupAntiAffinityFilter ensures that each instance in a group is on a different host. To take advantage of this filter, the requester must pass a scheduler hint, using group as the key and a list of instance UUIDs as the value. Using the nova command-line tool, use the --hint flag. For example:

$ nova boot --image cedef40a-ed67-4d10-800e-17455edce175 --flavor 1 --hint group=a0cf03a5-d921-4877-bb5c-86d26cf818e1 --hint group=8c19174f-4220-44f0-824a-cd1eeef10287 server-1

Filters hosts based on properties defined on the instance's image. It passes hosts that can support the specified image properties contained in the instance. Properties include the architecture, hypervisor type, and virtual machine mode. For example, an instance might require a host that runs an ARM-based processor and QEMU as the hypervisor. An image can be decorated with these properties:

$ glance image-update img-uuid --property architecture=arm --property hypervisor_type=qemu

Allows the admin to define a special (isolated) set of images and a special (isolated) set of hosts, such that the isolated images can only run on the isolated hosts, and the isolated hosts can only run isolated images.

The admin must specify the isolated set of images and hosts in the nova.conf file using the isolated_hosts and isolated_images configuration options. For example:

    isolated_hosts=server1,server2
    isolated_images=342b492c-128f-4a42-8d3a-c5088cf27d13,ebd267a6-ca86-4d6c-9a0e-bd132d6b7d09
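The two-way restriction (isolated images only on isolated hosts, isolated hosts only for isolated images) reduces to a single equality test. The sketch below uses a hypothetical function name and plain Python collections; it illustrates the rule as described, not the filter's source.

```python
def isolation_check_passes(host, image_id, isolated_hosts, isolated_images):
    """Accept the host only if host and image are both isolated or both not."""
    return (host in isolated_hosts) == (image_id in isolated_images)


isolated_hosts = {"server1", "server2"}
isolated_images = {
    "342b492c-128f-4a42-8d3a-c5088cf27d13",
    "ebd267a6-ca86-4d6c-9a0e-bd132d6b7d09",
}

# Isolated image on an isolated host: accepted.
print(isolation_check_passes(
    "server1", "342b492c-128f-4a42-8d3a-c5088cf27d13",
    isolated_hosts, isolated_images))
# Ordinary image on an isolated host: rejected.
print(isolation_check_passes(
    "server1", "some-other-image", isolated_hosts, isolated_images))
```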

The JsonFilter allows a user to construct a custom filter by passing a scheduler hint in JSON format. The following operators are supported: =, <, >, in, <=, >=, not, or, and.

The filter supports the following variables:

- $free_ram_mb

- $free_disk_mb

- $total_usable_ram_mb

- $vcpus_total

- $vcpus_used

Using the nova command-line tool, use the --hint flag:

$ nova boot --image 827d564a-e636-4fc4-a376-d36f7ebe1747 --flavor 1 --hint query='[">=","$free_ram_mb",1024]' server1

With the API, use the os:scheduler_hints key. The query value is itself a JSON-encoded list, so its inner quotes must be escaped:

    {
        "server":{
            "name":"server-1",
            "imageRef":"cedef40a-ed67-4d10-800e-17455edce175",
            "flavorRef":"1"
        },
        "os:scheduler_hints":{
            "query":"[\">=\",\"$free_ram_mb\",1024]"
        }
    }
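To make the query format more concrete, the following sketch evaluates a parsed query against a dictionary of host values. It is a simplified, hypothetical evaluator written for this illustration (the names COMPARISONS and evaluate are not from the Compute service), and it treats every comparison as binary.

```python
import json
import operator

COMPARISONS = {
    "=": operator.eq,
    "<": operator.lt,
    ">": operator.gt,
    "<=": operator.le,
    ">=": operator.ge,
}


def evaluate(expr, host):
    """Recursively evaluate a parsed query against a dict of host values."""
    if isinstance(expr, str):
        # "$name" refers to a host variable; any other string is a literal.
        return host[expr[1:]] if expr.startswith("$") else expr
    if not isinstance(expr, list):
        return expr  # numbers and booleans evaluate to themselves
    op = expr[0]
    args = [evaluate(arg, host) for arg in expr[1:]]
    if op in COMPARISONS:
        return COMPARISONS[op](args[0], args[1])
    if op == "in":
        return args[0] in args[1:]
    if op == "not":
        return not args[0]
    if op == "or":
        return any(args)
    if op == "and":
        return all(args)
    raise ValueError("unsupported operator: %r" % op)


query = json.loads(
    '["and", [">=", "$free_ram_mb", 1024], ["<", "$vcpus_used", 8]]')
host = {"free_ram_mb": 2048, "vcpus_used": 4}
print(evaluate(query, host))
```

Building the hint with json.dumps([">=", "$free_ram_mb", 1024]) rather than by hand is one way to avoid quoting mistakes.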

Only schedule instances on hosts if there is sufficient RAM available. If this filter is not set, the scheduler may overprovision a host based on RAM (that is, the RAM allocated by virtual machine instances may exceed the physical RAM).

This filter can be configured to allow a fixed amount of RAM overcommitment by using the ram_allocation_ratio configuration option in nova.conf. The default setting is:

ram_allocation_ratio=1.5

With this setting, if there is 1 GB of free RAM, the scheduler will allow instances up to size 1.5 GB to be run on that host.
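A small sketch of the RAM calculation follows. It assumes the ratio is applied to the host's total usable RAM and that RAM already allocated is then subtracted; on an otherwise empty host this matches the 1 GB to 1.5 GB example. The function name and parameters are hypothetical.

```python
def ram_check_passes(total_usable_ram_mb, free_ram_mb, requested_ram_mb,
                     ram_allocation_ratio=1.5):
    """True if the request fits under the overcommitted RAM limit."""
    limit_mb = total_usable_ram_mb * ram_allocation_ratio
    used_mb = total_usable_ram_mb - free_ram_mb
    return limit_mb - used_mb >= requested_ram_mb


# Empty host with 1024 MB: requests of up to 1536 MB are accepted.
print(ram_check_passes(1024, 1024, 1536))
print(ram_check_passes(1024, 1024, 1537))
```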

Filter out hosts that have already been attempted for scheduling purposes. If the scheduler selects a host to respond to a service request, and the host fails to respond to the request, this filter will prevent the scheduler from retrying that host for the service request.

This filter is only useful if the scheduler_max_attempts configuration option is set to a value greater than zero.
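The interaction between retries and this filter can be pictured with a toy loop. Everything here is hypothetical (the schedule function, the try_host callback, and picking the first candidate); it only shows that a host already attempted for a request is excluded from later attempts.

```python
def schedule(hosts, try_host, max_attempts=3):
    """Try hosts until one succeeds, never retrying an attempted host."""
    attempted = []
    for _ in range(max_attempts):
        # Exclude every host that was already tried for this request.
        candidates = [h for h in hosts if h not in attempted]
        if not candidates:
            break
        host = candidates[0]
        attempted.append(host)
        if try_host(host):
            return host, attempted
    return None, attempted


# 'a' fails to respond, so the second attempt goes to 'b'.
print(schedule(["a", "b", "c"], lambda h: h == "b"))
```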

Schedule the instance on the same host as another instance in a set of instances. To take advantage of this filter, the requester must pass a scheduler hint, using same_host as the key and a list of instance UUIDs as the value. This filter is the opposite of the DifferentHostFilter. Using the nova command-line tool, use the --hint flag:

$ nova boot --image cedef40a-ed67-4d10-800e-17455edce175 --flavor 1 --hint same_host=a0cf03a5-d921-4877-bb5c-86d26cf818e1 --hint same_host=8c19174f-4220-44f0-824a-cd1eeef10287 server-1

With the API, use the os:scheduler_hints key:

    {
        "server":{
            "name":"server-1",
            "imageRef":"cedef40a-ed67-4d10-800e-17455edce175",
            "flavorRef":"1"
        },
        "os:scheduler_hints":{
            "same_host":[
                "a0cf03a5-d921-4877-bb5c-86d26cf818e1",
                "8c19174f-4220-44f0-824a-cd1eeef10287"
            ]
        }
    }
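The same_host and different_host hints are mirror images of each other, which a set intersection makes easy to see. The function names are hypothetical, and accepting every host when no hint is given is an assumption of this sketch.

```python
def same_host_passes(instances_on_host, hinted_uuids):
    """Accept the host if it already runs at least one hinted instance."""
    if not hinted_uuids:
        return True  # assumption: no hint means no restriction
    return bool(set(instances_on_host) & set(hinted_uuids))


def different_host_passes(instances_on_host, hinted_uuids):
    """Accept the host only if it runs none of the hinted instances."""
    if not hinted_uuids:
        return True  # assumption: no hint means no restriction
    return not set(instances_on_host) & set(hinted_uuids)


hint = ["a0cf03a5-d921-4877-bb5c-86d26cf818e1",
        "8c19174f-4220-44f0-824a-cd1eeef10287"]
on_host = ["a0cf03a5-d921-4877-bb5c-86d26cf818e1"]
print(same_host_passes(on_host, hint))
print(different_host_passes(on_host, hint))
```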

Schedule the instance based on host IP subnet range. To take advantage of this filter, the requester must specify a range of valid IP addresses in CIDR format, by passing two scheduler hints:

- build_near_host_ip: The first IP address in the subnet (e.g., 192.168.1.1)

- cidr: The CIDR that corresponds to the subnet (e.g., /24)

Using the nova command-line tool, use the --hint flag. For example, to specify the IP subnet 192.168.1.1/24:

$ nova boot --image cedef40a-ed67-4d10-800e-17455edce175 --flavor 1 --hint build_near_host_ip=192.168.1.1 --hint cidr=/24 server-1

With the API, use the os:scheduler_hints key:

    {
        "server":{
            "name":"server-1",
            "imageRef":"cedef40a-ed67-4d10-800e-17455edce175",
            "flavorRef":"1"
        },
        "os:scheduler_hints":{
            "build_near_host_ip":"192.168.1.1",
            "cidr":"/24"
        }
    }
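The subnet test implied by the two hints can be reproduced with Python's standard ipaddress module. This is a sketch with a hypothetical function name; it joins the address and the cidr hint into one network string, which is why the cidr value carries the leading slash.

```python
import ipaddress


def cidr_affinity_passes(host_ip, build_near_host_ip, cidr="/24"):
    """True if host_ip lies in the subnet named by the two hints."""
    # strict=False lets a host address such as 192.168.1.1 stand for
    # its enclosing network (192.168.1.0/24).
    network = ipaddress.ip_network(build_near_host_ip + cidr, strict=False)
    return ipaddress.ip_address(host_ip) in network


print(cidr_affinity_passes("192.168.1.57", "192.168.1.1", "/24"))
print(cidr_affinity_passes("192.168.2.5", "192.168.1.1", "/24"))
```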